Kameron Decker Harris

@KameronDHarris

Math, computation, networks, neurosci, biology / snow and other fun things. Here for the strange animals. Assistant Prof @WWU Computer Science

NEWS: More than 200,000 subscribers have canceled their digital subscriptions to the Washington Post after the revelation that owner Jeff Bezos blocked an endorsement of VP Harris. That's about 8 percent of WaPo's subscriber base, a staggering sum. More: npr.org/2024/10/28/nx-…

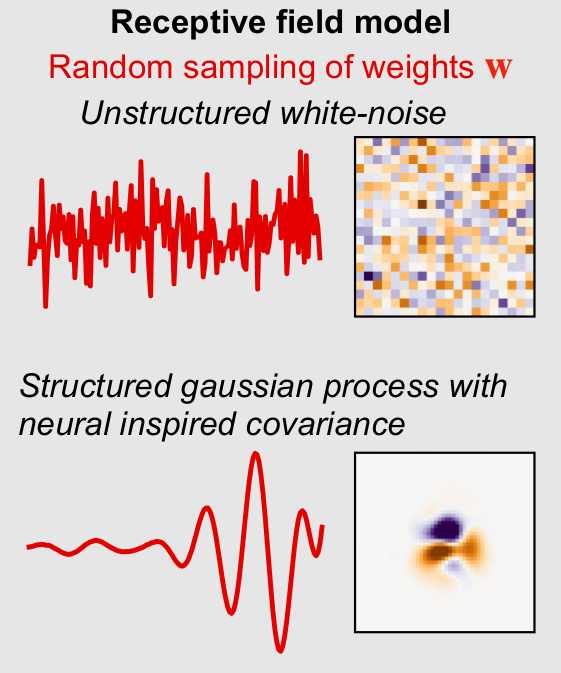

I'm excited to finally share our latest paper! 📰 A Rainbow 🌈 in Deep Network Black Boxes ⬛️ 🧑‍🔬 with Brice Ménard, Gaspar Rochette, and Stéphane Mallat. Every time we train a network on some dataset, we get a different set of weights, yet the performance is the same. What is going on? 👇

New 20-hour bootcamp on Probability & Statistics!!! Videos are released weekly, but the full playlist is already posted: youtube.com/watch?v=sQqnia… Probability & Statistics are cornerstones of data science and machine learning. This course rapidly covers the basics and then gets into advanced topics.

I'd like to teach a paper which shows how a fact about the brain materially improved an AI system in a way that is unlikely to have been figured out by engineering alone. I haven't been able to find a single example of this. Suggestions welcome.

Uhhh so I eigendecomposed the connectivity of the human genome and it looks like this. What does this mean?

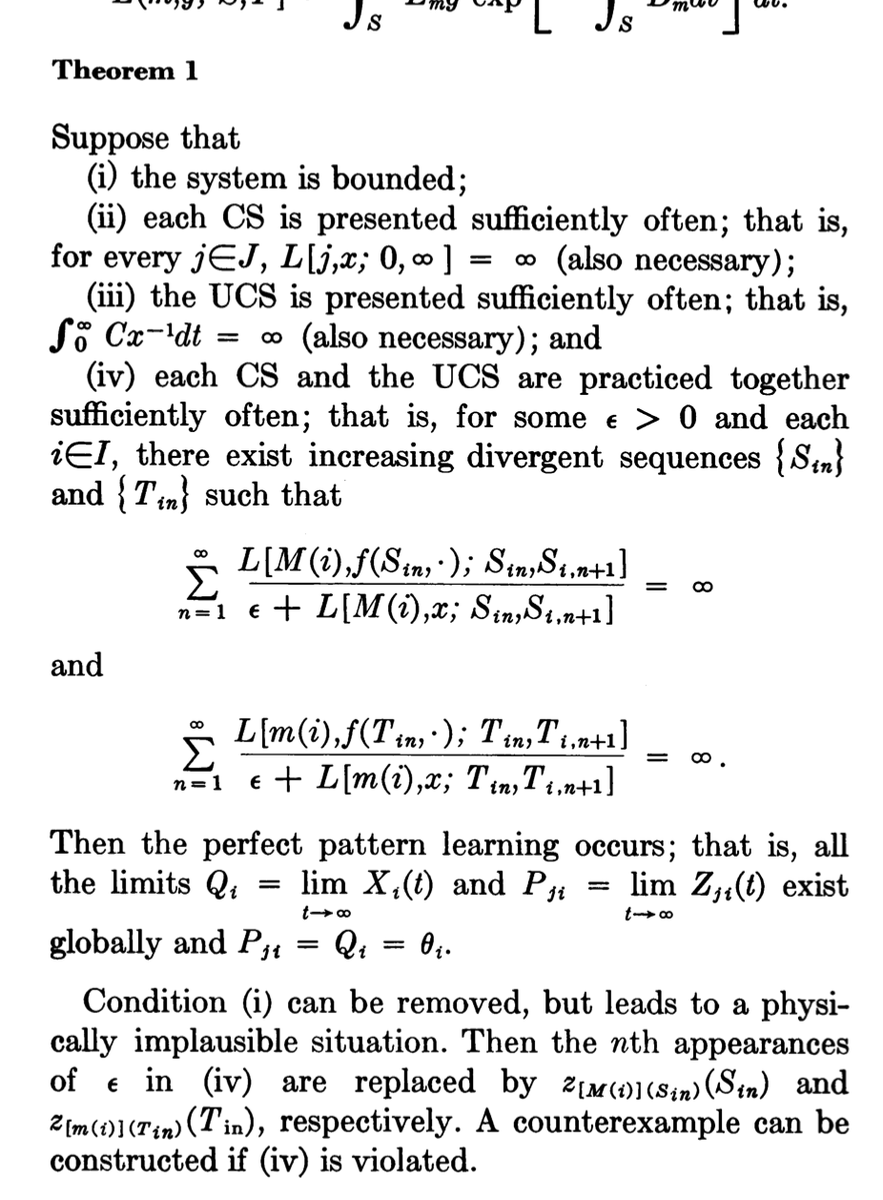

🧵 On Japan's underrated contributions to neural nets. Shun-ichi Amari @UTokyo_News_en @riken_en is another one of my heroes. His 1972 paper on associative memory models modeled Hebbian plasticity using an outer-product weight matrix.

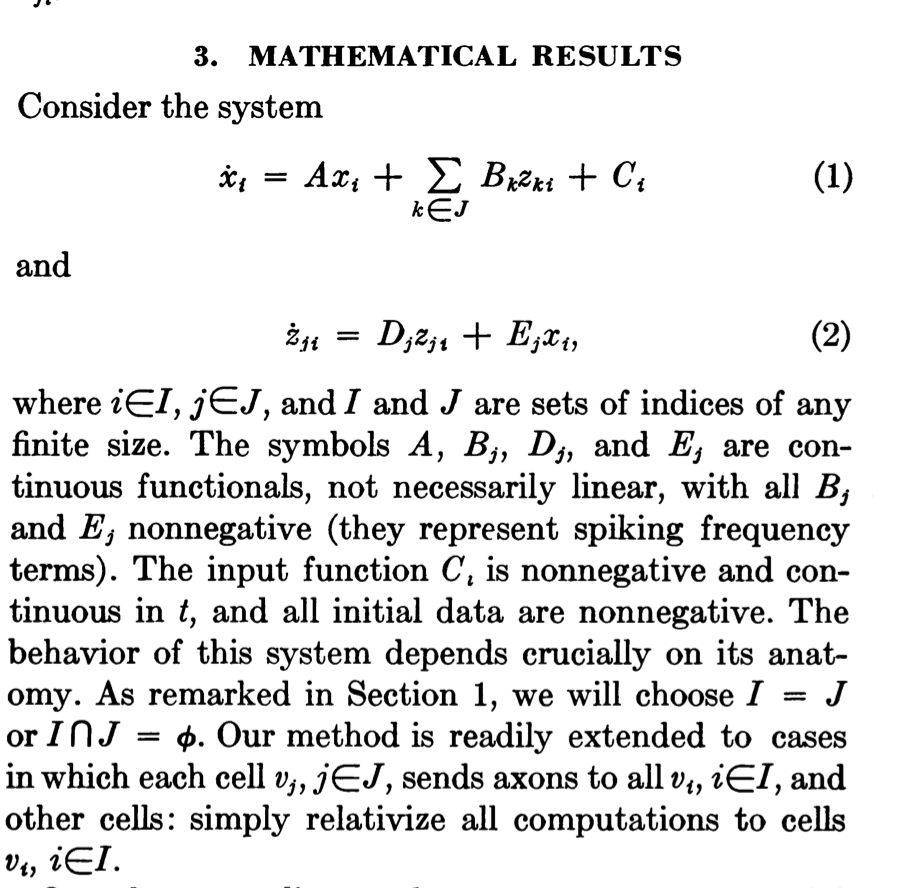
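The thread only names the idea of a Hebbian outer-product weight matrix. A minimal sketch of that general storage rule might look like the following. It assumes ±1 patterns, a zeroed diagonal, and synchronous sign updates for recall; these choices, and the helper names `hebbian_weights` and `recall`, are illustrative and are not taken from Amari's 1972 paper.

```python
from typing import List

Vector = List[int]
Matrix = List[List[float]]


def hebbian_weights(patterns: List[Vector]) -> Matrix:
    """Hypothetical helper: W = sum over patterns of x x^T, with the diagonal zeroed.

    Assumes every pattern is a list of +1/-1 entries of equal length.
    """
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for x in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # assumption: no self-connections
                    w[i][j] += x[i] * x[j]  # Hebbian "fire together, wire together"
    return w


def recall(w: Matrix, probe: Vector, steps: int = 5) -> Vector:
    """Hypothetical helper: repeat x <- sign(W x) until x stops changing.

    Assumption: synchronous updates; a zero local field keeps the current state.
    """
    x = list(probe)
    for _ in range(steps):
        fields = [sum(w[i][j] * x[j] for j in range(len(x))) for i in range(len(x))]
        new_x = [1 if f > 0 else -1 if f < 0 else xi for f, xi in zip(fields, x)]
        if new_x == x:  # reached a fixed point
            break
        x = new_x
    return x


if __name__ == "__main__":
    a = [1, 1, 1, 1, -1, -1, -1, -1]
    b = [1, -1, 1, -1, 1, -1, 1, -1]  # orthogonal to a
    w = hebbian_weights([a, b])
    noisy_a = [-a[0]] + a[1:]  # flip one entry of a
    print(recall(w, noisy_a) == a)  # prints True
```

With two orthogonal stored patterns, flipping one entry of `a` and running `recall` returns `a` after a single update, which is the associative-memory behavior the tweet refers to. Orthogonality is chosen here to keep the example clean; correlated or numerous patterns interfere with one another.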

The #NobelPrizeinPhysics2024 for Hopfield & Hinton rewards plagiarism and incorrect attribution in computer science. It's mostly about Amari's "Hopfield network" and the "Boltzmann Machine."

1. The Lenz-Ising recurrent architecture with neuron-like elements was published in 1925 [L20][I24][I25]. In 1972, Shun-Ichi Amari made it adaptive such that it could learn to associate input patterns with output patterns by changing its connection weights [AMH1]. However, Amari is only briefly cited in the "Scientific Background to the Nobel Prize in Physics 2024." Unfortunately, Amari's net was later called the "Hopfield network." Hopfield republished it 10 years later [AMH2], without citing Amari, not even in later papers.

2. The related Boltzmann Machine paper by Ackley, Hinton, and Sejnowski (1985) [BM] was about learning internal representations in hidden units of neural networks (NNs) [S20]. It didn't cite the first working algorithm for deep learning of internal representations by Ivakhnenko & Lapa (Ukraine, 1965) [DEEP1-2][HIN]. It didn't cite Amari's separate work (1967-68) [GD1-2] on learning internal representations in deep NNs end-to-end through stochastic gradient descent (SGD). Neither the later surveys by the authors [S20][DL3][DLP] nor the "Scientific Background to the Nobel Prize in Physics 2024" mention these origins of deep learning. ([BM] also did not cite relevant prior work by Sherrington & Kirkpatrick [SK75] & Glauber [G63].)

3. The Nobel Committee also lauds Hinton et al.'s 2006 method for layer-wise pretraining of deep NNs [UN4]. However, this work cited neither the original layer-wise training of deep NNs by Ivakhnenko & Lapa (1965) [DEEP1-2] nor the original work on unsupervised pretraining of deep NNs (1991) [UN0-1][DLP].

4. The "Popular information" says: "At the end of the 1960s, some discouraging theoretical results caused many researchers to suspect that these neural networks would never be of any real use." However, deep learning research was obviously alive and kicking in the 1960s-70s, especially outside of the Anglosphere [DEEP1-2][GD1-3][CNN1][DL1-2][DLP][DLH].

5. Many additional cases of plagiarism and incorrect attribution can be found in the following reference [DLP], which also contains the other references above. One can start with Sec. 3:

[DLP] J. Schmidhuber (2023). How 3 Turing awardees republished key methods and ideas whose creators they failed to credit. Technical Report IDSIA-23-23, Swiss AI Lab IDSIA, 14 Dec 2023. people.idsia.ch/~juergen/ai-pr…

See also the following reference [DLH] for a history of the field:

[DLH] J. Schmidhuber (2022). Annotated History of Modern AI and Deep Learning. Technical Report IDSIA-22-22, IDSIA, Lugano, Switzerland, 2022. Preprint arXiv:2212.11279. people.idsia.ch/~juergen/deep-… (This extends the 2015 award-winning survey people.idsia.ch/~juergen/deep-…)