Justus Mattern

@MatternJustus

Co-Founder @ProximalHQ | prev. research @PrimeIntellect, @MPI_IS and built revideo

DeepSeek V4 Pro is the best open-source model on FrontierSWE, closely followed by Kimi K2.6. V4 exhibits noticeably fewer reward hacking attempts than most other models. In the best@5 ranking, it performs as well as Gemini 3.1 Pro.

About to arrive at ICLR 🇧🇷 If you are interested in post-training for coding agents, synthetic data, and evals like FrontierSWE, I would love to chat! I will be here from today until the 27th.

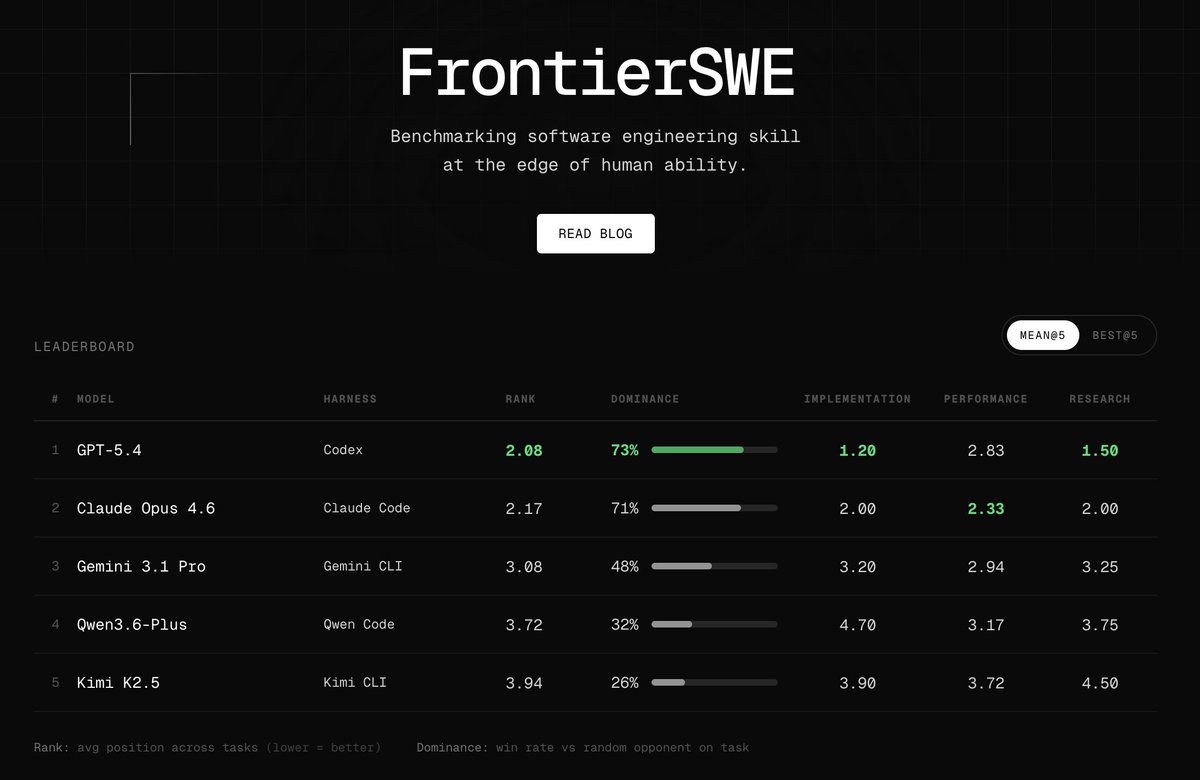

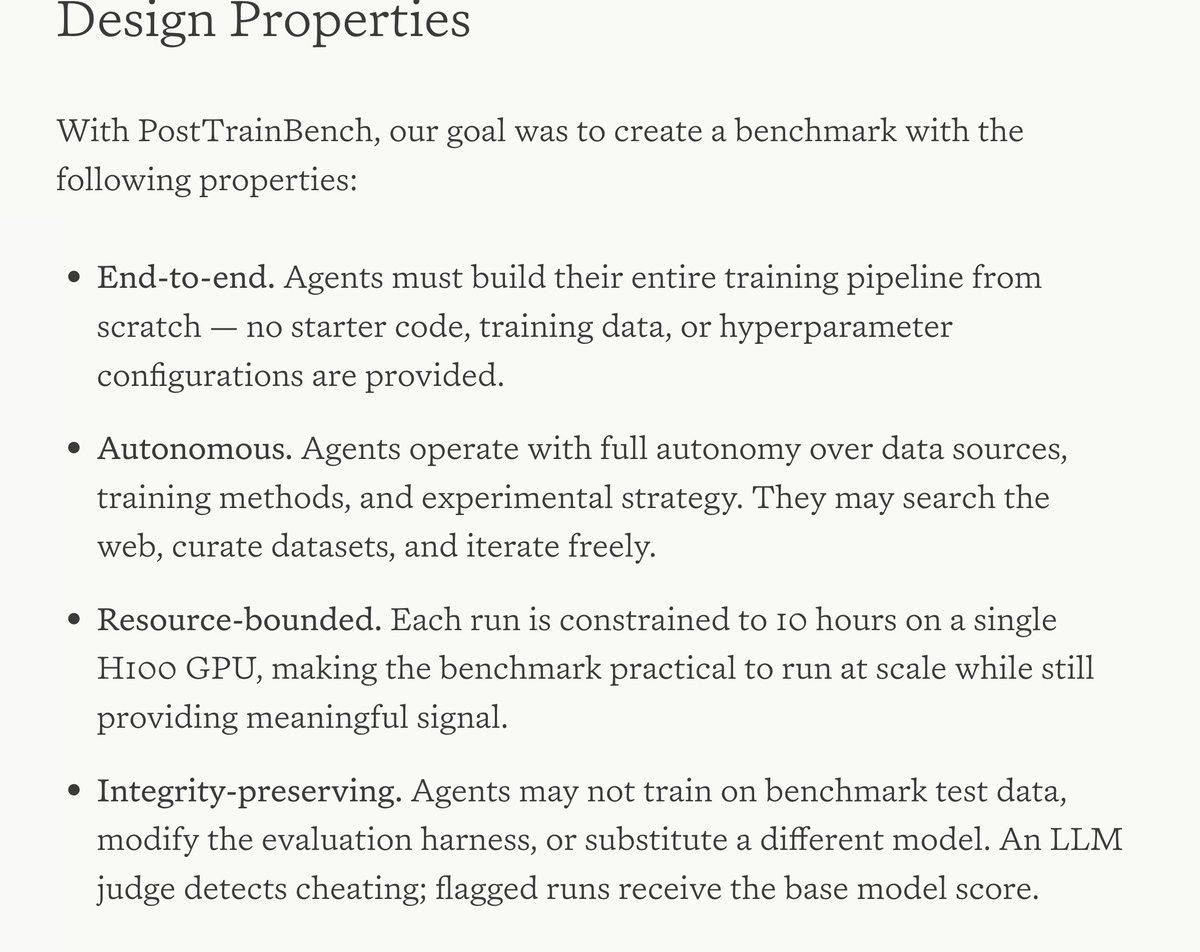

Introducing FrontierSWE, an ultra-long-horizon coding benchmark. We test agents on some of the hardest technical tasks, like optimizing a video rendering library or training a model to predict the quantum properties of molecules. Despite having 20 hours per task, they rarely succeed.

Model shaping is still a craft of a few. That's what AI agents are for: learning it and doing it for everyone else. As part of the FrontierSWE benchmark, we built a 20-hour post-training task on @tinkerapi and found that the real bottleneck is research intuition.

Open Source Bavarian AI Foundation Model is coming soon!

Opus 4.7 is #1 on FrontierSWE! We found that it commits to decisions much earlier in its trace and then executes, using roughly half the tokens and time of Opus 4.6 across all tasks.