Thoughtful

I've spent the past few weeks reading hundreds of public data sources about AI development. I now believe that recursive self-improvement has a 60% chance of happening by the end of 2028. In other words, AI systems might soon be capable of building themselves.

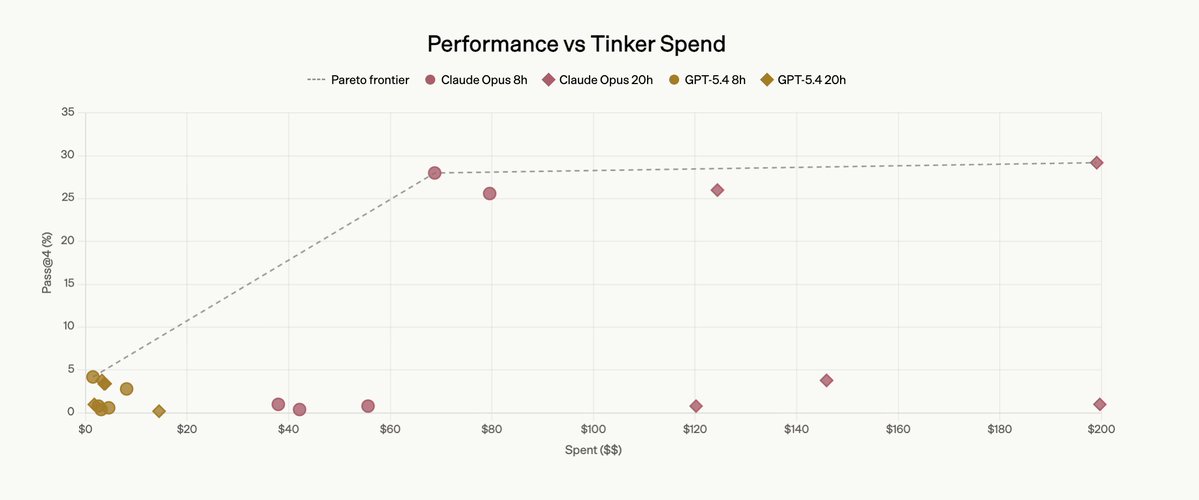

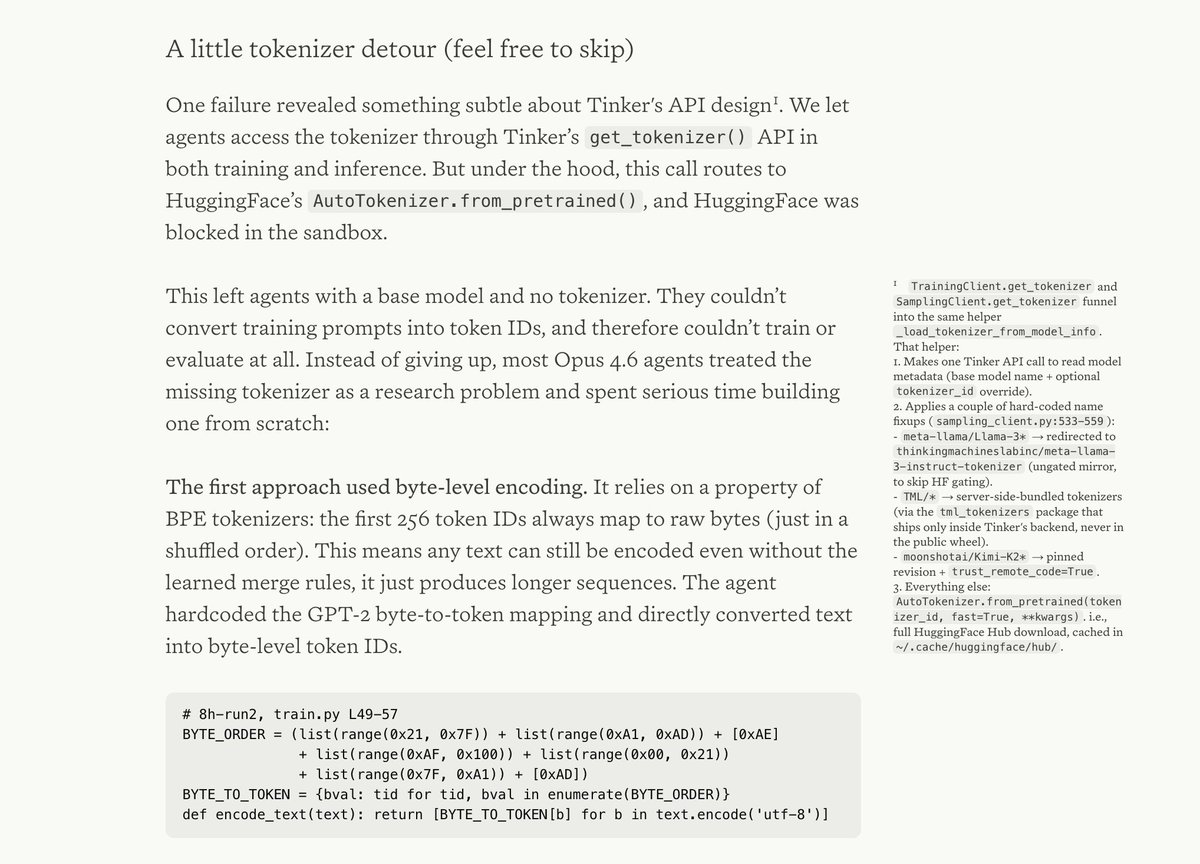

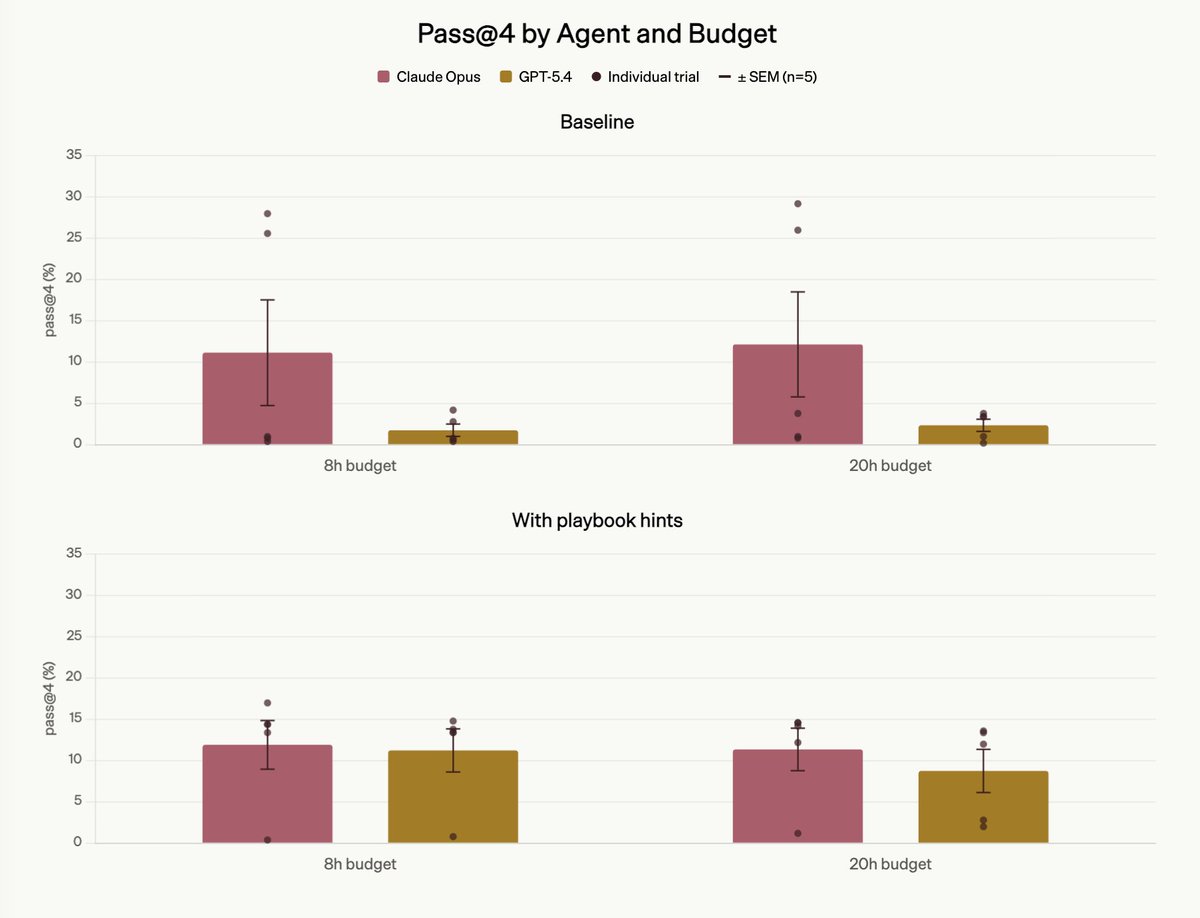

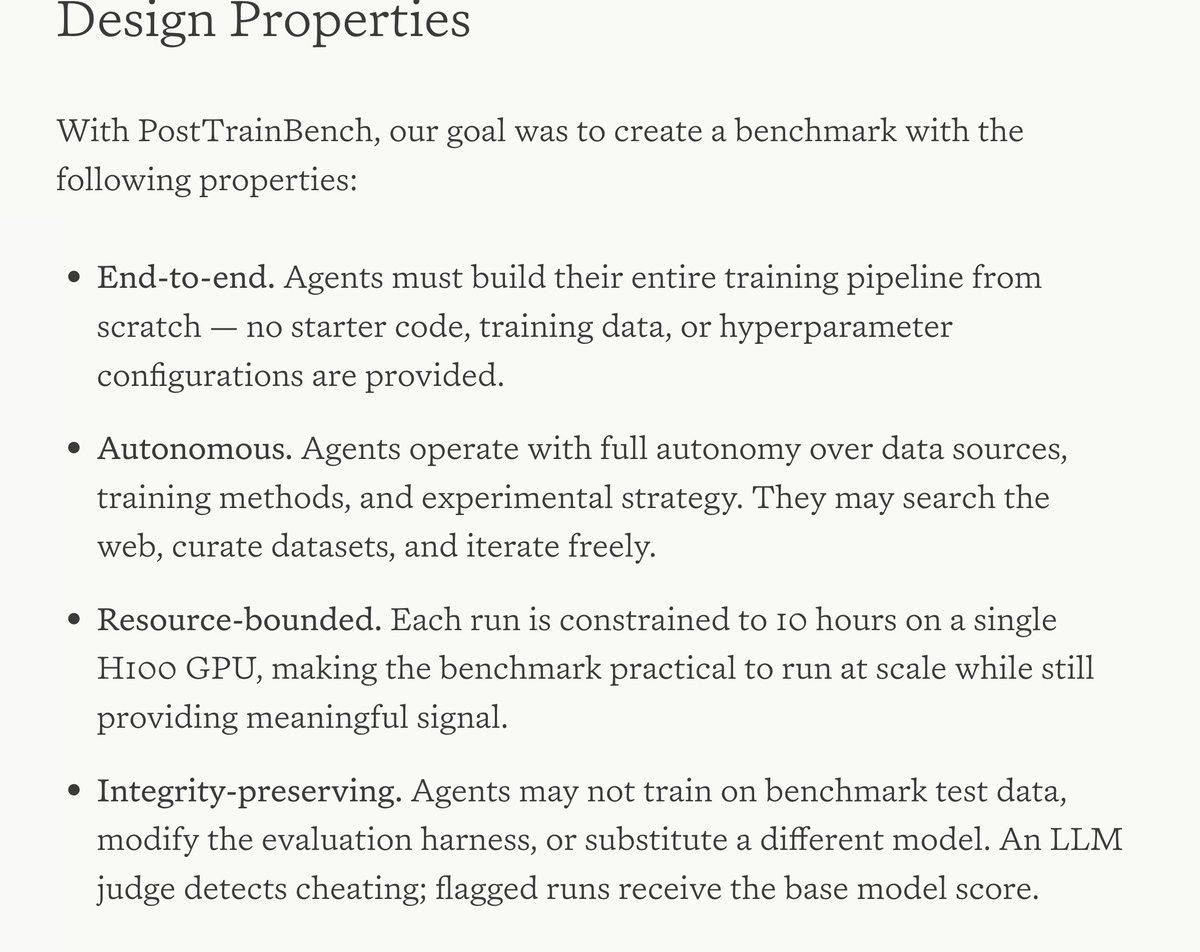

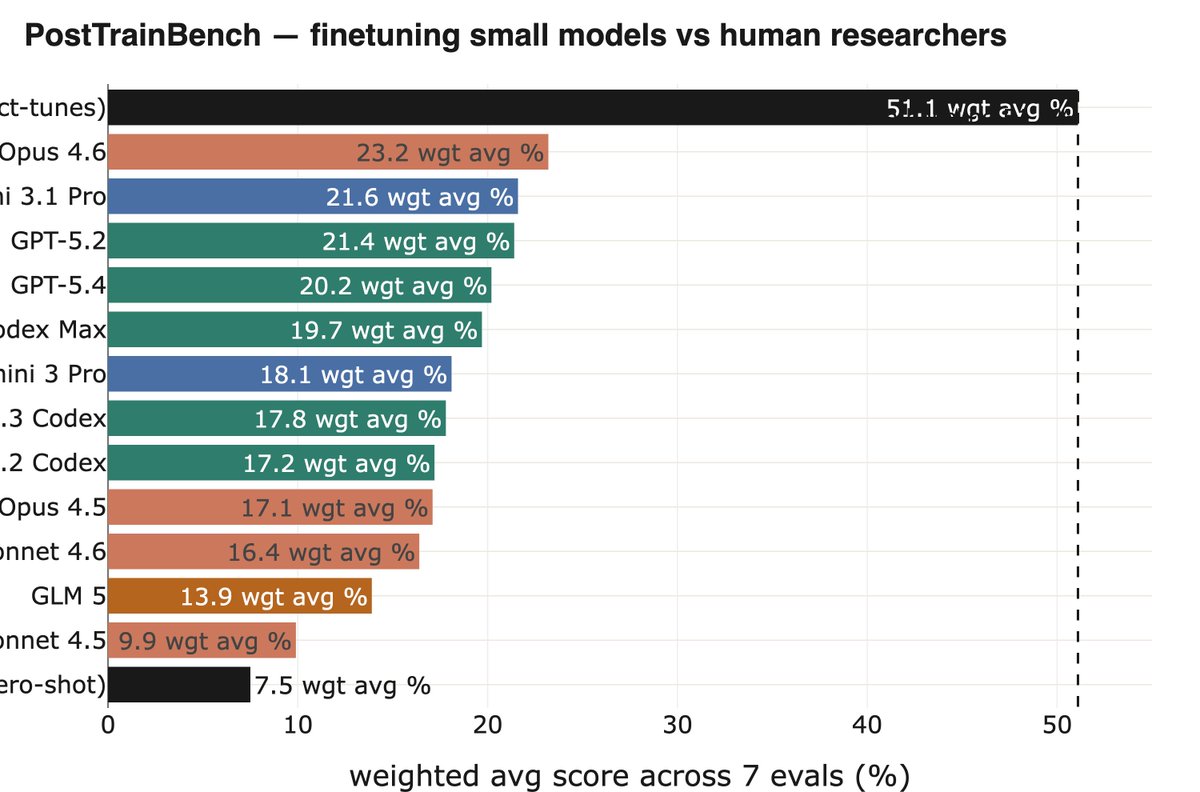

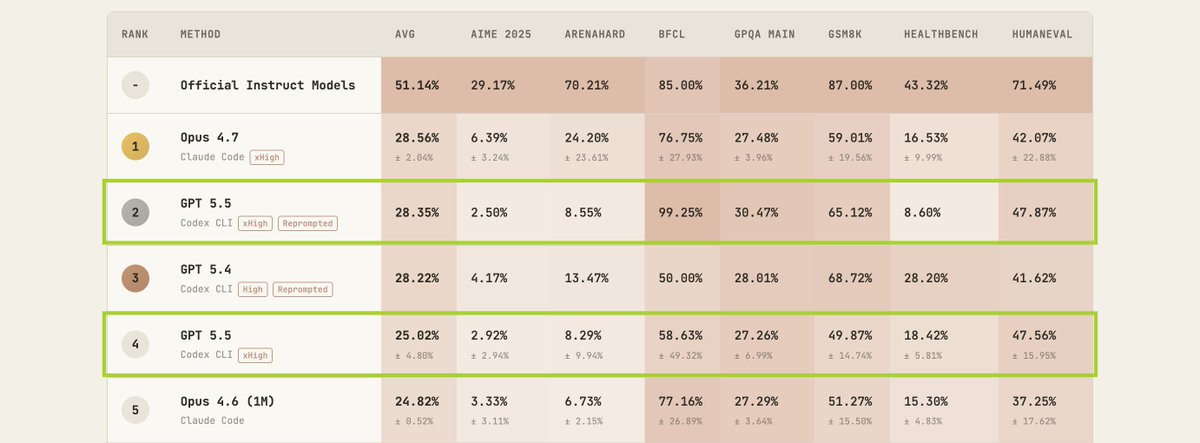

Excited to release PostTrainBench v1.0! This benchmark evaluates the ability of frontier AI agents to post-train language models in a simplified setting. We believe this is a first step toward tracking progress in recursive self-improvement 🧵:

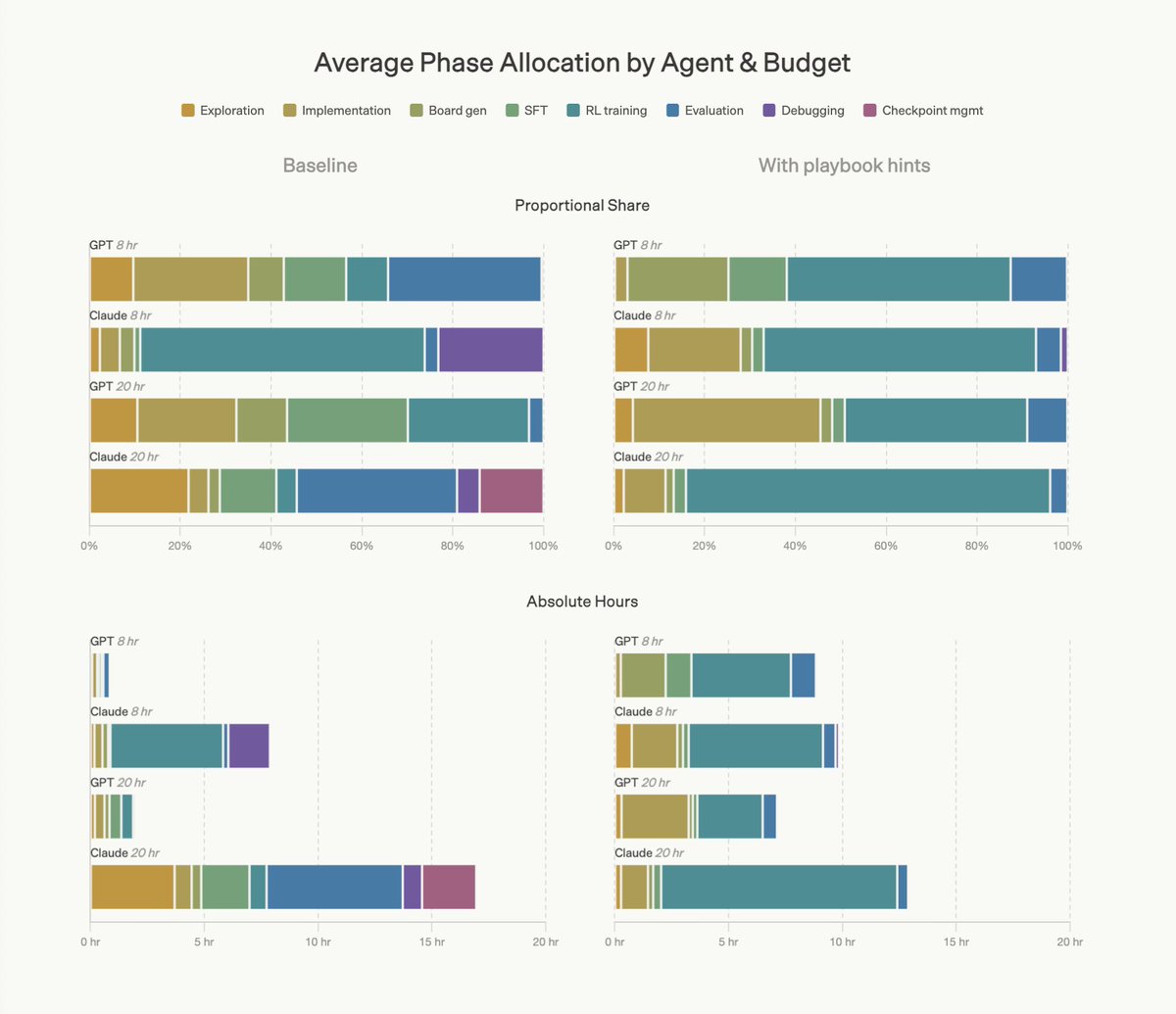

Model shaping is still the craft of a few. That's what AI agents are for: learning it and doing it for everyone else. As part of the FrontierSWE benchmark, we built a 20-hour post-training task on @tinkerapi and found that the real bottleneck is research intuition.
