Max Korbel retweeted

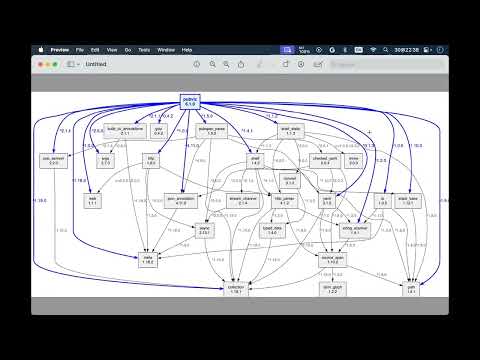

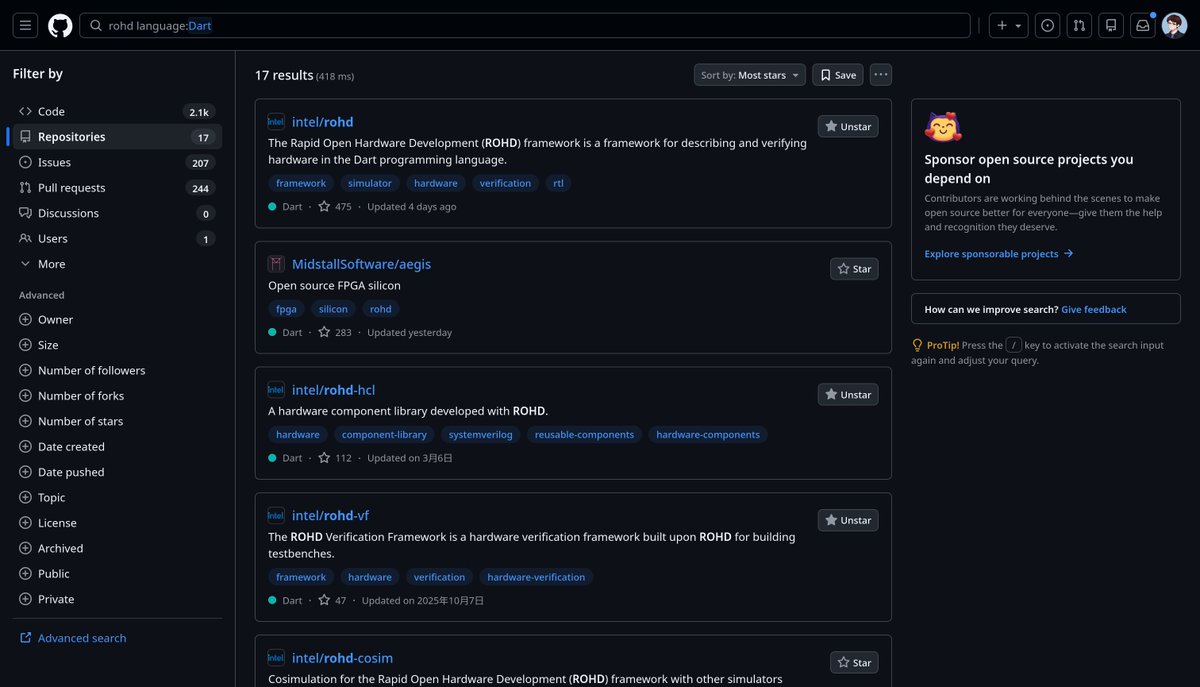

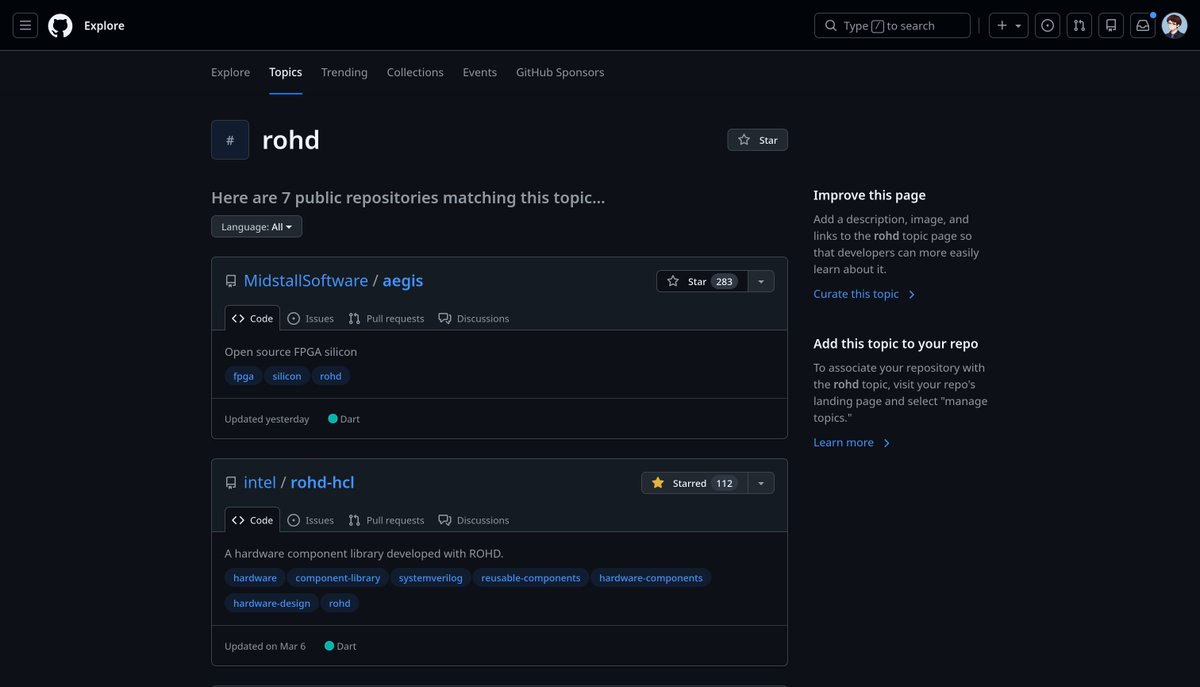

Flutter developers can now write backend logic in the same language, and with the same tooling, as their frontend 🎉

Follow along as we build a complete serverless application live, using full-stack Dart across client and cloud environments → goo.gle/48zqPnR
