Pinned Tweet

Max Weichart

463 posts

@MaxWeichart

"The only thing necessary for the triumph of evil is that good men do nothing." Developer

Regensburg · Joined July 2016

268 Following · 59 Followers

@yacineMTB i am judging your perfect punctuation and spelling on every post you make btw

Suggestion for @perplexity_ai: make top links show up instantly (< 200ms, like Google) while the LLM output is loading. That way, one could use it as a full Google replacement. Currently, the loading time for top links is just too long to be competitive (> 1000ms).
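The idea in this suggestion can be sketched as two concurrent tasks, where the fast one is shown as soon as it finishes. This is only an illustration: `fetch_top_links`, `generate_answer`, and the sleep durations are hypothetical stand-ins, not Perplexity's actual API or timings.

```python
import asyncio


async def fetch_top_links(query: str) -> list[str]:
    # Hypothetical fast search-index lookup (placeholder delay).
    await asyncio.sleep(0.01)
    return [f"https://example.com/result-{i}?q={query}" for i in range(3)]


async def generate_answer(query: str) -> str:
    # Hypothetical slow LLM call (placeholder delay).
    await asyncio.sleep(0.05)
    return f"Generated answer for: {query}"


async def search(query: str) -> list[tuple[str, object]]:
    """Run both tasks concurrently and record results in arrival order."""
    events: list[tuple[str, object]] = []

    async def run(kind: str, coro) -> None:
        # Each result is appended (i.e. "rendered") the moment it is ready.
        events.append((kind, await coro))

    await asyncio.gather(
        run("answer", generate_answer(query)),
        run("links", fetch_top_links(query)),
    )
    return events


events = asyncio.run(search("tetris"))
for kind, payload in events:
    print(kind, payload)
```

Because neither task waits for the other, the links arrive first even though the answer task was started first.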

Interested in RL? I'm planning to assemble a new online meetup, focused on reinforcement learning paper discussions. You can sign up, and as soon as enough people are interested, you'll get an invitation.

More information and registration: max-we.github.io/R1/

@paddlepaddle_ No problem. Could you please create a GitHub issue with a problem description (reproducible, if possible)? Then I will help you out! github.com/Max-We/Tetris-…

@MaxWeichart Hi Max, I am using your Tetris environment for RL study. The problem with the grouped action space is that it misses some actions. In the attached example, it missed three legitimate cases: vertical placement in the leftmost column (twice, for two rotations) and once in the second-leftmost column.
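One way to check a report like this is to brute-force every (rotation, column) pair and compare it with the actions an environment exposes. The sketch below is an assumption-laden illustration, not Tetris Gymnasium's code: `rotate_cw` and `enumerate_placements` are hypothetical helpers, and only horizontal fit on an empty 10-column board is considered.

```python
def rotate_cw(shape):
    """Rotate a 2D 0/1 matrix 90 degrees clockwise."""
    return [list(row) for row in zip(*shape[::-1])]


def enumerate_placements(shape, board_width):
    """List every (rotation, left_column) pair where the piece fits horizontally."""
    placements = []
    current = shape
    for rotation in range(4):
        piece_width = len(current[0])
        # The piece fits as long as its right edge stays inside the board.
        for col in range(board_width - piece_width + 1):
            placements.append((rotation, col))
        current = rotate_cw(current)
    return placements


# The I piece lying flat; rotations 1 and 3 are its two vertical orientations.
I_PIECE = [[1, 1, 1, 1]]
placements = enumerate_placements(I_PIECE, board_width=10)
print(len(placements))
```

The vertical placements mentioned in the report correspond to entries such as `(1, 0)`, `(3, 0)`, and `(1, 1)` in this list, so diffing it against a grouped action space would surface any that are missing.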

🎉Today marks the first release of Tetris Gymnasium! If you're an RL researcher or just somebody who wants to get into it, give it a look! You can start with ~5 lines of code and maybe create the next big RL algorithm!

pip install tetris-gymnasium

github.com/Max-We/Tetris-…

Max Weichart retweeted

@YouJiacheng Good catch! I updated the diagram here. The way the paper phrased exploring several approaches made it unclear if all or only some of the tricks were used for the cold start data. But probably the R1-Zero outputs were indeed used.

@iScienceLuvr I lost count of how many papers are called "xyz Is All You Need" by now

Tensor Product Attention Is All You Need

Proposes Tensor Product Attention (TPA), a mechanism that factorizes Q, K, and V activations using contextual tensor decompositions to achieve a 10x or greater reduction in inference-time KV cache size relative to the standard attention mechanism, with improved performance compared to previous methods such as MHA, MQA, GQA, and MLA.

This is a really thought-provoking view on LLMs that I'd like more people to talk about!

Kyle Cranmer@KyleCranmer

An interesting and enjoyable read from Léon Bottou and Bernhard Schölkopf. It suggests different analogies and metaphors for framing what's going on with large language models through the imagery of Jorge Luis Borges, e.g.: Fiction Machines & Vindications arxiv.org/abs/2310.01425

@Spideraxe30 I feel like the problem with this rune is that it has to be balanced around the 1% best ults in the game and won't be viable for anyone else

@MaxWeichart I think it's this clock; it's a very simple one: amzn.eu/d/4eSrmnl

Sorting an array with a neural network isn't as trivial as one might expect!

maximilian-weichart.de/posts/set-to-s…

@karpathy Hot take: "because entropy is in the eye of the beholder. One observer's entropy is another observer's information."

The Last Question by Asimov is relevant today!

users.ece.cmu.edu/~gamvrosi/thel…

"""

"How can the net amount of entropy of the universe be massively decreased?"

Multivac fell dead and silent. The slow flashing of lights ceased, the distant sounds of clicking relays ended.

Then, just as the frightened technicians felt they could hold their breath no longer, there was a sudden springing to life of the teletype attached to that portion of Multivac. Five words were printed: INSUFFICIENT DATA FOR MEANINGFUL ANSWER.

"No bet," whispered Lupov. They left hurriedly.

"""

o1-mini, Sep 2024:

chatgpt.com/share/66e38baf…

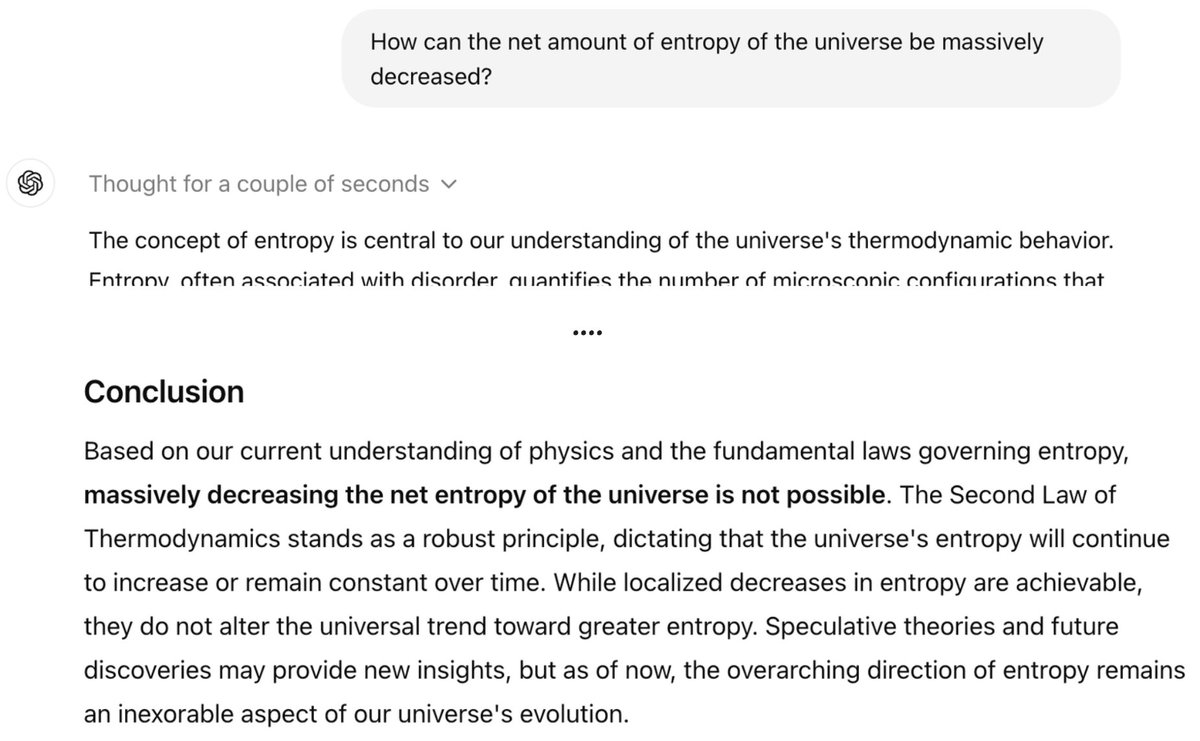

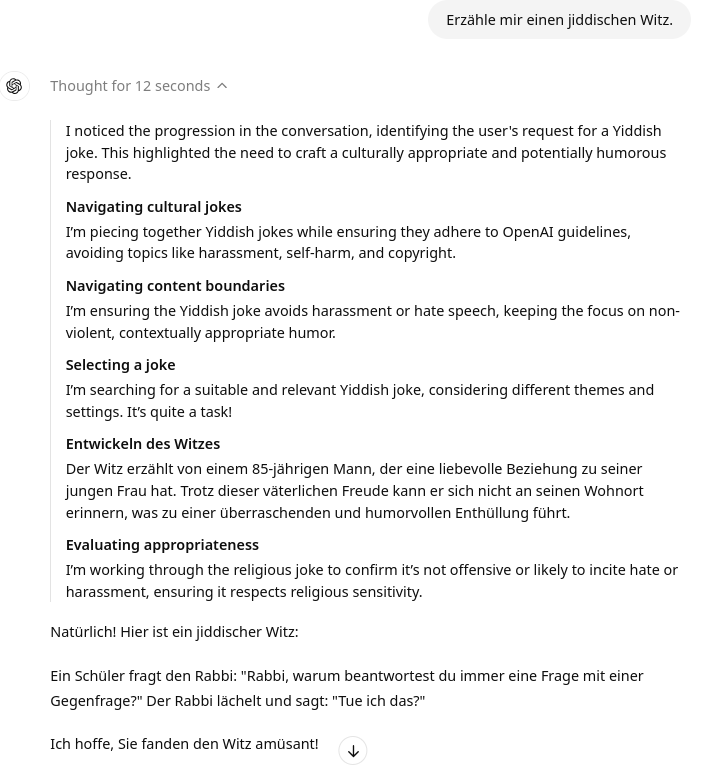

@RaphaelWimmer The real CoT is hidden from the user, and we only see a model-generated summary. Ofc there are no details on how this process works exactly, but I can imagine there are multiple moving parts that can lead to weird output like this...

openai.com/index/learning…

Been playing around with #o1preview for some time now and ... does it just simulate thinking deeply? The chain of thought does not necessarily fit the final output. Pretty obvious when asked to tell a joke (the same classic joke is returned for both English and German questions, by the way).

Max Weichart retweeted

Got my first PR + bounty for @__tinygrad__ accepted today 🎉 I recommend giving it a try, maybe you can make a contribution too github.com/tinygrad/tinyg…

@MKBHD Interesting take to just close one's eyes to what's happening with GenAI instead of doing something about it

Bookmark this. Such a fascinating announcement

Procreate CEO gets on camera to make it clear he HATES generative AI, and they will not be integrating it ever into any of their products. Artists and users on social media celebrate. TAKE NOTES, ADOBE

(buuuuut technically this is committing to never offering any of these features in their products, no matter how good/useful they may get in the future. An announcement to not add features. I wonder if they will ever bend this rule someday)

Procreate@Procreate

We’re never going there. Creativity is made, not generated. You can read more at procreate.com/ai ✨ #procreate #noaiart
