Pinned Tweet

Meer | AI Tools & News

34.7K posts

@Meer_AIIT

AI Educator | Breaking down AI news, tools & tutorials | Making AI simple, useful & exciting for everyone

Join my AI newsletter for free

Joined December 2017

629 Following · 46.6K Followers

@heyrimsha hoping to see some crazy shipments from Anthropic.

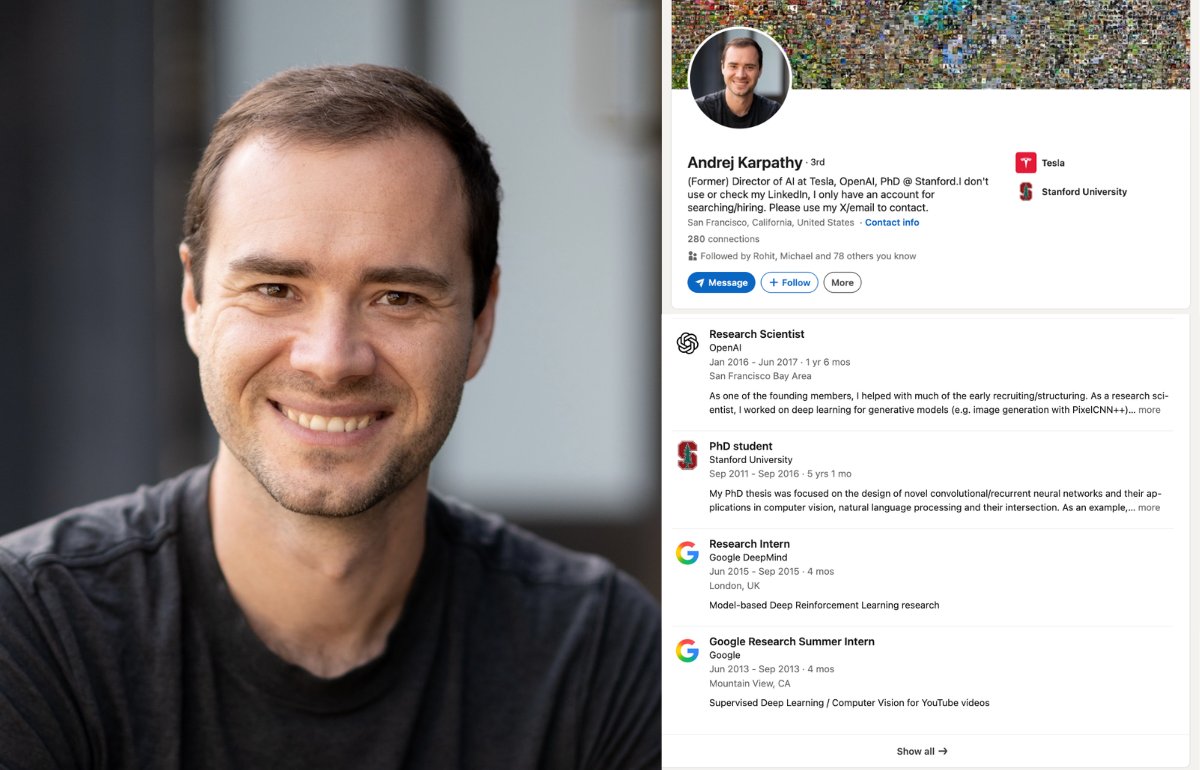

A 39-year-old Slovak-Canadian researcher just paused his AI school to join the company that's racing OpenAI for the future of intelligence.

His name is Andrej Karpathy. And his career is one of the strangest in modern AI.

Karpathy was born in Bratislava in 1986, in what was then Czechoslovakia. His family moved to Toronto when he was 15. He did his bachelor's in computer science and physics at the University of Toronto, where he sat in on Geoffrey Hinton's classes and reading groups long before Hinton became a household name. He did his master's at UBC. Then he went to Stanford for his PhD under Fei-Fei Li, the woman who built ImageNet.

In 2015, while still finishing his PhD, he designed and taught Stanford's first deep learning course. It was called CS231n. It started with 150 students. Two years later it had 750. The lecture videos went on YouTube for free and became the standard reference for an entire generation of AI engineers. TIME magazine later reported that those Stanford lectures alone have over 800,000 views.

That same year, Sam Altman, Elon Musk, and Ilya Sutskever started a research lab called OpenAI. Karpathy was one of its founding members.

He stayed at OpenAI for about a year and a half. Then in June 2017, Elon Musk hired him to run Tesla Autopilot. He spent five years there as Senior Director of AI, leading the computer vision team behind every Tesla on the road.

In February 2023 he went back to OpenAI. He quit again in February 2024.

And then he did something nobody in his position had ever done.

He turned his YouTube channel into a school.

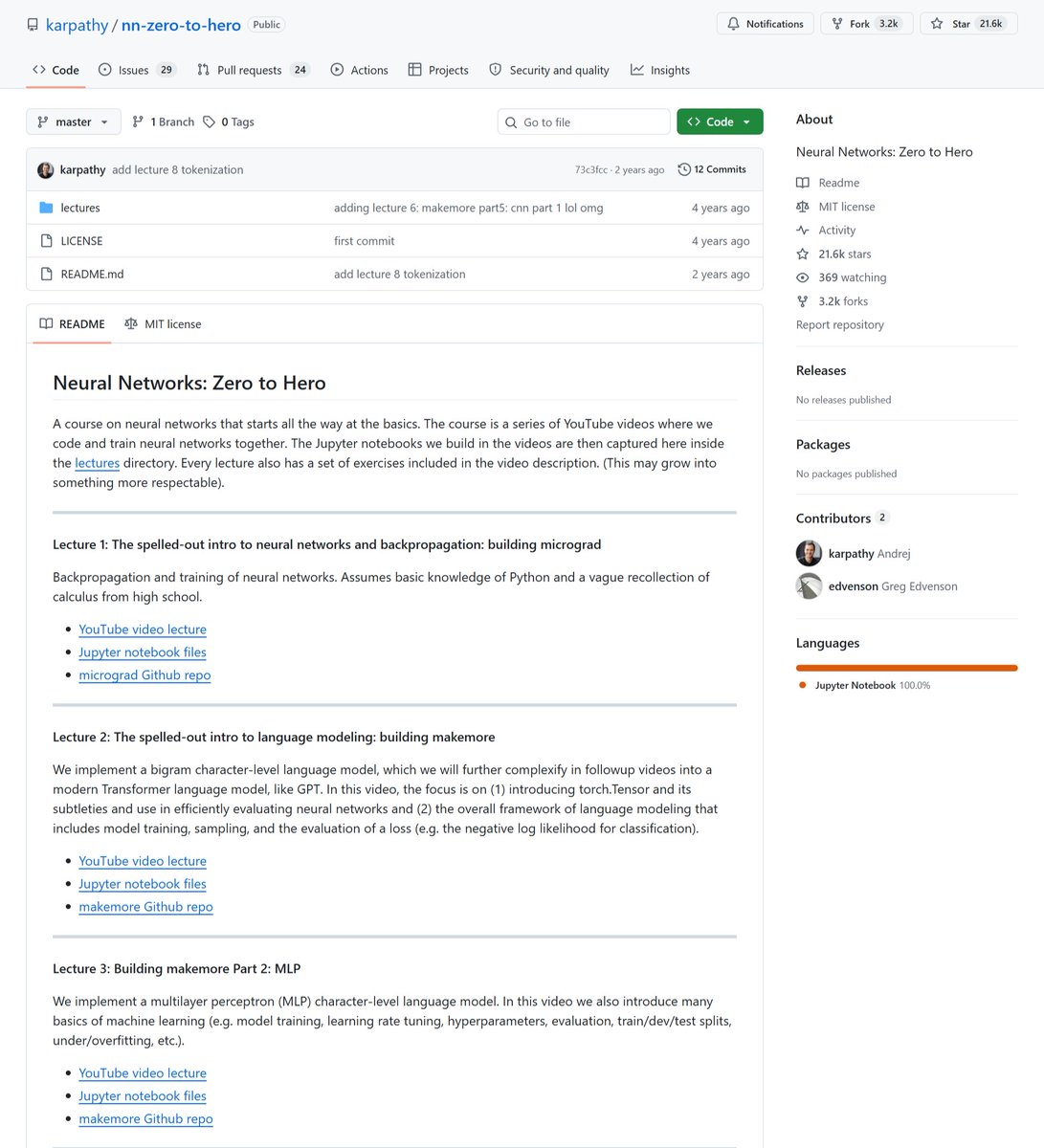

His "Neural Networks: Zero to Hero" series walks through how to build a GPT model from scratch in plain code, math, and English. The flagship video, "Let's build GPT: from scratch, in code, spelled out," is just under two hours long and has millions of views. People at every major AI lab cite it as how they actually understood transformers.

In July 2024 he announced Eureka Labs. The pitch was an AI-native school. Human teachers paired with AI teaching assistants, scaling one great teacher to a million students. The first course was LLM101n, an undergraduate-level class that would teach anyone to train their own ChatGPT from scratch.

Then in February 2025 he coined the phrase "vibe coding" in a single throwaway tweet. The phrase became Collins Dictionary's Word of the Year for 2025. It now has its own Wikipedia article.

Last week, on May 19, 2026, he announced he is joining Anthropic.

Eureka Labs is on pause. He said the next few years at the frontier of large language models will be especially formative, and he wanted to get back to research. At Anthropic he will lead a pretraining group focused on using Claude to accelerate Claude itself.

The man who left two of the most powerful AI labs on Earth to teach the world for free just walked back into one of them.

The videos are still up. All of them. Free. Forever.

And he still answers questions in the comments.

After using @itsPolloAI for a bit, here’s the honest take:

It won’t replace everything.

But it removes a lot of the “I’ll do this later” delay.

And that’s where most brands get stuck.

Try it yourself 👇

Web: tinyurl.com/3dv5nznb

App: pollo.ai/download

the field of agentic AI moved faster than its job titles

most of the senior people doing this work don't show up cleanly on linkedin

ran this query through the @nimble_search talent-sourcing skill:

> find senior AI engineers in SF Bay Area with 5+ years in agentic systems or multi-agent frameworks, strong Python, recent startup experience

the agent browsed the open web and read what each person has actually built

compound constraints like this break keyword search

reading pages like a human is what turns a query like this into an actual shortlist
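A toy illustration of why compound constraints trip up keyword search. The two candidates and their structured fields are invented for the example, and the step that fills those fields (the part the thread credits to the agent reading each page) is assumed rather than implemented.

```python
from dataclasses import dataclass

# HYPOTHETICAL candidates, made up for illustration. The structured fields stand in
# for what an agent might extract after reading each person's pages; that
# extraction step is assumed and not shown here.
@dataclass
class Candidate:
    name: str
    page_text: str        # raw text a keyword search would index
    location: str         # extracted: normalized metro area
    years_agentic: int    # extracted: years on agentic / multi-agent systems
    strong_python: bool   # extracted
    recent_startup: bool  # extracted

CANDIDATES = [
    Candidate("A", "Built multi-agent orchestration at a seed-stage company in Oakland "
                   "since 2019. Everything in Python.",
              "SF Bay Area", 6, True, True),
    Candidate("B", "Agentic AI enthusiast in San Francisco. Learning Python. Startup fan.",
              "SF Bay Area", 1, False, False),
]

# A literal AND over keywords, roughly what a naive search box does.
KEYWORDS = ["agentic", "san francisco", "python", "startup"]

def keyword_match(c: Candidate) -> bool:
    text = c.page_text.lower()
    return all(k in text for k in KEYWORDS)

def constraint_match(c: Candidate) -> bool:
    # Every clause of the compound query must hold at the same time.
    return (c.location == "SF Bay Area"
            and c.years_agentic >= 5
            and c.strong_python
            and c.recent_startup)

if __name__ == "__main__":
    for c in CANDIDATES:
        print(c.name, "keyword:", keyword_match(c), "constraints:", constraint_match(c))
```

Keyword search misses candidate A (who never writes "agentic" or "San Francisco") and returns candidate B (who uses every keyword but meets none of the real constraints), while the constraint check gets both right once the fields exist.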

@PlayAstrocade @sequoia yoo team.

congrats on your launch.

We raised $56M to help build the next era of interactive entertainment. Series B led by @sequoia, Series A led by Sea.

Astrocade lets anyone create games with AI, play them with friends, and share them with millions.

But this isn’t about replacing creativity. It’s about giving more people the tool to bring their taste, humor, stories, and craft to life.

Today, the fun goes public.

ColVec1-9B – huggingface.co/webAI-Official…

ColVec1-4B – huggingface.co/webAI-Official…

🚨 A $2.5B startup just put Nvidia in a sandwich on the hardest document retrieval benchmark in AI.

It's called webAI-ColVec1. And they open sourced it.

Their 9B model sits at #1 on ViDoRe V3. Their 4B model sits at #3. Nvidia's best open-source embedding model is stuck at #2 between them.

ViDoRe V3 is not a toy benchmark. 26,000+ document pages. 3,000+ human-verified queries. 10 enterprise domains.

Financial filings, healthcare records, technical manuals, dense tables, messy layouts. The stuff that actually breaks production RAG systems.

Here's what makes this different from everything else on the leaderboard:

→ Retrieves directly from rendered page images instead of extracted text

→ Skips OCR entirely. The model sees the page the same way you do

→ Tables, charts, scanned pages, dense layouts. All handled natively

→ Two model sizes: 4B for speed-sensitive edge deployments, 9B for max accuracy

→ Trained on ~2 million question-image pairs across scientific papers, financial filings, government reports, healthcare docs, and multilingual documents

→ Built on Qwen 3.5 vision-language backbones with LoRA adaptation

→ Trained on just 8 A100s with an effective batch size of 512

→ Each query learns against 511 competing document pages per training step

→ Proprietary loss function that forces cleaner separation between correct and wrong pages

→ Multiple embedding sizes (128, 640, 2560) so you pick your own speed vs. quality tradeoff
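The "512 batch, 511 competing pages" numbers are what in-batch negatives give you: each query's own page is the positive and every other page in the batch is a negative. webAI's loss is described as proprietary, so the sketch below swaps in standard in-batch InfoNCE as a stand-in. Single-vector dot-product scoring and the temperature of 0.05 are assumptions, not ColVec1's actual recipe.

```python
import math

# Stand-in: standard in-batch InfoNCE, NOT webAI's proprietary loss.

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def in_batch_infonce(queries, pages, temperature=0.05):
    """Mean in-batch InfoNCE loss; pages[i] is the correct page for queries[i].

    Every other page in the batch serves as a negative, so a batch of B
    query-page pairs gives each query B - 1 negatives.
    """
    if len(queries) != len(pages):
        raise ValueError("need exactly one positive page per query")
    total = 0.0
    for i, q in enumerate(queries):
        logits = [dot(q, p) / temperature for p in pages]
        m = max(logits)  # subtract the max for a numerically stable log-sum-exp
        log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
        total += log_sum_exp - logits[i]  # -log softmax probability of the positive
    return total / len(queries)

def negatives_per_query(batch_size):
    return batch_size - 1

if __name__ == "__main__":
    print(negatives_per_query(512))  # 511
    # Toy 4-dim one-hot embeddings, so each query matches exactly one page.
    eye = [[1.0 if r == c else 0.0 for c in range(4)] for r in range(4)]
    print(in_batch_infonce(eye, eye))                  # near 0: positives score highest
    print(in_batch_infonce(eye, list(reversed(eye))))  # about 20: positives score lowest
```

Larger batches give more negatives per step for free, which is one reason retrieval models push the effective batch size up even on modest hardware.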

Here's the wildest part:

Most enterprise teams are paying per-page and per-token fees just to get their documents into a format their RAG system can search.

Reducto charges $0.015 per page for parsing. Cohere Embed v4 costs $0.12 per million tokens.

Voyage AI's flagship model runs $0.18 per million tokens.

And all of those still depend on OCR as the first step. One bad table extraction upstream and your entire retrieval pipeline breaks.
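To put the quoted prices side by side, here is back-of-the-envelope arithmetic for a parse-then-embed pipeline over a corpus the size of ViDoRe V3 (26,000 pages). The 500 tokens per page is a hypothetical average, and the sketch ignores storage, re-indexing, and query-time embedding costs.

```python
from decimal import Decimal

# Per-unit prices quoted in the thread (USD).
PARSE_PER_PAGE = Decimal("0.015")  # Reducto parsing
EMBED_PER_MILLION_TOKENS = {
    "Cohere Embed v4": Decimal("0.12"),
    "voyage-3-large": Decimal("0.18"),
    "text-embedding-3-large": Decimal("0.13"),
}

def pipeline_cost(pages: int, tokens_per_page: int, embed_price: Decimal) -> Decimal:
    """One-time cost to parse every page, then embed the extracted text."""
    parse = PARSE_PER_PAGE * pages
    embed = embed_price * Decimal(pages * tokens_per_page) / Decimal(1_000_000)
    return parse + embed

if __name__ == "__main__":
    pages = 26_000          # ViDoRe V3 size, per the thread
    tokens_per_page = 500   # HYPOTHETICAL average; real documents vary widely
    for name, price in EMBED_PER_MILLION_TOKENS.items():
        print(f"{name}: ${pipeline_cost(pages, tokens_per_page, price):.2f}")
```

Under that 500-token assumption, the per-page parsing fee ($390) dominates the embedding fee (under $3 for any of the three models).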

webAI threw out that entire architecture. The model reads the page like a human.

And it beats every paid and open-source alternative on the benchmark designed to test exactly that.

This didn't come from a massive model or a giant infrastructure budget. 8 A100s. Deliberate training recipe. Retrieval-specific design. That's it.

Cohere Embed v4: $0.12/million tokens. Voyage AI voyage-3-large: $0.18/million tokens.

OpenAI text-embedding-3-large: $0.13/million tokens.

This: Free. Open source. #1 on the leaderboard.

@thewebAI

100% Open Source.

(Link in the comments)

Meer | AI Tools & News retweeted

Learn 95% of Codex in 28 minutes

These are the 7 knowledge-work capabilities inside Codex, the super-app:

00:00 Intro

02:19 Capability 1 - Full File Access

07:41 Capability 2 - Persistent Memory

10:46 Capability 3 - Plugins

13:52 Capability 4 - Skills

19:22 Capability 5 - GPT Image Access

21:03 Capability 6 - Browser and Computer Use

23:58 Capability 7 - Automations

25:31 Bonus Feature - Chronicle

27:21 Summary

@DataChaz How’s your experience with Hermes agent so far?

@heygurisingh I need to check out Context Mode.

This repo has been on my bookmark list for two weeks, but I haven't had enough time to play around with it.

10 GitHub repos that should be illegal to be free:

1. AutoHedge

github.com/The-Swarm-Corp…

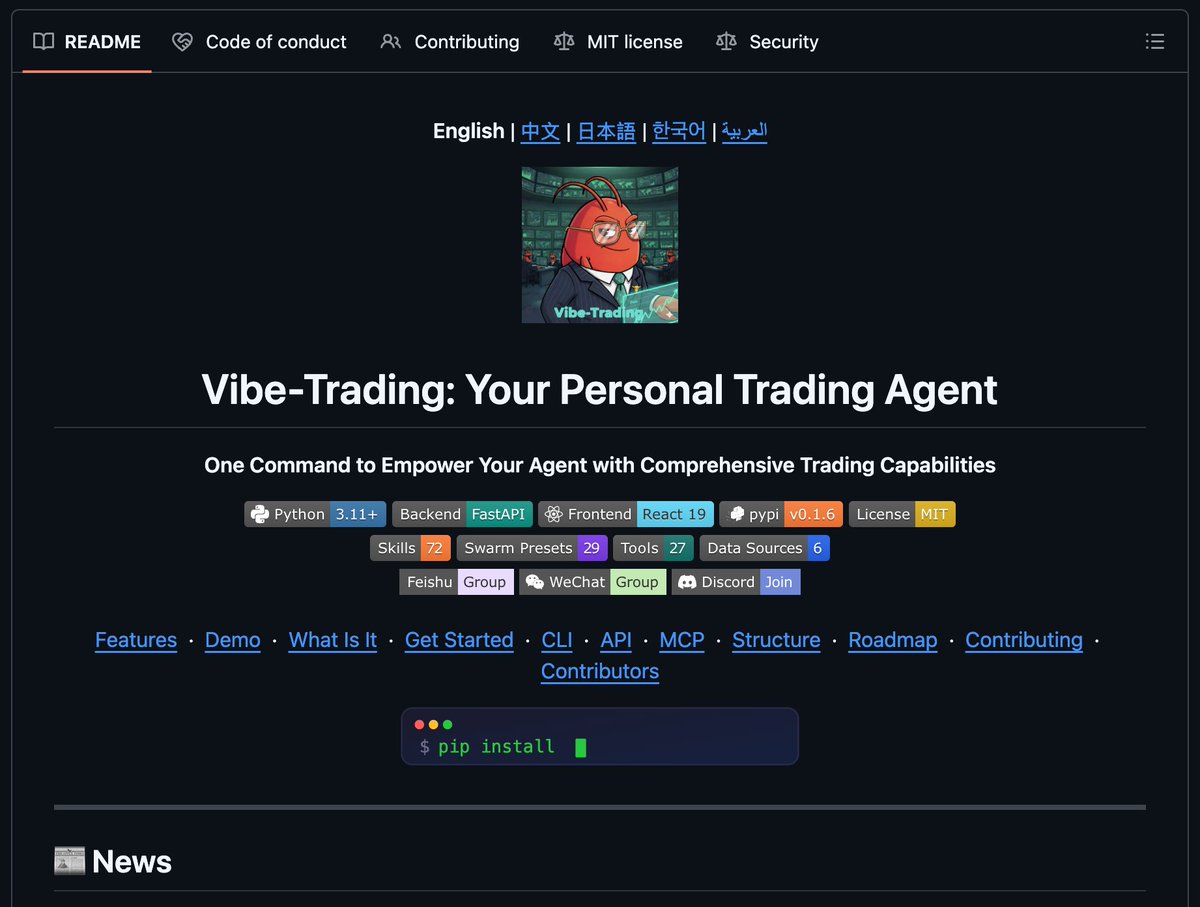

2. Vibe-Trading

github.com/HKUDS/Vibe-Tra…

3. Fincept Terminal

github.com/Fincept-Corpor…

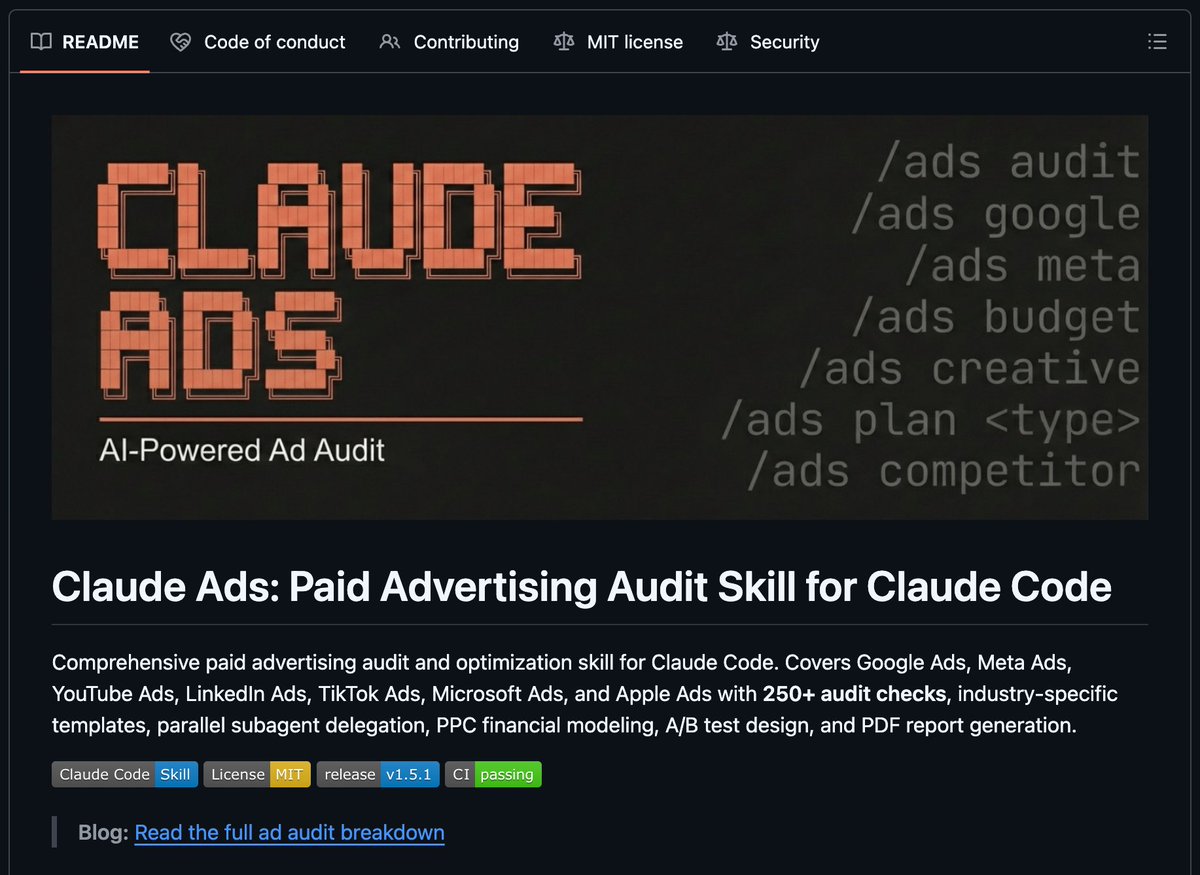

4. Claude Ads

github.com/AgriciDaniel/c…

5. Toprank

github.com/nowork-studio/…

6. Open Higgsfield AI

github.com/Anil-matcha/Op…

7. Hyperframes

github.com/heygen-com/hyp…

8. Camofox Browser

github.com/jo-inc/camofox…

9. Agentic Inbox

github.com/cloudflare/age…

10. Context Mode

github.com/mksglu/context…

15 BEST GitHub Repos for AI & ML

1. Awesome Lists: github.com/sindresorhus/a…

2. roadmap.sh:

github.com/nilbuild/devel…

3. Python Data Science Handbook:

github.com/jakevdp/Python…

4. Machine Learning Notebooks, 3rd edition: github.com/ageron/handson…

5. Designing Machine Learning Systems (Chip Huyen 2022):

github.com/chiphuyen/dmls…

6. Neural Networks: Zero to Hero: github.com/karpathy/nn-ze…

7. minGPT by karpathy:

github.com/karpathy/minGPT

8. Project Based Learning:

github.com/practical-tuto…

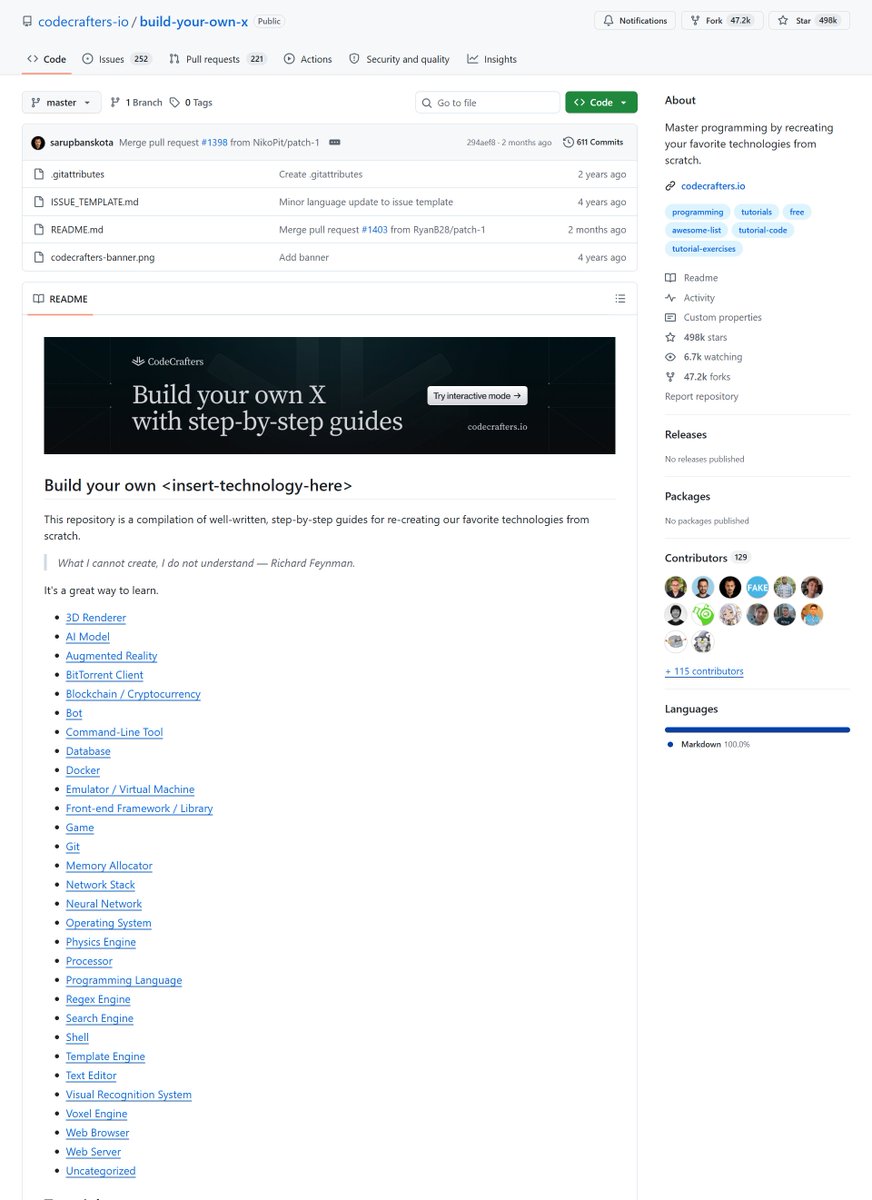

9. Build your own X:

github.com/codecrafters-i…

10. awesome-generative-ai-guide:

github.com/aishwaryanr/aw…

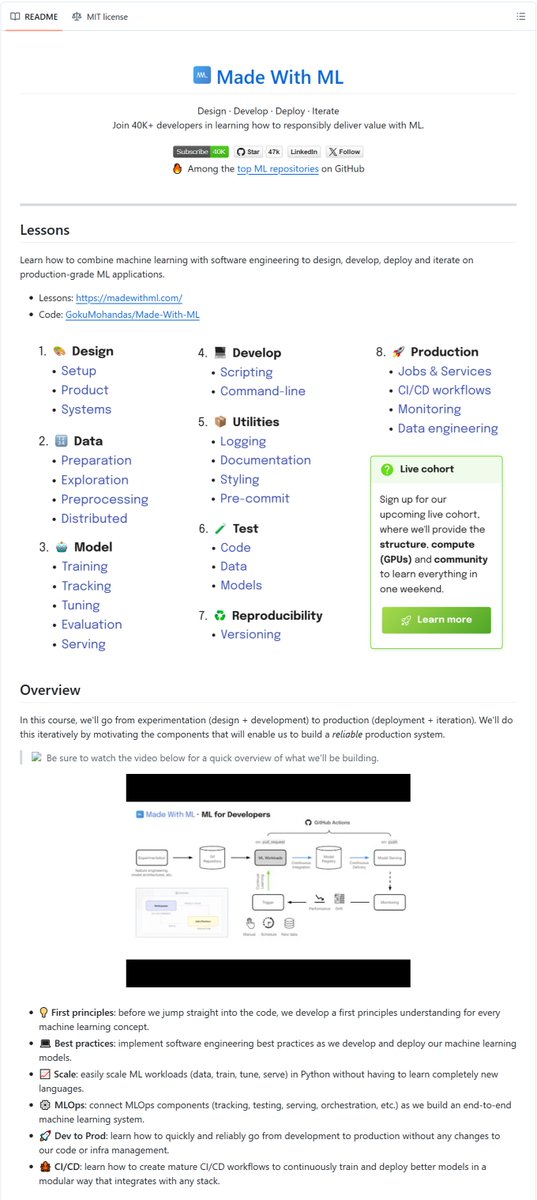

11. Made With ML:

github.com/GokuMohandas/M…

12. Awesome Machine Learning:

github.com/josephmisiti/a…

13. Awesome Data Science:

github.com/academic/aweso…

14. Awesome MLOps:

github.com/visenger/aweso…

h/t: Harry Connor AI (YouTube)

When you start enjoying Codex and your friends remind you about Claude Code rate limits.

Tibo @thsottiaux

Don't just reset Codex rate limits for fun, it costs money. Don't just reset Codex rate limits for fun, it costs money. ... but the vibes are good ... I have reset Codex rate limits for ALL paid plans to celebrate a good week and allow everyone to build more with GPT-5.5. Enjoy