Kern AI


@MeetKern

Generative AI assistants you can trust. Select AI models trained on refined data for secure, seamless human-AI collaboration.

We have finally made it! 🎉 I am both thrilled and humbled to announce the official launch of the OWASP Top 10 for Large Language Model Applications version 1.0! It is the first comprehensive, industry-standard reference for security vulnerabilities in applications using Large Language Models (LLMs). This marks a significant milestone in enabling the widespread safe and secure use of LLMs in production. 🎯 Explore our work - owasp.org/www-project-to… Chat with me about LLM security - calendly.com/golan-itamar/l… (I'm also at Black Hat, if you are interested.) 💬 It has been a real honor to play a small part in this initiative led by the amazing Steve Wilson!

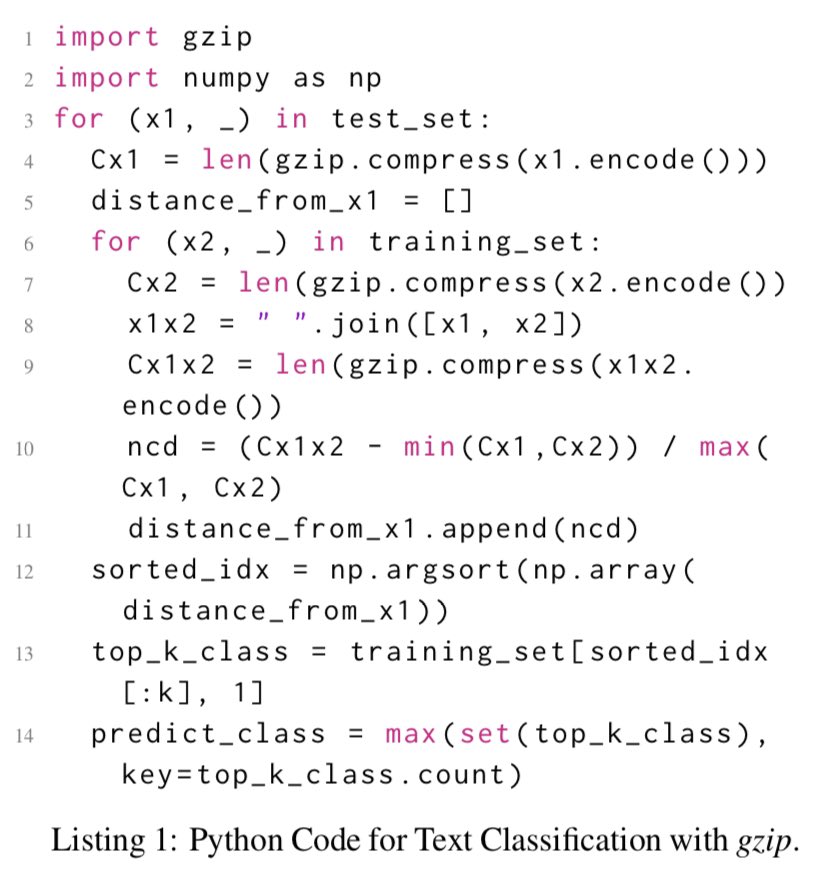

this paper's nuts. for sentence classification on out-of-domain datasets, all neural (Transformer or not) approaches lose to good old kNN on representations generated by.... gzip aclanthology.org/2023.findings-…
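
A minimal sketch of the idea in that post, assuming the approach is k-nearest-neighbour voting over a gzip-based normalized compression distance (NCD). The function names (`clen`, `ncd`, `knn_predict`) and the toy data are hypothetical illustrations, not the paper's code, and the sketch makes no claim about the reported accuracy.

```python
import gzip
from collections import Counter


def clen(text: str) -> int:
    """Length in bytes of the gzip-compressed UTF-8 text."""
    return len(gzip.compress(text.encode("utf-8")))


def ncd(a: str, b: str) -> float:
    """Normalized compression distance: smaller means more shared structure."""
    ca, cb = clen(a), clen(b)
    cab = clen(a + " " + b)  # compress the two texts concatenated
    return (cab - min(ca, cb)) / max(ca, cb)


def knn_predict(query: str, train: list[tuple[str, str]], k: int = 3) -> str:
    """Majority label among the k training texts closest to the query."""
    nearest = sorted(train, key=lambda pair: ncd(query, pair[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


if __name__ == "__main__":
    # Toy, made-up training data purely for illustration.
    train = [
        ("the team won the match after scoring two late goals", "sports"),
        ("the striker scored a goal in the final minutes of the match", "sports"),
        ("central banks raised interest rates to slow inflation", "finance"),
        ("the bank cut interest rates as inflation started to fall", "finance"),
    ]
    print(knn_predict("two goals late in the match decided the final", train, k=3))
```

There is nothing to train here: the compressor stands in for a learned representation, so the only tunable choice is `k`. On inputs this short the gzip header overhead dominates the compressed length, so the toy example is illustrative rather than reliable.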

MEGABYTE: Predicting Million-byte Sequences with Multiscale Transformers abs: arxiv.org/abs/2305.07185 paper page: huggingface.co/papers/2305.07…