MemOS


@MemOS_dev

🧠 Memory Operating System for AI Agents 🌟 Our Repo: https://t.co/PYrFDnl2Eu

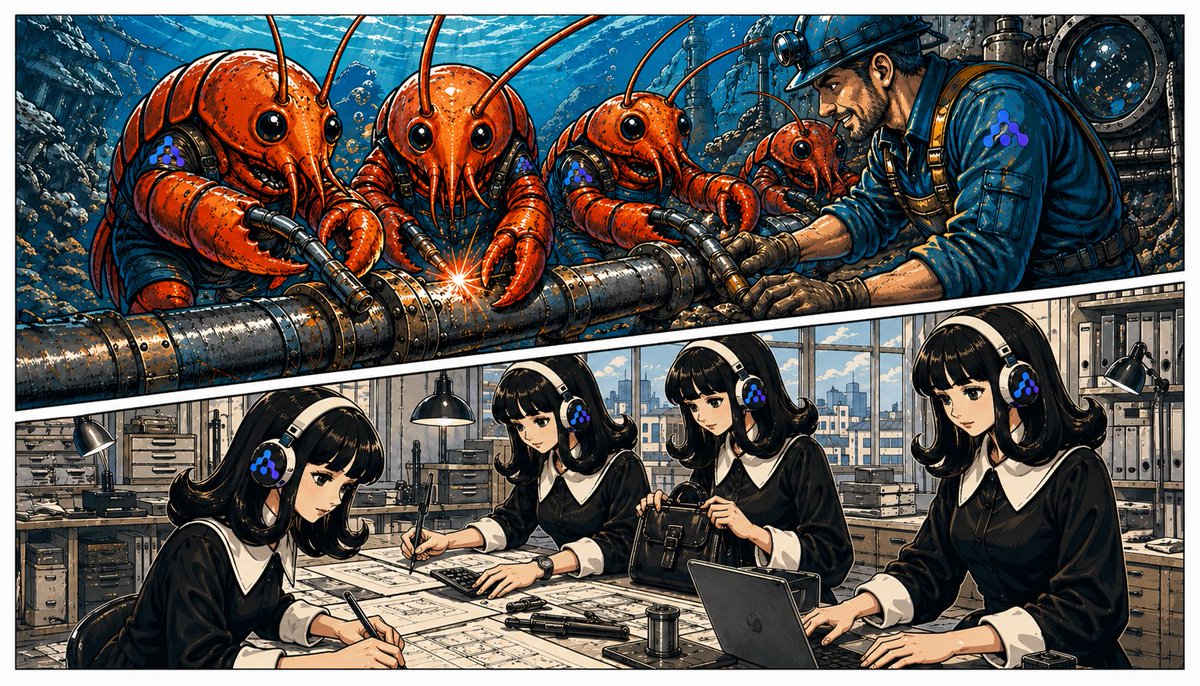

Introducing MemPrivacy from @MemOS_dev, an open-source privacy layer for end-cloud agent workflows. 🚀

Sensitive content is replaced with typed placeholders locally before reaching the cloud. The cloud reasons over

MemPrivacy ㊙️: a lightweight, privacy-preserving model for edge-cloud AI agents from MemTensor.

Instead of destroying context with masking, it preserves semantic structure using typed placeholders like
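To make the typed-placeholder idea concrete, here is a minimal sketch of the local round trip: sensitive spans are swapped for typed placeholders before text leaves the device, and the originals are restored after the cloud replies. This is not MemPrivacy's implementation. The posts describe a model-based detector, so the regex patterns, the `<LABEL_n>` placeholder syntax, and the `anonymize`/`restore` helpers below are all hypothetical stand-ins.

```python
import re

# Hypothetical detectors: MemPrivacy uses a learned model, not these regexes.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+"),
    "PHONE": re.compile(r"\+?\d[\d -]{7,}\d"),
}


def anonymize(text: str) -> tuple[str, dict[str, str]]:
    """Replace sensitive spans with typed placeholders such as <EMAIL_1>.

    Returns the masked text plus a placeholder -> original mapping that
    stays on the local device.
    """
    placeholder_for: dict[str, str] = {}  # original value -> placeholder
    counts: dict[str, int] = {}           # per-type counter for numbering
    for label, pattern in PATTERNS.items():
        def substitute(match: re.Match, label: str = label) -> str:
            value = match.group(0)
            # Repeated values reuse one placeholder, so the cloud model can
            # still tell that two mentions refer to the same entity.
            if value not in placeholder_for:
                counts[label] = counts.get(label, 0) + 1
                placeholder_for[value] = f"<{label}_{counts[label]}>"
            return placeholder_for[value]
        text = pattern.sub(substitute, text)
    mapping = {ph: value for value, ph in placeholder_for.items()}
    return text, mapping


def restore(text: str, mapping: dict[str, str]) -> str:
    """Swap placeholders in a cloud response back to the original values."""
    for placeholder, value in mapping.items():
        text = text.replace(placeholder, value)
    return text


original = "Email alice@example.com or call +1 555 123 4567. Again: alice@example.com"
masked, mapping = anonymize(original)
print(masked)  # Email <EMAIL_1> or call <PHONE_1>. Again: <EMAIL_1>

# Simulated cloud reply that reasons over the placeholders only.
cloud_reply = "I will write to <EMAIL_1> and then call <PHONE_1>."
print(restore(cloud_reply, mapping))
```

Unlike blanket masking (e.g. replacing everything with `***`), the typed placeholders keep the entity type and identity visible to the cloud model, which is the semantic structure the posts refer to, while the raw values never leave the local mapping.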