Pedro Milcent

@MilcentPedro

🤖 Bringing robots to life with human data at @DeplaceAI. Opinions are my own.

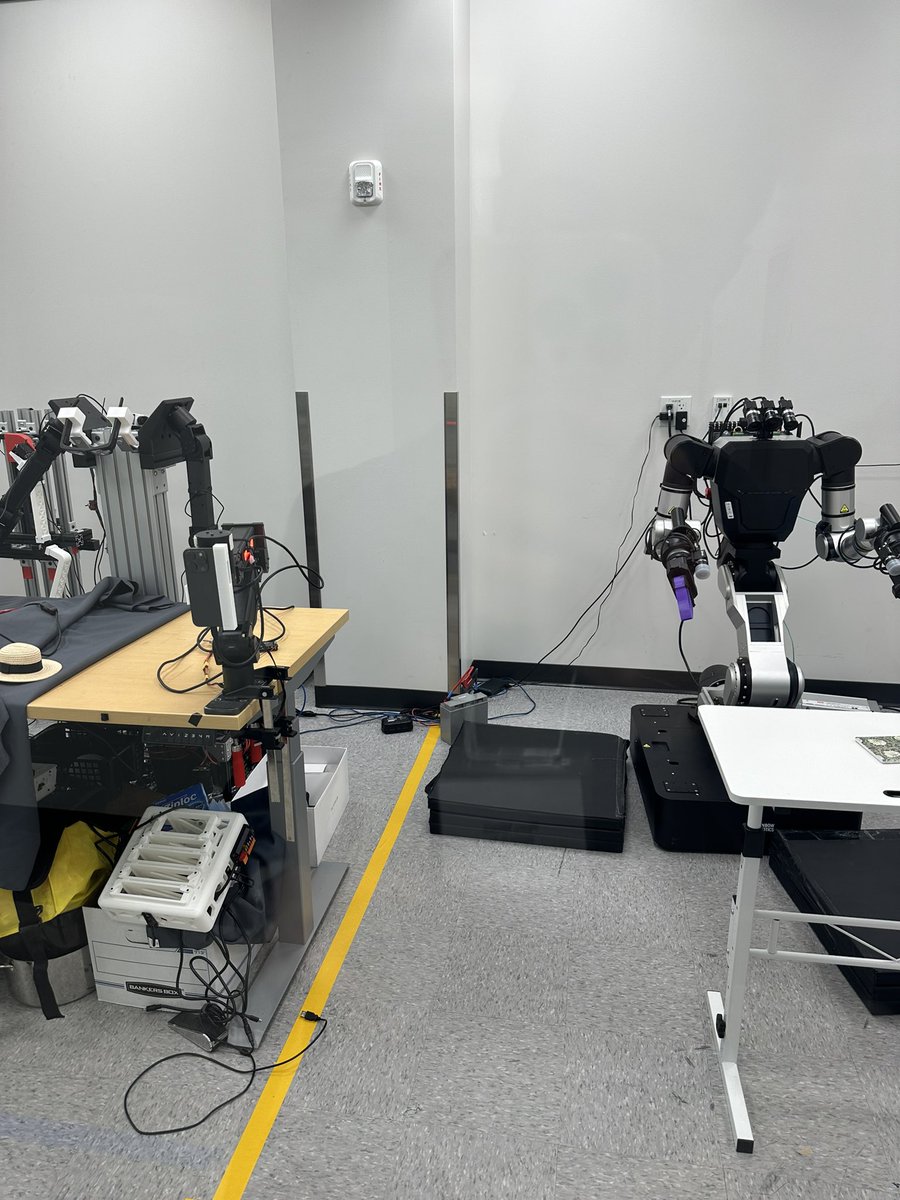

You will soon be able to teach robots what humans are doing… using natural language.

I spoke to one of the founders a couple of months ago on my podcast: an API that takes raw human videos and returns detailed language annotations describing the motion.

@DeplaceAI is building something wild. Not just basic labels, it captures:

✅ motion semantics
✅ relative positions
✅ cause and effect
✅ task outcomes
✅ and more

It’s built on top of research in point tracking, segmentation, and learning from demos, all to make it easier to train robots and embodied agents without manual labeling.

They’re offering early access and sharing some of the datasets they collected via their global network of video collectors.

Thanks for sharing, @MilcentPedro! 🔗 forms.gle/sSHTPBDVkBtnHh…

One of the most interesting motion-to-language interfaces I’ve seen. If you’re working in robotics, vision, or LfD, it’s worth checking out.

Join us on Saturday, 21st June at EgoAct 🥽🤖: the 1st Workshop on Egocentric Perception & Action for Robot Learning @ RSS 2025 @RoboticsSciSys in Los Angeles! ☀️🌴

Full program w/ accepted contributions & talks at: egoact.github.io/rss2025

Online stream: tinyurl.com/egoact