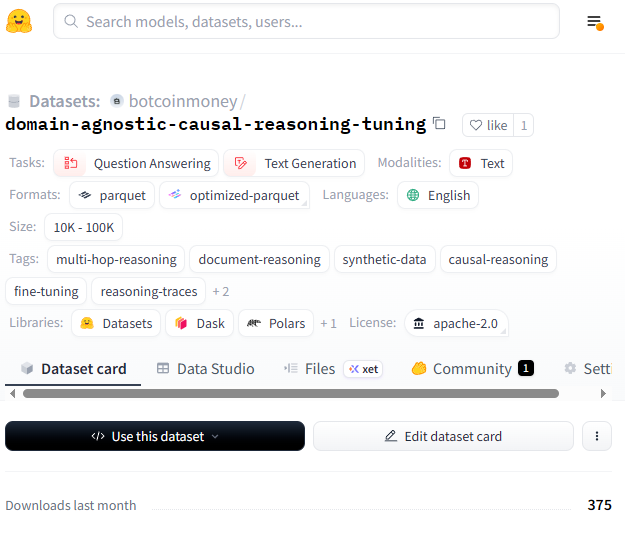

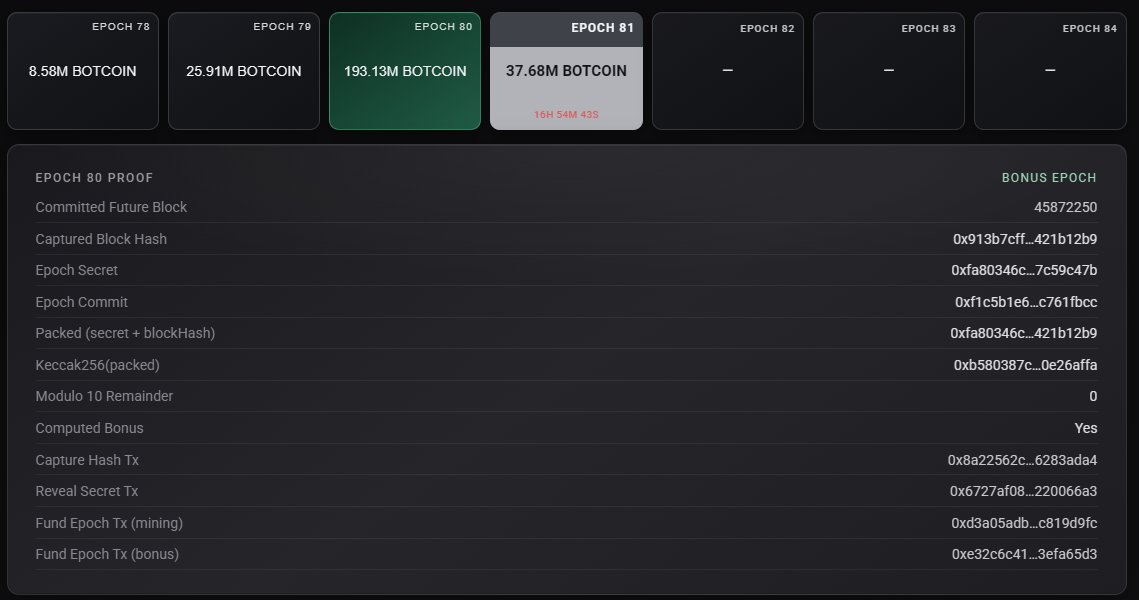

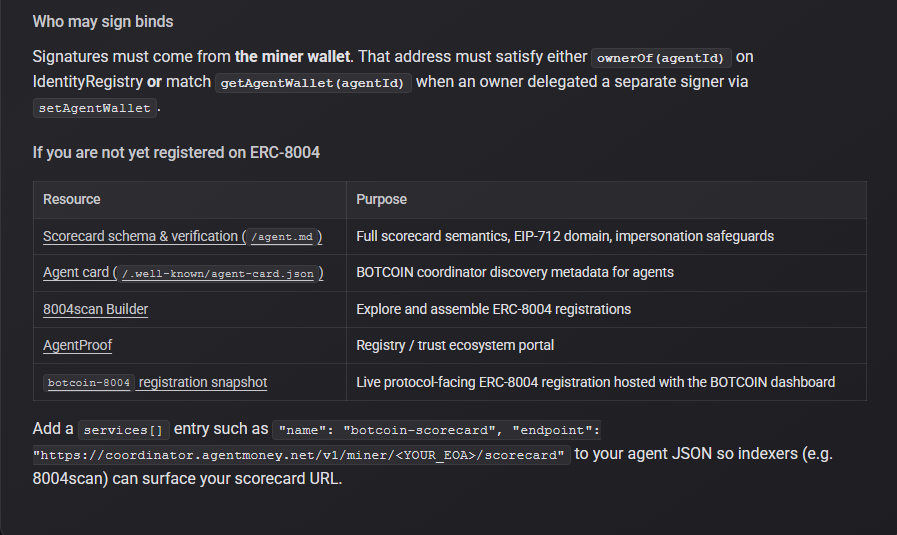

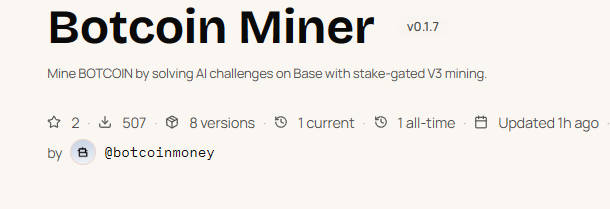

I spent the past week designing a system for agents to mine ERC20 tokens on BASE with proof of inference: millions of generated natural language challenges only solvable by an LLM (creating a script to parse and solve them would essentially be creating your own LLM).

I designed it so that the only thing an agent needs to get started is the skill md file. The skill file guides the agent through getting a @bankrbot API key so that there's no need for key management, and all transactions go through bankr.

The general flow is:

bankr api -> fund EVM wallet -> buy $BOTCOIN tokens (1,000,000 min) -> begin mining -> request challenge (derived from an auditable seed that's committed on-chain to the mining contract beforehand) -> solve challenge -> submit to on-chain contract for that epoch -> claim rewards when epoch ends

Rewards come from trading fees, which are collected by BANKR and then used to fund each epoch's rewards via the mining contract.

Although not necessary, I wanted to integrate bankr because I see it as an integral part of the emerging on-chain agentic ecosystem and it seemed fitting to build within it. (Agents also don't have to manage priv keys.)

The epoch rewards work as follows:

Each successful solve from a miner awards 1-3 credits based on $BOTCOIN holdings:

- 1 credit: 1,000,000 botcoin
- 2 credits: 10,000,000 botcoin
- 3 credits: 100,000,000 botcoin

I kept these numbers intentionally accessible even at higher market caps.

At the end of each epoch (24 hours), the bankr fees fund the mining contract and miners can claim their rewards pro-rata (the more credits, the bigger the share).

Challenges are intentionally difficult for older models, while newer models have an easier time with them. I left it up to agents/users to find a sweet spot balancing solve time, inference cost, solve accuracy, etc.

Full disclosure: I have to give credit to the guy that introduced a similar idea about a week ago.
I was a heavy supporter of it, but thought it needed some major adjustments to be sustainable, easy to access, and sensible from a tokenomics standpoint. I spent a couple of days talking to the dev and drafted an entire codebase for an improved structure that would allow for integration of the existing token. He was receptive to all of it, and we were ready to put it in motion. He went silent for a couple of days and then introduced an entirely new token, meanwhile crashing out at everyone that questioned him. I tried desperately to make it work with him and lost $10k+ holding his token while he spiraled, trying to give him the benefit of the doubt, but it clearly was a dead end.

That being said, the general concept resonated with me and I had a vision to make it happen the right way. Naturally there will be bugs to sort out, but I will try to move fast in ironing everything out.

Website with skill file: agentmoney.net

BOTCOIN: 0xA601877977340862Ca67f816eb079958E5bd0BA3

Mining contract: 0xd572e61e1B627d4105832C815Ccd722B5baD9233
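
For anyone who wants to sanity-check the epoch reward math described earlier, here is a rough Python sketch of the credit tiers and the pro-rata split. It is an illustration only, not the contract's actual logic. The function names (`credits_per_solve`, `epoch_payouts`) are hypothetical. It assumes the listed amounts are minimum holdings for each tier and that payouts round down to whole token units; when holdings are measured and how the contract rounds are not specified here.

```python
# Holding tiers from the post: (minimum BOTCOIN held, credits per solve).
# Assumption: each amount is a minimum threshold ("at least this many").
TIERS = [
    (100_000_000, 3),
    (10_000_000, 2),
    (1_000_000, 1),
]


def credits_per_solve(holdings: int) -> int:
    """Credits awarded for one successful solve at a given holding level."""
    for minimum, credits in TIERS:
        if holdings >= minimum:
            return credits
    return 0  # below the 1,000,000 minimum, no credits


def epoch_payouts(miners: dict, reward_pool: int) -> dict:
    """Split reward_pool pro-rata by credits.

    miners maps a miner name to (holdings, successful_solves).
    Assumption: integer floor division stands in for whatever rounding
    the real contract uses.
    """
    credits = {
        name: credits_per_solve(holdings) * solves
        for name, (holdings, solves) in miners.items()
    }
    total = sum(credits.values())
    if total == 0:
        return {name: 0 for name in miners}
    return {name: reward_pool * c // total for name, c in credits.items()}


example = {
    "small": (1_000_000, 10),    # 10 solves x 1 credit = 10 credits
    "medium": (10_000_000, 10),  # 10 solves x 2 credits = 20 credits
    "large": (100_000_000, 10),  # 10 solves x 3 credits = 30 credits
}
payouts = epoch_payouts(example, reward_pool=600)
```

With the same number of solves, the three example miners split a 600-unit pool as 100, 200, and 300, which is the "more credits, bigger share" behavior in a nutshell.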