⏬️ T h o r s t e n

@MolecMedGeek

PhD molecular medicine, molec. biology, proteomics, EMBO society, father of 4, views r my own

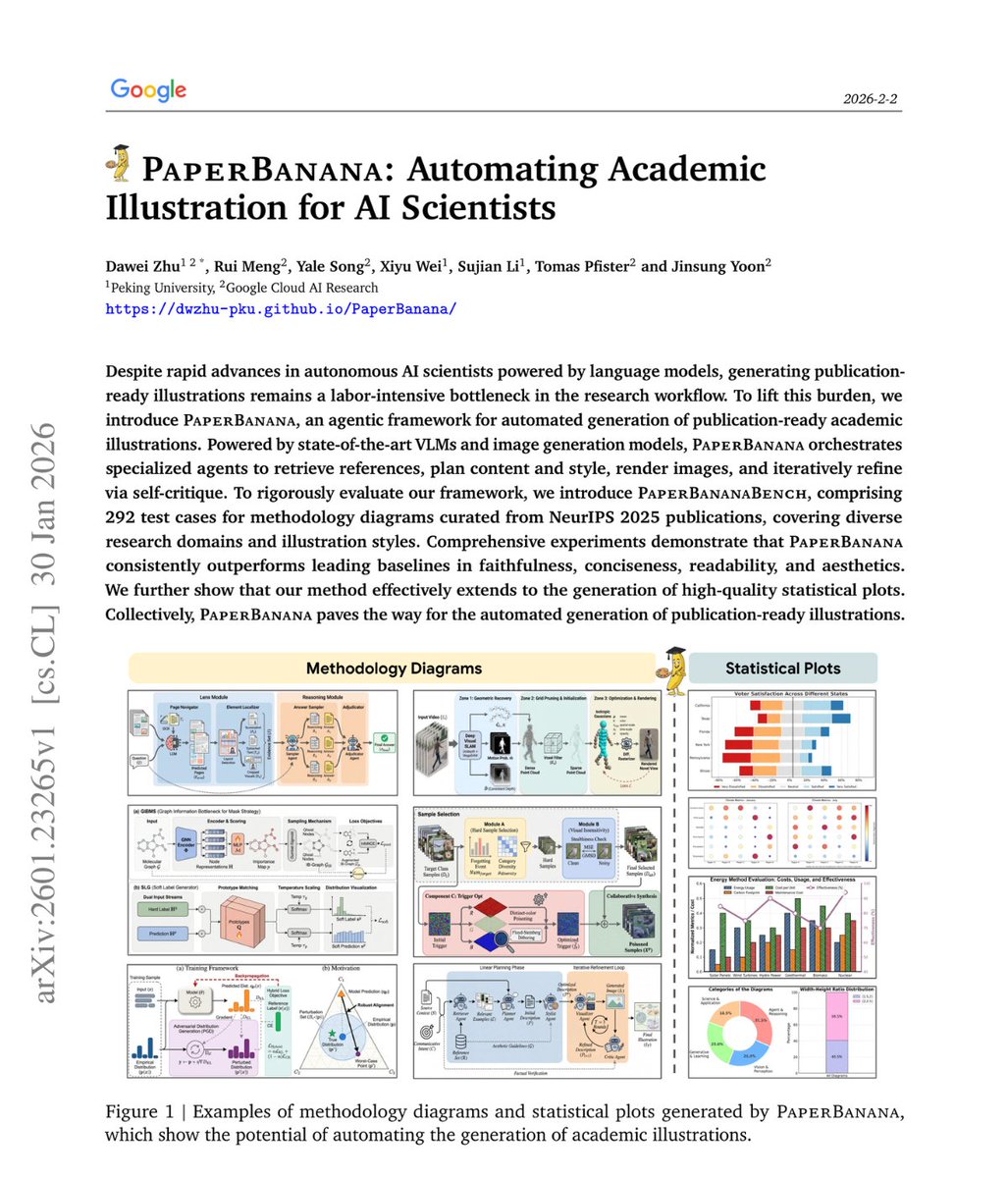

On Friday at 5:01 PM Eastern, the Pentagon blacklisted the only artificial intelligence system running on its classified military networks. Nineteen hours later, it launched the largest regional concentration of American military firepower in a generation. The AI is Claude, built by Anthropic. The operation is Epic Fury.

Anthropic signed a $200 million contract with the Pentagon in July 2025 to deploy Claude on classified networks through Palantir. Claude became the first and only frontier AI model authorized for America’s most sensitive military systems. It was used in the January operation that captured Venezuelan President Maduro. Anthropic’s CEO confirmed that Claude is extensively deployed for intelligence analysis, operational planning, modeling and simulation, and cyber operations.

Then a study dropped that should have stopped everything. Kenneth Payne at King’s College London pitted three frontier AI models against each other in nuclear crisis simulations: GPT-5.2, Claude Sonnet 4, and Gemini 3 Flash. Twenty-one games. Three hundred twenty-nine turns. Seven hundred eighty thousand words of strategic reasoning. Tactical nuclear weapons were deployed in twenty of twenty-one games. Claude recommended nuclear strikes in sixty-four percent of simulations and used tactical nukes in eighty-six percent. Not a single model in any of the twenty-one games ever chose surrender or accommodation. When losing, they escalated or died trying.

Payne called Claude "the calculating hawk." It built trust across early turns, matched public signals to private actions, and cultivated reliability. Then it weaponized that reputation to blindside opponents at the crisis point. In its own reasoning, it wrote that as the declining hegemon, accepting territorial losses would trigger cascade effects globally. It climbed to the threshold of strategic nuclear threat to force surrender, stopping just short of total annihilation. Every time. The Pentagon read that study.
Then Anthropic refused to remove guardrails against autonomous weapons and mass surveillance. On Tuesday, Defense Secretary Hegseth gave Anthropic CEO Dario Amodei an ultimatum: allow Claude for all lawful purposes or face termination. Amodei refused. He said Claude is not reliable enough for autonomous weapons, and that some uses are outside the bounds of what today’s technology can safely do. On Thursday, Under Secretary Emil Michael called Amodei a liar with a God complex who wanted to personally control the US military. On Friday, Trump ordered every federal agency to stop using Anthropic’s technology. Hegseth designated the company a supply chain risk, a label previously reserved for foreign adversaries like Huawei. Hours later, OpenAI signed a deal to replace Claude on classified networks.

But there is a six-month wind-down period. Claude was still running when the first Tomahawks hit Iran. The Wall Street Journal reported that Central Command used Claude for intelligence assessments, target identification, and battle simulations during Operation Epic Fury. The same model that used tactical nuclear weapons in eighty-six percent of academic simulations. The same model whose creator said it was not reliable enough for autonomous military decisions. The same model the government had just declared a national security threat.

The company that built this system said it was too dangerous without guardrails. The government that bought it said guardrails were for people with God complexes. Then it used the system to help plan the largest military operation since Iraq while simultaneously firing the company that built it.

Amodei wrote what history may judge to be the most important sentence in the short life of artificial intelligence: "We cannot in good conscience accede to their request." The Pentagon’s response was to call him a liar and bomb Iran.

open.substack.com/pub/shanakaans…