Razim

239 posts

The $NVDA Tax is Ending 📉 Custom Chips Are the AI Endgame!

NVIDIA's dominance is cracking with Google's TPU Edge and xAI's TeraFab Bet. The hard truth is that if you don’t own the silicon, you don’t own your margins. OpenAI's GPT series is bleeding cash on NVIDIA H100s, with billions spent on NVIDIA hardware and no quick escape route. The giants are quietly leaving the ecosystem.

> xAI bridges the gap with its NVIDIA-powered Colossus cluster today but eyes full independence via TeraFab, a massive fab aiming to produce chips at 1/10th the cost.

> Google sidesteps NVIDIA's high costs by training Gemini entirely on in-house TPUs, saving 50-70% on compute expenses compared to GPU rivals.

> The broader trend is that Meta, Amazon, and Microsoft are racing to custom silicon, signaling a shift from NVIDIA dominance as AI power demands skyrocket.

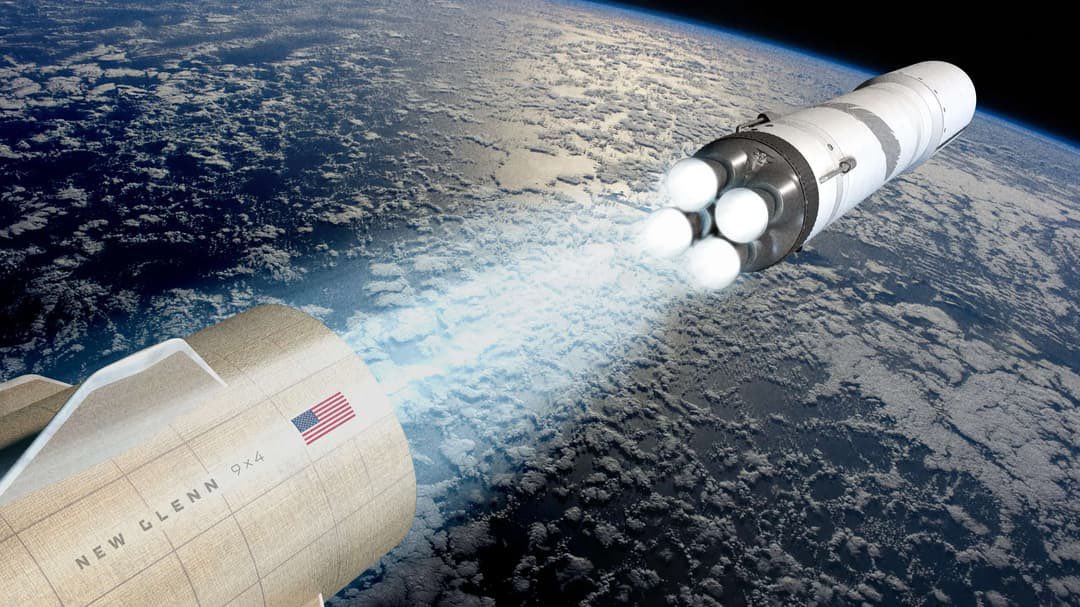

1 m² of solar in space generates 10x more energy than it does on Earth. That’s because in space there is no night, no clouds, and no thick atmosphere. A 1 km² solar array in space would produce as much power as a nuclear reactor. That’s why space datacenters make sense.
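The 1 km² comparison can be sanity-checked with a rough sketch. It assumes a solar constant of about 1361 W/m² above the atmosphere and a panel efficiency of 30%; the efficiency figure and the function name are illustrative assumptions, not numbers from the post.

```python
# Approximate sunlight intensity above the atmosphere, in watts per square metre.
SOLAR_CONSTANT_W_PER_M2 = 1361.0


def array_power_mw(area_m2: float, efficiency: float) -> float:
    """Electrical output in megawatts for an array that faces the Sun continuously."""
    return SOLAR_CONSTANT_W_PER_M2 * area_m2 * efficiency / 1e6


ONE_KM2_IN_M2 = 1_000_000.0

# Sunlight falling on the array before any conversion losses.
incident_mw = array_power_mw(ONE_KM2_IN_M2, 1.0)

# Assumed 30% cell efficiency (hypothetical, not from the post).
electrical_mw = array_power_mw(ONE_KM2_IN_M2, 0.30)

print(f"Incident sunlight on 1 km^2: {incident_mw:.0f} MW")
print(f"Electrical output at 30% efficiency: {electrical_mw:.0f} MW")
```

Under these assumptions the array intercepts roughly 1.36 GW of sunlight and delivers about 0.41 GW of electricity, which is the same order of magnitude as a large reactor at around 1 GW.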

The US consumes about 4,000 terawatt-hours (TWh) of electricity annually, and 100 GW of continuous output would represent almost 22% of that total.

1/ Starlink V3 satellites are massively scaled for compute. Estimated power output is 10-50 kW per satellite (5x the V2 output).

2/ Each V3 could host Tesla Dojo/AI chips similar to those in Tesla's supercomputers. A single satellite could pack 1-10 petaflops of compute, networked into clusters.

3/ High-speed optical links, with up to 1 Tbps throughput, connect satellites, creating a mesh for real-time AI inference/training across the constellation.

4/ SpaceX will launch 10,000+ V3s over time, potentially generating 100+ GW collectively, enabled by Starship.
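The figures in this post can be checked with a few lines of arithmetic. The sketch below takes the post's numbers at face value (4,000 TWh per year, 100 GW, 10-50 kW per satellite, 10,000 satellites) and assumes the power is delivered continuously all year; the function names are illustrative.

```python
HOURS_PER_YEAR = 8760          # 365 days x 24 hours
US_ANNUAL_TWH = 4000.0         # figure quoted in the post


def annual_twh(power_gw: float) -> float:
    """Energy in TWh delivered by a constant power level over one year."""
    return power_gw * HOURS_PER_YEAR / 1000.0


def fleet_power_gw(n_satellites: int, kw_per_satellite: float) -> float:
    """Total fleet power in GW for a given per-satellite output in kW."""
    return n_satellites * kw_per_satellite / 1e6


# Share of US consumption represented by 100 GW of continuous output.
share = annual_twh(100.0) / US_ANNUAL_TWH

# Fleet totals at the low and high ends of the quoted per-satellite range.
low_gw = fleet_power_gw(10_000, 10.0)
high_gw = fleet_power_gw(10_000, 50.0)

# Satellites needed to reach 100 GW at the top of the quoted range.
sats_for_100_gw = 100.0 * 1e6 / 50.0

print(f"100 GW for a year: {annual_twh(100.0):.0f} TWh ({share:.1%} of US use)")
print(f"10,000 satellites: {low_gw:.1f} to {high_gw:.1f} GW")
print(f"Satellites needed for 100 GW at 50 kW each: {sats_for_100_gw:,.0f}")
```

With these inputs, 100 GW running continuously comes to 876 TWh per year, about 21.9% of 4,000 TWh. A fleet of 10,000 satellites at 10-50 kW each totals 0.1-0.5 GW, so reaching 100 GW would take either far more satellites or far more power per satellite than the estimates quoted.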


Most people don’t know that Tesla has had an advanced AI chip and board engineering team for many years. That team has already designed and deployed several million AI chips in our cars and data centers. These chips are what enable Tesla to be the leader in real-world AI. The current version in cars is AI4, we are close to taping out AI5 and are starting work on AI6. Our goal is to bring a new AI chip design to volume production every 12 months. We expect to build chips at higher volumes ultimately than all other AI chips combined. Read that sentence again, as I’m not kidding. These chips will profoundly change the world in positive ways, saving millions of lives due to safer driving and providing advanced medical care to all people via Optimus. Send an email with three bullet points describing evidence of your exceptional ability to AI_Chips@Tesla.com. We are particularly interested in applying cutting edge AI to chip design. Thanks, Elon

@XFreeze The biggest hurdle is launch capacity, which of course Elon can achieve with Starship. The system would require around 500,000 tons to LEO. That means 3,300 Falcon launches per year, or 1,700 flights per year with Starship. x.com/Mostlyy_Human/…
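As a rough check on those launch counts, the sketch below works out the payload per flight they imply. It assumes the full 500,000 tons goes up within a single year, which the post does not state explicitly; the function name is illustrative.

```python
TOTAL_MASS_TONS = 500_000.0    # mass to LEO quoted in the post


def implied_payload_tons(total_tons: float, flights: int) -> float:
    """Average payload per flight needed to lift total_tons in the given number of flights."""
    return total_tons / flights


# Flight counts quoted in the post, assumed to cover the full mass in one year.
falcon_payload = implied_payload_tons(TOTAL_MASS_TONS, 3_300)
starship_payload = implied_payload_tons(TOTAL_MASS_TONS, 1_700)

print(f"Implied payload per Falcon launch: {falcon_payload:.0f} t")
print(f"Implied payload per Starship flight: {starship_payload:.0f} t")
```

Under that one-year assumption, the quoted counts imply roughly 152 t per Falcon launch and 294 t per Starship flight; spreading the mass over several years would lower both figures proportionally.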