Mrigank Raman

42 posts

@MrigankRaman

NLP enthusiast, MSML@CMU | Applying to PhD programs this cycle

🧵 Are "medical" LLMs/VLMs, *adapted* from general-domain models, always better at answering medical questions than the original models? In our oral presentation at #EMNLP2024 today (2:30pm in Tuttle), we'll show that, surprisingly, the answer is "no". arxiv.org/abs/2411.04118

1/ What does it mean for an LLM to “memorize” a doc? Exactly regurgitating a NYT article? Of course. Just training on NYT? Harder to say. We take big strides in this discourse w/ *Adversarial Compression* w/ @A_v_i__S @zhilifeng @zacharylipton @zicokolter 🌐: locuslab.github.io/acr-memorizati… 🧵

Hello world! We are incredibly excited to come out of stealth today to help make better data accessible to everyone, automatically. Hear from our founders about our mission and vision for DatologyAI: datologyai.com/post/introduci…

Language models are weak learners paper page: huggingface.co/papers/2306.14… A central notion in practical and theoretical machine learning is that of a weak learner, classifiers that achieve better-than-random performance (on any given distribution over data), even by a small margin. Such weak learners form the practical basis for canonical machine learning methods such as boosting. In this work, we illustrate that prompt-based large language models can operate effectively as said weak learners. Specifically, we illustrate the use of a large language model (LLM) as a weak learner in a boosting algorithm applied to tabular data. We show that by providing (properly sampled according to the distribution of interest) text descriptions of tabular data samples, LLMs can produce a summary of the samples that serves as a template for classification and achieves the aim of acting as a weak learner on this task. We incorporate these models into a boosting approach, which in some settings can leverage the knowledge within the LLM to outperform traditional tree-based boosting. The model outperforms both few-shot learning and occasionally even more involved fine-tuning procedures, particularly for tasks involving small numbers of data points. The results illustrate the potential for prompt-based LLMs to function not just as few-shot learners themselves, but as components of larger machine learning pipelines.
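For readers who want the boosting idea above in concrete form, here is a minimal sketch, not the paper's implementation. It is plain AdaBoost with resampling: each round draws examples according to the current weight distribution and hands them to a weak learner. The names `fit_stump` and `adaboost` are hypothetical, and the threshold rule in `fit_stump` is only a stand-in for the LLM; in the setting the post describes, that slot would be filled by an LLM prompted with text descriptions of the sampled rows.

```python
import math
import random


def fit_stump(X, y):
    """Stand-in weak learner (an assumption, not the paper's LLM):
    picks the single-feature threshold rule with the fewest errors."""
    best = None
    for j in range(len(X[0])):
        for t in sorted({row[j] for row in X}):
            for sign in (1, -1):
                errors = sum(
                    1 for row, label in zip(X, y)
                    if (sign if row[j] >= t else -sign) != label
                )
                if best is None or errors < best[0]:
                    best = (errors, j, t, sign)
    _, j, t, sign = best
    return lambda row: sign if row[j] >= t else -sign


def adaboost(X, y, fit_weak, rounds=10, seed=0):
    """AdaBoost with resampling; labels must be +1 or -1."""
    rng = random.Random(seed)
    n = len(X)
    weights = [1.0 / n] * n
    ensemble = []
    for _ in range(rounds):
        # Sample training examples according to the current distribution.
        idx = rng.choices(range(n), weights=weights, k=n)
        h = fit_weak([X[i] for i in idx], [y[i] for i in idx])
        preds = [h(row) for row in X]
        # Weighted error of this learner on the full training set.
        err = sum(w for w, p, label in zip(weights, preds, y) if p != label)
        if err >= 0.5:
            continue  # no better than random on this distribution; skip it
        err = max(err, 1e-10)  # avoid division by zero for a perfect learner
        alpha = 0.5 * math.log((1 - err) / err)
        ensemble.append((alpha, h))
        # Upweight misclassified examples, then renormalize.
        weights = [w * math.exp(-alpha * label * p)
                   for w, p, label in zip(weights, preds, y)]
        total = sum(weights)
        weights = [w / total for w in weights]

    def predict(row):
        score = sum(alpha * h(row) for alpha, h in ensemble)
        return 1 if score >= 0 else -1

    return predict


# Toy data, made up purely for illustration.
X = [[0], [0], [0], [10], [10], [10]]
y = [-1, -1, -1, 1, 1, 1]
predict = adaboost(X, y, fit_stump)
```

Swapping `fit_stump` for a function that builds a prompt from the sampled rows and returns a classifier is the only change the outer loop would need, which is the sense in which the weak learner acts as a component of a larger pipeline.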

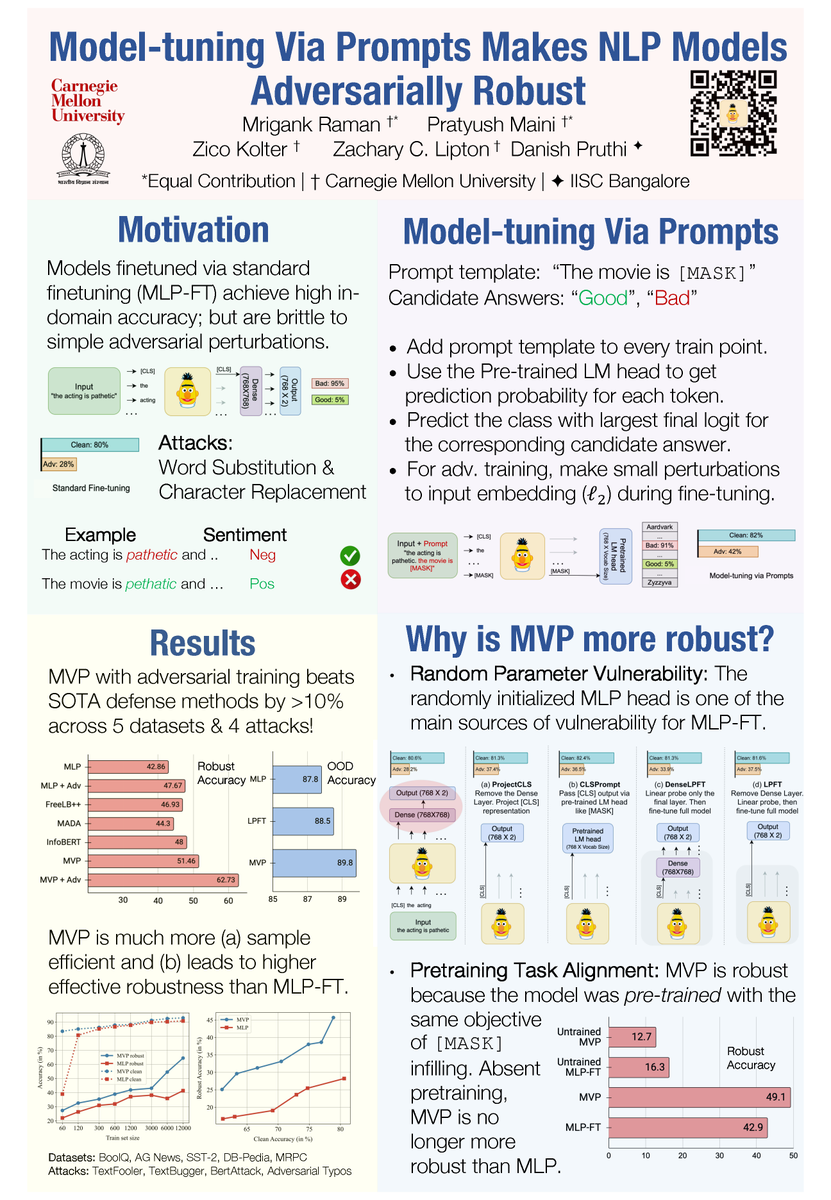

🚨⚠️ Stop using the [CLS] token ⚠️🚨 I will be talking about 1 simple trick to astonishingly boost the robustness of your NLP classifiers. Today, 2pm at #EMNLP2023 "Model-tuning Via Prompts Makes NLP Models Adversarially Robust" 📝arxiv.org/abs/2303.07320 Summary below 1/🧵
