Daniel P Jeong

@danielpjeong

PhD student @mldcmu @SCSatCMU + intern @AbridgeHQ | ML for Healthcare | Previously @MSFTResearch, @columbia, @nasajpl

Can language models learn useful priors without ever seeing language? We pre-pre-train transformers on neural cellular automata — fully synthetic, zero language. This improves language modeling by up to 6%, speeds up convergence by 40%, and strengthens downstream reasoning. Surprisingly, it even beats pre-pre-training on natural text! Blog: hanseungwook.github.io/blog/nca-pre-p… (1/n)

1/ We found that deep sequence models memorize atomic facts "geometrically" -- not as an associative lookup table as often imagined. This opens up practical questions on reasoning/memory/discovery, and also poses a theoretical "memorization puzzle."

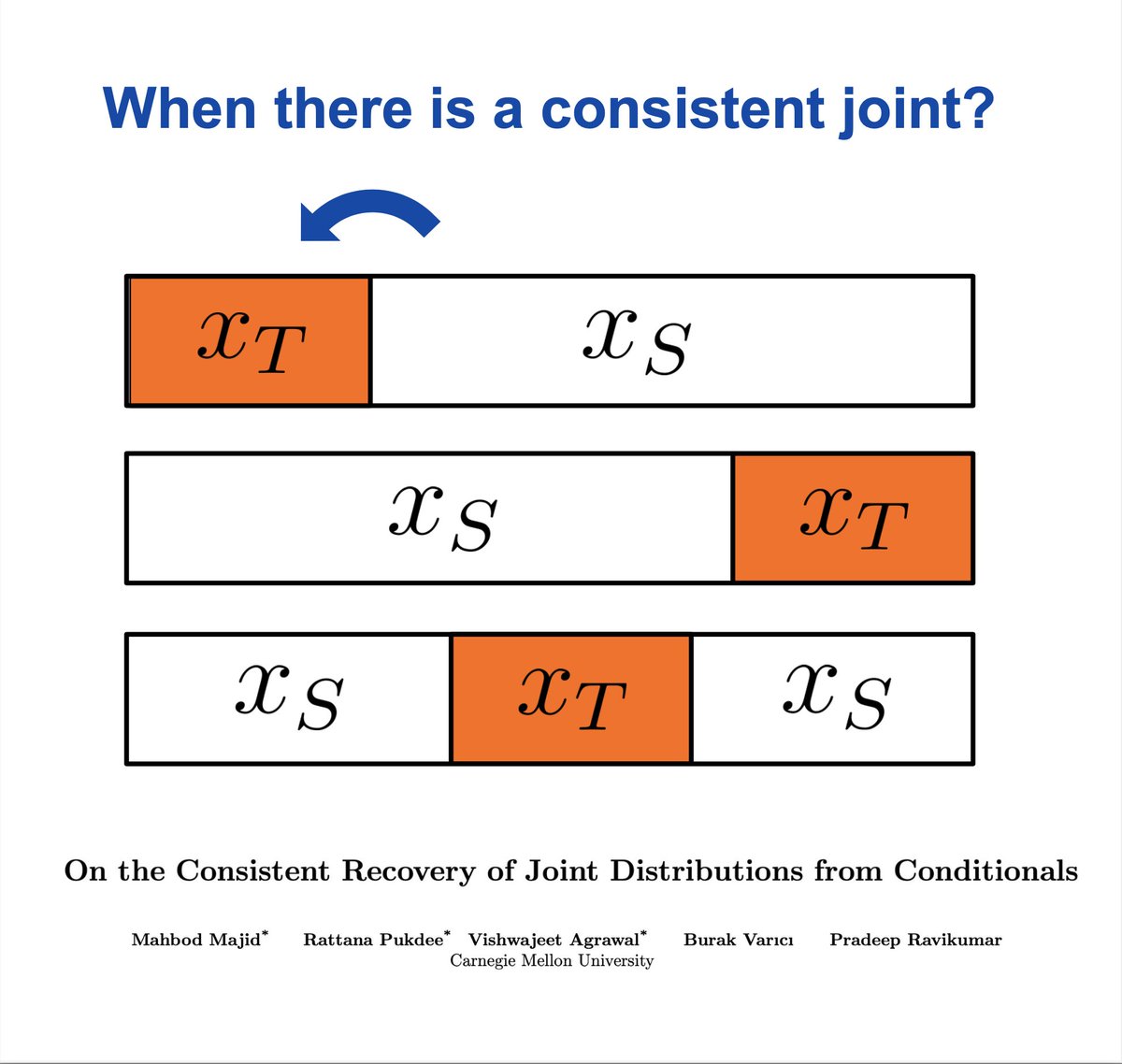

💡 Can we trust synthetic data for statistical inference? We show that synthetic data (e.g., LLM simulations) can significantly improve the performance of inference tasks. The key intuition lies in the interactions between the moments of synthetic data and those of real data.

[1/9] While pretraining data might be hitting a wall, novel methods for modeling it are just getting started! We introduce future summary prediction (FSP), where the model predicts future sequence embeddings to reduce teacher forcing & shortcut learning. 📌Predict a learned embedding of the future sequence, not the tokens themselves

This Monday, Daniel Jeong from Carnegie Mellon University will be joining us to talk about his work on the limited impact of medical adaptation of LLMs and VLMs. Catch it from 1-2pm PT on Zoom! Subscribe to mailman.stanford.edu/mailman/listin… #ML #AI #medicine #healthcare

Thinking Machines Lab exists to empower humanity through advancing collaborative general intelligence. We're building multimodal AI that works with how you naturally interact with the world - through conversation, through sight, through the messy way we collaborate. We're excited that in the next couple months we’ll be able to share our first product, which will include a significant open source component and be useful for researchers and startups developing custom models. Soon, we’ll also share our best science to help the research community better understand frontier AI systems. To accelerate our progress, we’re happy to confirm that we’ve raised $2B led by a16z with participation from NVIDIA, Accel, ServiceNow, CISCO, AMD, Jane Street and more who share our mission. We’re always looking for extraordinary talent that learns by doing, turning research into useful things. We believe AI should serve as an extension of individual agency and, in the spirit of freedom, be distributed as widely and equitably as possible. We hope this vision resonates with those who share our commitment to advancing the field. If so, join us. thinkingmachines.paperform.co