Ai Fujiki

1.5K posts

@Ms_amour19

Head of Corp Dev @SakanaAILabs🐠/ Ex-M&A Banker @GoldmanSachs👩💻 (Tokyo & SF) / Loves 🎹📕🐶🧘♀️🍷🥂🎬🎤🪩👠✈️

Can LLMs flip coins in their heads? When prompted to “Flip a fair coin” 100 times, the heads to tails ratio drifts far from 50:50. LLMs can understand what the target probability should be, but generating outputs that faithfully follow a given distribution is a separate problem. This bias extends beyond coin flips. When LLMs are asked to generate multiple story ideas or brainstorm solutions, the outputs tend to cluster around a narrow range. The same probabilistic skew that distorts coin flips limits diversity in creative generation, recommendations, and other tasks where varied outputs are needed. We discovered a prompting technique named String Seed of Thought (SSoT). The method is simple: instruct the LLM to generate a random string in its own output, then manipulate that string to derive its answer. It requires only a small addition to the prompt and no external random number generator. SSoT significantly reduces output bias across a wide range of LLMs, both open and closed. With reasoning models (such as DeepSeek-R1), it reaches accuracy close to that of actual random sampling. The method generalizes from binary choices to n-way selections and arbitrary probability distributions. On the NoveltyBench diversity benchmark, SSoT outperformed other approaches across all six categories while maintaining output quality. This work will be presented at #ICLR2026! Blog: pub.sakana.ai/ssot Paper: arxiv.org/abs/2510.21150 Openreview: openreview.net/forum?id=luXtb…
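To make the SSoT idea above concrete, here is a minimal Python sketch, not the authors' implementation. It assumes a hypothetical prompt wording (`ssot_prompt`) and one possible string-manipulation rule (`choose_from_string`: sum the character codes and take a remainder). The post only says the model generates a random string and manipulates it to derive its answer, so both names and the specific rule are illustrative assumptions. No LLM is called; the seed strings stand in for what a model might write.

```python
from typing import Optional, Sequence


def ssot_prompt(task: str) -> str:
    """Wrap a task with an SSoT-style instruction (hypothetical wording)."""
    return (
        f"{task}\n\n"
        "Before answering, write a random string of characters. "
        "Then derive your answer from that string with an explicit rule, "
        "for example by summing its character codes and taking a remainder."
    )


def choose_from_string(
    seed: str,
    options: Sequence[str],
    weights: Optional[Sequence[int]] = None,
) -> str:
    """Deterministically map a seed string to one option.

    This rule is an assumption for illustration. Integer weights give
    option i a share of weights[i] / sum(weights) of the remainders,
    which covers n-way choices and non-uniform distributions.
    """
    if weights is None:
        weights = [1] * len(options)
    if len(weights) != len(options) or any(w <= 0 for w in weights):
        raise ValueError("weights must be positive and match options")
    remainder = sum(ord(ch) for ch in seed) % sum(weights)
    for option, weight in zip(options, weights):
        if remainder < weight:
            return option
        remainder -= weight
    raise AssertionError("unreachable: remainder is below sum(weights)")


if __name__ == "__main__":
    print(ssot_prompt("Flip a fair coin."))
    # Seeds below are placeholders for strings a model might generate.
    print(choose_from_string("x7Qp", ["heads", "tails"]))
    print(choose_from_string("k3Zw", ["heads", "tails"]))
    print(choose_from_string("k3Zw", ["red", "blue"], weights=[3, 1]))
```

The point of the design is that the final choice depends on the string rather than on the model's direct preference between the options, so any skew toward "heads" no longer feeds straight into the answer.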

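A long-form interview with Sakana AI research scientist Takuya Akiba (@iwiwi) has been published on the website of Japan's Ministry of Economy, Trade and Industry (METI). meti.go.jp/policy/mono_in… Akiba, who led model development for Sakana Chat/Namazu, looks back on his path as a researcher and describes how the technical trend has moved from model fine-tuning to agents, and then back to post-training of models combined with agents, along with the vision Sakana AI holds within that shift. Akiba: "We can develop models that are tightly coupled with agents... in other words, (I believe now is) the moment when what we have wanted to do for the past several years can finally take shape." Please read the full interview. 🐟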

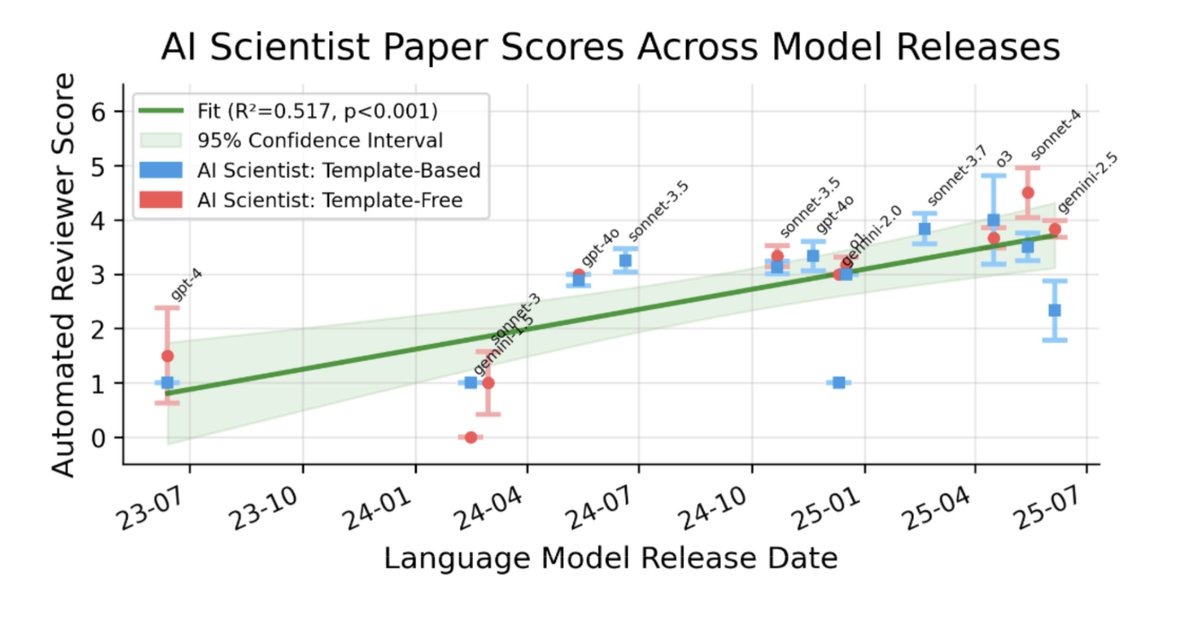

The AI Scientist: Towards Fully Automated AI Research, Now Published in Nature Nature: nature.com/articles/s4158… Blog: sakana.ai/ai-scientist-n… When we first introduced The AI Scientist, we shared an ambitious vision of an agent powered by foundation models capable of executing the entire machine learning research lifecycle. From inventing ideas and writing code to executing experiments and drafting the manuscript, the system demonstrated that end-to-end automation of the scientific process is possible. Soon after, we shared a historic update: the improved AI Scientist-v2 produced the first fully AI-generated paper to pass a rigorous human peer-review process. Today, we are happy to announce that “The AI Scientist: Towards Fully Automated AI Research,” our paper describing all of this work, along with fresh new insights, has been published in @Nature! This Nature publication consolidates these milestones and details the underlying foundation model orchestration. It also introduces our Automated Reviewer, which matches human review judgments and actually exceeds standard inter-human agreement. Crucially, by using this reviewer to grade papers generated by different foundation models, we discovered a clear scaling law of science. As the underlying foundation models improve, the quality of the generated scientific papers increases correspondingly. This implies that as compute costs decrease and model capabilities continue to exponentially increase, future versions of The AI Scientist will be substantially more capable. Building upon our previous open-source releases (github.com/SakanaAI/AI-Sc…), this open-access Nature publication comprehensively details our system's architecture, outlines several new scaling results, and discusses the promise and challenges of AI-generated science. 
This substantial milestone is the result of a close and fruitful collaboration between researchers at Sakana AI, the University of British Columbia (UBC) and the Vector Institute, and the University of Oxford. Congrats to the team! @_chris_lu_ @cong_ml @RobertTLange @_yutaroyamada @shengranhu @j_foerst @hardmaru @jeffclune

The way @OpenAI and @AnthropicAI account for revenue / ARR is apples to oranges. Should Anthropic treat their revenue from AWS and other hyperscalers the same as OAI, they would be materially lower in rev… If they both IPO in the coming quarters, not sure how the SEC is going to let these two companies have different accounting treatment for essentially the same type of revenue. OpenAI TAKES OUT the 80% revenue share that goes to @Microsoft Azure and others, so it reports this 3rd party revenue on a NET basis in its total revenue. Anthropic INCLUDES the revenue share that goes to @amazon AWS and others in its revenue, so it reports this 3rd party revenue on a GROSS basis in its total revenue. IMO, OpenAI is taking the more conservative approach, one that reflects the reality of the economics of these hyperscaler partnerships.
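The gross versus net distinction above is simple arithmetic, sketched here in Python. The function name `reported_revenue` and the sales figure of 100.0 are hypothetical. The 80% share is the number quoted in the post, not a verified contract term, and this is a simplified illustration rather than accounting guidance.

```python
def reported_revenue(channel_sales: float, partner_share_pct: int, basis: str) -> float:
    """Revenue recognized from sales made through a partner channel.

    channel_sales: total customer spend through the partner (hypothetical).
    partner_share_pct: percent of that spend kept by the partner.
    basis: "gross" reports all channel sales; "net" reports only the
           portion left after the partner's share is taken out.
    """
    if not 0 <= partner_share_pct <= 100:
        raise ValueError("partner_share_pct must be between 0 and 100")
    if basis == "gross":
        return channel_sales
    if basis == "net":
        return channel_sales * (100 - partner_share_pct) / 100
    raise ValueError("basis must be 'gross' or 'net'")


if __name__ == "__main__":
    sales = 100.0  # hypothetical units of third-party channel sales
    share = 80     # percent figure quoted in the post
    print("gross basis:", reported_revenue(sales, share, "gross"))
    print("net basis:  ", reported_revenue(sales, share, "net"))
```

Under these assumed inputs the same underlying sales show up as 100.0 on a gross basis and 20.0 on a net basis, which is why headline revenue reported under the two treatments is not directly comparable.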

🐟 Sakana Chat released 🐟 Sakana AI has released Sakana Chat free of charge. chat.sakana.ai It is an AI chat with web search and fast responses. Anyone in Japan can use it. Please give it a try.

We recently worked with The Yomiuri Shimbun to analyze more than a million social media posts to map out state-sponsored information campaigns. sakana.ai/narrative-inte…

Keyword searches are fragile for modern OSINT. To fix this, our team used an ensemble of different LLMs combined with our Novelty Search algorithm to extract underlying narratives purely from context. For example, the system mapped posts demanding that "a politician retract a statement" to the broader, hidden narrative of "Taiwan interference". The system clusters these granular narratives hierarchically and generates testable hypotheses, citing specific evidence. Human journalists took the AI-generated hypotheses, interviewed real-world government sources, and verified the timeline of the coordinated campaign our system uncovered. A fascinating look at human-AI collaboration for intelligence analysis.

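Sakana AI worked with The Yomiuri Shimbun to analyze criticism of Japan by China on social media. Our system, built on AI technology we developed in-house, read context and nuance in depth from a vast volume of social media data and extracted the critical posts and their narratives. It visualized their structure and went on to build practical hypotheses. [Articles] Yomiuri Shimbun (1) yomiuri.co.jp/politics/20260… Yomiuri Shimbun (2) yomiuri.co.jp/national/20260… The proprietary Sakana AI technology used in this analysis has three features. First, it extracts narratives from the context and nuance of a post. For example, from a post "requesting the retraction of Prime Minister Takaichi's erroneous remarks," it extracted the narrative "intervention in the Taiwan issue and interference in internal affairs," which a keyword search for "Taiwan" would not find. Second, it uses our in-house Novelty Search technology. Three different large language models (LLMs) reason together in a collective-intelligence fashion to explore important information on social media and extract fine-grained narratives. Grouping these into more abstract clusters and visualizing them hierarchically makes it possible to grasp the broad currents of the information space in detail. Third, it builds hypotheses. From the extracted and classified narratives, the AI generates a very large number of hypotheses. Because the reasoning process and supporting evidence such as specific data are also shown, analysts can scrutinize the content, request further analysis, and narrow the set down to hypotheses considered highly reliable. In this study, analysis of a total of 1.1 million social media posts produced multiple hypotheses. For one of them, "After Prime Minister Takaichi's answer in the Diet, China considered a unified strategy for criticizing Japan and then launched large-scale criticism of Japan," The Yomiuri Shimbun interviewed government officials and others on both the Japanese and Chinese sides and, drawing on expert opinion, verified and corroborated it. AI discovers insights that humans cannot find, and humans review them and consider further analysis and countermeasures in dialogue with the AI. We believe this joint research with The Yomiuri Shimbun demonstrated that new form of analytical practice. In the defense and intelligence domain, the role of "information power" is greater than ever, including in cognitive warfare, the subject of this study. With this background in mind, Sakana AI positions "defense and intelligence" as a focus area alongside "finance" and is working to deploy cutting-edge AI technology. As an AI developer originating in Japan, we will continue to ramp up AI deployment in the defense and intelligence domain.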