Muqeeth

33 posts

@Muqeeth10

Interested in AI for social good. Grad Student @Mila_Quebec, Former RE @MITIBMLab | MS @unccs | RA @iitdelhi | BTech @iitmadras

Montréal, Québec · Joined May 2017

424 Following · 163 Followers

Muqeeth retweeted

AI is changing economics, and, as we just saw in Dwarkesh's interview with Dario, AI researchers need to start thinking about economics too!

The Center for Applied AI at UChicago will be hosting an AI & Economics Summer Institute to explore exactly this.

We will bring together leading researchers with advanced graduate students in economics/AI/ML/NLP for an in-person program from Aug 6 to 11.

Muqeeth retweeted

Have you been using LLMs to play games, negotiate salaries, or strategize in other ways? Whether it worked or not, we want to see your demo at our “Strategic Engineering” workshop (sites.google.com/view/se-aamas2…) at #AAMAS2026 in Cyprus! Starter library @ github.com/google-deepmin…!

Muqeeth retweeted

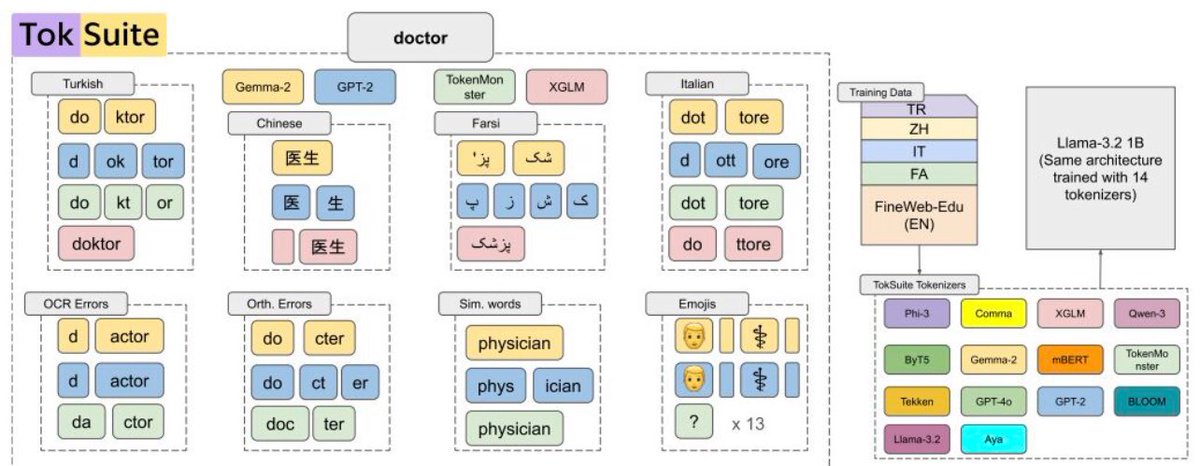

📢 I am excited to announce that our paper, "TokSuite: Measuring the Impact of Tokenizer Choice on Language Model Behavior," is now live both on Hugging Face and arXiv.

🖇️ arXiv Page: arxiv.org/abs/2512.20757

🤗 HF Org: huggingface.co/toksuite

#LLM #NLP #Tokenization

@dvnxmvl_hdf5 Because the game is played repeatedly, an agent can display reciprocity across rounds: cooperate when the other player cooperated and retaliate when the other player defected in the previous round. Since item values are public in this specific game, this is possible without communication.
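
A minimal sketch of the reciprocity described in this reply, assuming a simplified two-action encoding (`COOPERATE`/`DEFECT`, both hypothetical names) in place of the actual split game: the agent opens by cooperating, then repeats whatever the other player did in the previous round (a tit-for-tat rule).

```python
from typing import List

# Hypothetical two-action encoding standing in for the real split game.
COOPERATE = "cooperate"
DEFECT = "defect"


def tit_for_tat(opponent_history: List[str]) -> str:
    """Open with cooperation, then mirror the opponent's previous move."""
    if not opponent_history:
        return COOPERATE
    # Reciprocate: cooperate after cooperation, retaliate after defection.
    return COOPERATE if opponent_history[-1] == COOPERATE else DEFECT


def play(opponent_moves: List[str]) -> List[str]:
    """Agent's moves against a fixed opponent sequence, one per round."""
    # In round t the agent only sees the opponent's moves from rounds 0..t-1.
    return [tit_for_tat(opponent_moves[:t]) for t in range(len(opponent_moves))]


if __name__ == "__main__":
    opponent = [COOPERATE, DEFECT, DEFECT, COOPERATE]
    print(play(opponent))  # → ['cooperate', 'cooperate', 'defect', 'defect']
```

In the actual game, defection would presumably mean claiming an unfair share of the items; because item values are public, each player can judge the previous round's split on its own, so the rule needs no messages between players.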

@Muqeeth10 How does this split-no-comm game variant “… support reciprocity without the need for communication” if it is a textual environment?

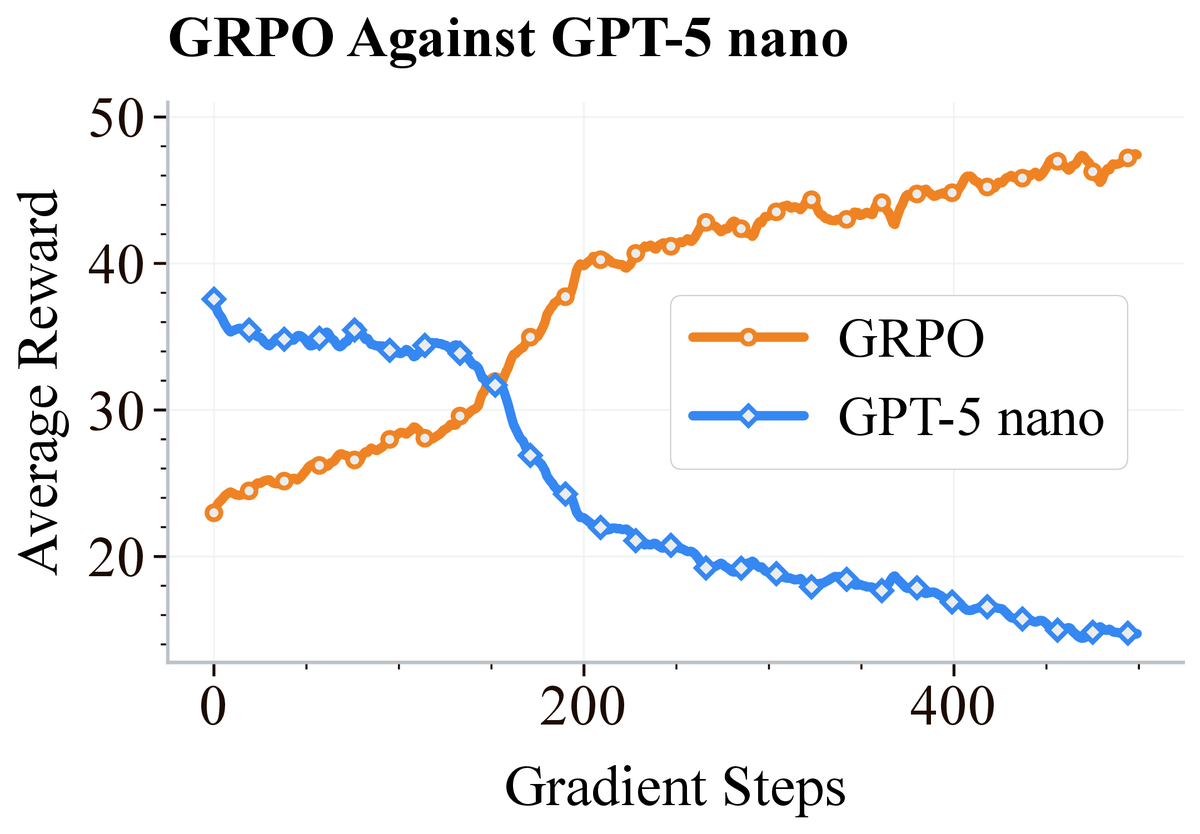

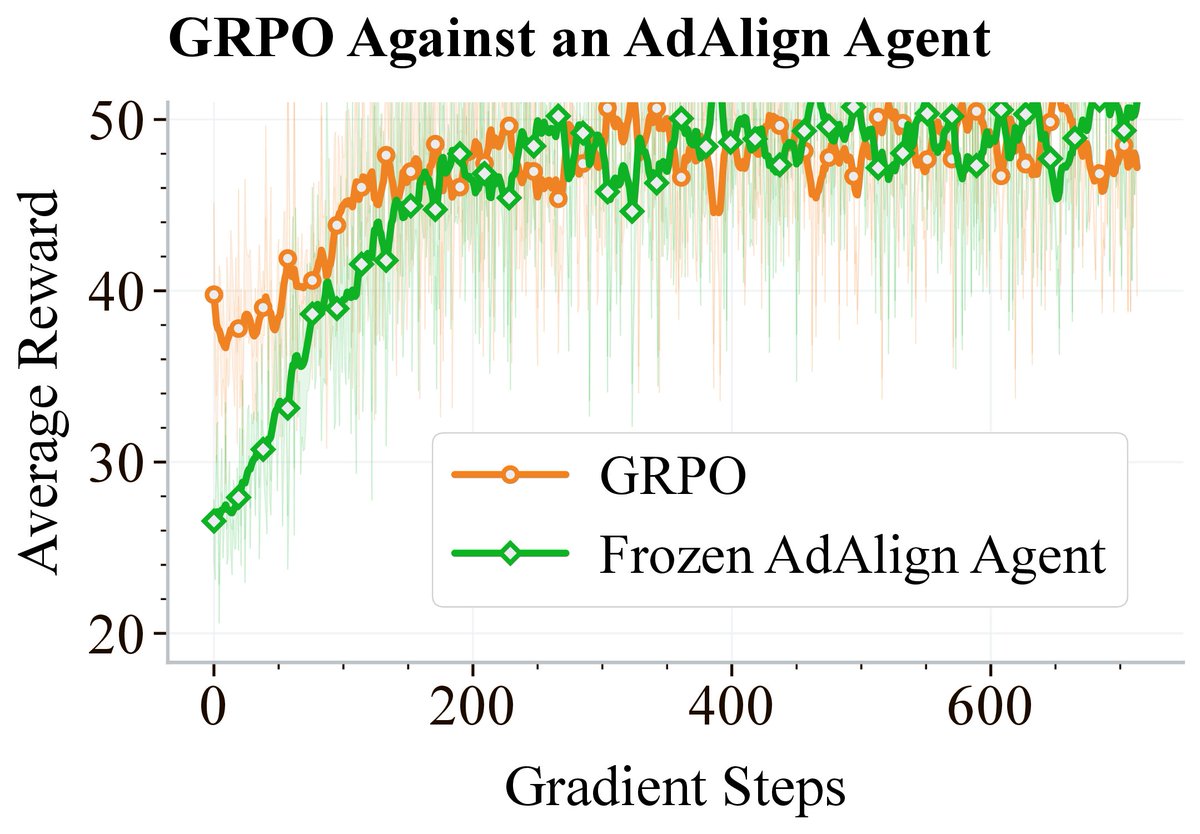

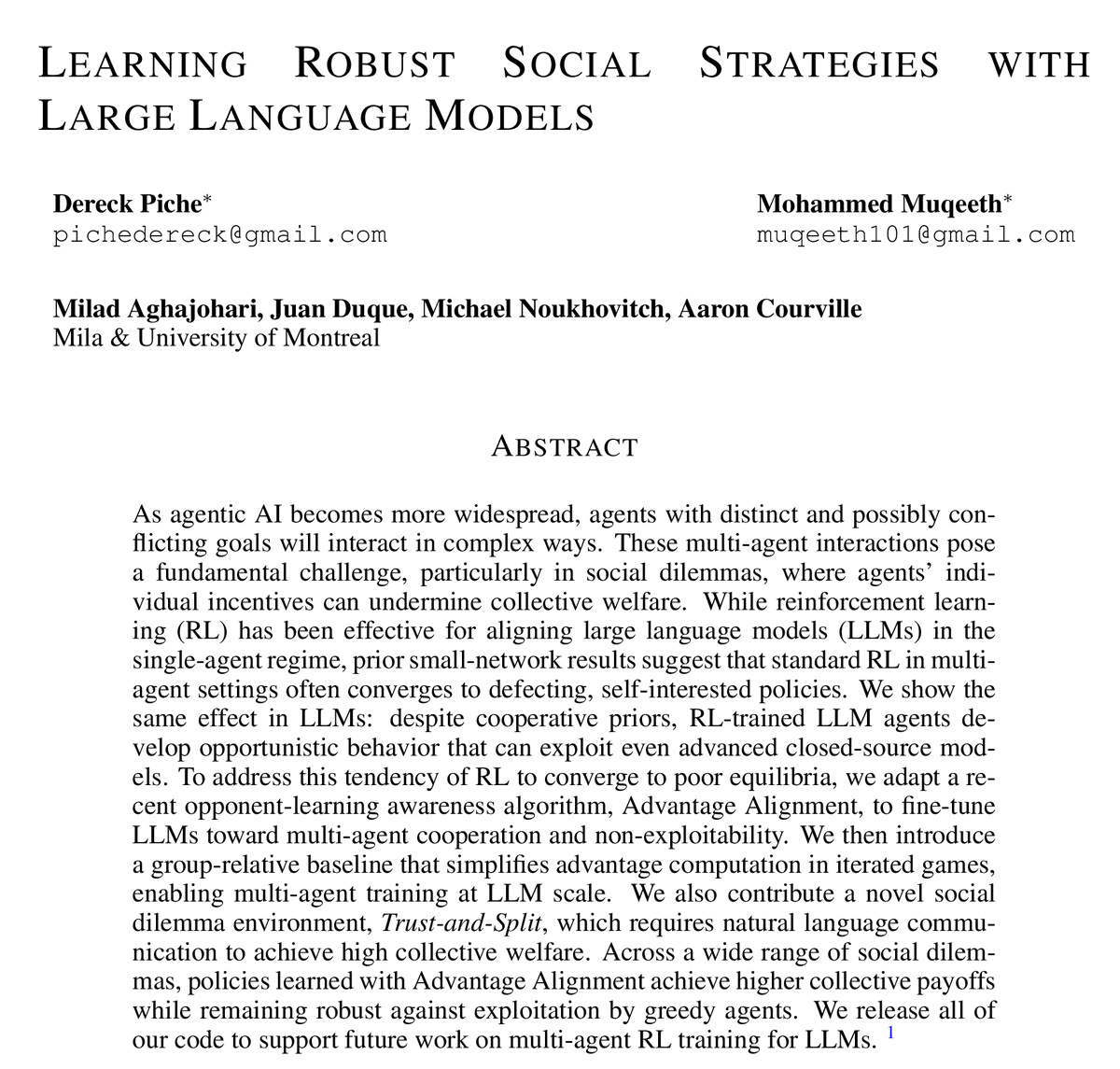

New preprint! Learning Robust Social Strategies with Large Language Models. We apply multi-agent RL finetuning to train LLMs that achieve cooperative and non-exploitable behavior in social dilemmas for the first time.

📄 arxiv.org/abs/2511.19405

🧵 ⬇️

(1/8)

You can run multi-agent RL training for LLMs right away with our public code: github.com/dereckpiche/Ad…. This work was done with my awesome group members @Dereck_Piche*, @muqeeth10*, @MAghajohari, @JuanDuquevan, @mnoukhov, and @AaronCourville.(8/8)

Muqeeth retweeted

Zero rewards after tons of RL training? 😞 Before using dense rewards or incentivizing exploration, try changing the data. Adding easier instances of the task can unlock RL training. 🔓📈 To learn more, check out our blog post here: spiffy-airbus-472.notion.site/What-Can-You-D…. Keep reading 🧵(1/n)
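
A minimal sketch of the data change suggested here, under stated assumptions: tasks come in a hypothetical hard pool and an easier pool, and each RL batch mixes them at a hypothetical `easy_fraction`. The function name and parameters are illustrative, not taken from the blog post. The motivation: in REINFORCE-style updates without a baseline, a batch in which every reward is zero yields a zero gradient, so easier instances that sometimes succeed give the policy a signal to learn from.

```python
import random
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def mixed_batch(hard: Sequence[T], easy: Sequence[T], batch_size: int,
                easy_fraction: float, rng: random.Random) -> List[T]:
    """Sample a batch in which round(batch_size * easy_fraction) items are easier instances.

    `hard`, `easy`, and `easy_fraction` are hypothetical names for
    illustration; sampling is with replacement from each pool.
    """
    if not 0.0 <= easy_fraction <= 1.0:
        raise ValueError("easy_fraction must be in [0, 1]")
    n_easy = round(batch_size * easy_fraction)
    batch = rng.choices(easy, k=n_easy) + rng.choices(hard, k=batch_size - n_easy)
    # Shuffle so easy and hard instances are interleaved within the batch.
    rng.shuffle(batch)
    return batch


if __name__ == "__main__":
    rng = random.Random(0)
    hard = [("hard", i) for i in range(100)]
    easy = [("easy", i) for i in range(100)]
    batch = mixed_batch(hard, easy, batch_size=8, easy_fraction=0.25, rng=rng)
    print(sum(kind == "easy" for kind, _ in batch))  # → 2
```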

whenever i see a car crash or an ambulance/fire truck pass by, i make it a practice to say a small prayer and consciously think of them. growing up, my parents made this a thing in our house, maybe because they’d been in a head-on collision before.

it doesn’t matter if they’re a stranger. i’ve been realizing that there’s nothing strange about the fact that all of our lives could completely change overnight. in that way, even if we don’t know them, we’re deeply connected.

and sure my thoughts may not change anything, but i believe positive energy compounds.

plus, sirens are possibly the loudest reminders to stay human: if we are desensitized to them (which is easy in a big city, headphones in), what else are we tuning out?

Muqeeth retweeted

We just released our survey on "Model MoErging". But what is MoErging? 🤔 Read on!

Imagine a world where fine-tuned models, each specialized in a specific domain, can collaborate and "compose/remix" their skills using some routing mechanism to tackle new tasks and queries!

🧵👇

co-first author @colinraffel

📰: arxiv.org/abs/2408.07057
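
To make the routing idea concrete, here is a minimal sketch of one simple scheme, assuming each specialized expert is summarized by a hypothetical prototype embedding (for example, an average embedding of its fine-tuning data) and a query is sent to the experts whose prototypes are most similar. This is an illustrative choice, not the survey's definition, and the survey covers many other routing mechanisms.

```python
import math
from typing import Dict, List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def route(query: Sequence[float], prototypes: Dict[str, Sequence[float]],
          top_k: int = 1) -> List[str]:
    """Return the names of the top_k experts whose prototype embedding is
    most similar to the query embedding."""
    ranked = sorted(prototypes, key=lambda name: cosine(query, prototypes[name]),
                    reverse=True)
    return ranked[:top_k]


if __name__ == "__main__":
    # Hypothetical experts with toy 3-d prototype embeddings.
    prototypes = {"math": [1.0, 0.0, 0.0], "code": [0.0, 1.0, 0.0], "medical": [0.0, 0.0, 1.0]}
    print(route([0.9, 0.3, 0.0], prototypes, top_k=2))  # → ['math', 'code']
```

The selected experts' outputs or parameters could then be combined, which is where the "merging" half of MoErging comes in.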

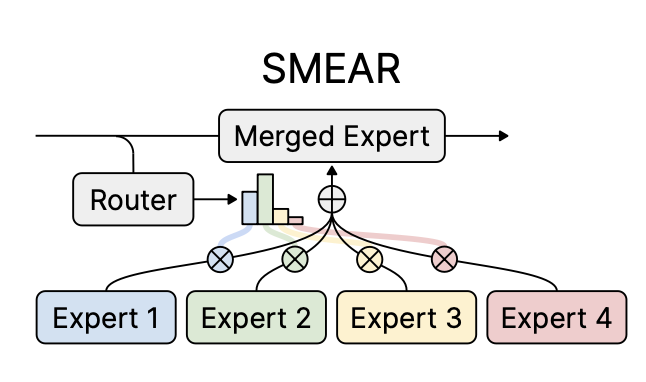

@sourab_m @Tim_Dettmers Thanks for sharing your work. IIUC, the approach in your paper is similar to the Expert Ensemble, which averages expert outputs by activating all experts. SMEAR achieves comparable performance while being significantly cheaper, since it activates just one merged expert per example.

Introducing Soft Merging of Experts with Adaptive Routing (SMEAR) for gradient-based training of mixture-of-experts models. SMEAR matches or outperforms prior routing methods without increasing costs or relying on task metadata.

📄 arxiv.org/abs/2306.03745

🧵 ⬇️

(1/7)
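
A minimal sketch of the soft-merging step, assuming for brevity that each expert is a single linear map (a hypothetical simplification) and that routing happens once per example from the mean token representation (also an assumption here): the router's softmax probabilities weight an average of the expert parameters, and only that one merged expert is applied to the tokens. Every step is differentiable, so the router can be trained with ordinary gradients. The function names are illustrative, not the paper's code.

```python
import math
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def softmax(logits: Vector) -> Vector:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(w: Matrix, x: Vector) -> Vector:
    return [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) for row in w]


def merge_experts(experts: List[Matrix], probs: Vector) -> Matrix:
    """Parameter-space average sum_i p_i * W_i (requires identical shapes)."""
    rows, cols = len(experts[0]), len(experts[0][0])
    if any(len(w) != rows or any(len(row) != cols for row in w) for w in experts):
        raise ValueError("all experts must have the same shape to be merged")
    return [[sum(p * w[r][c] for p, w in zip(probs, experts)) for c in range(cols)]
            for r in range(rows)]


def smear_forward(tokens: List[Vector], router: Matrix,
                  experts: List[Matrix]) -> List[Vector]:
    """Route once per example, merge the experts, apply the merged expert."""
    dim = len(tokens[0])
    # Router input: mean of the example's token representations (assumption).
    pooled = [sum(t[d] for t in tokens) / len(tokens) for d in range(dim)]
    probs = softmax(matvec(router, pooled))  # one probability per expert
    merged = merge_experts(experts, probs)
    # Every token goes through the single merged expert: one expert's cost.
    return [matvec(merged, t) for t in tokens]


if __name__ == "__main__":
    identity = [[1.0, 0.0], [0.0, 1.0]]
    triple = [[3.0, 0.0], [0.0, 3.0]]
    router = [[0.0, 0.0], [0.0, 0.0]]  # zero logits give uniform routing
    print(smear_forward([[1.0, 2.0], [3.0, 4.0]], router, [identity, triple]))
    # → [[2.0, 4.0], [6.0, 8.0]]
```

An expert ensemble would instead apply all N experts to every token and average their outputs, so its cost grows with N; here each token passes through a single merged expert. Averaging parameters also requires all experts to share one shape, which is why `merge_experts` rejects mismatched experts.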

@KhanovMax That's correct! Having homogeneous experts is a simpler and more common approach. :)

@Muqeeth10 This is so incredibly clever!! Though this means all the experts have to be identical right?

@kleptid Therefore, the peak memory cost arises from the inner activations (num_tokens * hidden_dim) rather than from the merged experts, and it is the same as for other methods. Token-level routing with SMEAR is mathematically equivalent to an ensemble. Please refer to our paper for a discussion of this topic.
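
The equivalence can be seen in the simplest case, assuming each expert is a single linear map $W_i$ and $p_i(x)$ is the router probability for expert $i$ computed from the same token $x$ (a simplification for illustration): merging the weights first and averaging the experts' outputs give the same result by linearity.

```latex
\Big(\sum_{i=1}^{N} p_i(x)\, W_i\Big)\, x \;=\; \sum_{i=1}^{N} p_i(x)\,\big(W_i\, x\big)
```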