Tim Dettmers

3.8K posts

Tim Dettmers

@Tim_Dettmers

Creator of bitsandbytes. Professor @CarnegieMellon and Research Scientist @allen_ai . I blog about deep learning and PhD life at https://t.co/Y78KDJJFE7.

Closed labs hide model sizes. They can't hide what their models know, and what a model knows is an indicator on how big it is. Reasoning compresses. Factual knowledge doesn't. So you can size a frontier model from black-box API calls alone, and across releases you can literally watch a single fact arrive in the parameters over time. For three years, my friends Jiyan He and Zihan Zheng have been asking frontier LLMs the same question: "what do you know about USTC Hackergame?", a CTF contest. May 2024: GPT-4o invented fake titles. Feb 2025: Claude 3.7 Sonnet listed 19 verified 2023 challenges. By April 2026, frontier models recall specific challenges across consecutive years. After DeepSeek-V4 dropped, I instructed my agent to spend four days autonomously turning that habit into Incompressible Knowledge Probes (IKP) — 1,400 questions, 7 tiers of obscurity, 188 models, 27 vendors. Three findings: 1/ You can approximately size any black-box LLM from factual accuracy alone. Penalized accuracy is log-linear in log(params), R² = 0.917 on 89 open-weight models from 135M to 1.6T params. Project closed APIs onto the curve → GPT-5.5 ~9T, Claude Opus 4.7 ~4T, GPT-5.4 ~2.2T, Claude Sonnet 4.6 ~1.7T, Gemini 2.5 Pro ~1.2T (90% CI: 0.3-3x size). 2/ Citation count and h-index don't predict whether a frontier model recognizes a researcher. Two researchers with similar citation profiles get very different responses. Models memorize impact — work that shaped a field, not many incremental papers. 3/ Factual capacity doesn't compress over time. Across 96 open-weight models across 3 years, the IKP time coefficient is statistically zero, rejecting the Densing-Law prediction of +0.0117/month at p<10⁻¹⁵. Reasoning benchmarks saturate; factual capacity keeps scaling with parameters. Website: 01.me/research/ikp/ Paper: arxiv.org/pdf/2604.24827

Marin is using quantile balancing from @Jianlin_S (who developed RoPE, which was also a good idea) to train our current 1e23 FLOPs MoE. The idea is elegant: assigning tokens to experts by solving a linear program. No hyperparameters to tune. Yields stable training.

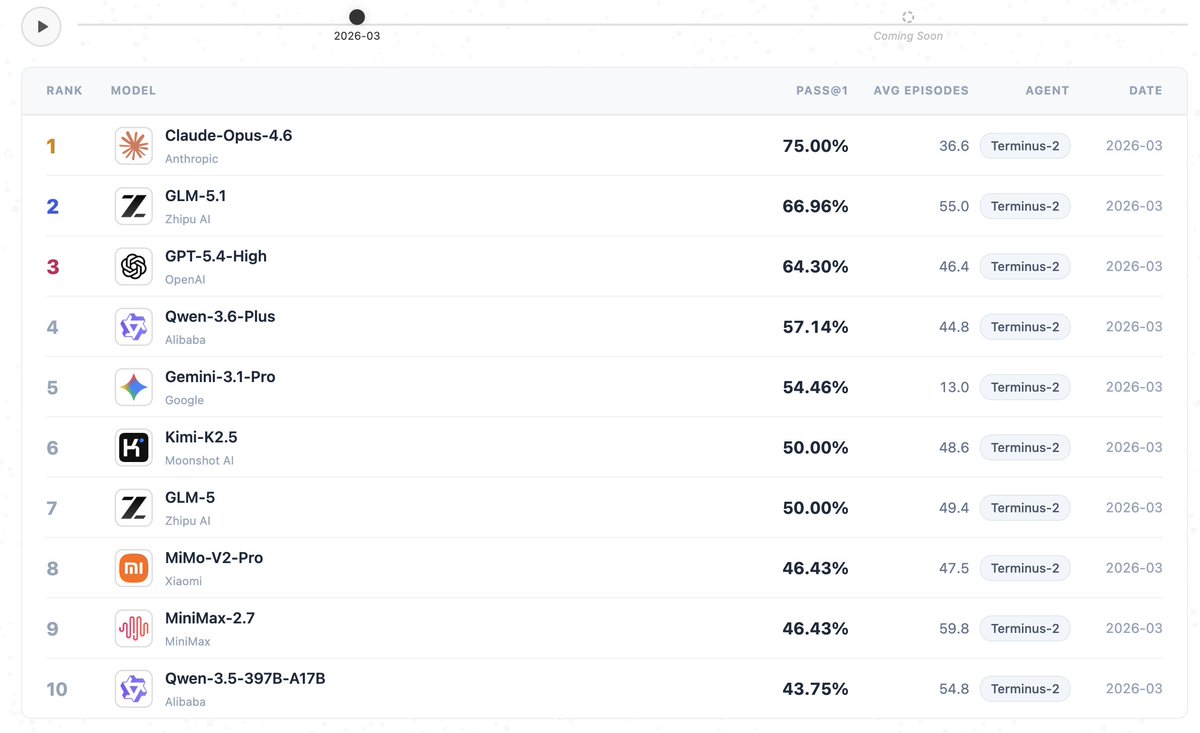

What if computer-use agents could do real work? We built Gym-Anything: a framework that turns any software into a computer-use agent environment. We used it to create CUA-World: 200+ real software, 10,000+ tasks and environments, across all major occupation groups, from medical imaging to financial trading. 🧵