Nour Eddine Hamaidi


Told my girlfriend, "The current $20 Codex sub is not enough. I need to go to $100/mo." She suggested, "Why don't you open a second $20 Codex account?" I feel so dumb🤔

Computer use with any model: Hermes Agent × @trycua

"Why are you benchmarking DGX Spark? It's a training box."

Yeah. Low bandwidth, but 128GB of unified memory is just sitting there. Plenty of room to optimize.

DGX Spark + Qwen3.6 27B. Four backend/quant combos:

- 🔴 llama.cpp + UD_Q4_K_XL → 11.0 tok/s (baseline), TTFT 297ms
- 🟢 llama.cpp + DFlash → 20.4 tok/s (peaks at 97 tok/s), TTFT 320ms
- 🟡 vLLM FP8 + MTP → 13.1 tok/s, TTFT 540ms
- 🟣 vLLM NVFP4 + MTP → 24.2 tok/s, TTFT 376ms

NVFP4 + MTP is the winner for me: rock stable around 24 tok/s, no wild swings. DFlash is the wildcard: massive peaks, but it fluctuates a lot. FP8 + MTP barely beats the baseline, and it's FP8.

Love my Spark.
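To put the four combos on one scale, here is a small sketch that expresses each reported average throughput as a multiple of the llama.cpp + UD_Q4_K_XL baseline. It uses only the averages and TTFT values from the post. The DFlash peak of 97 tok/s and its run-to-run variance are not modeled. The `speedups` helper and `BASELINE` name are illustrative, not part of any tool.

```python
# Average decode throughput (tok/s) and TTFT (ms), as reported in the post.
results = {
    "llama.cpp + UD_Q4_K_XL": (11.0, 297),
    "llama.cpp + DFlash": (20.4, 320),
    "vLLM FP8 + MTP": (13.1, 540),
    "vLLM NVFP4 + MTP": (24.2, 376),
}

# Hypothetical name for the combo the post calls the baseline.
BASELINE = "llama.cpp + UD_Q4_K_XL"


def speedups(data, baseline):
    """Return each combo's average throughput as a multiple of the baseline's."""
    base_tps, _ = data[baseline]
    return {name: round(tps / base_tps, 2) for name, (tps, _) in data.items()}


if __name__ == "__main__":
    ratios = speedups(results, BASELINE)
    # Print fastest first, alongside the reported TTFT.
    for name, ratio in sorted(ratios.items(), key=lambda kv: -kv[1]):
        tps, ttft = results[name]
        print(f"{name:<24} {tps:>5.1f} tok/s  {ratio:.2f}x  TTFT {ttft} ms")
```

On these averages, NVFP4 + MTP comes out at about 2.2x the baseline, DFlash at about 1.85x, and FP8 + MTP at about 1.19x. It also has the highest TTFT of the four.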

The Hermes Agent Creative Hackathon starts now: 16 days, $25k in prizes. Presented by @Kimi_Moonshot & @NousResearch.

For the tinkerers pushing Hermes Agent into creative domains: video, image, audio, 3D, long-form writing, creative software, interactive media and more. Show us what your Hermes Agent can do. Details below ↓

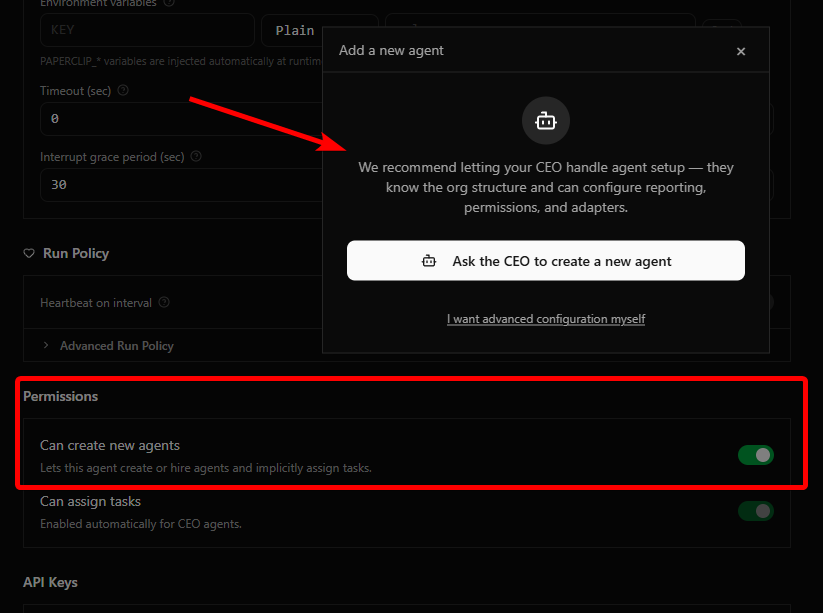

Hermes Agent now supports multi-agent workflows via the Kanban, new in v0.12.0. Agents claim tasks from a board, work in parallel, and hand off when blocked. You watch progress and unblock tasks from one view instead of juggling terminals. We asked it to plan and make this video about itself:
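The claim / work / hand-off-when-blocked loop can be sketched as a tiny task board. This is a hypothetical illustration of the pattern only, not Hermes Agent's implementation or API. `Board`, `Task`, `Status`, and their methods are invented names. A lock guards `claim` so two agents running in parallel cannot take the same task.

```python
import threading
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Status(Enum):
    TODO = "todo"
    IN_PROGRESS = "in_progress"
    BLOCKED = "blocked"
    DONE = "done"


@dataclass
class Task:
    title: str
    status: Status = Status.TODO
    owner: Optional[str] = None
    blocker: Optional[str] = None


@dataclass
class Board:
    """Hypothetical Kanban board shared by several agents."""
    tasks: List[Task] = field(default_factory=list)
    _lock: threading.Lock = field(default_factory=threading.Lock, repr=False, compare=False)

    def add(self, title: str) -> Task:
        task = Task(title)
        self.tasks.append(task)
        return task

    def claim(self, agent: str) -> Optional[Task]:
        """Atomically give the agent the first TODO task, or None if none remain."""
        with self._lock:
            for task in self.tasks:
                if task.status is Status.TODO:
                    task.status = Status.IN_PROGRESS
                    task.owner = agent
                    return task
        return None

    def block(self, task: Task, reason: str) -> None:
        """Agent hands the task off with a reason so a human can see it."""
        task.status = Status.BLOCKED
        task.blocker = reason

    def unblock(self, task: Task) -> None:
        """Human clears the blocker; the task goes back to TODO for any agent."""
        task.status = Status.TODO
        task.owner = None
        task.blocker = None

    def complete(self, task: Task) -> None:
        task.status = Status.DONE

    def blocked(self) -> List[Task]:
        """The single view a human checks to find what needs unblocking."""
        return [t for t in self.tasks if t.status is Status.BLOCKED]


if __name__ == "__main__":
    board = Board()
    for title in ["write script", "render video", "add captions"]:
        board.add(title)
    script = board.claim("agent-1")
    render = board.claim("agent-2")
    board.block(render, "needs the approved script")
    board.complete(script)
    print([(t.title, t.blocker) for t in board.blocked()])
```

Returning an unblocked task to TODO, rather than to its previous owner, is a design choice in this sketch: any free agent can pick it up next.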