We're excited to welcome 28 new AI2050 Fellows! This 4th cohort of researchers are pursuing projects that include building AI scientists, designing trustworthy models, and improving biological and medical research, among other areas. buff.ly/riGLyyj

Natasha Jaques

1.6K posts

@natashajaques

Assistant Professor leading the Social RL Lab https://t.co/ykwfJG84Bj @uwcse and Staff Research Scientist at @GoogleAI.

Anthropic's Mythos has been accessed by a small group of unauthorized users, raising questions about control of the AI model bloomberg.com/news/articles/…

We’re releasing LongCoT, an incredibly hard benchmark to measure long-horizon reasoning capabilities over tens to hundreds of thousands of tokens. LongCoT consists of 2.5K questions across chemistry, math, chess, logic, and computer science. Frontier models score less than 10%🧵

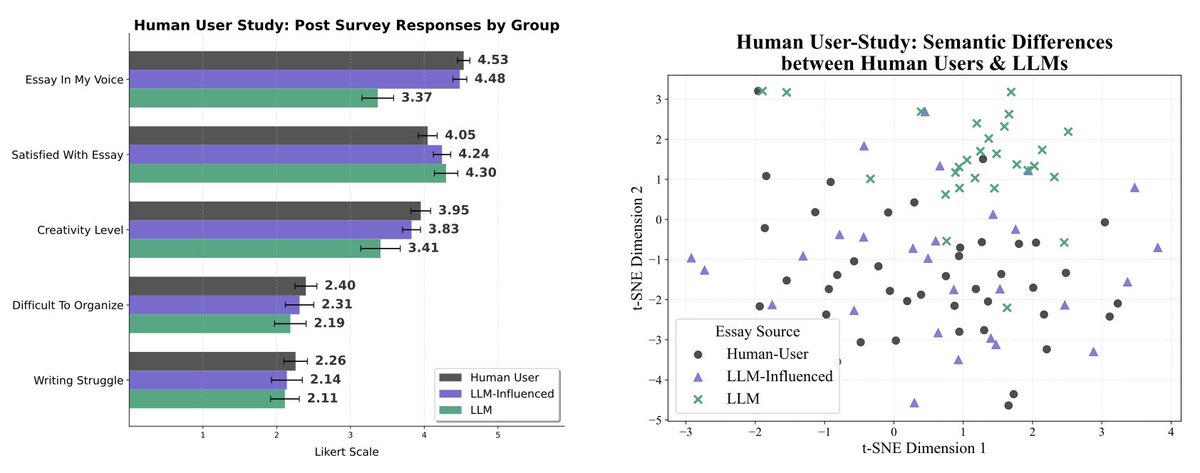

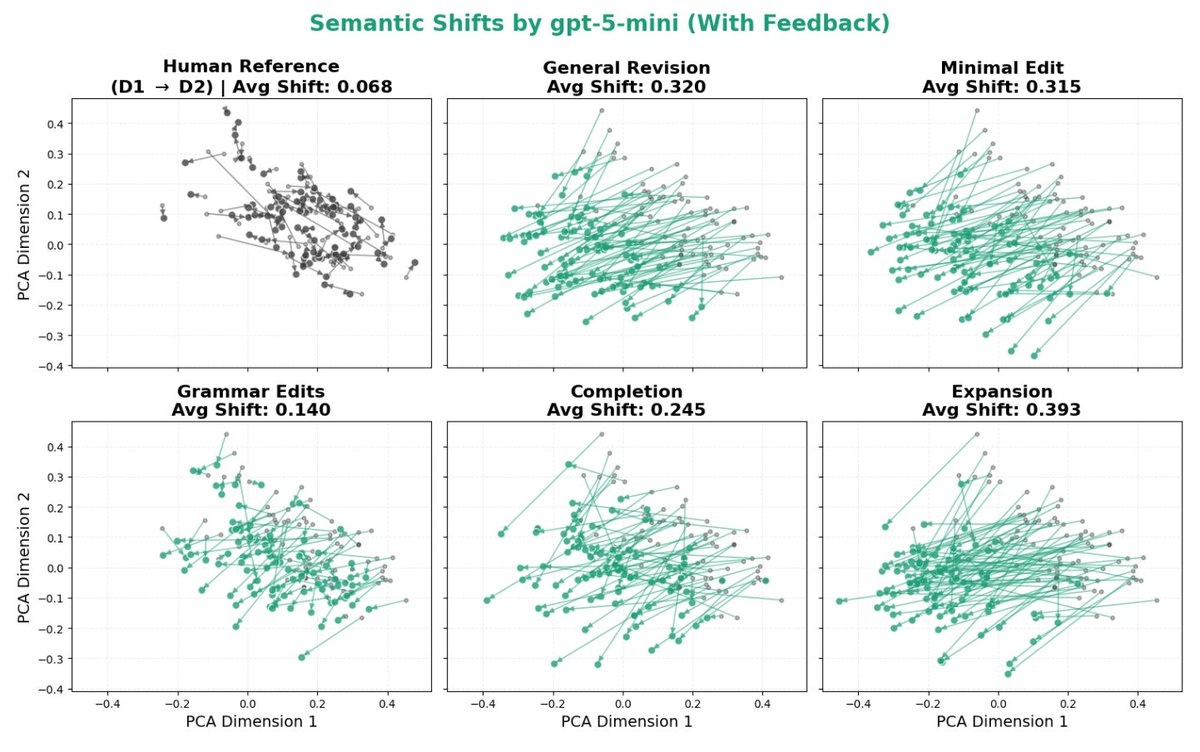

I say this all the time, but a large reason why LLMs are detectable is they have preferences instilled into them through training data and RL. Asking a model to rewrite something gives the LLM an opportunity to apply its own preferences to your text! Sometimes the preferences are helpful, like proper grammar and spelling. But other times, it actively erases the author's intent and voice:

- softening language to bring statements closer to what the LLM is comfortable with (see below)
- replacing the author's metaphors with the LLM's preferred metaphors
- replacing the author's voice (tics, sentence structure, vocabulary choice) with a more "default" voice the LLM prefers

Please, I'm begging you, try to critically examine the differences between these two pieces of writing. ChatGPT editing did not improve this. Every single change only served to weaken your claims significantly. Everything is now hedged into oblivion: no longer have you outlined a "problem," now it's merely a "flaw." "It is true" now demoted to "it appears to be the case." "Is" gets a "usually" tacked on. A thesis statement at the end of the first paragraph gets run over by noisy, out-of-context example-whittling. All for fear of being misconstrued. And at the end, the argument that gets spat out isn't even yours anymore! You argued that Graeber failed to create a true account of work because he did not understand Chesterton's Fence. ChatGPT is arguing that it is possible some apparently bullshit jobs could be secretly load-bearing if you squint. These are two different statements. The second is weaker and less compelling. It says less. And it's fucking longer! Don't do this anymore! Stop doing this! It's worse!!!

NEWS: Massive budget cuts for US science proposed again by Trump administration "It's an extinction-level event for science". The US government is proposing massive cuts to almost every branch of science, from NASA to the National Institutes of Health. NSF would completely eliminate the social, economic and behavioral sciences directorate. This would decimate the world's leading scientific system. nature.com/articles/d4158…

The paper I’ve been most obsessed with lately is finally out: nbcnews.com/tech/tech-news…! Check out this beautiful plot: it shows how much LLMs distort human writing when making edits, compared to how humans would revise the same content. We take a dataset of human-written essays from 2021, before the release of ChatGPT. We compare how people revise draft v1 -> v2 given expert feedback, with how an LLM revises the same v1 given the same feedback. This enables a counterfactual comparison: how much does the LLM alter the essay compared to what the human was originally intending to write? We find LLMs consistently induce massive distortions, even changing the actual meaning and conclusions argued for.