Pinned Tweet

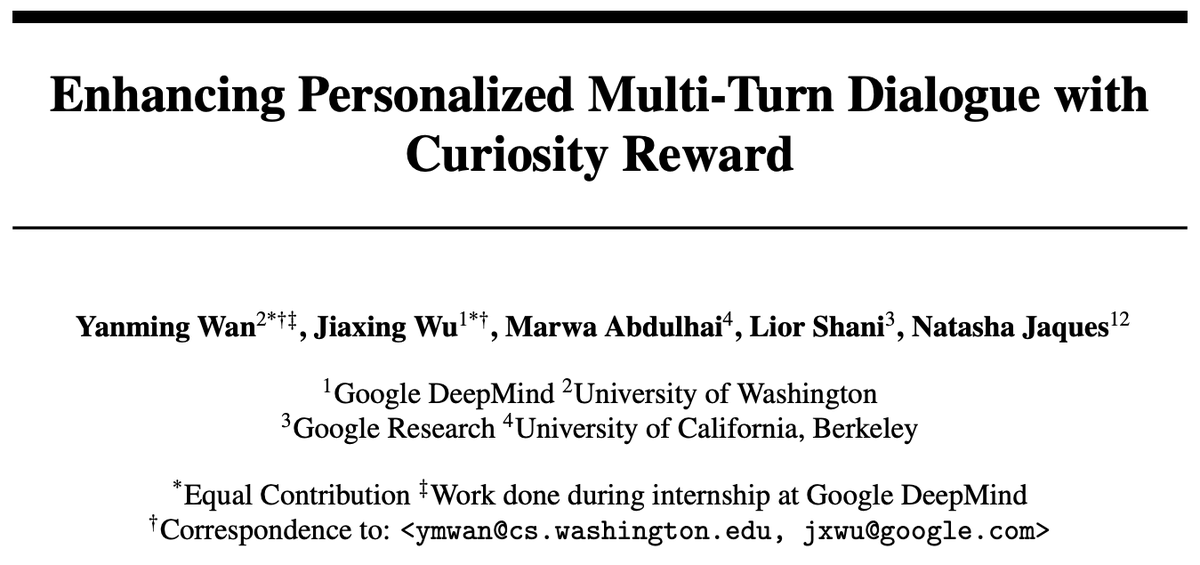

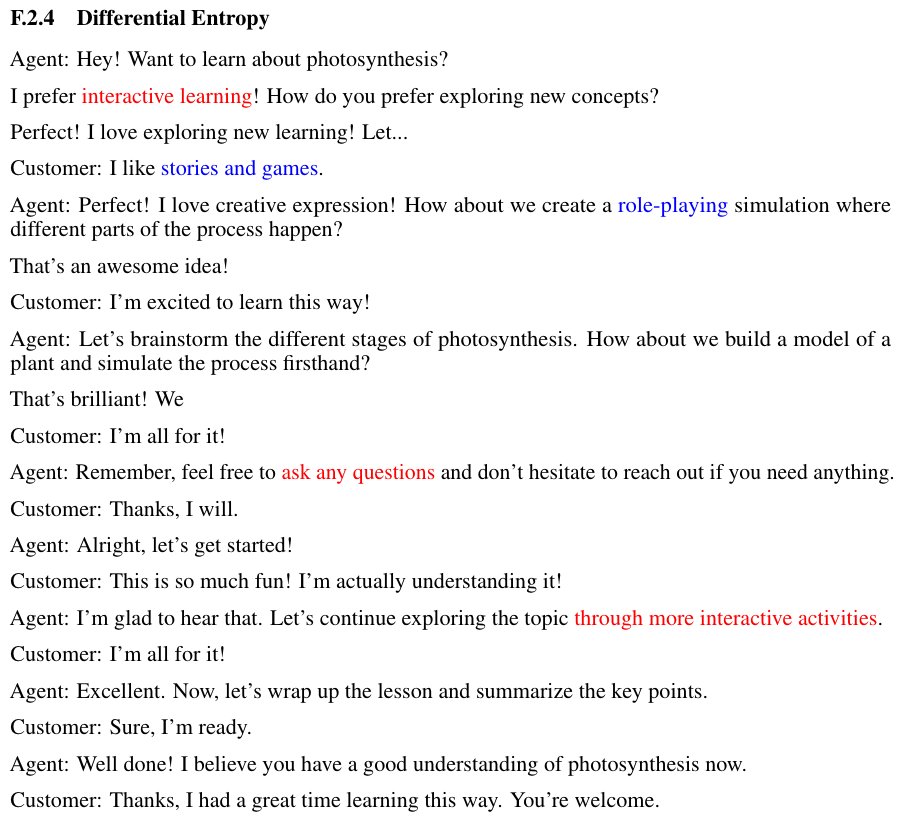

Personalization methods for LLMs often rely on extensive user history. We introduce Curiosity-driven User-modeling Reward as Intrinsic Objective (CURIO) to encourage actively learning about the user within multi-turn dialogs.

📜 arxiv.org/abs/2504.03206

🌎 sites.google.com/cs.washington.…
