Dr Sumaiya Shaikh 🇸🇪🇦🇺

@Neurophysik

Neuroscientist PhD | AI Ethics, Countering Violent Extremism, Exit work #CVE | YouTube neurophysik10 | Back on twitter after a hiatus. Mum to a human & a dog

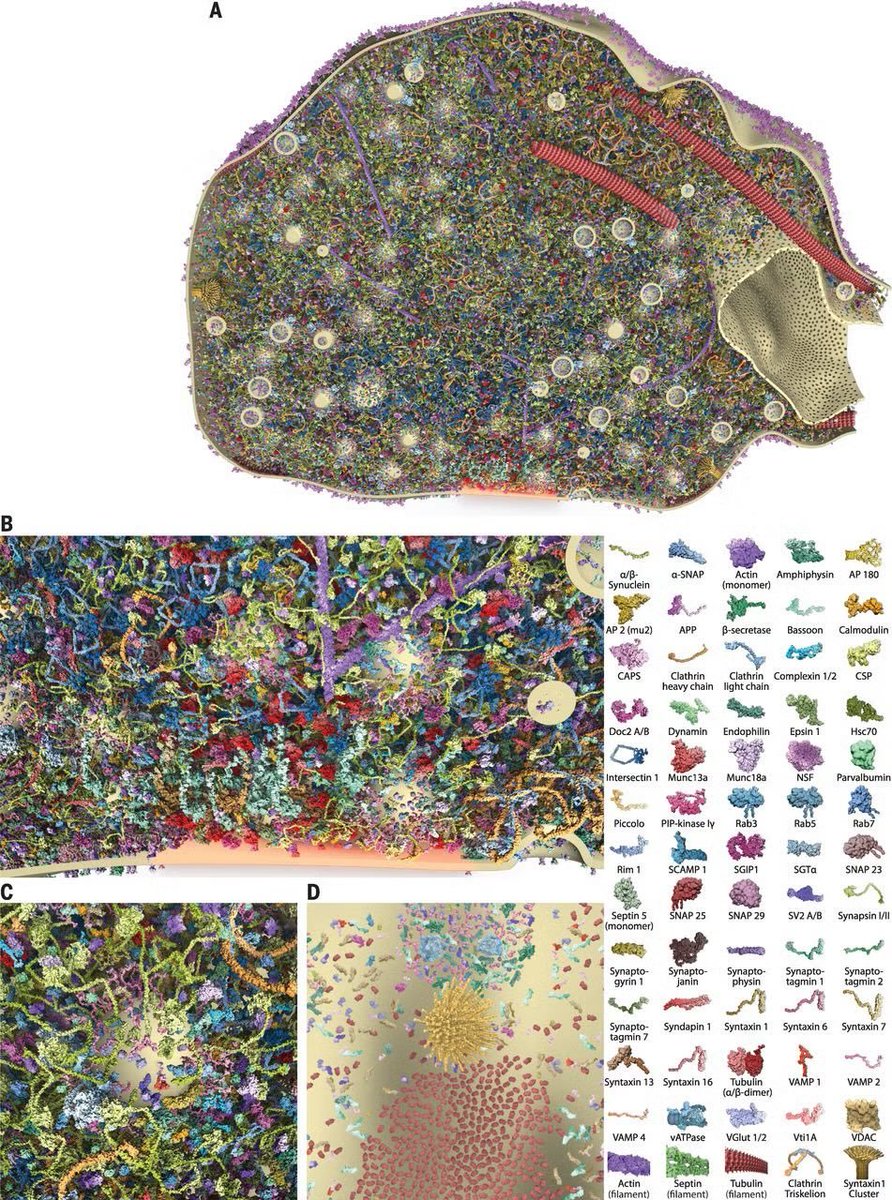

We teach models to reason about biology. Today we're open-sourcing the tool we built to run that science. Think Claude Code, but for drug discovery. pip install celltype-cli

🚨 SAM ALTMAN: “People talk about how much energy it takes to train an AI model … But it also takes a lot of energy to train a human. It takes like 20 years of life and all of the food you eat during that time before you get smart.”

There are PhDs being handed out each day to people living in the past: the students, their advisors, their universities. Dissertations that took 5 years of work, and which 4.6 Opus could reproduce and then improve on in an afternoon.