Nico Hezel

35 posts

@NicoHezel

Image Processing and Machine Learning fanatic with an affinity for performance optimization.

@sudoingX getting 20-30 tok/s on 6800XT 16GB and 7950X3D 64GB. Slow but usable.

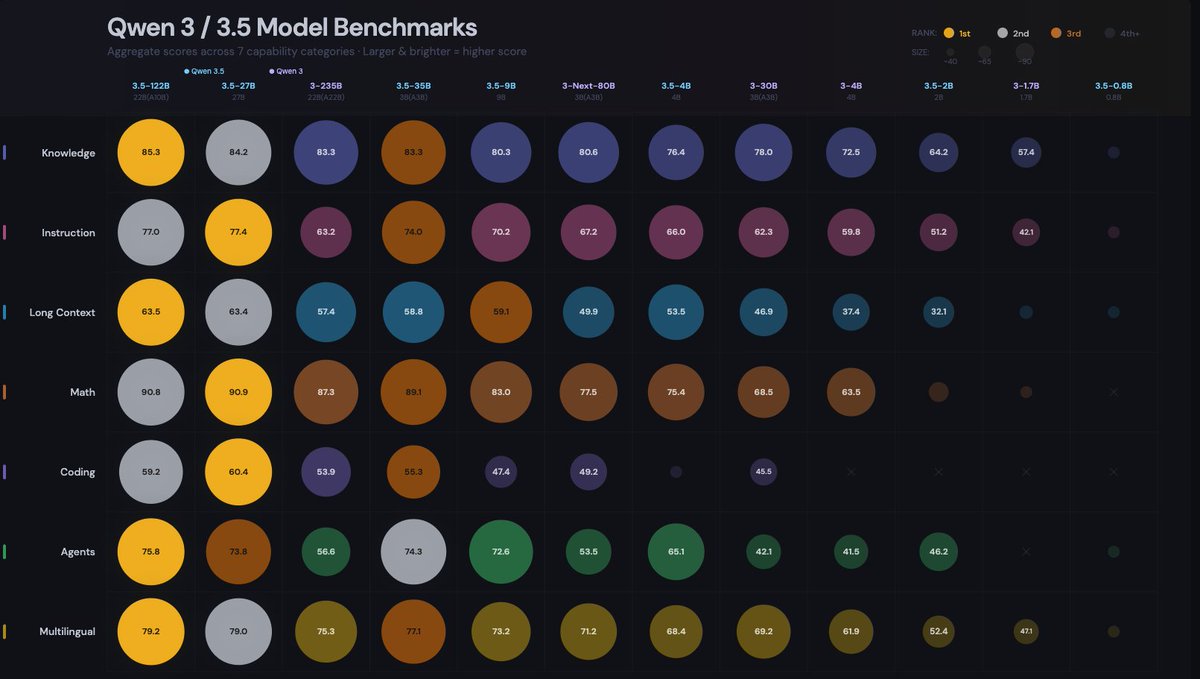

the numbers coming in from this thread:

- 5090: 166 tok/s (z33b0t), 153 tok/s (EmmanuelMr)
- 4090: 122 tok/s (StubbyTech)
- 3090: 112 tok/s (sudo), 100 tok/s (Eduardo)
- 6800XT: 20-30 tok/s (Dark)

Qwen3.5-35B-A3B. 4-bit quant, 19.7 GB on disk. fits entirely on any single 3090 24GB card with room to spare. no offloading, no splitting, full speed.

5090 owners keep pushing the ceiling and we haven't found it yet. NVIDIA side is stacking up. where are the ROCm numbers?

report your GPU and tok/s below. building the full map.

What's wrong here? Evaluated Llama 4 Scout, both locally and through Together AI. How can a local 2.71-bit quantized GGUF beat the online full version in the MMLU Pro CS benchmark? Consistent results over six runs, some with default settings and some with recommended ones. Weird!