Noam Elata

19 posts

@NoamElata

PhD candidate @TechnionLive, Generative AI researcher @Apple

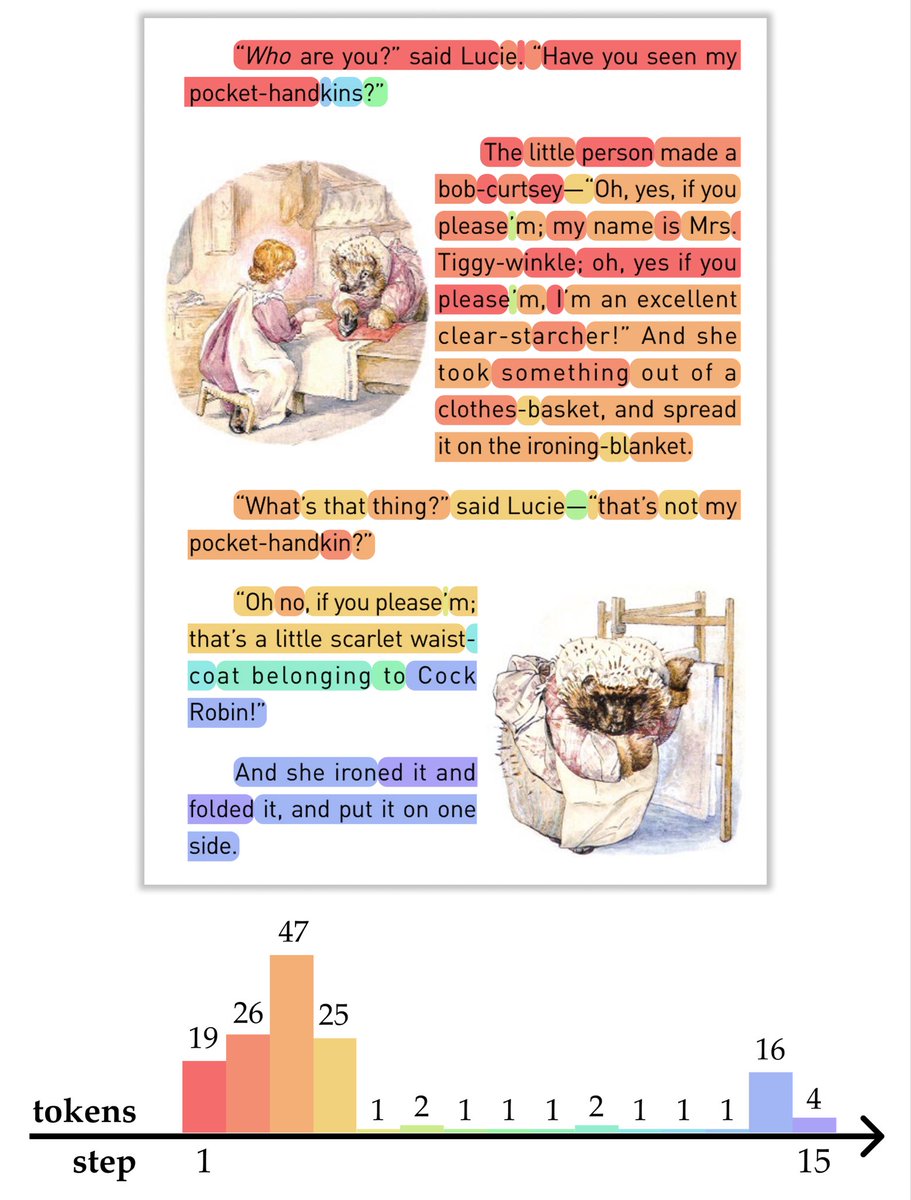

A picture is worth a thousand words, but can an LLM get the picture if it has never seen images before? 🧵 MIT CSAIL researchers quantify how much visual knowledge LLMs trained purely on text have. The language model's visual aptitude is tested by its ability to write, recognize, and correct drawing code that can be rendered into illustrations. Starting with language models trained on text alone, they show it is possible to train a preliminary vision system that can make judgments about real images: bit.ly/4cmkBaq

Accelerate your transformer model with the new Block-Sparse-Flash-Attention! github.com/Danielohayon/B… This training-free, drop-in replacement extends FlashAttention-2 and needs only minimal code changes (CUDA kernels included). Paper: arxiv.org/abs/2512.07011
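For readers new to the idea, here is a minimal pure-Python sketch of what block-sparse attention computes. It assumes a hypothetical boolean `block_mask` in which `block_mask[qb][kb]` says whether query block `qb` attends to key block `kb`; pairs that are switched off are never scored. This is not the repository's kernel or its block-selection rule, and it does none of FlashAttention's tiling or online softmax; it only illustrates the semantics such a kernel accelerates.

```python
import math
from typing import List

Matrix = List[List[float]]


def dot(a: List[float], b: List[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def block_sparse_attention(q: Matrix, k: Matrix, v: Matrix,
                           block_mask: List[List[bool]], block: int) -> Matrix:
    """Softmax attention that only scores (query block, key block) pairs
    whose entry in the hypothetical block_mask is True.

    Assumes every query block keeps at least one key block.
    """
    scale = 1.0 / math.sqrt(len(q[0]))
    out = []
    for i, qi in enumerate(q):
        qb = i // block  # which query block this row belongs to
        scores, cols = [], []
        for j, kj in enumerate(k):
            if block_mask[qb][j // block]:  # skip whole key blocks
                scores.append(dot(qi, kj) * scale)
                cols.append(j)
        # Numerically stable softmax over the kept keys only.
        m = max(scores)
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        row = [0.0] * len(v[0])
        for w, j in zip(weights, cols):
            for d in range(len(row)):
                row[d] += (w / total) * v[j][d]
        out.append(row)
    return out


# Usage: 4 tokens, blocks of 2; query block 0 may only see key block 0.
q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]
v = [[1.0 if c == r else 0.0 for c in range(4)] for r in range(4)]
out = block_sparse_attention(q, q, v, [[True, False], [True, True]], block=2)
```

With an all-True mask the function reduces to ordinary dense softmax attention. On a GPU the speedup comes from skipping whole tiles of the score matrix; this loop only shows which scores get computed.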

What is the probability of an image? What do the highest and lowest probability images look like? Do natural images lie on a low-dimensional manifold? In a new preprint with @ZKadkhodaie @EeroSimoncelli, we develop a novel energy-based model in order to answer these questions: 🧵
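As background, an energy-based model assigns each input x an energy E(x) and defines p(x) = exp(-E(x)) / Z, where Z sums exp(-E) over all possible inputs. The toy below is not the preprint's model: it uses hypothetical 2x2 binary "images" and a made-up smoothness energy, so Z can be computed by brute-force enumeration and the highest- and lowest-probability images can be read off directly.

```python
import itertools
import math


def energy(img):
    """Hypothetical smoothness energy for a 2x2 binary image laid out as
    a b / c d: one unit of energy per pair of disagreeing neighbours."""
    a, b, c, d = img
    return float((a != b) + (c != d) + (a != c) + (b != d))


# Enumerate all 16 possible images so the normalizer Z is exact.
images = list(itertools.product([0, 1], repeat=4))
Z = sum(math.exp(-energy(x)) for x in images)
prob = {x: math.exp(-energy(x)) / Z for x in images}

best = max(prob, key=prob.get)   # lowest energy, highest probability
worst = min(prob, key=prob.get)  # highest energy, lowest probability
print(best, worst)  # (0, 0, 0, 0) (0, 1, 1, 0)
```

In this toy the uniform images have the lowest energy and the checkerboards the highest. For real images Z cannot be enumerated, which is part of what makes the questions above hard.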

A key challenge for interpretability agents is knowing when they’ve understood enough to stop experimenting. Our @NeurIPSConf paper introduces a self-reflective agent that measures the reliability of its own explanations and stops once its understanding of models has converged.

Nested Diffusion Processes for Anytime Image Generation proposes an anytime diffusion-based method that can generate viable images when stopped at arbitrary times before completion. Using existing pretrained diffusion models, we show that the generation scheme can be recomposed as two nested diffusion processes, enabling fast iterative refinement of a generated image. We use this Nested Diffusion approach to peek into the generation process and enable flexible scheduling based on the user's instantaneous preference. In experiments on ImageNet and Stable Diffusion-based text-to-image generation, we show, both qualitatively and quantitatively, that our method's intermediate generation quality greatly exceeds that of the original diffusion model, while the final result of the full, slower generation remains comparable. paper page: huggingface.co/papers/2305.19…
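To make the nesting concrete, here is a toy sketch of one reading of the abstract: an outer diffusion sampler whose clean-image estimate at each step comes from running a short inner diffusion sampler from the current outer state, so a complete image is available after every outer step. Everything here is a simplifying assumption, not the paper's algorithm: the "images" are 1-D values from a hypothetical Gaussian N(MU, S^2) whose ideal denoiser has a closed form, and both levels use deterministic DDIM-style steps in a variance-exploding parameterization with linear noise schedules.

```python
import math
import random
from typing import Iterator

# Hypothetical toy data: 1-D "images" drawn from N(MU, S^2), so the ideal
# denoiser E[x0 | x_t] is known in closed form and no network is needed.
MU, S = 2.0, 0.5


def denoise(x_t: float, sigma: float) -> float:
    """Exact posterior mean E[x0 | x_t] for x_t = x0 + sigma * eps."""
    return MU + S**2 / (S**2 + sigma**2) * (x_t - MU)


def ddim_step(x_t: float, x0_hat: float, sigma: float, sigma_next: float) -> float:
    """Deterministic DDIM-style update (variance-exploding form)."""
    eps_hat = (x_t - x0_hat) / sigma
    return x0_hat + sigma_next * eps_hat


def inner_process(x_t: float, sigma: float, n_inner: int) -> float:
    """Inner diffusion: a short sampler from noise level sigma down to 0."""
    sigmas = [sigma * (1 - k / n_inner) for k in range(n_inner + 1)]
    x = x_t
    for s, s_next in zip(sigmas[:-1], sigmas[1:]):
        x = ddim_step(x, denoise(x, s), s, s_next)
    return x


def nested_sampler(sigma_max: float, n_outer: int, n_inner: int,
                   seed: int = 0) -> Iterator[float]:
    """Outer diffusion whose x0 estimate comes from the inner diffusion.

    Yields a complete sample after every outer step, so the caller can
    stop at any time and keep the latest preview.
    """
    rng = random.Random(seed)
    # Start from the exact marginal of x_T under the toy data distribution.
    x = rng.gauss(MU, math.sqrt(S**2 + sigma_max**2))
    outer = [sigma_max * (1 - k / n_outer) for k in range(n_outer + 1)]
    for s, s_next in zip(outer[:-1], outer[1:]):
        x0_hat = inner_process(x, s, n_inner)  # a viable image right now
        yield x0_hat
        x = ddim_step(x, x0_hat, s, s_next)   # ordinary outer step
```

Stopping after the first preview, e.g. `next(nested_sampler(10.0, 4, 8))`, already gives a usable (if coarse) sample; consuming the whole generator ends at the final sample, because the last outer step lands at noise level zero.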
