Foster Nethercott

@OSTact13

USMC Veteran | Cybersecurity Consultant | Ethical Hacking Advocate | Passionate Knowledge Sharer

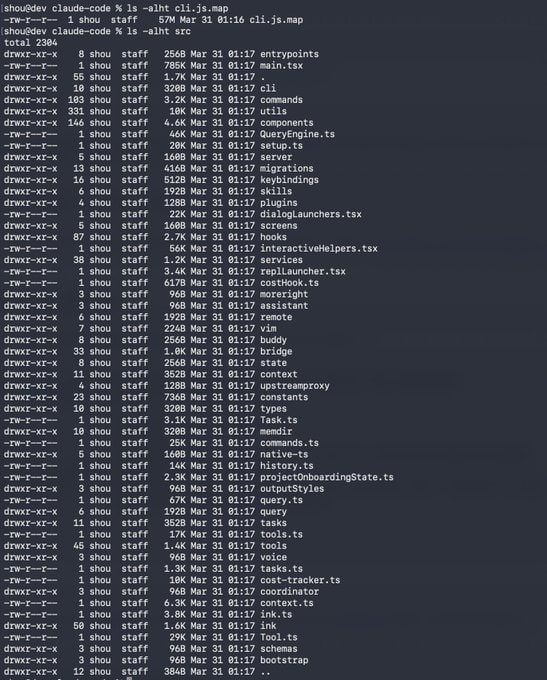

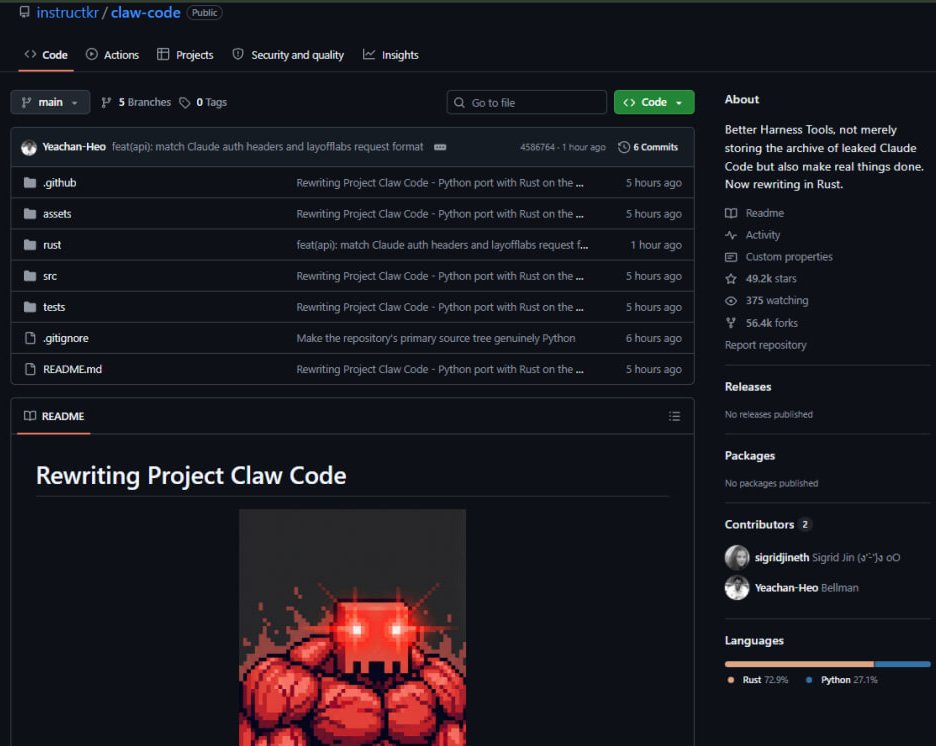

Tools such as PsExec.py from Impacket are usually flagged for lateral movement due to the pre-built service executable that is dropped on the remote system. However, some vendors also flag Impacket based on its behaviour. With RustPack, you can easily create service executables that won't be detected by signatures or behaviour-based detection. 😎 In this demo video, an unsigned service executable is generated. This will only fire the payload on a system with the hostname 'Win11' — environmental keying will prevent the payload from showing up in a sandbox or cloud analysis. To avoid Impacket detection, we drop and execute the binary via the recently released Titanis protocol library from @TrustedSec: github.com/trustedsec/Tit…. The result is an Adaptix C2 connection in the SYSTEM context. 🫡 #Pentest #RedTeam #Malware #OST

NVIDIA's recent paper presents a compelling blueprint for agentic AI, challenging the dominance of Large Language Models (LLMs) by advocating for Small Language Models (SLMs) in most tasks. Current AI agents often route every operation through resource-intensive LLMs like GPT-4 or Claude, which is inefficient for repetitive, scoped activities such as summarizing documents or calling tools. SLMs, which the paper treats as models under roughly 10 billion parameters, run on consumer hardware with low latency, making them faster, cheaper (10-30x more efficient), and just as effective for specialized tasks. Models like Phi-3 and Nemotron-H already outperform older LLMs in reasoning and tool use, while being easier to fine-tune with techniques like LoRA for domain-specific expertise. This shift toward modular agents, which default to SLMs and escalate to LLMs only when necessary, promises greater control, affordability, and debuggability. Real-world examples suggest 40-70% of LLM calls can be replaced without performance loss, though industry inertia from heavy LLM investments and biased benchmarks delays adoption. As SLMs gain traction, the future of AI lies in smarter architectures over bigger models, enabling more accessible and sustainable agentic systems. What are your thoughts on integrating SLMs into your workflows? In my day-to-day job, I've already identified some use cases, and I'm currently leaning toward involving SLMs more; it just makes more sense. I might post some real applications of this NVIDIA paper: arxiv.org/abs/2506.02153…
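
To make the "default to an SLM, escalate to an LLM only when necessary" idea concrete, here is a minimal sketch of such a router. It is not taken from the paper: the `Reply` type, the `route` function, the confidence threshold, and both toy models are hypothetical stand-ins, and a real agent would replace the confidence score with whatever signal it trusts (a verifier, schema validation of tool calls, and so on).

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Reply:
    text: str
    confidence: float  # assumed 0.0-1.0 score; how it is produced is left open


Model = Callable[[str], Reply]


def route(task: str, slm: Model, llm: Model, threshold: float = 0.8) -> Tuple[str, Reply]:
    """Try the small model first; escalate only if its confidence is too low."""
    reply = slm(task)
    if reply.confidence >= threshold:
        return "slm", reply
    return "llm", llm(task)


# Toy stand-ins so the sketch runs without any model backend (hypothetical).
def toy_slm(task: str) -> Reply:
    # Pretend the small model is only reliable on narrow, scoped tasks.
    scoped = task.startswith(("summarize:", "call_tool:"))
    return Reply(text=f"[slm] {task}", confidence=0.95 if scoped else 0.3)


def toy_llm(task: str) -> Reply:
    return Reply(text=f"[llm] {task}", confidence=0.9)


if __name__ == "__main__":
    for task in ("summarize: quarterly report", "plan a multi-step migration"):
        used, reply = route(task, toy_slm, toy_llm)
        print(used, "->", reply.text)
```

Logging which branch handled each task is also a cheap way to measure what share of calls the small model can actually absorb in a given workflow.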