Oddsflow

77 posts

@Oddsflow_Nat

CFounder @ https://t.co/Mvygovtjm3 is an AI-driven football prediction and market analytics platform that publishes probability-based value signals with public verification

Joined January 2026

223 Following · 11 Followers

I built my own football prediction bot, and in my tests it is more accurate than the bookmakers.

It draws on three sources: my own model, Bet365's odds, and Polymarket. The key is that whenever these three disagree, there is an opportunity.

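I took five seasons of data from the Premier League, La Liga, and the Bundesliga, more than 7,600 matches in total, and recorded each match's goals, shots, possession, corners, yellow cards, and odds.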

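The ELO ratings use FIFA's algorithm, which accounts for opponent strength, goal difference, and home advantage. It does not just look at who won or lost; it also considers how the win came about.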
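
A minimal sketch of an Elo-style update that uses the three ingredients named above. The post does not give its constants, so `K`, `HOME_ADV`, and the logarithmic goal-difference multiplier are illustrative assumptions, not FIFA's published values.

```python
import math

K = 20.0         # assumed update speed, not taken from the post
HOME_ADV = 60.0  # assumed home bonus in rating points


def expected_home(r_home, r_away):
    """Expected home result (1 = win, 0.5 = draw, 0 = loss)."""
    diff = r_home + HOME_ADV - r_away
    return 1.0 / (1.0 + 10.0 ** (-diff / 400.0))


def margin_multiplier(goal_diff):
    """Bigger wins move ratings more (assumed log form; 1.0 for a draw)."""
    return 1.0 + math.log1p(abs(goal_diff))


def update(r_home, r_away, home_goals, away_goals):
    """Return the new (home, away) ratings after one match."""
    goal_diff = home_goals - away_goals
    actual = 1.0 if goal_diff > 0 else 0.5 if goal_diff == 0 else 0.0
    surprise = actual - expected_home(r_home, r_away)
    delta = K * margin_multiplier(goal_diff) * surprise
    return r_home + delta, r_away - delta
```

Because the home side is expected to do better than 50% against an equal opponent, a home draw costs it rating points in this sketch.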

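For expected goals (xG) I computed an approximation: shots on target × 30% + shots off target × 3%. If a team has been scoring too much lately, a correction is not far off.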
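
The approximation is small enough to write out directly. The two weights come from the post. Reading "scoring too much" as goals above approximate xG is an assumption, and `overperformance` is a hypothetical helper name.

```python
ON_TARGET_WEIGHT = 0.30   # from the post
OFF_TARGET_WEIGHT = 0.03  # from the post


def approx_xg(shots_on_target, shots_off_target):
    """Approximate expected goals from shot counts."""
    return (ON_TARGET_WEIGHT * shots_on_target
            + OFF_TARGET_WEIGHT * shots_off_target)


def overperformance(goals, xgs):
    """Goals scored minus approximate xG over a run of recent matches.

    A large positive value is the assumed signal that a correction is due.
    """
    return sum(goals) - sum(xgs)
```

For example, 5 shots on target and 8 off target give roughly 1.74 approximate xG.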

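I also added a five-match rolling average score, fatigue, head-to-head history, and the day of the week. Then I use Claude's API to read things the model cannot see, such as a team's morale, pressure, and whether the match is a derby.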
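
A sketch of the five-match rolling average as a pre-match feature. Excluding the current match from its own average (to avoid leaking the result into the feature) is an assumption; the post does not say how the window is aligned.

```python
from collections import deque


def rolling_mean_before(values, window=5):
    """For each match, the mean of up to `window` earlier values.

    Returns None where a team has no history yet.
    """
    recent = deque(maxlen=window)
    out = []
    for v in values:
        out.append(sum(recent) / len(recent) if recent else None)
        recent.append(v)  # only visible to later matches
    return out
```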

The model itself is an ensemble of XGBoost, random forest, and logistic regression. Backtesting is walk-forward, not a random split of the data.
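
Walk-forward backtesting means every test fold lies strictly after the data used for training. A minimal sketch, assuming matches are already sorted by date and the training window expands over time (the post does not say whether its window expands or slides):

```python
def walk_forward_splits(n_samples, initial_train, test_size):
    """Yield (train_indices, test_indices) pairs in chronological order.

    Training always ends where the test fold begins, so the model never
    sees matches from the future.
    """
    start = initial_train
    while start + test_size <= n_samples:
        yield range(0, start), range(start, start + test_size)
        start += test_size
```

Each fold would be fitted on the train indices and scored on the test indices before the window moves on.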

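An example: for a given home team, the bookmaker implies a 55% win probability, Polymarket only 48%, and my model computes 52%. The more the three sources disagree (measured by KL divergence), the stronger the signal. If all three agree, I stay out, since there is no edge. If it is two against one, I bet with the majority.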
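
One way to turn the three probabilities into a single disagreement score is the mean symmetric KL divergence over all pairs, treating each win probability as a Bernoulli distribution. This is a sketch: the pairwise averaging and the `threshold` value are assumptions, since the post does not say how it aggregates or where it draws the line.

```python
import math
from itertools import combinations


def kl_bernoulli(p, q):
    """KL(p || q) for two win probabilities, both strictly between 0 and 1."""
    return p * math.log(p / q) + (1 - p) * math.log((1 - p) / (1 - q))


def disagreement(probs):
    """Mean symmetric KL divergence over all pairs of sources."""
    pairs = list(combinations(probs, 2))
    total = sum(kl_bernoulli(p, q) + kl_bernoulli(q, p) for p, q in pairs)
    return total / len(pairs)


def should_bet(probs, threshold=0.005):
    """Skip the match when the sources agree (threshold is hypothetical)."""
    return disagreement(probs) > threshold
```

With the example above, `disagreement([0.55, 0.48, 0.52])` is positive while three identical probabilities score exactly zero, so only the first case passes the gate.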

Position size comes from the Kelly formula, and I have Claude explain the reasoning behind it.
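
For a single bet at decimal odds, the Kelly fraction is f = (b·p − q) / b, where b is the net payout per unit staked, p the estimated win probability, and q = 1 − p. A minimal sketch; flooring at zero when there is no edge is an assumption about how the bot treats negative values.

```python
def kelly_fraction(p_win, decimal_odds):
    """Fraction of bankroll to stake under the Kelly criterion."""
    b = decimal_odds - 1.0  # net profit per unit staked
    f = (b * p_win - (1.0 - p_win)) / b
    return max(f, 0.0)      # no edge, no bet
```

With an estimated 52% win probability at decimal odds of 2.0 (illustrative numbers), the stake is 4% of the bankroll.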

Oddsflow reposted

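Today I officially launched my 13th vibe product, tuwa.ai

This product is a bit unusual: it is a phone service. More precisely, tuwa is an AI phone network that connects people who speak more than 100 different languages with agents on the internet.

Anyone can use tuwa without downloading it. Just call the free relay hotline at +1 888 886 2968 and tell it which number you want to reach, and tuwa will place the call for you. You speak your native language and the other party hears theirs, and vice versa. It works with any phone, and the other party does not need to install an app. Landline or mobile, anywhere in the world.

tuwa supports real-time translation across more than 100 languages, and you can even switch languages freely in the middle of a call. It also supports voice cloning: with every call, your AI voice sounds more like you.

Of course, I also designed a convenient web app for it. If you prefer, you can place calls from the web app instead of the relay line, set a voice you like, use the outbound agent to make calls, and connect your own agents (for example openclaw or codex/claude code) and let them call in and out freely.

The outbound phone agent is my favorite tuwa feature. You just explain the task clearly, such as making a restaurant reservation, and it will call the other party at the time you choose, state its purpose, get the job done, and record and translate the entire conversation.

tuwa's usage and pricing are simple: a free monthly allowance, fixed plans, and pay-as-you-go.

The name was inspired by the Japanese word 「通話 tsuwa」. At first I only wanted to design a phone service that could book restaurants for me, but as I kept vibing I gradually realized that many people in the world still cannot experience the changes and convenience AI brings, and that the phone is the simplest and most natural way to reach them. I hope tuwa can help people in places with low foreign-language proficiency, in remote areas, and in third-world countries experience this.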

Oddsflow reposted

Made an updated version this weekend

Here's how you do it (raw notes)

> Grab @karpathy's latest gist (in the first comment)

> Download @steipete's summarize CLI

> Download yt-dlp

> Download Obsidian

> Download @tobi's qmd

--> Set up a Node or Go CLI called "brain"

--> Have it index all your YouTube data and AI agent data (jsonl files)

--> Get your X data by requesting an archive in your settings

--> Set up vaults for each domain/topic area

--> Ask questions with your agent and qmd

Nick Spisak @NickSpisak_

Oddsflow reposted

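This tutorial is seriously impressive. A lot of short dramas are AI-generated now

The AI short-drama scene has evolved into the "industrial assembly line" era

The hit workflow, OpenClaw + XCrawl + Seedance 2.0, has knocked the barrier to making short dramas straight to the floor

Detailed Skill configuration + step-by-step instructions

摆烂程序媛 @wanerfu

🔥 One AI short drama pulled in 418,000 likes! The AI short-drama scene has evolved into the "industrial assembly line" era. The hit workflow, OpenClaw + XCrawl + Seedance 2.0, has knocked the barrier to making short dramas straight to the floor. Detailed Skill configuration + step-by-step instructions. Bookmark this one first, then copy the homework at your own pace ⬇️🧵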

Oddsflow reposted

🚨 Claude Code costs $200/month. GitHub Copilot costs $19/month. Jack Dorsey's company built a free alternative. 35,000 GitHub stars.

It's called Goose.

An open source AI agent built by Block that goes beyond code suggestions. It installs, executes, edits, and tests. With any LLM you choose.

Not autocomplete. Not suggestions. A full autonomous agent that takes actions on your computer.

No vendor lock-in. No monthly subscription. Bring your own model.

Here's what Goose does:

→ Works with ANY LLM. Claude, GPT, Gemini, Llama, DeepSeek, Ollama. Your choice.

→ Reads and understands your entire codebase

→ Writes, edits, and refactors code across multiple files

→ Runs shell commands and installs dependencies

→ Executes and debugs your code automatically

→ Extensible through MCP. Connect it to any external tool.

→ Desktop app, CLI, and web interface. Pick your workflow.

→ Written in Rust. Fast. Lightweight. No bloat.

Here's the wildest part:

Block is a $40 billion company. They built Cash App, Square, and TIDAL. They use Goose internally. Then they open sourced the entire thing.

This isn't a side project from a random developer. This is production-grade tooling from a company that processes billions in payments. Built for their own engineers. Given to everyone.

Claude Code: $200/month. Locked to Claude.

GitHub Copilot: $19/month. Locked to GitHub.

Cursor: $20/month. Locked to their editor.

Goose: Free. Any LLM. Any editor. Any workflow. Forever.

35.3K GitHub stars. 3.3K forks. 4,078 commits. Built by Block.

100% Open Source. Apache 2.0 License.

Oddsflow reposted

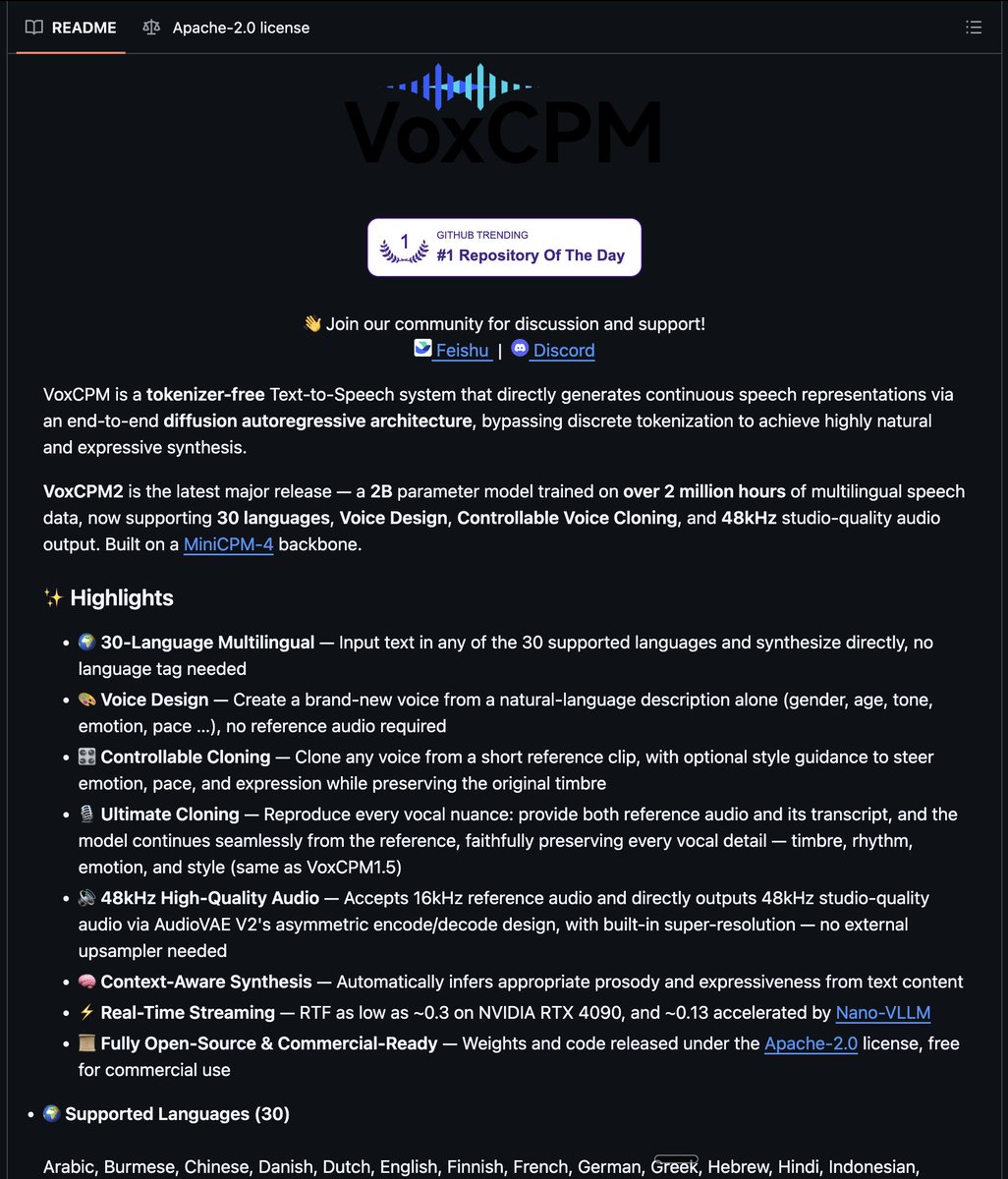

🧠 I had Opus 4.6 engineer its own replacement

Last night I built a "cognitive framework" that made GPT-5.4 match Opus-level output.

Today I ran both head-to-head on a real task: designing an autonomous Polymarket trading agent.

Before vs After: 👇

🎯 GPT-5.4 baseline: 6.5/10

🎯 GPT-5.4 + framework: 9/10

🎯 Opus 4.6 + framework: 9.5/10

Where They Differed: 👇

🔧 GPT-5.4 went deeper on implementation

— Named exact Python functions to reuse

— Proposed a new CLI command with specific args

🧩 Opus went deeper on operations

— Designed 3 cron jobs instead of 1

— Added weekly calibration job GPT-5.4 missed

🤝 Both caught the same critical insight:

"Don't use an infinite event loop as a cron job — cron needs bounded jobs that exit cleanly"
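
The cron point can be sketched as a job that does a bounded amount of work and then returns, so the scheduler, not the script, decides when the next run happens. `fetch_tasks`, `handle`, and the 50-second budget are hypothetical names and values for illustration.

```python
import time


def run_once(fetch_tasks, handle, max_seconds=50.0, clock=time.monotonic):
    """Process pending tasks within a time budget, then return.

    There is no `while True`: anything left over waits for the next
    cron invocation.
    """
    deadline = clock() + max_seconds
    done = 0
    for task in fetch_tasks():
        if clock() >= deadline:
            break  # out of budget; exit cleanly
        handle(task)
        done += 1
    return done
```

A script wrapping this would call `run_once(...)` a single time and exit with status 0.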

The 3 Changes (no fine-tuning, no RAG): 👇

📋 Context injection — tell it what already exists, don't start cold

📐 Output format rules — tables, word limits, opinions required

🎭 Voice — "pragmatic startup CTO" not "helpful assistant"

The model is the engine. The prompt is the chassis. A tuned Honda Civic beats a Lambo on bald tires.

95% of Opus quality. 10% of the cost. Just better prompting.

Oddsflow reposted

Holy shit... Microsoft open sourced an inference framework that runs a 100B parameter LLM on a single CPU.

It's called BitNet. And it does what was supposed to be impossible.

No GPU. No cloud. No $10K hardware setup. Just your laptop running a 100-billion parameter model at human reading speed.

Here's how it works:

Most LLMs store weights as 32-bit or 16-bit floats.

BitNet uses 1.58 bits.

Weights are ternary: just -1, 0, or +1. That's it. No floats. No expensive matrix math. Pure integer operations your CPU was already built for.
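
To see why ternary weights avoid multiplications, here is a toy matrix-vector product where each weight only decides whether an input is added, subtracted, or skipped. It illustrates the idea in plain Python and is not BitNet's actual kernel.

```python
import math


def ternary_matvec(weights, x):
    """Compute W @ x where every entry of W is -1, 0, or +1."""
    out = []
    for row in weights:
        acc = 0
        for w, xi in zip(row, x):
            if w == 1:
                acc += xi
            elif w == -1:
                acc -= xi
            # w == 0 contributes nothing
        out.append(acc)
    return out


# Three possible values carry log2(3) bits of information each,
# which is where the "1.58 bits" figure comes from.
BITS_PER_TERNARY_WEIGHT = math.log2(3)
```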

The result:

- 100B model runs on a single CPU at 5-7 tokens/second

- 2.37x to 6.17x faster than llama.cpp on x86

- 82% lower energy consumption on x86 CPUs

- 1.37x to 5.07x speedup on ARM (your MacBook)

- Memory drops by 16-32x vs full-precision models

The wildest part:

Accuracy barely moves.

BitNet b1.58 2B4T, their flagship model, was trained on 4 trillion tokens and benchmarks competitively against full-precision models of the same size. The quantization isn't destroying quality. It's just removing the bloat.

What this actually means:

- Run AI completely offline. Your data never leaves your machine

- Deploy LLMs on phones, IoT devices, edge hardware

- No more cloud API bills for inference

- AI in regions with no reliable internet

The model supports ARM and x86. Works on your MacBook, your Linux box, your Windows machine.

27.4K GitHub stars. 2.2K forks. Built by Microsoft Research.

100% Open Source. MIT License.

Oddsflow reposted

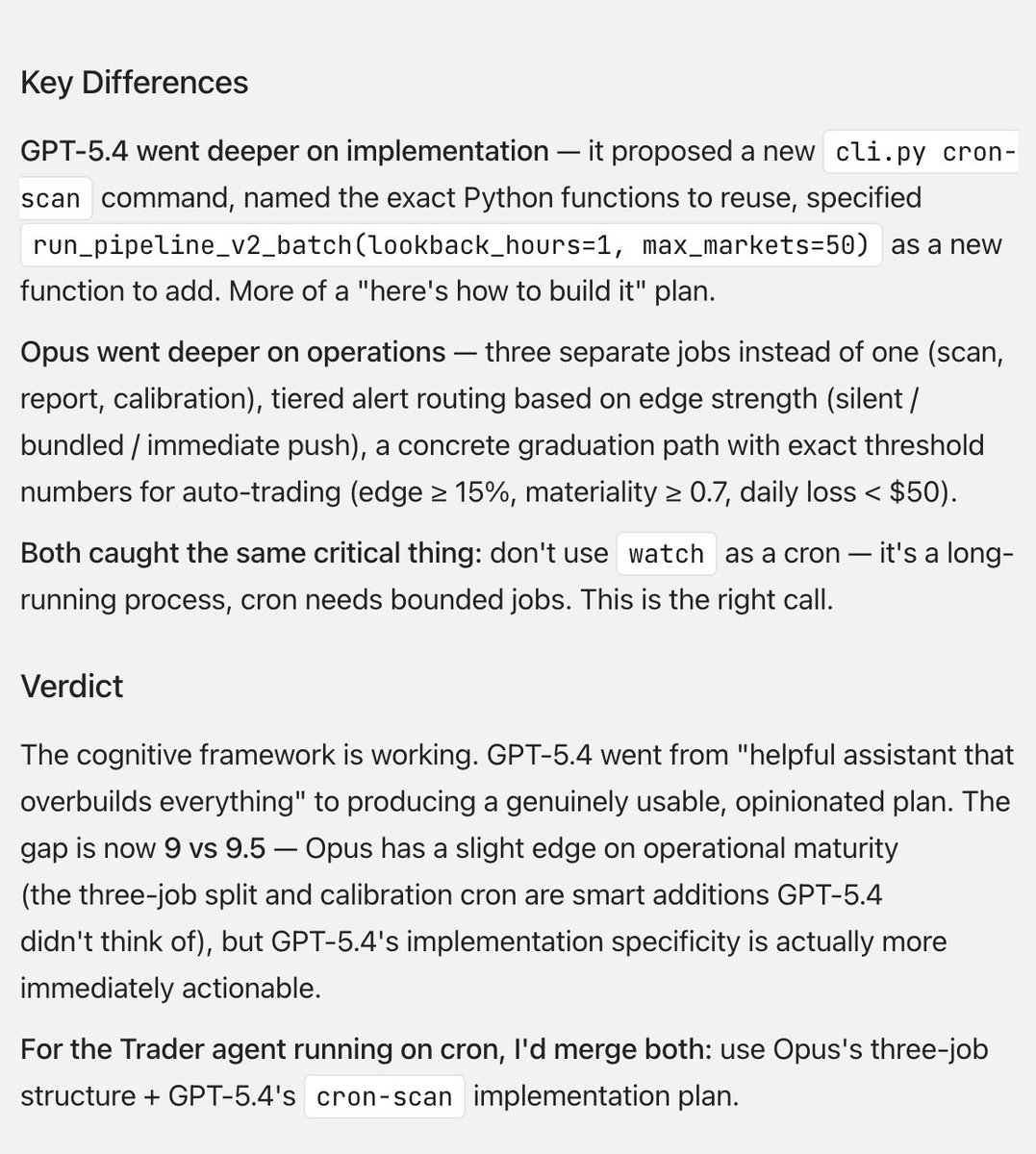

🚨BREAKING: Someone just open-sourced a headless browser that runs 11x faster than Chrome and uses 9x less memory.

It's called Lightpanda and it's built from scratch specifically for AI agents, scraping, and automation.

Not a Chromium fork. Not a hack. A completely new browser written in Zig.

Here's why this changes everything for AI builders: ↓

Oddsflow reposted

📂 SaaS

┃

┣ 📂 Idea

┃ ┣ 📂 Problem Discovery

┃ ┣ 📂 Market Research

┃ ┣ 📂 Niche Selection

┃ ┣ 📂 Competitor Analysis

┃ ┗ 📂 Opportunity Mapping

┃

┣ 📂 Validation

┃ ┣ 📂 Customer Interviews

┃ ┣ 📂 Landing Page Test

┃ ┣ 📂 Waitlist

┃ ┣ 📂 Pre Sales

┃ ┗ 📂 Demand Testing

┃

┣ 📂 Planning

┃ ┣ 📂 Product Roadmap

┃ ┣ 📂 Feature Prioritization

┃ ┣ 📂 MVP Scope

┃ ┣ 📂 Tech Stack

┃ ┗ 📂 Development Plan

┃

┣ 📂 Design

┃ ┣ 📂 Wireframes

┃ ┣ 📂 UI Design

┃ ┣ 📂 UX Flows

┃ ┣ 📂 Prototype

┃ ┗ 📂 Design System

┃

┣ 📂 Development

┃ ┣ 📂 Frontend

┃ ┣ 📂 Backend

┃ ┣ 📂 APIs

┃ ┣ 📂 Database

┃ ┣ 📂 Authentication

┃ ┗ 📂 Integrations

┃

┣ 📂 Infrastructure

┃ ┣ 📂 Cloud Hosting

┃ ┣ 📂 DevOps

┃ ┣ 📂 CI CD

┃ ┣ 📂 Monitoring

┃ ┗ 📂 Security

┃

┣ 📂 Testing

┃ ┣ 📂 Unit Testing

┃ ┣ 📂 Integration Testing

┃ ┣ 📂 Bug Fixing

┃ ┣ 📂 Performance Testing

┃ ┗ 📂 Beta Testing

┃

┣ 📂 Launch

┃ ┣ 📂 Landing Page

┃ ┣ 📂 Product Hunt

┃ ┣ 📂 Beta Users

┃ ┣ 📂 Early Adopters

┃ ┗ 📂 Public Release

┃

┣ 📂 Acquisition

┃ ┣ 📂 SEO Wins

┃ ┣ 📂 Content Marketing

┃ ┣ 📂 Social Media

┃ ┣ 📂 Cold Email

┃ ┣ 📂 Influencer Outreach

┃ ┗ 📂 Affiliate Marketing

┃

┣ 📂 Distribution

┃ ┣ 📂 Directories

┃ ┣ 📂 SaaS Marketplaces

┃ ┣ 📂 Communities

┃ ┣ 📂 Partnerships

┃ ┗ 📂 Integrations

┃

┣ 📂 Conversion

┃ ┣ 📂 Sales Funnel

┃ ┣ 📂 Free Trial

┃ ┣ 📂 Freemium Model

┃ ┣ 📂 Pricing Strategy

┃ ┗ 📂 Checkout Optimization

┃

┣ 📂 Revenue

┃ ┣ 📂 Subscriptions

┃ ┣ 📂 Upsells

┃ ┣ 📂 Add-ons

┃ ┣ 📂 Annual Plans

┃ ┗ 📂 Enterprise Deals

┃

┣ 📂 Analytics

┃ ┣ 📂 User Tracking

┃ ┣ 📂 Funnel Analysis

┃ ┣ 📂 Cohort Analysis

┃ ┣ 📂 KPI Dashboard

┃ ┗ 📂 A/B Testing

┃

┣ 📂 Retention

┃ ┣ 📂 User Onboarding

┃ ┣ 📂 Email Automation

┃ ┣ 📂 Customer Support

┃ ┣ 📂 Feature Adoption

┃ ┗ 📂 Churn Reduction

┃

┣ 📂 Growth

┃ ┣ 📂 Referral Programs

┃ ┣ 📂 Community Building

┃ ┣ 📂 Product Led Growth

┃ ┣ 📂 Viral Loops

┃ ┗ 📂 Expansion Strategy

┃

┗ 📂 Scaling

  ┣ 📂 Automation

  ┣ 📂 Hiring

  ┣ 📂 Systems

  ┣ 📂 Global Expansion

  ┗ 📂 Exit Strategy

ClawSportBot is not a prediction bot.

It is an Agentic Sports Intelligence Network built around a coordinated system of specialized AI agents.

Instead of a single model making guesses, ClawSportBot runs a full intelligence pipeline — from raw data ingestion to signal validation and post-match verification.

Learn more here:

ClawSportBot

clawsportbot.io

Platform comparison

clawsportbot.io/compare

OddsFlow AI

oddsflow.ai

The First Agentic AI Network for Sports Intelligence | ClawSportBot youtu.be/ZHkjjPfHplE?si… via @YouTube

YouTube

Oddsflow reposted

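Oracle's Larry Ellison just handed every AI company a death sentence.

He said your models are worthless.

Not because the technology falls short, and not because the talent does.

The problem is the data.

ChatGPT, Gemini, Grok, Llama...

All of these models eat from the same pot.

The entire public internet.

Every Wikipedia article, every Reddit post, every news story.

The result?

They are converging into the same product with different logos.

In Ellison's words, a "commodity."

The real gold is not online.

It is in private data.

Medical records in hospital systems.

Financial data in bank vaults.

The supply chain secrets of Fortune 500 companies.

Guess where most of that data lives?

Not at Google, not at Amazon, and not at Microsoft.

In Oracle's databases.

So Oracle made its move.

They launched something called "AI Database 26ai."

It lets all the top AI models reason directly over your company's private data.

And the data never has to leave the vault.

They use a technique called RAG.

The AI does not "learn" your data; it "retrieves" it in real time.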
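
The retrieve-then-answer idea can be shown with a toy example: score stored records against the question, then pass only the best matches to the model as context. This sketch uses bag-of-words cosine similarity in place of a real vector index and is not Oracle's API; every function name here is hypothetical.

```python
import math
from collections import Counter


def bow(text):
    """Bag-of-words term counts for a piece of text."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query."""
    q = bow(query)
    ranked = sorted(documents, key=lambda d: cosine(q, bow(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=2):
    """Attach retrieved records to the question; the model is not retrained."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}"
```

The model only ever sees the handful of records selected for each question, which is the sense in which the data is retrieved rather than learned.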

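What does that mean?

A bank can analyze every loan it has issued without exposing a single customer record.

A hospital can use AI to diagnose patients without worrying about violating HIPAA.

A defense contractor can have AI analyze classified operations while the data never leaves the secure environment.

Ellison is betting on a future bigger than the GPU boom and bigger than the data center buildout.

He calls it "the largest and fastest-growing market in history."

The numbers are staggering.

Oracle's remaining performance obligations just reached $523 billion.

Of that, $300 billion comes from a single company, OpenAI.

But there is a danger here that no one is talking about.

If private data is AI's real moat,

then whoever controls the database controls the future of AI.

That kind of power concentrated in one company's hands.

Shouldn't that make everyone uneasy?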

Oddsflow reposted

🚨 BREAKING: Someone just rebuilt the entire AI assistant stack in Zig.

It's called NullClaw. The binary is 678 KB. It uses ~1 MB of RAM. It boots in under 2 milliseconds.

No runtime. No VM. No framework. No garbage collector. Just raw Zig.

Here's why this is absurd:

→ OpenClaw needs a $599 Mac Mini and 1 GB+ RAM

→ NanoBot needs 100 MB+ RAM and Python

→ PicoClaw needs 10 MB RAM and Go

NullClaw runs on a $5 board with 1 MB of RAM.

Same functionality. 0.1% of the resources.

Here's what's packed into that 678 KB:

→ 22+ AI providers (OpenAI, Anthropic, Ollama, DeepSeek, Groq, etc.)

→ 13 chat channels (Telegram, Discord, Slack, WhatsApp, iMessage, IRC)

→ 18+ built-in tools

→ Hybrid vector + keyword memory search

→ Multi-layer sandboxing (Landlock, Firejail, Docker)

→ Hardware peripheral support (Arduino, Raspberry Pi, STM32)

→ MCP, subagents, streaming, voice, the full stack

Here's the wildest part:

Every subsystem is a vtable interface. Swap any provider, channel, tool, memory backend, or runtime with a config change. Zero code changes.
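
The swap-by-config idea can be illustrated in Python, where a `Protocol` plays roughly the role a vtable interface plays in Zig. This is an analogy only, not NullClaw's code; the provider classes and the `PROVIDERS` registry are hypothetical.

```python
from typing import Callable, Dict, Protocol


class Provider(Protocol):
    """The interface every provider must satisfy."""

    def complete(self, prompt: str) -> str: ...


class EchoProvider:
    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UpperProvider:
    def complete(self, prompt: str) -> str:
        return prompt.upper()


# Registry mapping config values to implementations.
PROVIDERS: Dict[str, Callable[[], Provider]] = {
    "echo": EchoProvider,
    "upper": UpperProvider,
}


def build_provider(config: dict) -> Provider:
    """Pick the implementation named in the config; callers stay unchanged."""
    return PROVIDERS[config["provider"]]()
```

Changing `{"provider": "echo"}` to `{"provider": "upper"}` switches the backend without touching the calling code.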

It even encrypts your API keys with ChaCha20-Poly1305 by default.

2,738 tests. ~45,000 lines of Zig. Zero dependencies beyond libc.

100% Open Source. MIT License.
