OmarTodd⚡️🟠

@OmarTodd

C-Suite Tech Exec | Cyber Risk & AI Governance Advisor | CCRO, CISM, CISSP | $25M+ Budgets • Global Infra | Open to Board & Advisory Roles, Posts ≠ endorsements

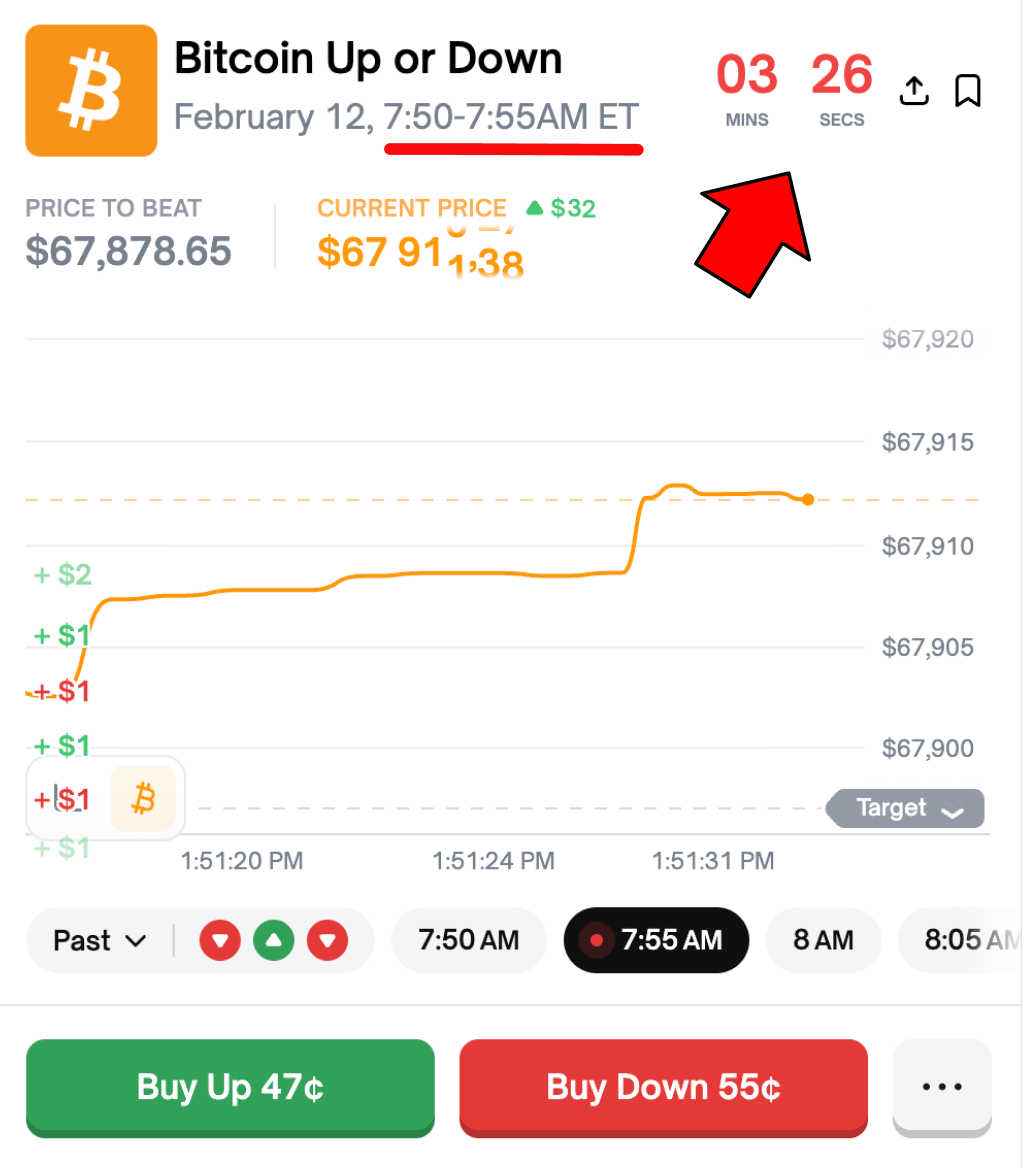

Polymarket is testing 5-min Up/Down markets.

But traders do NOT understand: it will change EVERYTHING.

15-min bots are turning $100 into $100,000.
Imagine what they'll do in 5-min markets.
The FIRST bots will print 10,000% PnL AGAIN.

Here's why you MUST start preparing NOW:

5-minute markets don't just mean shorter trades. They mean a completely different game.

Right now, 15-minute markets already favor speed. With 5-minute markets, speed becomes everything.

This is no longer betting. It's real-time probability warfare.

Every hour you get 12 fresh markets per asset, each one resolved off live price feeds and oracles. And bots will definitely beat humans here.

Those markets are already visible on Polymarket. Example: [polymarket.com/event/btc-updo…]

At launch, the market might be a bit messy:
> Price feeds lag
> Odds don't update instantly
> Liquidity is uneven
> Spreads are wide

That's not a bug. That's free money for whoever is ready.

The first bots to win won't be fancy. The winners will be:
> Mispricing hunters
> Probability scanners
> Latency-optimized scripts

Bots that just compare real prices to market odds and click faster than anyone else.

In the early phase, this prints stupid numbers: high win rates, thousands of micro-trades. The same edge that worked in 15-minute markets, just compressed and multiplied.

Then the fun begins. More bots enter. Edges shrink. Latency goes from seconds to milliseconds. Slow bots get farmed. Overengineered bots break.

Eventually, the market stabilizes:
> Fewer profitable bots
> Higher efficiency
> Profits concentrate at the top

This is how every system evolves. And this is why devs grinding now are in a rare position.

A few weeks of preparation can mean:
> First-mover edge
> Massive early returns
> Capital to scale

We already saw it in 15-minute markets. 5-minute markets just turn the volume up to max.

Polymarket is becoming a life-changing ticket for devs. It rewards whoever shows up early, fast, and ready.
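A minimal sketch of what a "mispricing hunter" comparing real prices to market odds might compute, in Python. Everything here is an assumption, not Polymarket's API or its actual resolution rules: it assumes a market resolves Up when the price at the end of the 5-minute window is at or above the price at its start, models log price as a driftless random walk with a volatility you supply, and uses hypothetical names (`window_bounds`, `fair_up_probability`, `scan`). It prices each side, compares that to the ask, and only flags a trade when the gap clears a minimum edge meant to absorb wide spreads and fees.

```python
import math
from dataclasses import dataclass
from typing import Optional

# Hypothetical sketch, not Polymarket's API. Assumes "Up" resolves when the
# price at window end is >= the price at window start.
WINDOW_SECONDS = 5 * 60  # 5-minute markets: 3600 / 300 = 12 windows per hour


def window_bounds(ts: float) -> tuple:
    """Start and end (Unix seconds) of the 5-minute window containing ts."""
    start = int(ts // WINDOW_SECONDS) * WINDOW_SECONDS
    return start, start + WINDOW_SECONDS


def normal_cdf(x: float) -> float:
    """Standard normal CDF via math.erf."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def fair_up_probability(spot: float, open_price: float,
                        seconds_left: float, vol_per_sqrt_sec: float) -> float:
    """P(close >= open), modelling log price as a driftless random walk."""
    if seconds_left <= 0:
        # Window is over: the outcome is already decided by the current price.
        return 1.0 if spot >= open_price else 0.0
    z = math.log(spot / open_price) / (vol_per_sqrt_sec * math.sqrt(seconds_left))
    return normal_cdf(z)


@dataclass
class Signal:
    side: str    # "UP" or "DOWN"
    edge: float  # fair probability minus the ask you would pay


def scan(spot: float, open_price: float, seconds_left: float, vol: float,
         up_ask: float, down_ask: float, min_edge: float = 0.03) -> Optional[Signal]:
    """Return the side with the larger edge if it clears min_edge, else None."""
    p_up = fair_up_probability(spot, open_price, seconds_left, vol)
    candidates = [Signal("UP", p_up - up_ask), Signal("DOWN", (1.0 - p_up) - down_ask)]
    best = max(candidates, key=lambda s: s.edge)
    return best if best.edge >= min_edge else None
```

For example, `scan(100_050, 100_000, 60, 1e-4, up_ask=0.60, down_ask=0.42)` models Up at roughly 74% with a minute left, so it flags UP with about 0.14 of edge. The volatility input drives the whole estimate, and the speed race described above is about running this check before the odds catch up.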
Hope you get my point. Don't waste your time. See you on the "Top traders" leaderboard.