Omega

🌇 We will be sunsetting the Pay-as-you-go and hold-tier payment methods soon after integrating our credit system and new tokenomics. These payment systems have been in place for several years, but they have proven to be low-retention mechanisms and do not match Aleph Cloud's actual economic model. They are unsustainable for both node operators and the company. We aim to provide a universal payment method for developers, businesses, and individuals, wherever they are and whatever they are building. With the credit system, ALEPH will regain its place as the central element of Aleph Cloud's infrastructure, acting both as the backbone of the network and as the economic instrument for everyone. Combined with stablecoin and card payments, this will create a more sustainable model to drive acquisition and retention.

Running Kimi K2.5 on my desk. It runs at 24 tok/sec on 2 × 512GB M3 Ultra Mac Studios connected via Thunderbolt 5 (RDMA), using the @exolabs / MLX backend. Yes, it can run clawdbot.

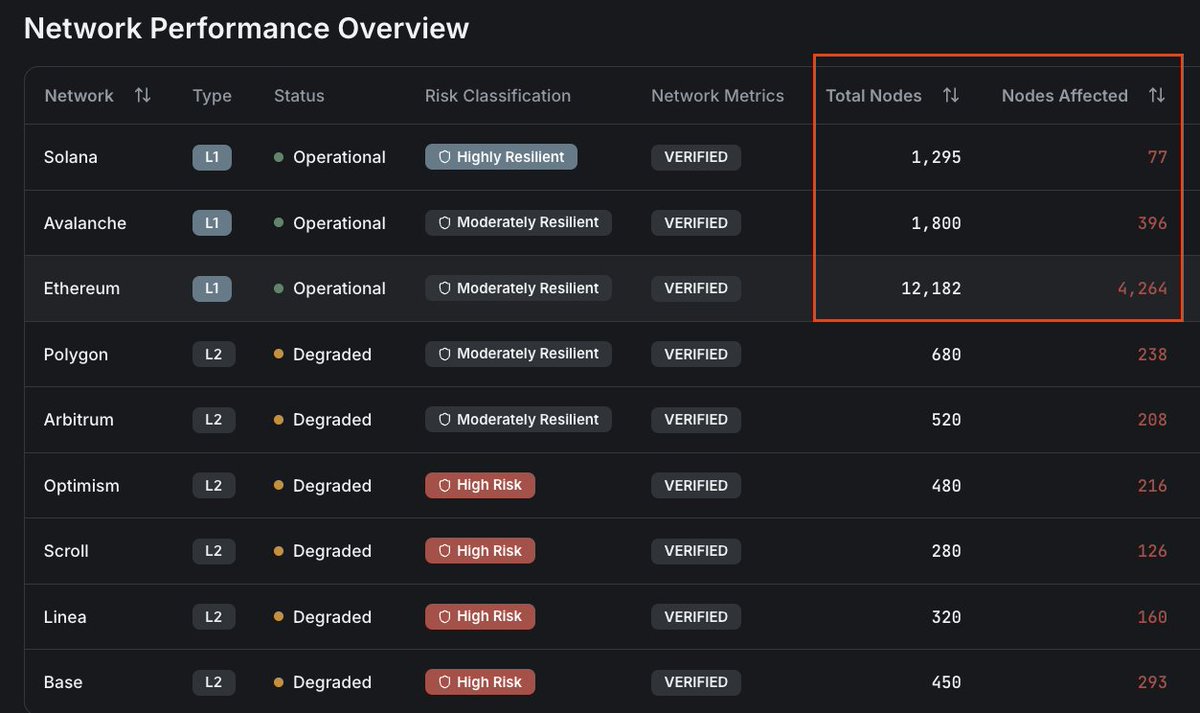

Modern web apps run on a handful of closed clouds, which turns outages, lock-in and opaque infrastructure into systemic risks. At the Open Source Hub at Devconnect ARG, @odesenfans from @aleph_im explains why we need a decentralized cloud and how Aleph Cloud is building one. Full video 👇

Couldn't prove my design came first. Lost the case. Built SBIX-Certify → legal timestamp + blockchain in 3 sec. Launching on @ProductHunt 🙏🚀 producthunt.com/posts/sbix-cer…