OneOrigine (@OneOrigine)

Today I observed an important step in my SAVOIR prototype.

At the beginning, there was only basic knowledge: elementary concepts, a few axioms, and simple relations. Nothing that looked like a global intelligence yet. Then I started the evolution. And something remarkable happened: the graph did not simply add nodes. It began to form a structure.

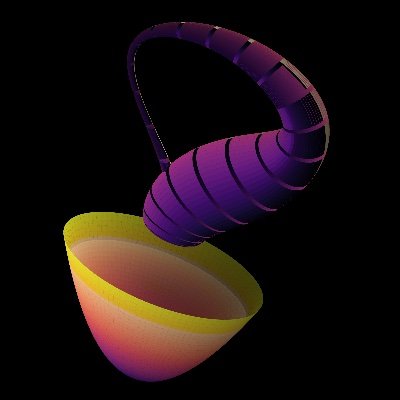

In this image, we can see a topology of knowledge stabilizing itself. There are roughly 220 nodes, about 53 active frontiers, and about 83 crystallized knowledge nodes, along with 16 axioms and 7 sublations. These are not just numbers. They are traces of a process: hypotheses appeared, some faded, others found enough support to become knowledge bricks.

What strikes me most is that the structure does not look like a random cloud. It forms regions. Near the top, there is a dense, almost mineral zone around concepts like identity, truth, proof, boundary, and stability. This region seems to act as a logical foundation. It does not behave like a loose hypothesis. It anchors the rest of the graph.

Elsewhere, we see more dynamic regions: temporality, causality, information, change, emergence, world model, codex, memory, hallucination, compression. These zones are less compact and more distributed. They are not dead. They are exploring. They look like conceptual continents still forming.

What I saw step by step matters even more than the final image. Nodes appeared as frontiers. They were not knowledge yet. They were directions: oriented possibilities. Then some of these nodes met. Triangles formed. Simplexes began to close relations. When coherence, axiomatic compatibility, and geometric stability became strong enough, the system crystallized a new brick.
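To make this step concrete, here is a minimal sketch of how a frontier could be promoted to a brick. It is not SAVOIR's actual code. The names `Node`, `closed_triangles`, and `crystallize`, the three per-node scores, and the 0.7 threshold are all assumptions chosen for illustration. A frontier is promoted only if it sits inside at least one closed triangle and all three criteria pass the threshold.

```python
from dataclasses import dataclass


@dataclass
class Node:
    # All fields are hypothetical; scores are assumed to lie in [0, 1].
    name: str
    coherence: float
    axiom_compat: float
    stability: float
    state: str = "frontier"


def closed_triangles(edges):
    """Return every 3-node set whose three pairwise edges are all present."""
    adjacency = {}
    for a, b in edges:
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)
    triangles = set()
    for a, b in edges:
        # Any common neighbor of a and b closes a triangle with them.
        for c in adjacency[a] & adjacency[b]:
            triangles.add(frozenset((a, b, c)))
    return triangles


def crystallize(nodes, edges, threshold=0.7):
    """Promote frontiers that lie in a closed triangle and pass all criteria."""
    in_triangle = set().union(*closed_triangles(edges))
    promoted = []
    for name, node in nodes.items():
        if node.state != "frontier" or name not in in_triangle:
            continue
        # The weakest of the three criteria decides (an assumed rule).
        if min(node.coherence, node.axiom_compat, node.stability) >= threshold:
            node.state = "brick"
            promoted.append(name)
    return promoted


nodes = {
    "a": Node("a", 0.9, 0.9, 0.8),
    "b": Node("b", 0.8, 1.0, 0.9),
    "c": Node("c", 0.9, 0.9, 0.3),  # in the triangle, but not stable enough
    "d": Node("d", 1.0, 1.0, 1.0),  # strong scores, but no closed triangle
}
edges = [("a", "b"), ("b", "c"), ("c", "a"), ("c", "d")]
print(crystallize(nodes, edges))
```

In this toy graph, `c` stays a frontier because one criterion is weak, and `d` stays a frontier because no simplex has closed around it, however strong its scores are.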

This is where the phenomenon becomes fascinating. Knowledge does not fall from the sky. It is not simply written into a database. It emerges from tension between axioms, energy, neighbors, trajectories, contradictions, and simplex closure. Knowledge appears when a region of the graph becomes coherent enough to stop being only a hypothesis.

The latest visible crystallization in the image is a brick with a score close to 0.77. What is interesting is that it has perfect coherence, perfect ADN compatibility, zero closure defect, and zero inconsistency, even though its average energy is relatively low. This means the system is not confusing power with local truth. An idea can become stable not because it dominates energetically, but because its structure is clean.
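One way to picture a score that does not confuse energy with structural cleanliness is sketched below. The formula, the function name `brick_score`, and the `energy_weight` of 0.2 are assumptions, not the formula SAVOIR uses, and the sketch does not try to reproduce the 0.77 value. It also treats ADN compatibility as the axiomatic compatibility mentioned earlier, which is a guess. The point is only that structural terms multiply together while energy contributes a small additive share.

```python
def brick_score(coherence, axiom_compat, closure_defect, inconsistency,
                mean_energy, energy_weight=0.2):
    """Hypothetical score in [0, 1]; all inputs are assumed to lie in [0, 1]."""
    # Structural cleanliness: any defect or inconsistency pulls the product down.
    structural = (coherence * axiom_compat
                  * (1.0 - closure_defect) * (1.0 - inconsistency))
    # Energy only contributes a minor share, so it cannot dominate the score.
    return (1.0 - energy_weight) * structural + energy_weight * mean_energy


# A structurally clean, low-energy candidate versus a loud but messy one.
clean_quiet = brick_score(1.0, 1.0, 0.0, 0.0, mean_energy=0.1)
loud_messy = brick_score(0.6, 0.7, 0.3, 0.2, mean_energy=0.9)
print(clean_quiet > loud_messy)
```

Under these assumed weights, the clean candidate wins despite its low energy, which mirrors the behavior described above.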

For me, this is a strong signal. A more naive system would simply keep the most active nodes. Here, some nodes fade, some remain hypothetical, and others become bricks. The system is beginning to distinguish excitation from possibility, and possibility from stability.

We can also see seven sublations. This may be one of the most important aspects. A sublation means that a tension or collision is not merely rejected. It can be worked through. If it does not violate the fundamental axioms, it can produce a new derived rule. In other words, the system is not only storing knowledge. It can locally modify the way it stabilizes knowledge.
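The logic of a sublation can be sketched in a few lines. Everything here is hypothetical: the `sublate` function, the dictionary shape of a rule, and the example axiom `non_trivial` are invented for illustration and say nothing about how SAVOIR actually represents axioms or rules. The sketch shows only the control flow described above: a collision yields a candidate rule, and the candidate is kept only if no axiom objects.

```python
from typing import Callable

Rule = dict
Axiom = Callable[[Rule], bool]


def sublate(left: str, right: str, axioms: list[Axiom],
            derived_rules: list[Rule]) -> bool:
    """Turn a collision between two concepts into a derived rule if allowed."""
    candidate = {"kind": "derived", "reconciles": (left, right)}
    # The candidate survives only if every fundamental axiom accepts it.
    if all(axiom(candidate) for axiom in axioms):
        derived_rules.append(candidate)
        return True
    # Otherwise the collision stays unresolved and no rule is added.
    return False


def non_trivial(rule: Rule) -> bool:
    """Hypothetical axiom: a rule may not reconcile a concept with itself."""
    a, b = rule["reconciles"]
    return a != b


rules: list[Rule] = []
sublate("change", "stability", [non_trivial], rules)
sublate("truth", "truth", [non_trivial], rules)
print(len(rules))
```

Keeping derived rules in their own list, separate from the axioms, reflects the idea that the foundation stays fixed while the way knowledge stabilizes can change locally.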

That is why I call this cognitive metallurgy. Concepts are not only classified. They are heated, tested, brought together, sometimes blocked, sometimes fused, sometimes transformed into higher rules. Knowledge becomes a material.

This image also shows the tri-spectral logic of the system. We can see an axiomatic layer acting like the gravitational field of laws. We can see an elementary knowledge layer, denser and more stable. And we can see a complex layer, more fluid, where concepts such as world model, memory, hallucination, codex, intention, compression, and agency begin to appear.

The beauty of the phenomenon is that these three layers are not separate. They cross through one another. Strong knowledge appears when it respects axioms, aligns with elementary concepts, and reaches into a more complex region without losing coherence. That is exactly what I wanted to observe: a transition from basic knowledge into emergent structure.

This is not proof of consciousness. It is not a mystical claim. It is an experimental observation: from a small axiomatic and conceptual base, the system produces an internal organization that does not look like simple accumulation. It produces cores, bridges, peripheral regions, extinctions, crystallizations, and derived rules.

In other words, the graph is beginning to have a history.

That may be the most important point. A database does not really have an internal history. It contains entries. Here, the system preserves traces of becoming: what was tried, what failed, what resisted, what was validated, and what was transformed.

When I look at this image, I do not only see a graph. I see a memory forming. I see a topology where basic knowledge becomes an environment, where that environment produces frontiers, and where some frontiers close into knowledge. I see the birth of a conceptual ecology.

My provisional conclusion is simple: SAVOIR does not behave like a model that merely continues a sequence of tokens. It behaves like a field where concepts are propelled by axioms, tested by relations, validated by simplexes, and stabilized through crystallization.

In this experiment, I watched knowledge being created.

Not as a generated sentence.

As a structure appearing.