Pinned Tweet

OpenMOSS

39 posts

@Open_MOSS

OpenMOSS is an open research community aimed at building artificial general intelligence. Discord 👇 https://t.co/FLvN5uX8wc

Joined January 2025

29 Following, 264 Followers

OpenMOSS retweeted

Open-source video should be easy to run, adapt, and build into products.

That’s what MOVA is designed for.

MOVA-360p has reached 142K total downloads on Hugging Face, with 88,362 downloads in the last month.

Developers get open weights, inference code, training pipelines, LoRA fine-tuning scripts, Apache-2.0 licensing, Diffusers support, and Safetensors.

Now, with DiffSynth Studio support for MOVA-360p and MOVA-720p, teams can use MOVA across both inference and training workflows.

Hugging Face: huggingface.co/OpenMOSS-Team/…

GitHub: github.com/OpenMOSS/MOVA

DiffSynth Studio: github.com/modelscope/Dif…

Welcome to try MOSS-TTS-Nano!

github.com/OpenMOSS/MOSS-…

huggingface.co/OpenMOSS-Team/…

ModelScope @ModelScope2022

Say hello to MOSS-TTS-Nano 🚀 0.1B multilingual TTS from MOSI.AI and OpenMOSS. Designed for realtime speech generation without a GPU. Runs directly on CPU, keeping the deployment stack simple enough for local demos, web serving, and lightweight product integration. Part of the MOSS-TTS family alongside the 1.7B and 8B flagship models. 🤖 modelscope.cn/models/openmos… 🌍 modelscope.ai/models/openmos… 💻 github.com/OpenMOSS/MOSS-…

(6/6) MOSS-VL is live. Two checkpoints (Base + Instruct), Apache 2.0.

🎮 Try it: fnlp-vision.github.io/MOSS-VL-Demo

🤗 huggingface.co/fnlp-vision

🐙 github.com/fnlp-vision/MO…

🇨🇳 modelscope.cn/organization/f…

📄 Arxiv: soon

From OpenMOSS. Bookmark for later.

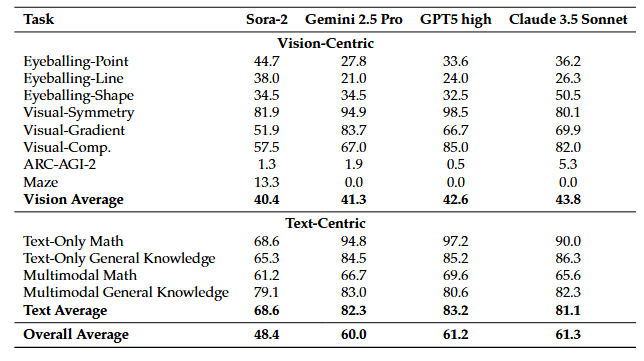

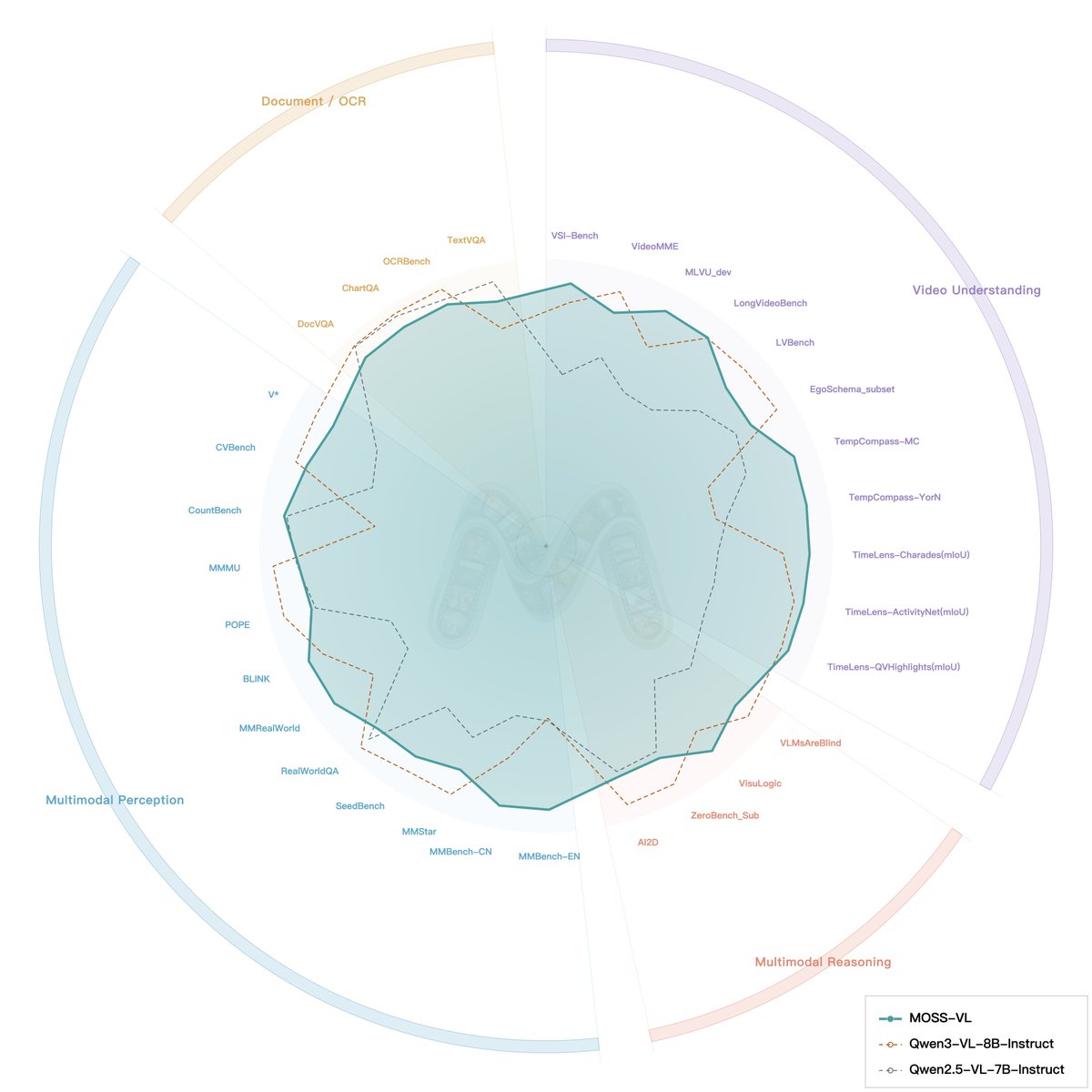

OpenMOSS drops two model series today: MOSS-VL and MOSS-Video-Preview. 🚀

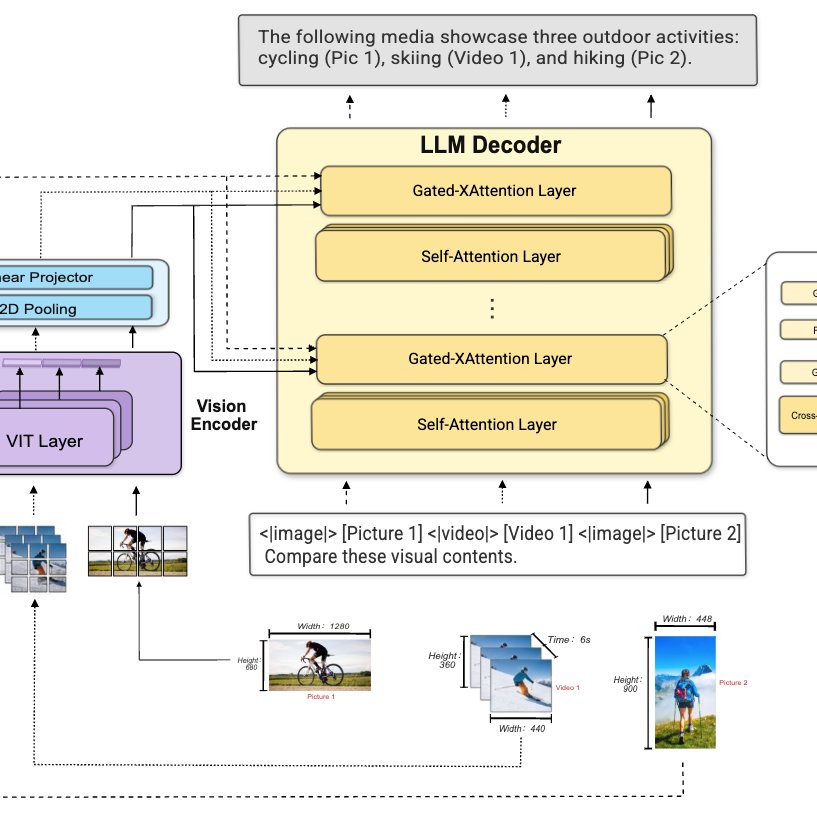

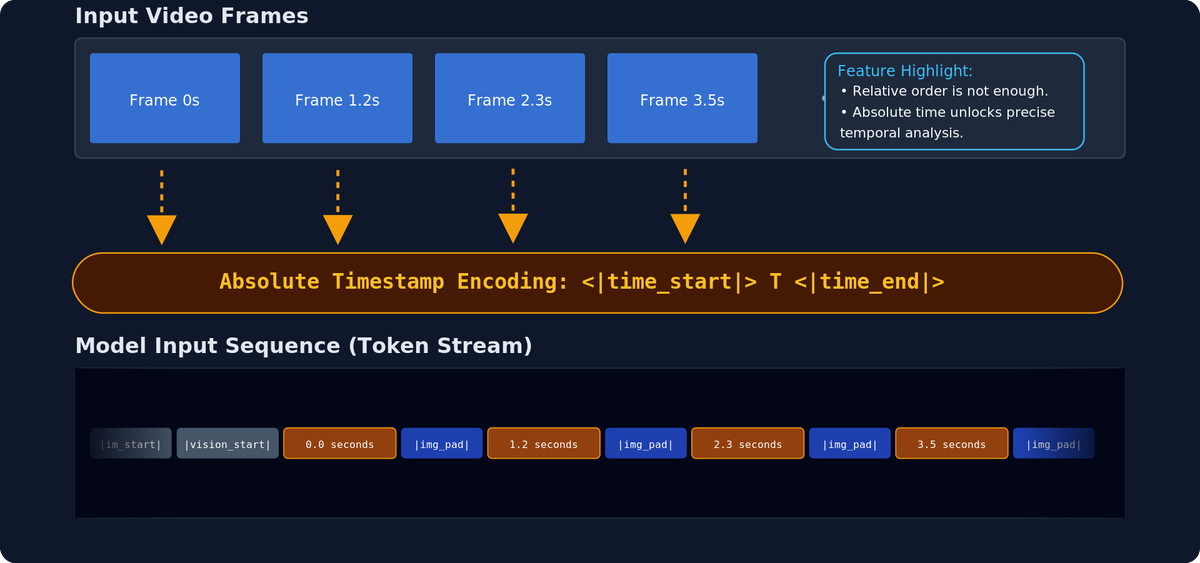

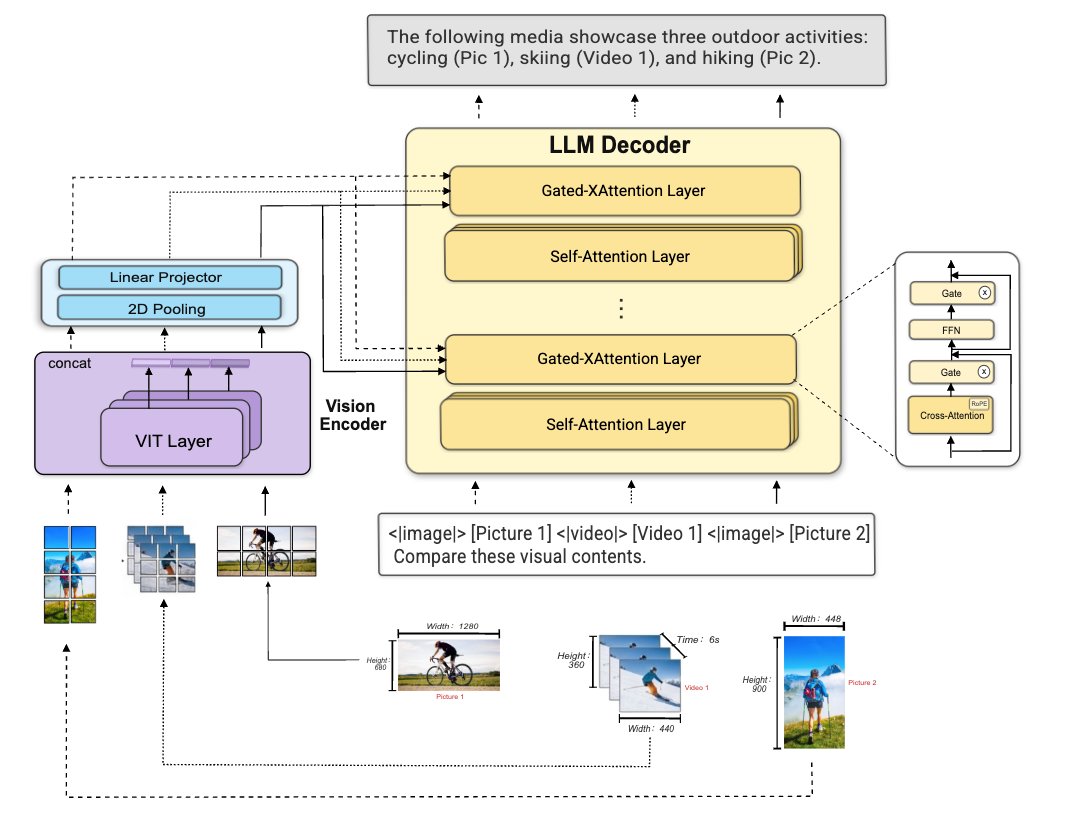

MOSS-VL: offline multimodal engine with cross-attention architecture, XRoPE, and absolute timestamp injection.

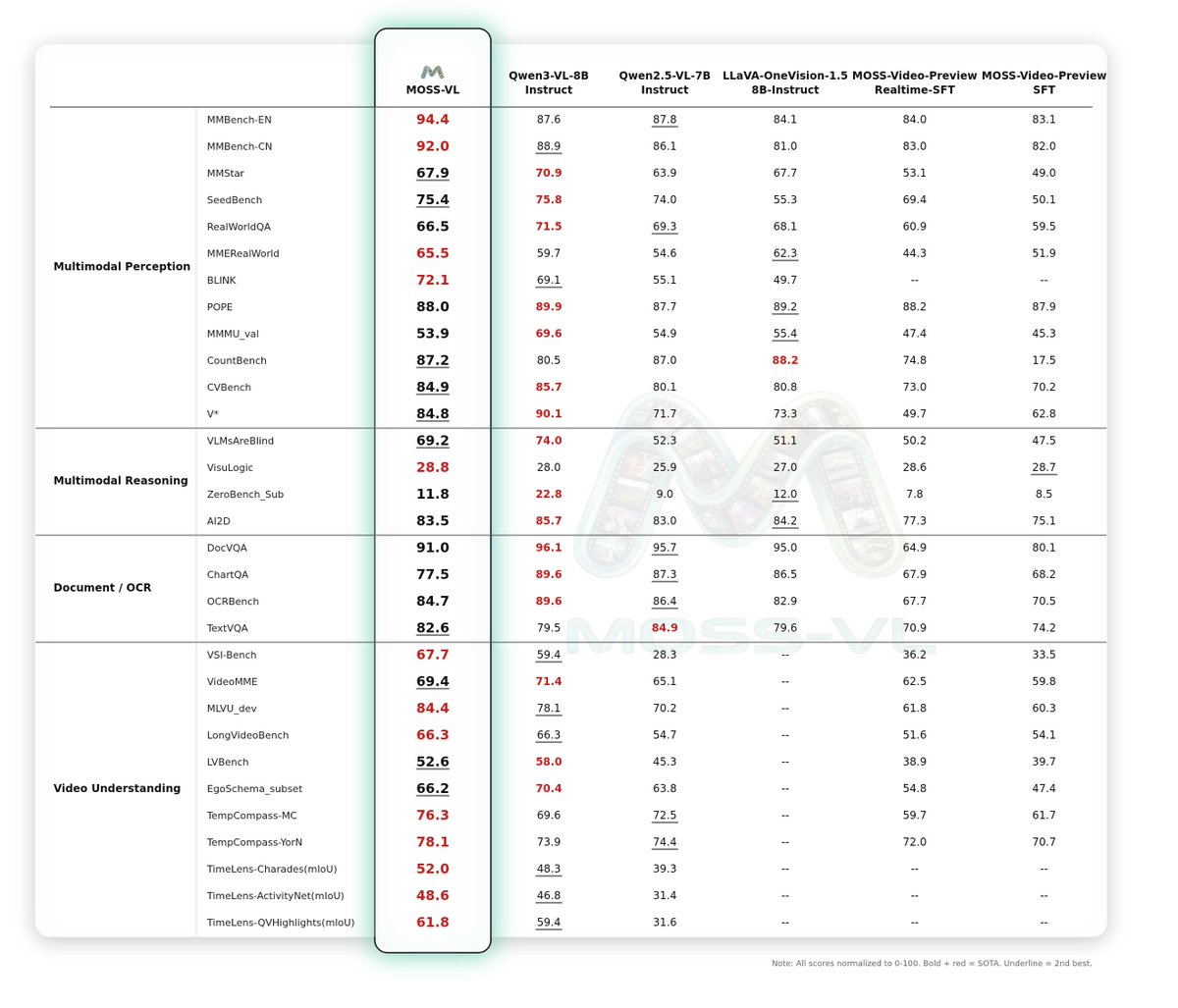

🎬 Video score 65.8, 2 pts ahead of Qwen3-VL. VSI-Bench: +8.3 vs. Qwen3-VL-8B-Instruct.

🖼️ Strong on image understanding, OCR, document parsing, and visual reasoning.

Two checkpoints: Base (pretrain) and Instruct (SFT).

MOSS-Video-Preview: built for real-time streaming video understanding. Cross-attention backbone on Llama-3.2-Vision, native frame-by-frame injection, duplex "listen-speak" switching.

👉 Three checkpoints: Base (pretrain) → SFT (offline instruction) → Realtime-SFT (low-latency streaming, sub-ms TTFT).

🤖 MOSS-VL: modelscope.ai/collections/op…

🤖 MOSS-Video-Preview: modelscope.ai/collections/op…

📄 Paper: arxiv.org/abs/2603.14473

🌐 Project: tongjingqi.github.io/AI-Can-Learn-S…

💻 GitHub: github.com/tongjingqi/AI-…

🤗 HF Models & Data: huggingface.co/collections/Op…

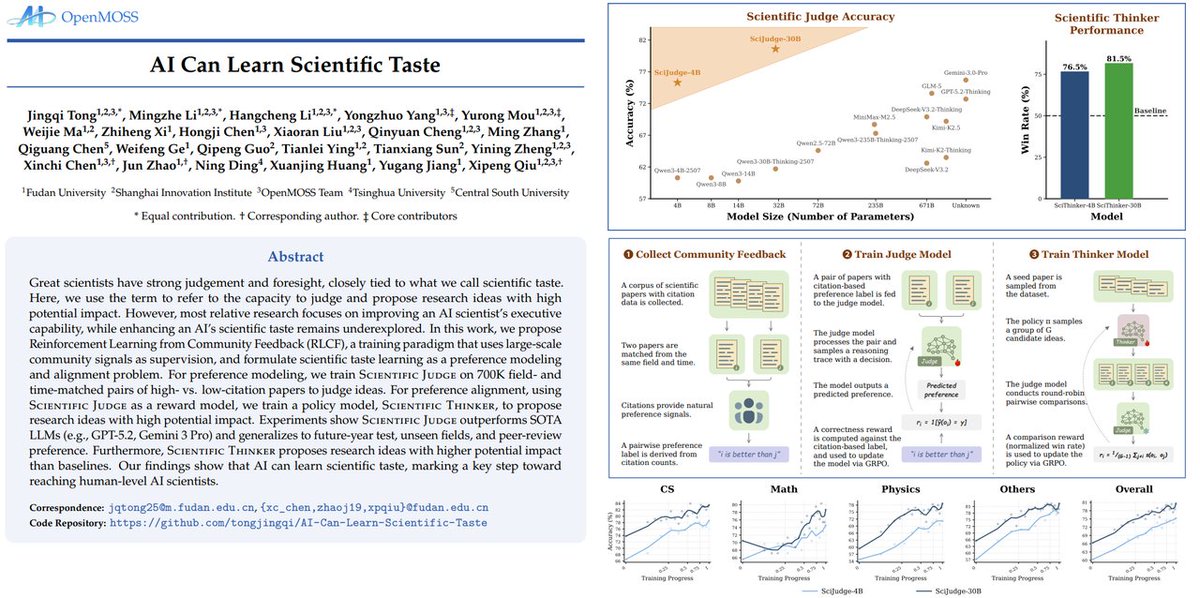

🚨AI can learn scientific taste. 🔬🤖

Great scientists have strong judgement and foresight, closely tied to what we call scientific taste. Here, we use the term to refer to the capacity to judge and propose research ideas with high potential impact.

However, most related research focuses on improving an AI scientist's execution capability, while enhancing an AI's scientific taste remains underexplored. In this work, we propose Reinforcement Learning from Community Feedback (RLCF), a training paradigm that uses large-scale community signals as supervision, and formulate scientific taste learning as a preference modeling and alignment problem.

For preference modeling, we train Scientific Judge on 700K field- and time-matched pairs of high- vs. low-citation papers to judge ideas.

For preference alignment, using Scientific Judge as a reward model, we train a policy model, Scientific Thinker, to propose research ideas with high potential impact.

Experiments show Scientific Judge outperforms SOTA LLMs (e.g., GPT-5.2, Gemini 3 Pro) and generalizes to future-year test sets, unseen fields, and peer-review preferences. Furthermore, Scientific Thinker proposes research ideas with higher potential impact than baselines. Our findings show that AI can learn scientific taste, marking a key step toward human-level AI scientists.

We are no longer just building AI that automates the execution of science. We are building AI that can automate the direction of science. Scientific taste is no longer a human monopoly. We have open-sourced everything. Come build the future of AI scientists with us!

#AutoResearch #AI #Agent #VibeResearch
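
A minimal sketch of the preference-modeling step described above, assuming a Bradley-Terry pairwise objective of the kind commonly used for reward models. The post does not say which loss Scientific Judge is actually trained with, and the function names and scalar scores below are hypothetical, chosen only for illustration.

```python
import math


def pairwise_preference_loss(score_high: float, score_low: float) -> float:
    """Bradley-Terry negative log-likelihood for one paper pair.

    score_high / score_low are hypothetical scalar scores a judge model
    assigns to the high-citation and low-citation paper of a matched pair.
    The loss is -log(sigmoid(score_high - score_low)).
    """
    diff = score_high - score_low
    # Numerically stable form of log(1 + exp(-diff)).
    if diff >= 0:
        return math.log1p(math.exp(-diff))
    return -diff + math.log1p(math.exp(diff))


def preference_accuracy(pairs: list[tuple[float, float]]) -> float:
    """Fraction of (score_high, score_low) pairs the judge ranks correctly."""
    correct = sum(1 for high, low in pairs if high > low)
    return correct / len(pairs)
```

Under this assumption, a judge that scores the high-citation paper above its matched low-citation counterpart gets a small loss, and one that ranks the pair the wrong way round gets a large one. The same scalar score could then serve as the reward signal when training a policy model, which is the role the post assigns to Scientific Judge.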

@m4zas24 Hi, you can try here: studio.mosi.cn

Event tags are on the way.

@Open_MOSS @Open_Moss any chance you guys will release a web version similar to elevenlabs also please add emotions [laughs] [sighs] etc

🚀 The MOSS-TTS Family is here.

From zero-shot cloning to real-time VoiceAgents, we have released our most powerful suite of audio models yet.

The Lineup:

MOSS-TTS Flagship: The industry's best zero-shot voice cloning. Features precise control over duration & Pinyin, capable of generating 1 hour of speech.

MOSS-TTSD-v1.0: A new standard for dialogue generation. Comprehensive optimization for conversational scenes and less widely spoken languages. Best-in-class performance in all evaluations.

MOSS-VoiceGenerator: One-shot timbre generation. Create voices with a single sentence and complex instruction handling.

MOSS-TTS-Realtime: Built for the next era of VoiceAgents. Synthesis starts after just 2 characters of input for instant response.

MOSS-SoundEffect: Text-to-Audio sound effects to expand your creative toolkit.

🔥 Try it now: studio.mosi.cn/voice-synthesis

💻 Deploy (GitHub): github.com/OpenMOSS/MOSS-…

🔌 API Docs: studio.mosi.cn/docs/moss-tts

Welcome to our demo. The era of 'childhood' for TTS is over.

#MOSS #AI #TextToSpeech #TTS #OpenClaw #Agent #OpenMOSS #Opensource #VoiceAgent

MOVA is here: github.com/OpenMOSS/MOVA

青龍聖者 @bdsqlsz

MOVA: synchronized video and audio, based on Wan 2.2 I2V A14B

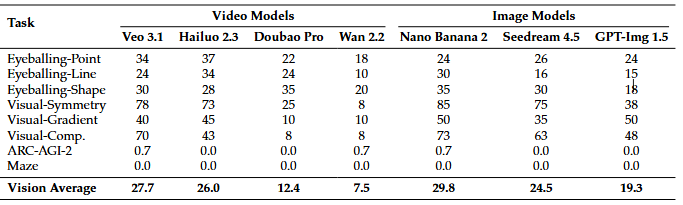

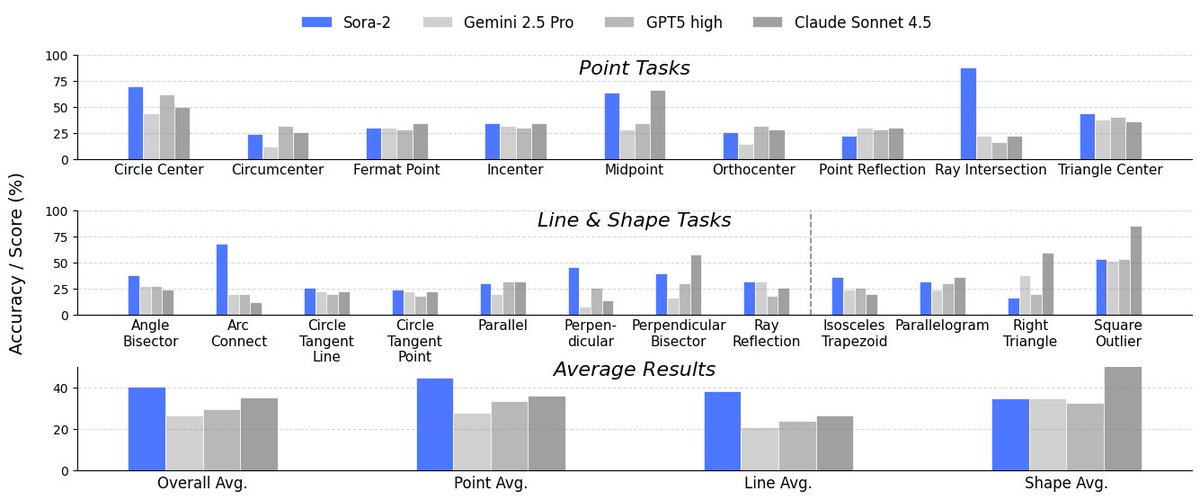

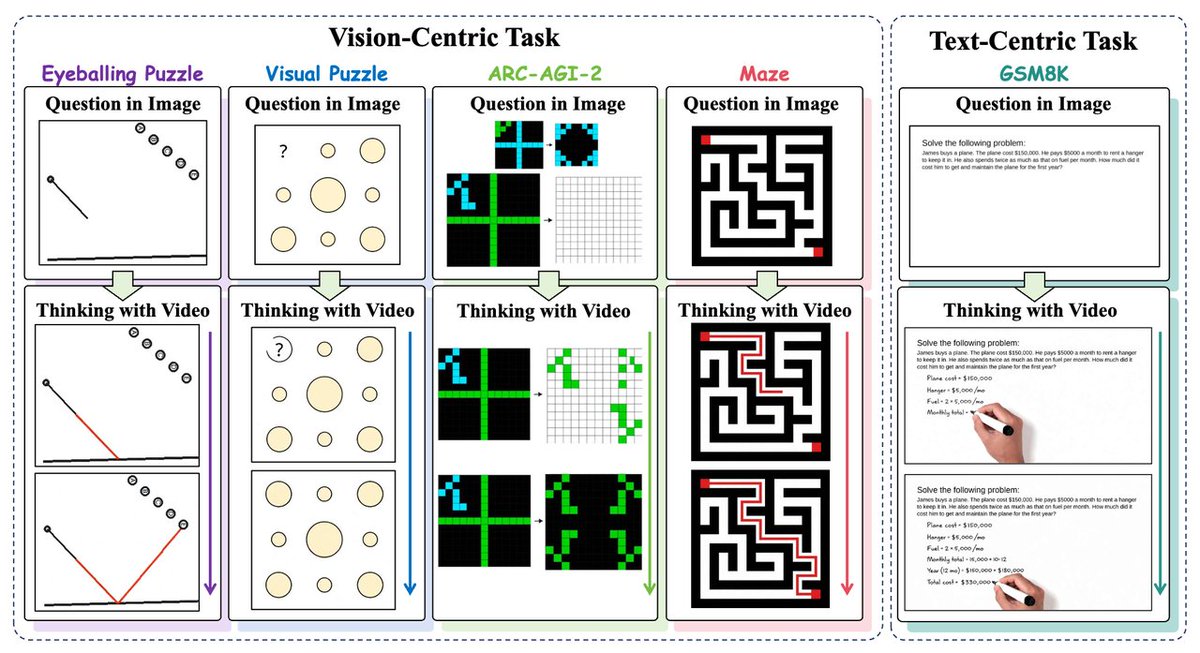

CVPR2026 🎉 Thinking with Video: Video Generation as a Promising Multimodal Reasoning Paradigm

🌟We use video frames as a unified medium for text and vision reasoning. 🤯

🔥Video model (Sora-2) beats GPT-5 by 10% on Eyeballing Puzzles!

🧵arxiv.org/abs/2511.04570

(1/6)

#CVPR2026 #seedance2 #Multimodal #VideoGeneration #Sora2 #Reasoning #LLM #AI

We introduce VideoThinkBench to test this.

On "Eyeballing Puzzles", Sora-2 reasons by simulating light reflection and manipulating geometry.

Result? It outperforms SOTA VLMs and scores 10% higher than GPT-5! 📈🧩

All code and data are open-sourced: github.com/tongjingqi/Thi…

(3/6)

Current LLM/VLM paradigms ("Thinking with Text/Images") have limits: static images lack dynamics, and split modalities hinder understanding.

Our fix: Thinking with Video. Video frames as a unified medium to draw/write reasoning steps! ✍️🎥

Project: thinking-with-video.github.io

(2/6)
