Orizuru retweeted

Orizuru

418 posts

Orizuru retweeted

Congratulations on an incredible, undefeated #FreestyleChess tournament in South Africa, @LevAronian 🔥

Orizuru retweeted

nanoGPT - the first LLM to train and inference in space 🥹. It begins.

Adi Oltean@AdiOltean

We have just used the @Nvidia H100 onboard Starcloud-1 to train the first LLM in space! We trained the nano-GPT model from Andrej @Karpathy on the complete works of Shakespeare and successfully ran inference on it. We have also run inference on a preloaded Gemma model, and we plan to try more exciting models in the future. Getting the first H100 to work in space required a lot of innovation and hard work from the incredible Starcloud team to make this breakthrough. This is a significant first step toward moving almost all computing off Earth to reduce the burden on our energy supplies and take advantage of abundant solar energy in space! 🚀

Orizuru retweeted

As a child, Hannah Cairo learned math by taking online lessons from Khan Academy. By the time she was 14, she had taught herself the equivalent of an advanced undergraduate math degree.

quantamagazine.org/at-17-hannah-c…

FINAL MOMENTS of WORLD #1 MAGNUS CARLSEN vs. WORLD CHAMPION GUKESH youtu.be/MsFsA6Gv9GY?si… via @YouTube

Orizuru retweeted

What could Alphafold 4 look like? (Sergey Ovchinnikov, Ep #3) owlposting.com/p/what-could-a…

Orizuru retweeted

ProGen3: scaling protein language model data and parameters improves the quality of generations, especially further away from natural sequences.

@AadyotB @jeffruffolo @thisismadani

Orizuru retweeted

60 years ago this month, the Fast Fourier Transform was created, a powerful tool for image compression & data analysis.

Watch a classic MIT breakdown of FFT, perhaps the most-taught algorithm at the Institute: bit.ly/4cNMbPm

via @MITOCW

Orizuru retweeted

First draft online version of The RLHF Book is DONE. Recently I've been creating the advanced discussion chapters on everything from Constitutional AI to evaluation and character training, but I also sneak in consistent improvements to the RL specific chapter.

rlhfbook.com

RLHF has a long future ahead of it and this will do a lot to make it more accessible to the next generation.

What's next: Getting a physical copy in your hands (may not be exactly 1to1, we'll see) and minor fixes at a slower cadence (thanks to many github contributors, some of you will get a copy from me).

Here are all the chapters.

1. Introduction: Overview of RLHF and what this book provides.

2. Seminal (Recent) Works: Key models and papers in the history of RLHF techniques.

3. Definitions: Mathematical definitions for RL, language modeling, and other ML techniques leveraged in this book.

4. RLHF Training Overview: How the training objective for RLHF is designed and basics of understanding it.

5. What are preferences?: Why human preference data is needed to fuel and understand RLHF.

6. Preference Data: How preference data is collected for RLHF.

7. Reward Modeling: Training reward models from preference data that act as an optimization target for RL training (or for use in data filtering).

8. Regularization: Tools to constrain these optimization tools to effective regions of the parameter space.

9. Instruction Tuning: Adapting language models to the question-answer format.

10. Rejection Sampling: A basic technique for using a reward model with instruction tuning to align models.

11. Policy Gradients: The core RL techniques used to optimize reward models (and other signals) throughout RLHF.

12. Direct Alignment Algorithms: Algorithms that optimize the RLHF objective directly from pairwise preference data rather than learning a reward model first.

13. Constitutional AI and AI Feedback: How AI feedback data and specific models designed to simulate human preference ratings work.

14. Reasoning and Reinforcement Finetuning: The role of new RL training methods for inference-time scaling with respect to post-training and RLHF.

15. Synthetic Data: The shift away from human to synthetic data and how distilling from other models is used.

16. Evaluation: The ever-evolving role of evaluation (and prompting) in language models.

17. Over-optimization: Qualitative observations of why RLHF goes wrong and why over-optimization is inevitable with a soft optimization target in reward models.

18. Style and Information: How RLHF is often underestimated in its role in improving the user experience of models due to the crucial role that style plays in information sharing.

19. Product, UX, Character: How RLHF is shifting in its applicability as major AI laboratories use it to subtly match their models to their products.

Orizuru retweeted

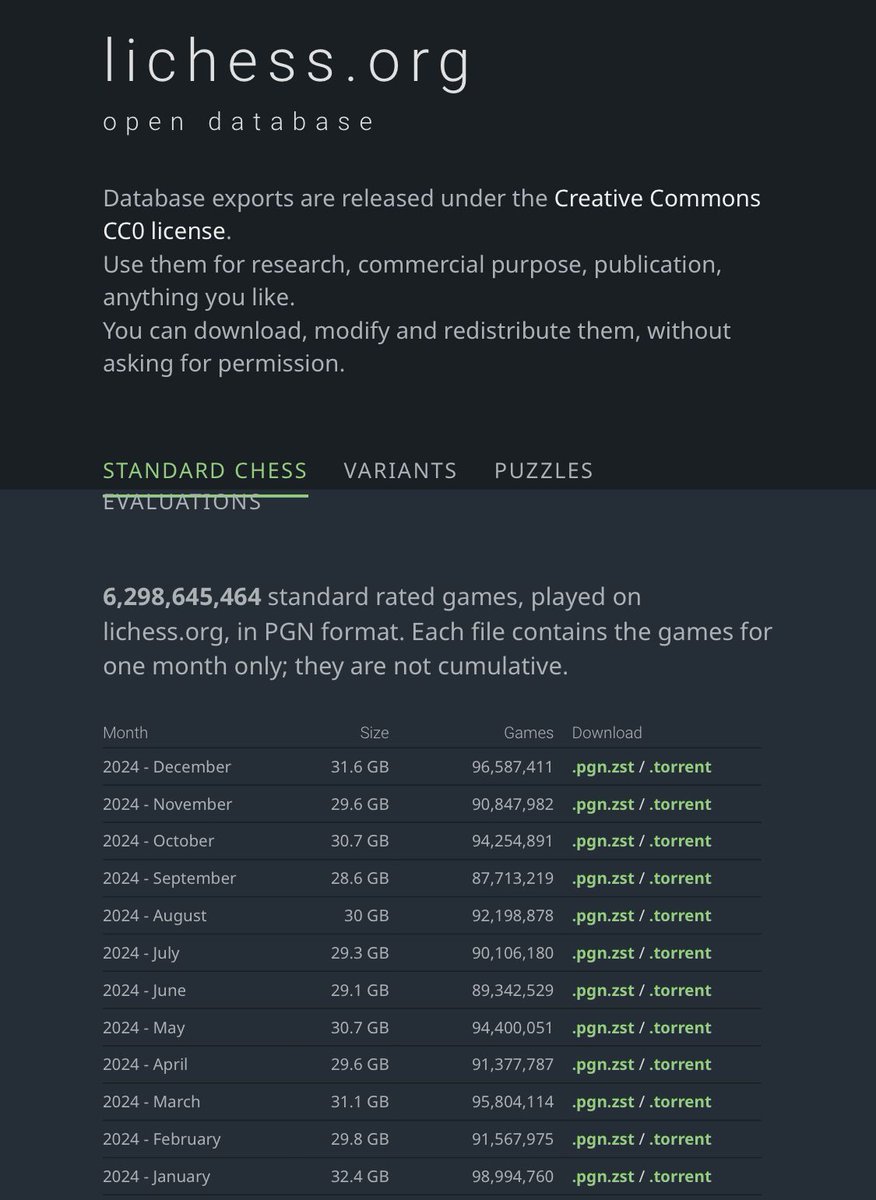

Our December 2024 free and open databases database.lichess.org are now available for download, bringing the total number of rated games to over 6.2 billion in standard chess and 125 million across variants!

I only have so many tokens left for my patience youtu.be/omYP8IUXQTs?si… via @YouTube

Orizuru retweeted

What is a chess piece, mathematically?

I posted an answer to this question some years back on MathOverflow at mathoverflow.net/a/287927/1946.
