PaddlePaddle

814 posts

@PaddlePaddle

The first independent R&D and Open-Source deep learning platform in China. Powering the ERNIE model family.

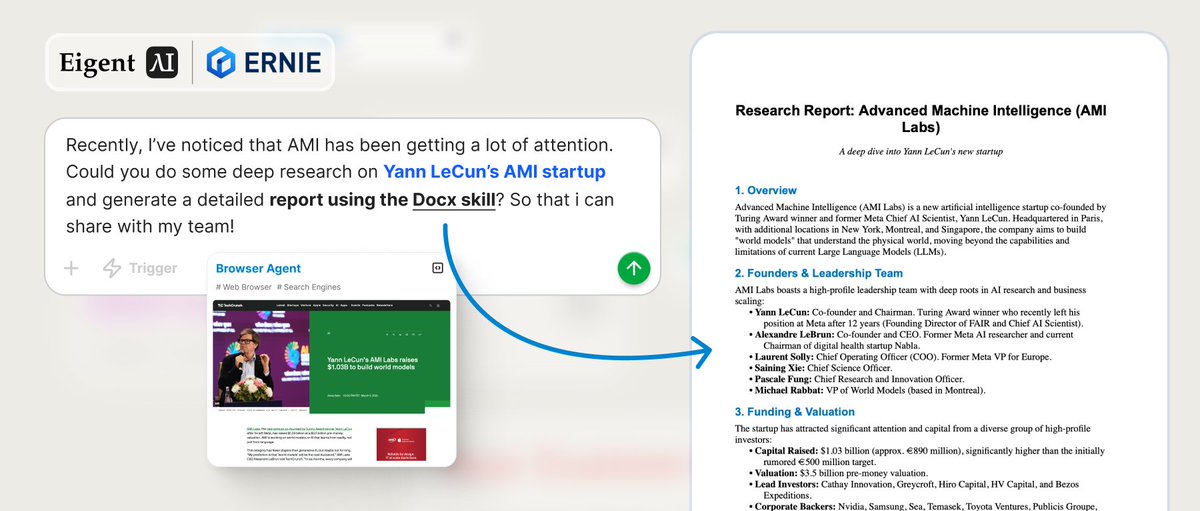

As our @openclaw lineup continues to grow, it now covers multiple products and an expanding set of ClawHub skills — helping support more real-world tasks across desktop, mobile, browser, home, search, productivity, and content workflows!

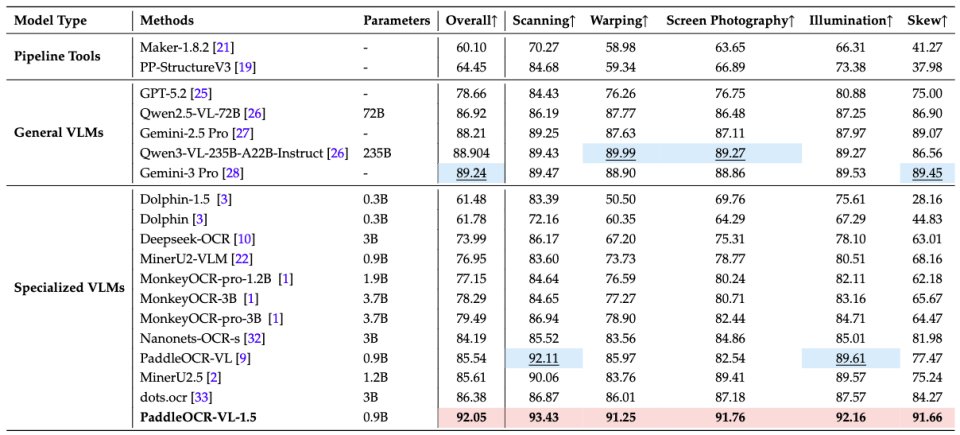

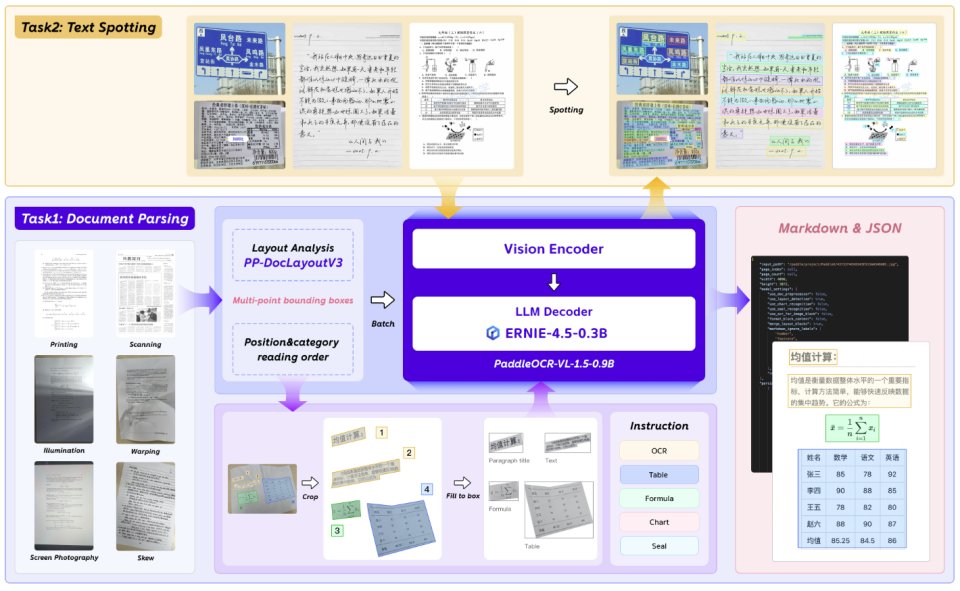

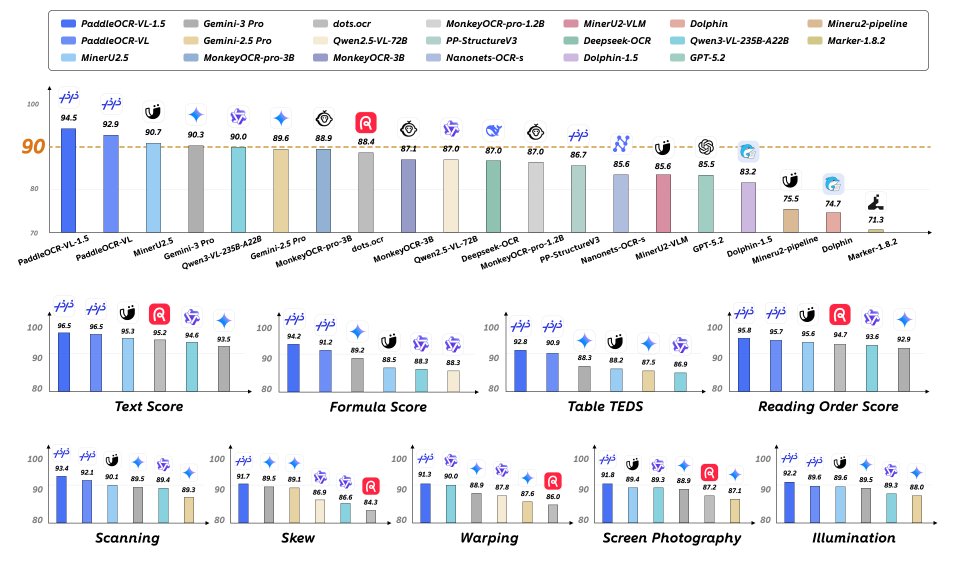

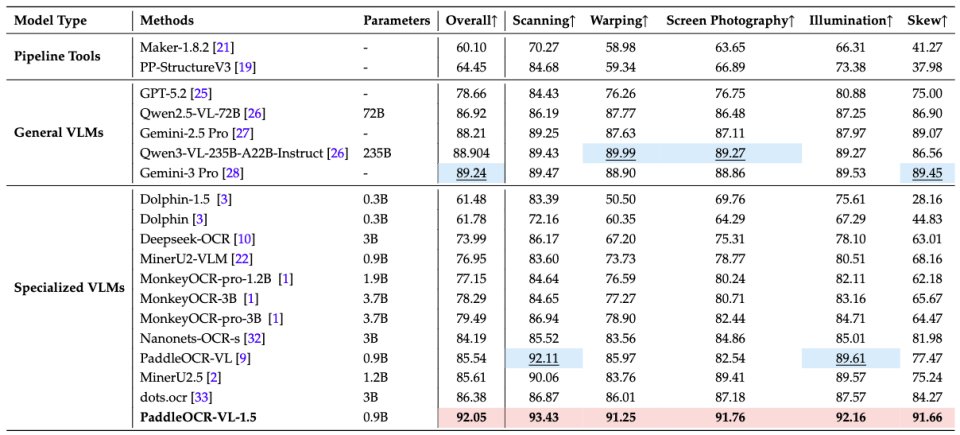

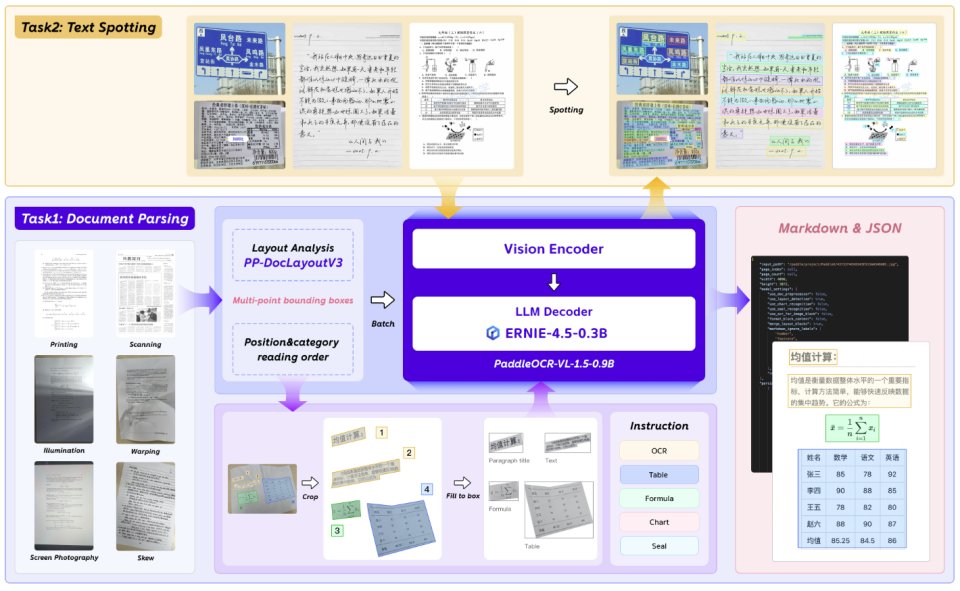

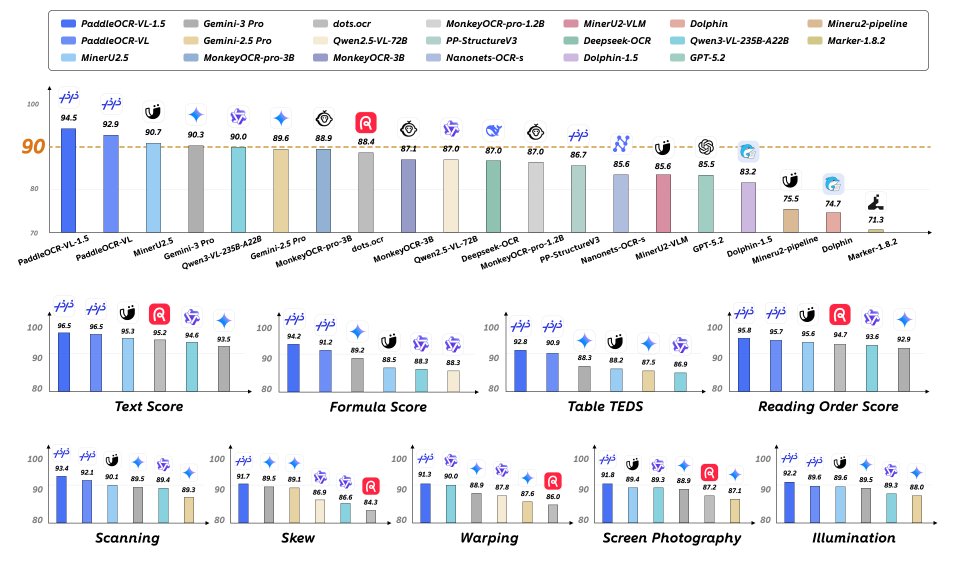

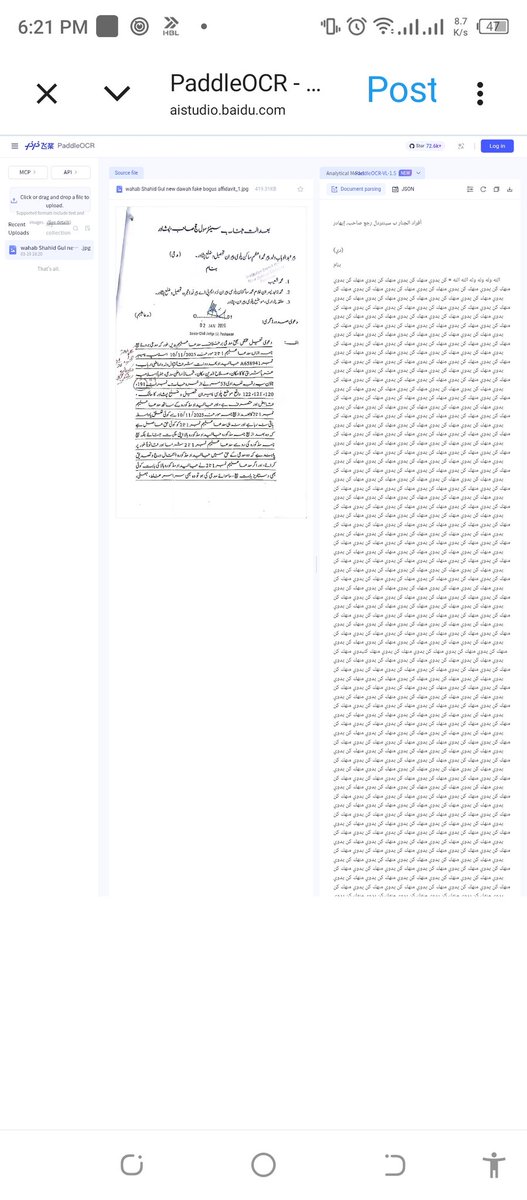

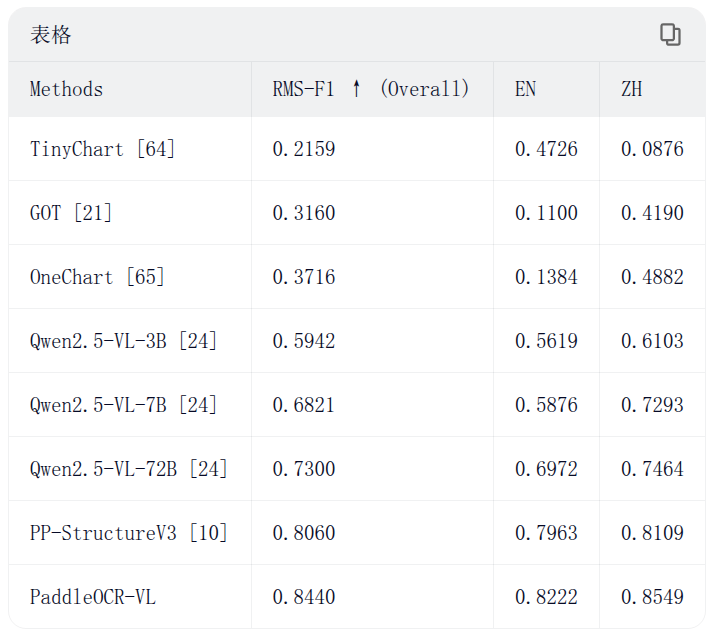

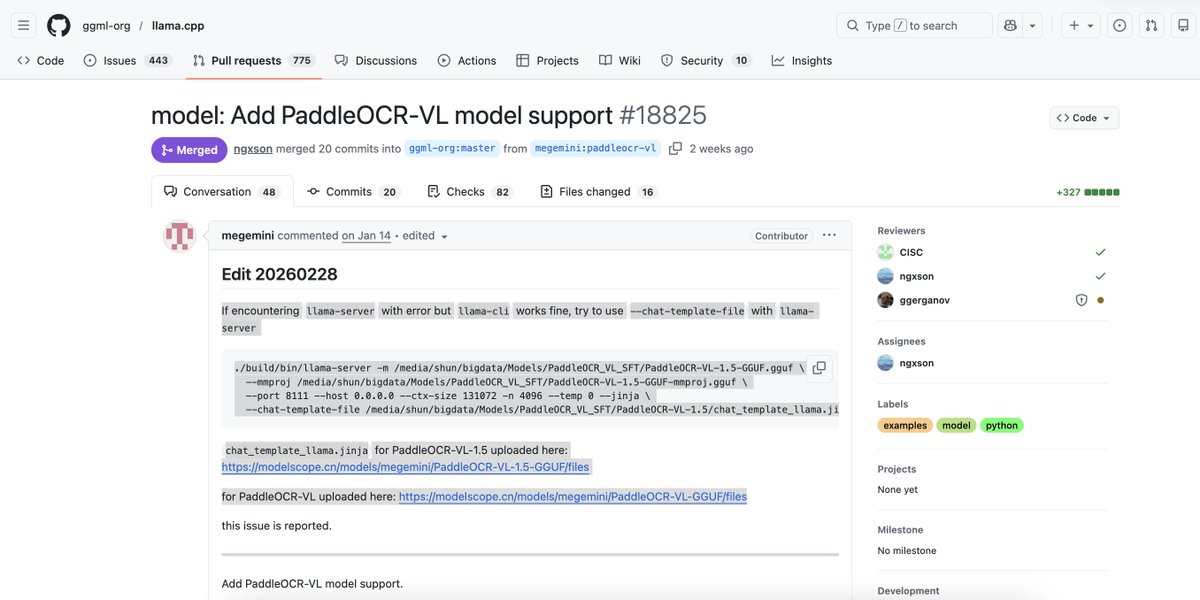

📃 @PaddlePaddle released a new VLM: PaddleOCR-VL-1.5 (0.9B) and you can already use it in your document-heavy Haystack pipelines.

PaddleOCR-VL-1.5 goes beyond classic OCR: it understands document layout and structure, extracting tables, formulas, charts, and key elements from messy real-world PDFs and images. This makes it a powerful building block for reliable RAG pipelines and document-centric AI applications. For teams building AI systems for reasoning over complex documents, this enables more accurate retrieval, grounding, and reasoning across document structure.

🔍 Why it’s exciting:

- 94.5% accuracy on OmniDocBench v1.5
- Irregular-shaped localization for real-world documents (skew, warp, photos)
- Strong improvements in table, formula, and text spotting
- Multilingual support, including rare scripts and complex layouts

🔗 Model: huggingface.co/PaddlePaddle/P…

🔗 Docs: haystack.deepset.ai/integrations/p…

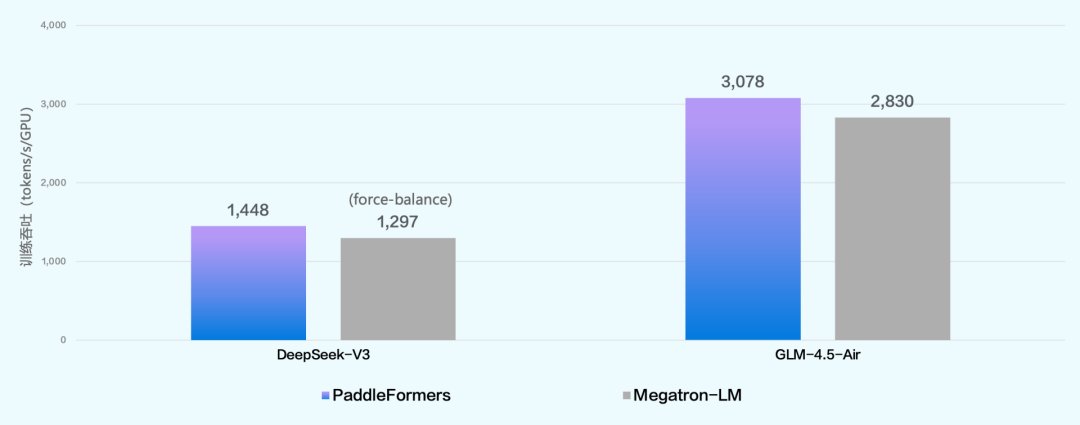

📘 The ERNIE 5.0 technical report is out! Inside, we unpack how our model was built, covering architecture, pre-training, post-training, and infrastructure, such as:

- ultra-sparse MoE architecture with modality-agnostic expert routing
- unified multimodal training from scratch to avoid the "ability seesaw"
- a novel elastic training paradigm for efficient scaling

Check out the thread for the full report ↓

A bit late due to the flu 😅 but still very worth sharing: China open source highlights for January 2026 🔥 huggingface.co/collections/zh…

✨ Qwen released 4 new series, completing key agent primitives (I/O, memory, alignment):

- Qwen3-TTS
- Qwen3-ASR
- Qwen3-VL-Reranker
- Qwen3-VL-Embedding

✨ Ant Group is clearly moving into robotics & embodied AI, releasing the LingBot series:

- LingBot-VA
- LingBot-World
- LingBot-VLA
- LingBot-Depth

✨ Strong OCR models continue to stand out:

- DeepSeek-OCR-2
- PaddleOCR-VL-1.5 (Baidu)

✨ 100% trained on local chips:

- GLM-Image from Z.ai
- TeleChat-36B-Thinking

✨ Vision + multimodality is becoming clearly product-oriented:

- Unipic3 / SkyReels-V3-R2V from Skywork
- UniVideo from Kling
- Step3-VL from StepFun
- GLM-Image
- Z-Image from Tongyi / Alibaba
- HunyuanImage-3.0 from Tencent

✨ Small but practical models:

- Youtu (2B) / WeDLM (8B) from Tencent
- AgentCPM from OpenBMB

✨ Large-scale LLMs:

- Kimi-K2.5 (171B, Moonshot)
- LongCat-Flash-Thinking (562B, Meituan)

January already brought many exciting surprises, looking forward to what February will bring 👀