Paul Atreides

11.6K posts

@PaulAtretre

Poker Pro, aka: 'Slick Willy Omaha', aka: 'River Killer of Omaha', aka: 'Head Hunter of Omaha' "I can kill you with a word"

Photonics stocks are going parabolic:
• $LITE +1621%
• $AAOI +1434%
• $COHR +529%
• $CRDO +377%
• $VIAV +353%
• $FN +281%
• $MTSI +189%
• $MRVL +186%
• $IPGP +136%
• $POET +127%

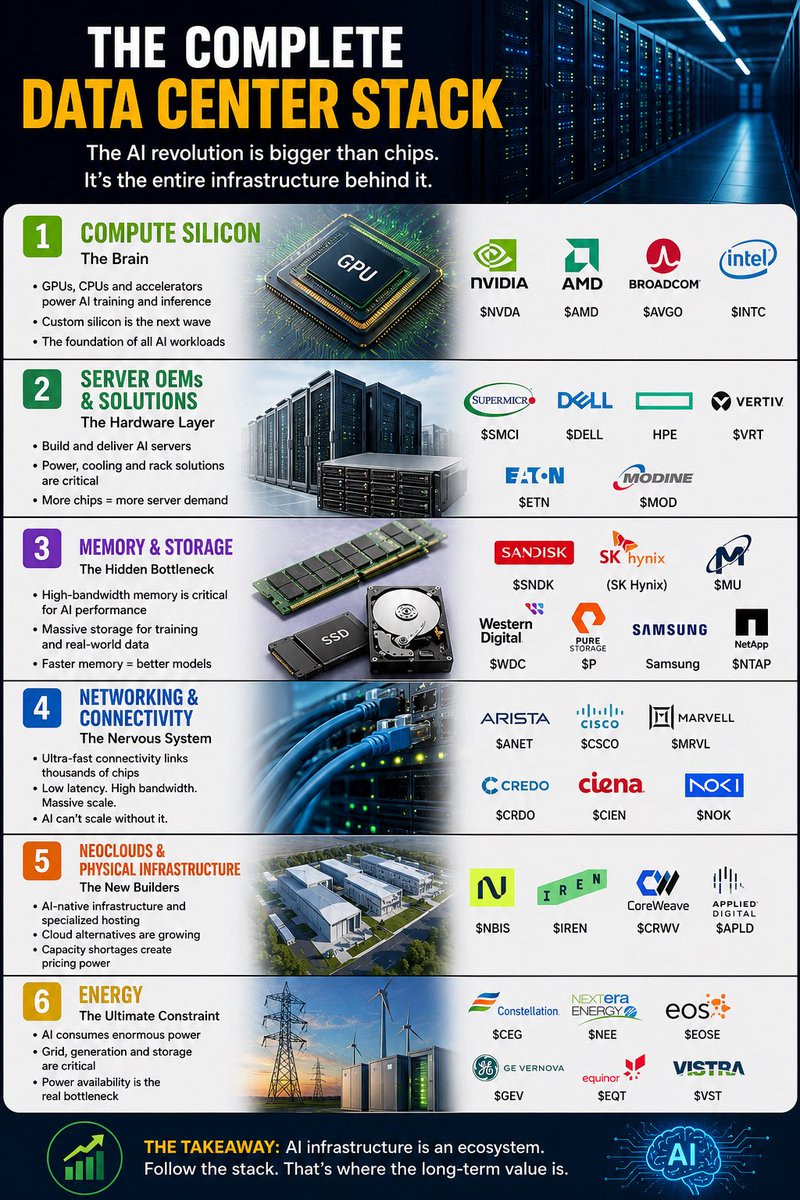

Top high growth stocks 📝

Foundry / Fab
$TSM - Sole Rubin chip foundry

Optical / Networking
$LITE - NVIDIA’s optical partner
$COHR - NVIDIA $2B investment
$ALAB - CXL interconnect winner
$AAOI - Datacenter transceiver ramp
$AXTI - 40% InP substrate share
$ANET - AI data center switching
$CIEN - Coherent optical networking
$CRDO - High-speed interconnect
$APH - DC interconnect market

Data Center Infrastructure
$DELL - AI server king
$NBIS - NVIDIA-backed neocloud
$CRWV - Hyperscale AI colocation
$CDNS - Digital twin simulation
$ORCL - AI infrastructure juggernaut
$EME - Mega-campus EPC contractor

Power / Cooling
$VRT - NVIDIA co-engineered cooling
$AGX - Power EPC contractor
$POWL - Switchgear data centers
$EME - Critical electrical systems

Chips & Silicon
$NVDA - Owns entire stack
$MRVL - Custom AI ASICs
$AVGO - AI revenue doubled
$AMD - MI450/Helios H2 ramp

Memory
$MU - Only US HBM3e/4
$SNDK - NAND supercycle
$CAMK - HBM4 inspection
$EWY - SK Hynix and Samsung rep

Semi Equipment
$ICHR - Fluid delivery subsystems for semiconductor capex equipment
$FORM - HBM4 probe card standard; MEMS microsprings industry benchmark for vertical stack testing

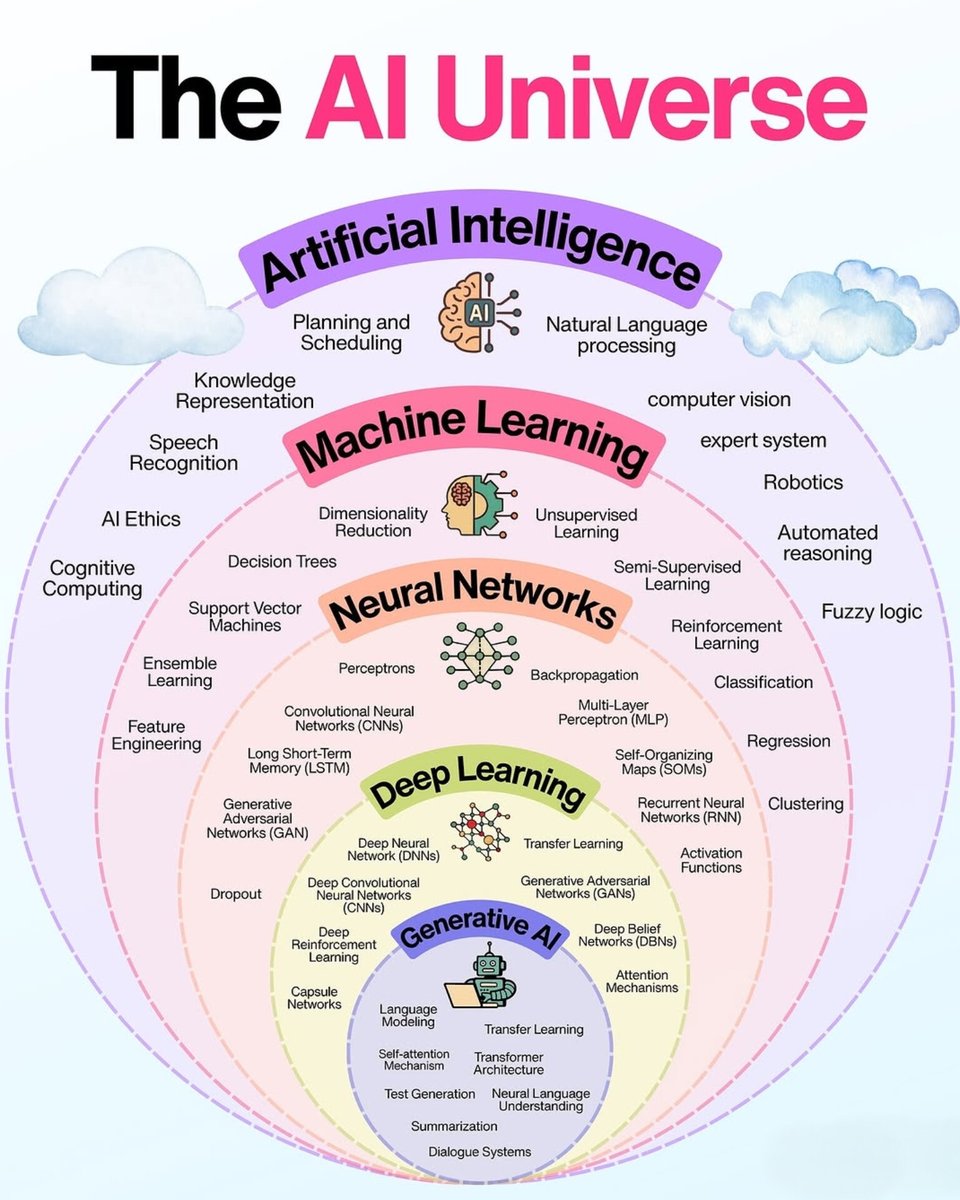

How do we make LLMs faster and lighter? Don’t force the GPU to adapt to sparsity. Reshape the sparsity to fit the GPU! ⚡️

Excited to share our new #ICML2026 paper in collaboration with @NVIDIA: "Sparser, Faster, Lighter Transformer Language Models". This work introduces new open-source GPU kernels and data formats for faster inference and training of sparse transformer language models:

Paper: arxiv.org/abs/2603.23198
Blog: pub.sakana.ai/sparser-faster…
Code: github.com/SakanaAI/spars…

While LLMs are undoubtedly powerful, they are increasingly expensive to train and deploy, with a large part of this cost coming from their feedforward layers. Yet, an interesting phenomenon occurs inside these layers: For any given token, only a small fraction of the hidden activations actually matter. The rest approximate zero, wasting computation. With ReLU and very mild L1 regularization, this sparsity can exceed 95% with little to no impact on downstream performance.

So, can we leverage this sparsity to make LLMs faster? The challenge is hardware. Modern GPUs are optimized for dense matrix multiplications. Traditional sparse formats introduce irregular memory access and overheads that cancel out their theoretical savings for GEMM operations.

Our contribution is twofold:

1/ We introduce TwELL (Tile-wise ELLPACK), a new sparse packing format designed to integrate directly in the same optimized tiled matmul kernels without disrupting execution.

2/ We develop custom CUDA kernels that fuse multiple sparse matmuls to maximize throughput and compress TwELL to a hybrid representation that minimizes activation sizes.

We used our kernels to train and benchmark sparse LLMs at billion-parameter scales, demonstrating >20% speedups and even higher savings in peak memory and energy.

This work will be presented at #ICML2026. Please check out our blog and technical paper for a deep dive!
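
For readers unfamiliar with the name, here is a rough sketch of classic ELLPACK, the textbook format that TwELL's name refers to. It is not TwELL itself and not the paper's kernels: the tile-wise layout and the fused CUDA code are described only in the linked paper and repository. The function names (`relu`, `ell_pack`, `ell_matmul`, `dense_matmul`) and the toy numbers are made up for illustration. The idea shown is that each row keeps only its nonzero activations plus their column indices, padded to a fixed width, so a matmul can skip the zeros.

```python
# Illustrative only: classic ELLPACK in pure Python, not the TwELL format.

def relu(rows):
    """Apply ReLU elementwise; negative pre-activations become exact zeros."""
    return [[v if v > 0.0 else 0.0 for v in row] for row in rows]

def ell_pack(dense):
    """Pack each row's nonzeros into fixed-width value and column arrays.

    Rows with fewer nonzeros than the widest row are padded with
    value 0.0 and column index -1.
    """
    width = max(sum(1 for v in row if v != 0.0) for row in dense)
    values, cols = [], []
    for row in dense:
        nonzeros = [(v, j) for j, v in enumerate(row) if v != 0.0]
        pad = width - len(nonzeros)
        values.append([v for v, _ in nonzeros] + [0.0] * pad)
        cols.append([j for _, j in nonzeros] + [-1] * pad)
    return values, cols

def ell_matmul(values, cols, weight):
    """Multiply the packed activations by a dense weight matrix, skipping padding."""
    n_out = len(weight[0])
    out = []
    for row_vals, row_cols in zip(values, cols):
        acc = [0.0] * n_out
        for v, j in zip(row_vals, row_cols):
            if j < 0:  # padding slot
                continue
            for k in range(n_out):
                acc[k] += v * weight[j][k]
        out.append(acc)
    return out

def dense_matmul(a, b):
    """Reference dense matmul used to check the sparse result."""
    return [[sum(a[i][j] * b[j][k] for j in range(len(b)))
             for k in range(len(b[0]))] for i in range(len(a))]

# Toy example: 3 tokens, 4 hidden units, 2 outputs (hypothetical numbers).
pre_activations = [
    [-1.0, 2.0, -0.5, 0.0],
    [0.5, -3.0, -1.0, 4.0],
    [-2.0, -1.0, -0.5, -0.1],
]
weight = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0], [1.0, -1.0]]

hidden = relu(pre_activations)
values, cols = ell_pack(hidden)
sparse_out = ell_matmul(values, cols, weight)
```

In this toy case only 3 of the 12 hidden activations survive ReLU, so the packed form stores 2 slots per row instead of 4. The padding and per-row index lookups are also a small-scale picture of the overheads the post says traditional sparse formats suffer from on GPUs.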

The SpaceX IPO will ignite a trillion-dollar space race.

𝗦𝗽𝗮𝗰𝗲 𝗜𝗻𝗳𝗿𝗮𝘀𝘁𝗿𝘂𝗰𝘁𝘂𝗿𝗲
$RKLB Rocket Lab
$SIDU Sidus Space
$FLY Firefly Aerospace
$RDW Redwire Space
$LUNR Intuitive Machines
$MDA MDA Space
$VOYG Voyager Space
$YSS York Space Systems
$SPCE Virgin Galactic
$FJET Starfighters Space

𝗦𝗮𝘁𝗲𝗹𝗹𝗶𝘁𝗲 𝗖𝗼𝗺𝗺𝘂𝗻𝗶𝗰𝗮𝘁𝗶𝗼𝗻𝘀
$ASTS AST SpaceMobile
$GSAT Globalstar
$SATS EchoStar
$IRDM Iridium Communications
$ETL Eutelsat
$TSAT Telesat
$GILT Gilat Satellite Networks
$VSAT Viasat

𝗦𝗽𝗮𝗰𝗲 𝗜𝗺𝗮𝗴𝗶𝗻𝗴
$PL Planet Labs
$GSAT Globalstar
$SATL Satellogic
$BKSY BlackSky Technology
$SPIR Spire Global

𝗦𝗽𝗲𝗰𝗶𝗮𝗹𝗶𝘁𝘆 𝗠𝗮𝘁𝗲𝗿𝗶𝗮𝗹𝘀
$CRS Carpenter Technology
$MTRN Materion
$HXL Hexcel
$ATI ATI
$GLW Corning
$PKE Park Aerospace

𝗔𝗲𝗿𝗼𝘀𝗽𝗮𝗰𝗲 & 𝗗𝗲𝗳𝗲𝗻𝘀𝗲
$RTX RTX Corporation
$LMT Lockheed Martin
$KTOS Kratos Defense & Security
$VOYG Voyager Space
$LHX L3Harris Technologies
$NOC Northrop Grumman
$BA Boeing
$AIR Airbus
$HO Thales

𝗦𝗽𝗮𝗰𝗲 𝗖𝗼𝗺𝗽𝗼𝗻𝗲𝗻𝘁𝘀
$TDY Teledyne Technologies
$APH Amphenol
$KRMN Karman Space
$RBC RBC Bearings
$PH Parker Hannifin
$AME AMETEK
$GHM Graham
$HEI Heico
$DCO Ducommun
$ATRO Astronics
$TDG TransDigm

Some of our communities saw significant snowfalls of 12" or more overnight, leaving approximately 55,000 customers without power. Our crews are out repairing damage, focusing first on repairs that will restore power for the largest number of customers. We appreciate your patience as work continues in hazardous conditions with more snow expected this morning. Customers can check their outage status by visiting the outage map, which displays the anticipated time for restoration when available, texting STAT to 98936, or using our website. outagemap-xcelenergy.com/outagemap/