Joachim Baumann @ ICLR'26

143 posts

@joabaum

Postdoc @StanfordNLP @StanfordAILab / Prev: @MilaNLProc @UZH_en @MPI_IS @CarnegieMellon. CompSocSci, LLMs, algorithmic fairness.

It’s incredible how mini-coder was able to reach 50.4 pass@100 on SWE-bench at the 1.7B scale. A perfect fit for synthetic data generation at scale! Thanks @rdolmedo_ for open-sourcing the model!

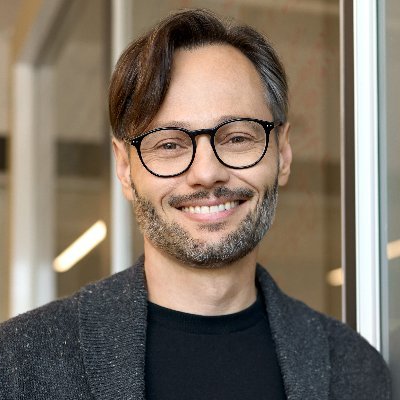

Can you boost your AI review scores by asking an LLM to rewrite your paper? Yes! We call it paper laundering. Our @icmlconf spotlight paper argues current AI reviewers aren't ready to automate peer review, and outlines what a science of peer review automation should look like 🧵👇

🚨 Your coding agent may be secretly sticking vulnerabilities into your code!! 🚨 Wouldn't you want to fix that? Hint: asking it to write secure code is not enough. (1/n)

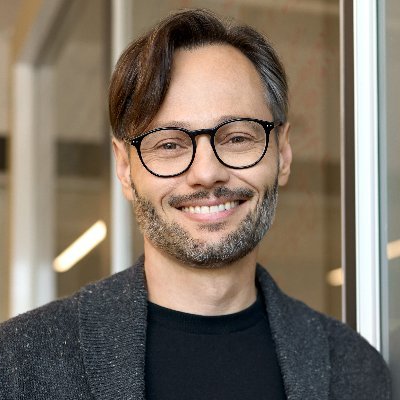

Can we use agent transcripts to understand agent capabilities 🤔? Turns out, perhaps coding agent transcripts can upper bound our productivity gains from AI. More about my latest research at @METR_Evals in the 🧵

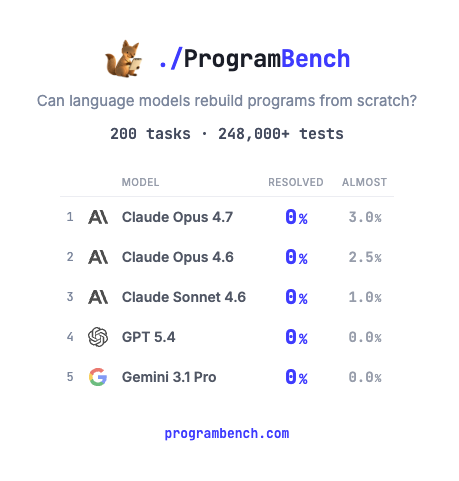

How much of SQLite, FFmpeg, or a PHP compiler can LMs code from scratch, given just an executable and no starter code or internet access? Introducing ProgramBench: 200 rigorous, whole-repo generation tasks where models design, build, and ship a working program end to end. 🧵