Pinned Tweet

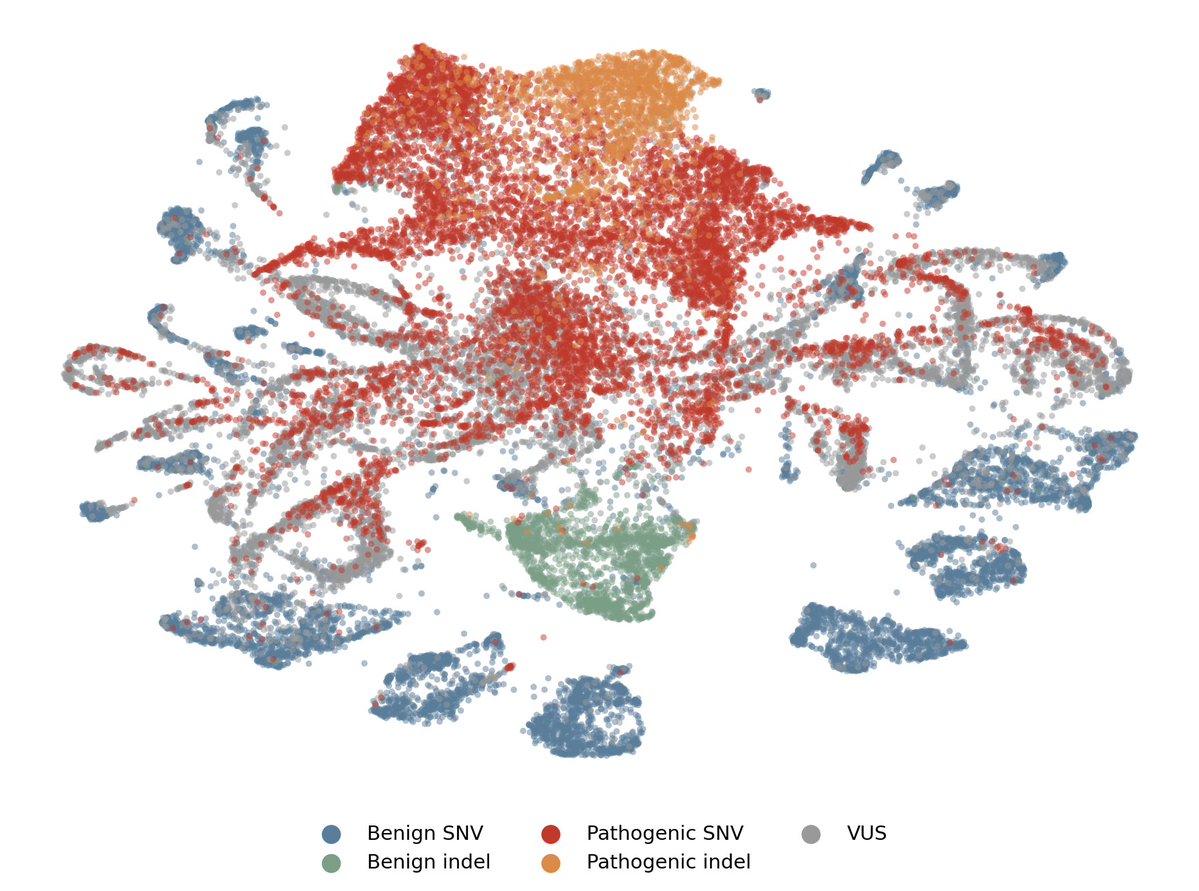

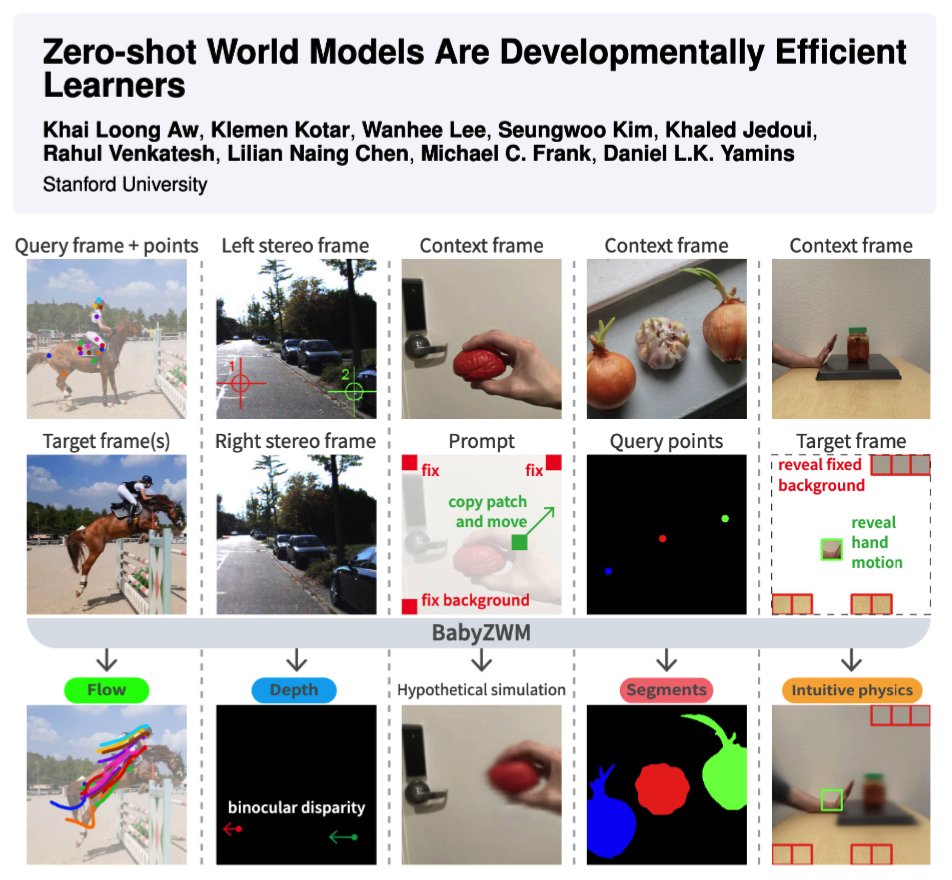

I built a Gradio app to dive into the latent space of the new V-JEPA 2.1 models. Found something interesting: specific latent dimensions are hyper-sensitive to temporal shifts, regardless of the video content. Could be an insight into its world model logic... or just a nothing burger.

Pishty @Pishtywan
