Trista

1.8K posts

@Project2501_117

Lady of @ID_AA_Carmack Texas Native: United States Army Veteran: Positive Vibes Only: Full Time Adventure!

Fort Worth, TX · Joined August 2018

197 Following · 6K Followers

Trista and I saw NIN last night with friends, and it was a great show, as always.

I inevitably wind up thinking about the gap between the live event experience and reproductions on video or in VR. The gap is substantial, with the “shock and awe” of high powered, extreme dynamic range stadium effects, but there are still levers to pull.

A capability of the Quest VR hardware that would be fun to exploit is per-frame varying of the display persistence. The low-persistence display is only flashed “on” for about a tenth of each frame, so it doesn’t look blurred as you move your head around. This is a tradeoff between screen brightness and blur, which is just statically picked by Meta today, but it could be done dynamically on a per-frame basis (coordination would be tricky!).

Going to full persistence briefly would give an instant 10x brightness increase, which would be quite impactful on eyes adapted to the 10% brightness. You wouldn’t want to keep it there for long, because the blurring would be obnoxious and eyes would adapt to it, but it would be perfect for the epilepsy-inducing concert light flashes.
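The brightness-versus-blur tradeoff can be put in rough numbers. This is a minimal sketch under simplifying assumptions, not a description of how Quest hardware actually works: it assumes the panel flashes at the same peak luminance regardless of persistence, that perceived brightness scales with time lit, and a 90 Hz refresh rate. The function names are hypothetical.

```python
# Hypothetical model of a low-persistence VR display: the panel flashes at a
# fixed peak luminance for `persistence` (a fraction of the frame), so both
# perceived brightness and motion smear scale with that duty cycle.

FRAME_RATE_HZ = 90.0        # assumption: one of the Quest refresh rates
BASELINE_PERSISTENCE = 0.1  # "on" for about a tenth of each frame, per the post


def lit_time_s(persistence: float, frame_rate_hz: float = FRAME_RATE_HZ) -> float:
    """Seconds per frame that the display is lit."""
    if not 0.0 < persistence <= 1.0:
        raise ValueError("persistence must be in (0, 1]")
    return persistence / frame_rate_hz


def relative_brightness(persistence: float) -> float:
    """Brightness relative to baseline persistence, assuming the same peak luminance."""
    return lit_time_s(persistence) / lit_time_s(BASELINE_PERSISTENCE)


def smear_degrees(head_speed_deg_s: float, persistence: float) -> float:
    """Angular smear of a world-fixed object while the head turns during one flash."""
    return head_speed_deg_s * lit_time_s(persistence)


if __name__ == "__main__":
    for p in (0.1, 0.5, 1.0):
        print(f"persistence {p:.1f}: {relative_brightness(p):4.1f}x brightness, "
              f"{smear_degrees(100.0, p):.2f} deg smear at 100 deg/s")
```

Under these assumptions, full persistence is 10x brighter than baseline, but a 100 deg/s head turn smears a fixed object across about 1.1 degrees instead of about 0.11, which is why it only makes sense for brief flashes.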

The clean way to do it would be with a 16 bit high dynamic range linear buffer that gets factored into an sRGB image for the display and a backlight flight time, but a hacky extra parameter latched with the frame submit would be the easiest thing to experiment with. Make it work, then make it clean!
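A minimal sketch of the "clean" version: factoring one HDR linear frame into an 8-bit sRGB image plus a per-frame persistence value. Everything here is hypothetical. There is no such Quest API; the pixels are single-channel luminance values in a plain list; and the units (1.0 = brightest value reachable at the baseline 10% persistence) are an assumption chosen to match the 10x figure above.

```python
# Hypothetical per-frame factoring of an HDR linear frame into an 8-bit sRGB
# image plus a display persistence. Not a Meta/Quest API: the names, the 0.1
# baseline, and the units are assumptions for illustration.

BASELINE_PERSISTENCE = 0.1  # normal low-persistence flash, about a tenth of a frame
MAX_PERSISTENCE = 1.0       # full persistence: about 10x brighter, much blurrier


def linear_to_srgb(c: float) -> float:
    """Standard sRGB encoding for a linear value in [0, 1]."""
    if c <= 0.0031308:
        return 12.92 * c
    return 1.055 * c ** (1.0 / 2.4) - 0.055


def factor_hdr_frame(linear_pixels: list[float]) -> tuple[list[int], float]:
    """Split HDR luminance into (8-bit sRGB pixels, persistence for this frame).

    Units: 1.0 is the brightest value reachable at baseline persistence, so
    values up to MAX_PERSISTENCE / BASELINE_PERSISTENCE (10.0) are reachable
    by lengthening the flash; anything brighter is clipped.
    """
    peak = max(linear_pixels, default=0.0)
    # Pick the shortest flash that can still reach this frame's peak value.
    persistence = min(max(BASELINE_PERSISTENCE * peak, BASELINE_PERSISTENCE),
                      MAX_PERSISTENCE)
    gain = persistence / BASELINE_PERSISTENCE  # brightness boost from the longer flash
    # Divide out the gain, clamp to the displayable range, then encode to 8-bit sRGB.
    encoded = [
        round(255 * linear_to_srgb(min(max(p / gain, 0.0), 1.0)))
        for p in linear_pixels
    ]
    return encoded, persistence


if __name__ == "__main__":
    print(factor_hdr_frame([0.5, 1.0]))   # ordinary frame: baseline persistence
    print(factor_hdr_frame([5.0, 10.0]))  # concert flash: full persistence
```

With this choice, a frame peaking at 10.0 gets full persistence and the same 8-bit image as a frame peaking at 1.0 at baseline persistence. Choosing the shortest flash that reaches the peak keeps blur minimal on ordinary frames; a real system would also have to limit how long it stays at high persistence, for the blur and adaptation reasons above.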

A tech demo would be fun, but Beat Saber has a large enough user base that stepping up “concert lighting effects” might even be worth it from a net user value standpoint.

Trista @Project2501_117

NIN - Friggin 🔥

I like and bookmark so many interesting sounding papers here, and don’t get back to most of them. Time to start making a dent. I’m going to try to at least skim one of the papers in my bookmarks each weekday for the rest of the month.

#PaperADay

2025: Emergent temporal abstractions in autoregressive models enable hierarchical reinforcement learning (Google)

I like their statement of the hierarchical goal problem as “how long does it take a twitching hand to win a game of chess?” @RichardSSutton is fond of the “options” framework in RL, but we don’t have a clear method to learn them from scratch.

Their Ant environment is designed to require two levels of planning: the standard MuJoCo Ant locomotion work to be able to move at all, and routing decisions to get to the colored squares in the correct order, which are hundreds of frames apart.

Basically, this takes a pre-trained sequence predicting model that predicts what separately trained expert models (manually steered) do, and inserts a metacontroller midway through it, which can tweak the residual values to perform high level “steering”, and can be RL’d at high level switch points to much greater performance than the base pre-trained model.

A key claim here is that learning to predict actions in a supervised next-token manner from lots of existing expert examples, even if you don’t know the goals, results in inferring useful higher level goals. This sounds plausible, but their experiment makes it rather easy for the model: the expert RL models that generated the training data were explicitly given one of four goals in each segment, and the option learning model just classifies the sequences into one of four categories. This is a vastly simpler problem than free form option discovery.

A State Space Model is used for the more complex Ant environments, while a transformer is used for the simpler grid world environments. I didn’t see an explanation for the change.

The internal “walls” are more like “poison tiles”, since they don’t block movement like the map edges, they just kill the ant when its center passes into them.

The 3D renderings (with shadow errors that hurt my gamedev eyes) are somewhat misleading, since it is really a 2D world that the agent gets to fully observe in a low dimensional one-hot format. It doesn’t do any kind of partially observed or pixel based sensing.

Everything is done with massively parallel environments, avoiding the harder online learning challenges.

The success rates still aren’t great after a million episodes.

I would like to see this applied to Atari, basically doing GATO with less capable experts or lower episode quantities, then trying to identify free form options that can be usefully used to RL to higher performance.

Seijin Kobayashi @SeijinKobayashi

Standard reinforcement learning in raw tokens is a disaster for sparse rewards! Here, we propose 𝗜𝗻𝘁𝗲𝗿𝗻𝗮𝗹 𝗥𝗟: acting on abstract actions emerging in the residual stream representation. A paradigm shift in using pretrained models to solve hard, long-horizon tasks! 🧵

We’re making great progress with our Gemini Robotics work in bringing AI to the physical world - a critical aspect of AGI. As part of our next steps, super excited to announce our partnership with @BostonDynamics, combining our SOTA robotics models with their world-class hardware

Google DeepMind @GoogleDeepMind

Google DeepMind 🤝 @BostonDynamics Our new research partnership will bring together our advancements in Gemini Robotics’s foundational capabilities to their new Atlas® humanoids. 🦾 Find out more → goo.gle/49paguA

@ID_AA_Carmack Wow this is some next level old man rant... respect

@ID_AA_Carmack love the image of you staring at the slowly moving prime menu images real close up analyzing them

Amazon Prime cycles through slow pans of static images for content they are promoting. The image quality is high, but several of them had a distracting shimmer in high contrast areas. It might be that the image resolution was too high and they are subsampling without mip maps, but my bet would be on not doing gamma correct texture filtering.

Always use sRGB(A) texture formats for images! Copy from RGB or YUV image formats if necessary, making sure to perfectly match the pixel centers. If you are just using textures like a pixel blitter with no stretching or subpixel movement you won’t notice a difference, but slow slides of an image will highlight the issue.
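To show why non-gamma-correct filtering shows up as shimmer, here is a small illustrative sketch (not Amazon's pipeline, which is unknown) of a one-pixel white line on black sliding by a subpixel offset t, so bilinear filtering splits it across two pixels. Blending the sRGB-encoded values directly, which is what happens when a texture is not declared as sRGB, makes the total emitted light dip as t passes through 0.5; decoding to linear before blending keeps it constant. Formats such as GL_SRGB8_ALPHA8 in OpenGL or VK_FORMAT_R8G8B8A8_SRGB in Vulkan have the GPU do that decode as part of sampling.

```python
# Why filtering sRGB texels as if they were linear makes slow pans shimmer.
# A 1-pixel white line on black slides by a subpixel offset t, so bilinear
# filtering spreads it across two output pixels with weights (1 - t) and t.

WHITE, BLACK = 1.0, 0.0  # encoded sRGB texel values in [0, 1]


def srgb_to_linear(c: float) -> float:
    """sRGB decode for a value in [0, 1]."""
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


def linear_to_srgb(c: float) -> float:
    """sRGB encode for a value in [0, 1]."""
    if c <= 0.0031308:
        return 12.92 * c
    return 1.055 * c ** (1.0 / 2.4) - 0.055


def blend(x: float, y: float, w: float, gamma_correct: bool) -> float:
    """Blend two encoded texels with weight w on y; result is encoded sRGB."""
    if gamma_correct:
        # Decode to linear light, blend there, then re-encode for the display.
        return linear_to_srgb((1.0 - w) * srgb_to_linear(x) + w * srgb_to_linear(y))
    # Naive: treat the encoded values as if they were linear.
    return (1.0 - w) * x + w * y


def emitted_light(t: float, gamma_correct: bool) -> float:
    """Total linear light the display emits for the two pixels the line covers."""
    leaving = blend(WHITE, BLACK, t, gamma_correct)   # pixel the line moves out of
    entering = blend(BLACK, WHITE, t, gamma_correct)  # pixel the line moves into
    return srgb_to_linear(leaving) + srgb_to_linear(entering)


if __name__ == "__main__":
    for t in (0.0, 0.25, 0.5, 0.75, 1.0):
        print(f"t={t:.2f}  naive={emitted_light(t, False):.3f}  "
              f"gamma-correct={emitted_light(t, True):.3f}")
```

In the naive case the line drops to roughly 43% of its brightness halfway between pixels and recovers at the next whole pixel, so a slow pan makes high-contrast edges pulse; the gamma-correct path stays at 1.0 throughout.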

@ID_AA_Carmack I love Dexter!!!! ❤️ 🔪

@grok please explain gamma correct texture filtering

@NewsGoatX @ID_AA_Carmack @Grimezsz @Meta @facebook @boztank Ha! This trips me out. That is a ridiculous claim. JC's engine included the trick (or a variant) and he's on record publicly deflecting authorship when asked. Ask any of the LLMs they will all correct your claim.

Trista retweeted

Teslas displaced my exotic cars many years ago, but with the Roadster still an indeterminate distance in the future, I had been feeling an urge for something a little more… analog.

I was initially thinking about a 60’s Camaro with modernized running gear, but a part of me was also nostalgic for my old MGB. I eventually remembered that there was a car right at the intersection of muscle car grunt and British roadster charm – the ‘65 Shelby Cobra.

It is kind of amazing that the federal Low Volume Motor Vehicle Manufacturers Act of 2015 authorizes small runs of replica vehicles to basically ignore all the safety and emissions regulations. All the paternalism and crusading was just pushed aside in the name of letting small companies make badass cars.

On my birthday, Trista nudged me into visiting @EMotorcars where they had a dozen different replicas on hand. I was partial to the idea of a carbureted 427 with a magneto as a Mad Max post-EMP LARP, but practicality prevailed, and I wound up with a modern Coyote crate motor in a @Backdraftracing chassis.

@ID_AA_Carmack I've never seen code like this. Just isolate the node and dump it on the other side of the router

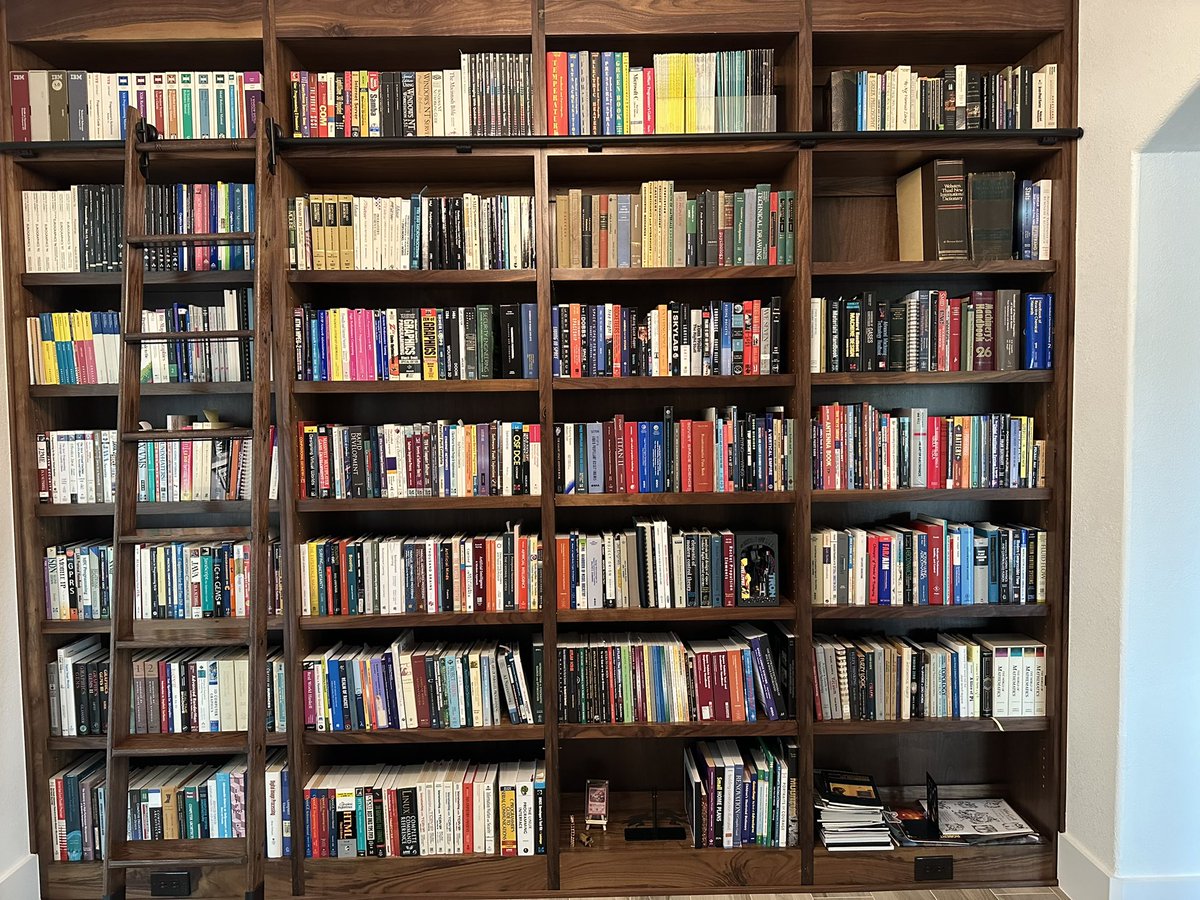

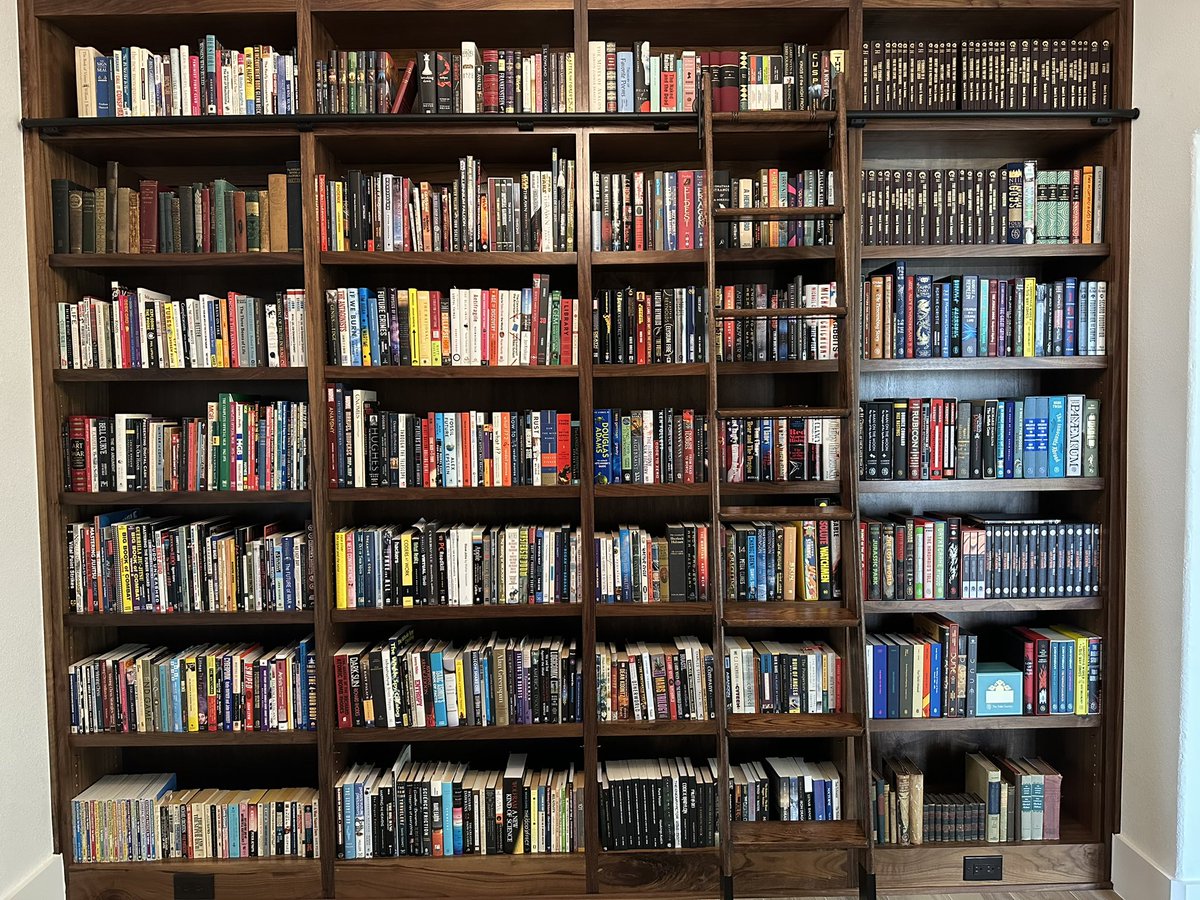

Trista turned the formal dining room into a library for me at our new house, and I love it!

We still have separate shelving for paperbacks, magazines, and graphic novels, and a few more bookshelves in my Fortress of Solitude, but this is the most books I have ever had shelved in one place.

I have never thrown a book away in my life, but I know there are many books that have somehow been lost in moves over the years, and I occasionally miss them.

@RoboSabs @ID_AA_Carmack He orders random elements and compounds from the internet. They show up and I'm like bae "wtf is this" ? 🫠

@ID_AA_Carmack I enjoy all the random stuff you have on hand like carbon and aerogel. 😁

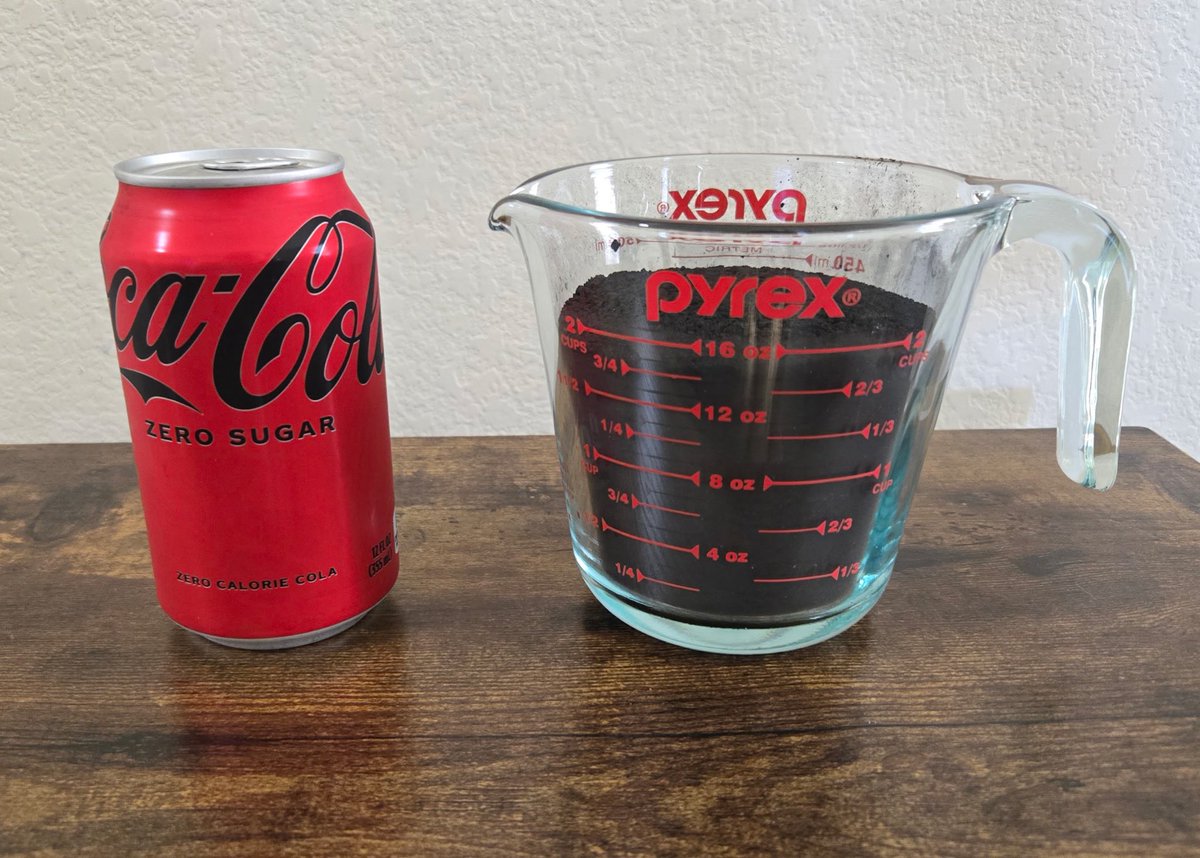

You may have heard that an average human breathes out roughly 1kg of carbon dioxide a day.

Gas masses are kind of abstract, and most of the mass is oxygen that you breathe in, but that is still 273 grams of carbon that your metabolism draws out of your body every day, even if you are fasting. Much more for a large person laboring heavily.

Weighed out, that is a surprisingly large pile!

(thinking about space habitats)
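The 273 gram figure follows from molar masses: carbon is 12.011 of the 44.009 g/mol in CO2, about 27% by mass. A quick check, using standard atomic weights and the rough 1 kg/day of exhaled CO2 from the post:

```python
# Carbon content of exhaled CO2, from standard atomic weights (g/mol).
CARBON = 12.011
OXYGEN = 15.999
CO2 = CARBON + 2 * OXYGEN  # 44.009 g/mol


def carbon_in_co2(co2_grams: float) -> float:
    """Grams of carbon contained in a given mass of CO2."""
    return co2_grams * CARBON / CO2


if __name__ == "__main__":
    daily_co2 = 1000.0  # grams exhaled per day, the post's rough average
    carbon = carbon_in_co2(daily_co2)
    print(f"{carbon:.0f} g carbon, {daily_co2 - carbon:.0f} g oxygen")
```

The remaining ~727 g is oxygen, which is the "most of the mass" in the post.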

@BlackwellV90362 @ID_AA_Carmack @bryan_johnson Cleaned up his eating, stopped long distance running, went to strength training, Peloton, physical therapy coach, focused on form. Started with daily vitamins. Then, he started the Blueprint program. It didn't happen overnight. This is a couple years in the making.

A chunk of this is just @Project2501_117 dressing me in tighter shirts, but I did put on several pounds of muscle this year after switching my random grab bag of vitamins and supplements over to @bryan_johnson ‘s Blueprint system. I was probably not getting enough protein to take advantage of the exercise I was doing.

I have always been roughly “upper quintile” for fitness — regular exercise, but not at the level of the serious athletes that most offices tend to have a few of.

kache @yacineMTB

insane how jacked carmack got
