Logan Matthew Napolitano

481 posts

@Propriocetive

Founder - Proprioceptive AI, Inc https://t.co/Yijw58g3uP https://t.co/mhTii1pqMz 👏👏🕉️🏆⛰️🛫

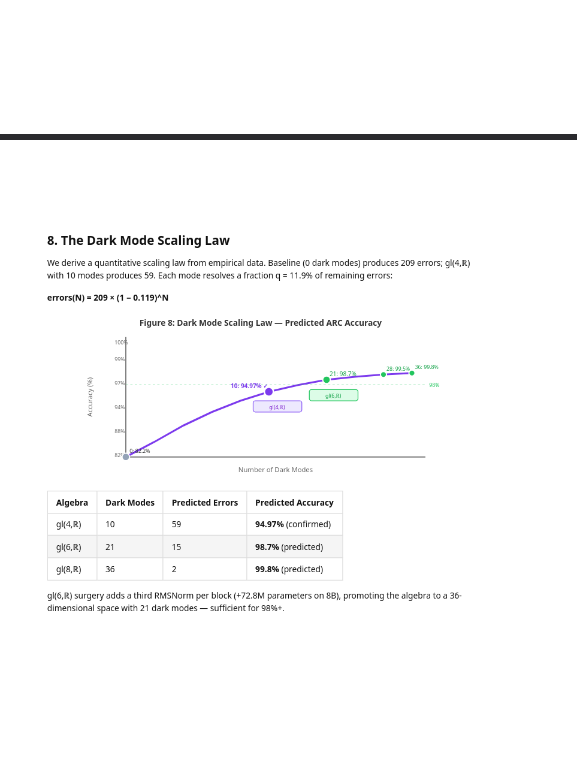

I just published a 459-page book.

Title: Mathematics Is All You Need

Three months ago I started looking at the hidden states of large language models through the lens of Lie algebra, the branch of mathematics that describes continuous symmetries. What I found was not what I expected.

Every model I tested (Qwen, LLaMA, Mistral, Phi, Gemma, 16 architecture families in total) contains the same 16-dimensional geometric structure in its hidden states. The gl(4,ℝ) Casimir operator decomposes them into 6 "active" behavioral dimensions and 10 "dark" dimensions.

The dark dimensions are erased every single layer by normalization. The model rebuilds them every single layer from its weights. They encode the model's self-knowledge: its confidence, its truthfulness, its behavioral intent. And until now, nobody knew they were there.

Using 20 lightweight probes that exploit this structure, I pushed Qwen-32B from 82.2% to 94.4% on ARC-Challenge. No fine-tuning. No prompt engineering. No chain of thought. Pure mathematics.

The probes transfer across architectures without retraining. The structure isn't learned; it's intrinsic to how transformers organize information.

I did this on a single NVIDIA RTX 3090 in my office.

190 patent applications filed. Proprioceptive AI, Inc.

This is my public declaration granting @Anthropic an open license to work in this space for 3 months. They are currently the first and only company I've extended this to. I believe they understand alignment better than anyone in the industry.

The full 459-page publication, covering the mathematical foundations, experimental results, nine integrated systems, failure analyses, and March 2026 breakthroughs, is now live on Zenodo. I welcome collaboration inquiries.

Full publication: zenodo.org/records/190801…

Logan Matthew Napolitano
Founder, Proprioceptive AI, Inc.
logan@proprioceptiveai.com
proprioceptiveai.com

Nothing in the world like this exists at all. This closes the door to alignment. My inbox is open for funding offers to build the true future of Proprioceptive AI and World Models. Not a theory, but a fully reproducible guide, existing products, and a true mission on alignment. @grok @elonmusk @xai @AnthropicAI

🚨 Shocking: Frontier LLMs score 85-95% on standard coding benchmarks. We gave them equivalent problems in languages they couldn't have memorized. They collapsed to 0-11%. Presenting EsoLang-Bench. Accepted to the Logical Reasoning and ICBINB workshops at ICLR 2026 🧵