PublicAI

2.1K posts

@PublicAI_

The Human Layer of AI enables everyone to contribute training data. Backed by @StanfordSBA, @SolanaFndn, @NEARProtocol, @PublicAIData.

The goal of Personal AI: a civilization where individual humans, augmented by AI, can do consequential work without being captured by extractive institutions. Freedom to write your prompt and own your data. This is the new battleground. 2034 won’t have to be like 1984.

Voice Cloning is now live via the xAI API! Create a custom voice in less than 2 minutes or select from our library of 80+ voices across 28 languages to personalize your voice agents, audiobooks, video game characters, and more. x.ai/news/grok-cust…

Microsoft just turned an $11 billion startup into a Word feature.

Harvey raised $200M at an $11B valuation in March on the bet that legal AI is its own surface. The numbers held that up. $190M ARR per TechCrunch's December reporting. 100,000 lawyers across 1,300 organizations including the majority of the AmLaw 100. Around $1,200 per lawyer per month per Sacra. Big firms paid because Harvey was the only tool in the category that worked.

Brad just stapled a legal agent directly inside Microsoft Word, shipping in the $30 per seat Copilot subscription every law firm already pays for. Same surface every lawyer drafts in. Same .docx that gets sent and redlined. No second login, no procurement cycle, no migration. The price gap is roughly 40x.

The interesting tell: Microsoft built the agent with legal engineers, many of them from Robin AI, a legal AI startup that recently went under, per Artificial Lawyer's reporting. The talent that knew how to make legal AI work for lawyers landed at Microsoft after their startup couldn't survive standalone. That's the legal AI category in one sentence.

Distribution was always the constraint here. Lawyers don't switch tools. Word is where contracts get drafted, redlined, and tracked. Whichever AI lives inside that .docx wins the default workflow, and Microsoft just walked through the door uncontested.

Harvey's surviving moat is the AmLaw 100 partner workflow. Domain training, agentic litigation prep, deep integrations with iManage and NetDocuments. Real moat for $1,500-an-hour partners running M&A and complex litigation. It does not extend to the millions of lawyers globally drafting NDAs, redlining vendor contracts, and updating templates. That layer is exactly what Word Legal Agent goes after, and Microsoft can ship it as a feature inside a $360-a-year subscription.

The $11B valuation pays out only if legal AI work stays its own surface. Microsoft just absorbed the surface.
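The "roughly 40x" price gap and the $360-a-year figure in the post above can be checked with a few lines of arithmetic. This is a minimal sketch that uses only the numbers quoted in the post; the variable names are illustrative, and the $30 Copilot price is assumed to be per month, since the post equates it with $360 a year.

```python
# Figures quoted in the post; variable names are illustrative only.
harvey_per_lawyer_month = 1200  # USD, "around $1,200 per lawyer per month per Sacra"
copilot_per_seat_month = 30     # USD, assumed monthly (the post cites $360 a year)

# Ratio of the two monthly per-seat prices.
price_gap = harvey_per_lawyer_month / copilot_per_seat_month

# Annualized Copilot seat price.
copilot_per_seat_year = copilot_per_seat_month * 12

print(f"Price gap: {price_gap:.0f}x")
print(f"Copilot per seat per year: ${copilot_per_seat_year}")
```

Under those assumptions the ratio comes to 40 and the annual seat price to $360, matching the figures in the post.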

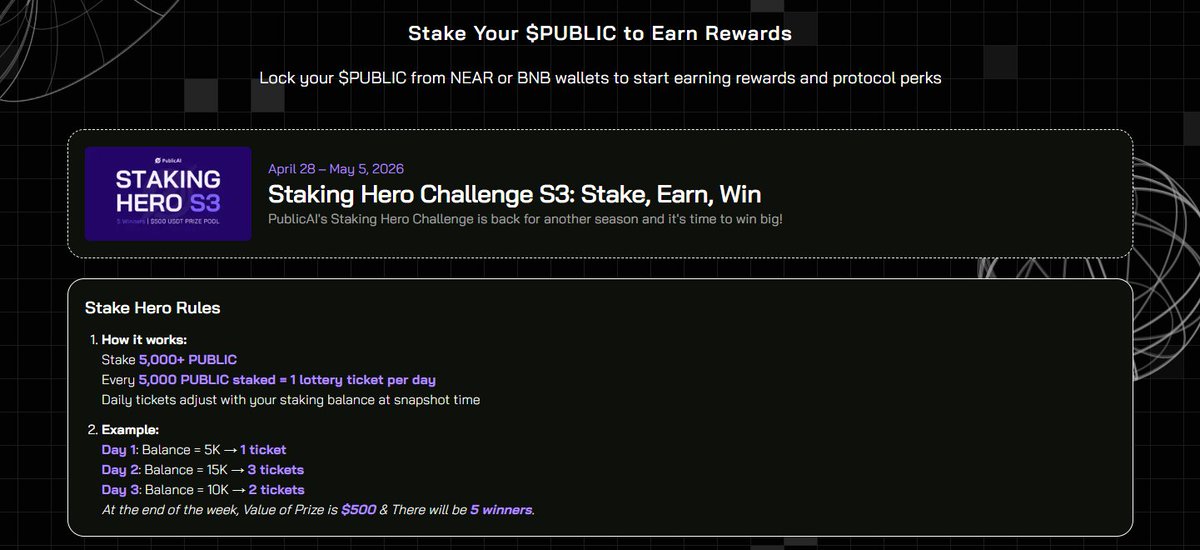

Staking Hero S3 is live. $500 Pool. 5 Winners. 🌟 Stake 5,000+ PUBLIC 🌟 Earn tickets daily. Sit still and stack odds. No tasks. No grind. Just position and let it run. 👉 token.publicai.io/stake

HEVN (@hevn_finance) is building a “Brex for non-US companies”: an alternative financial platform that lets businesses open virtual bank accounts at 8 banks worldwide, use USD cards with cashback for Google/Meta ads, and store funds in USD. Congrats on the launch, @p_volnov and @Nikitadigital10! ycombinator.com/launches/Q9t-h…