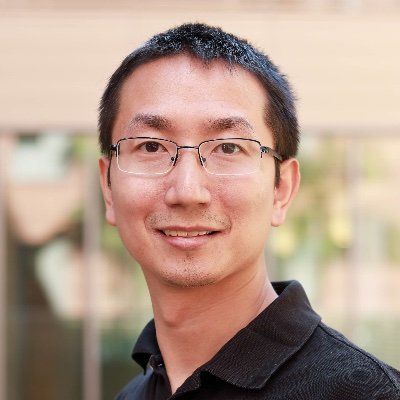

Quanquan Gu

2.4K posts

Quanquan Gu

@QuanquanGu

Professor @UCLA, Pretraining and Scaling at ByteDance Seed | Recent work: Seed2.0, SeedFold | Opinions are my own

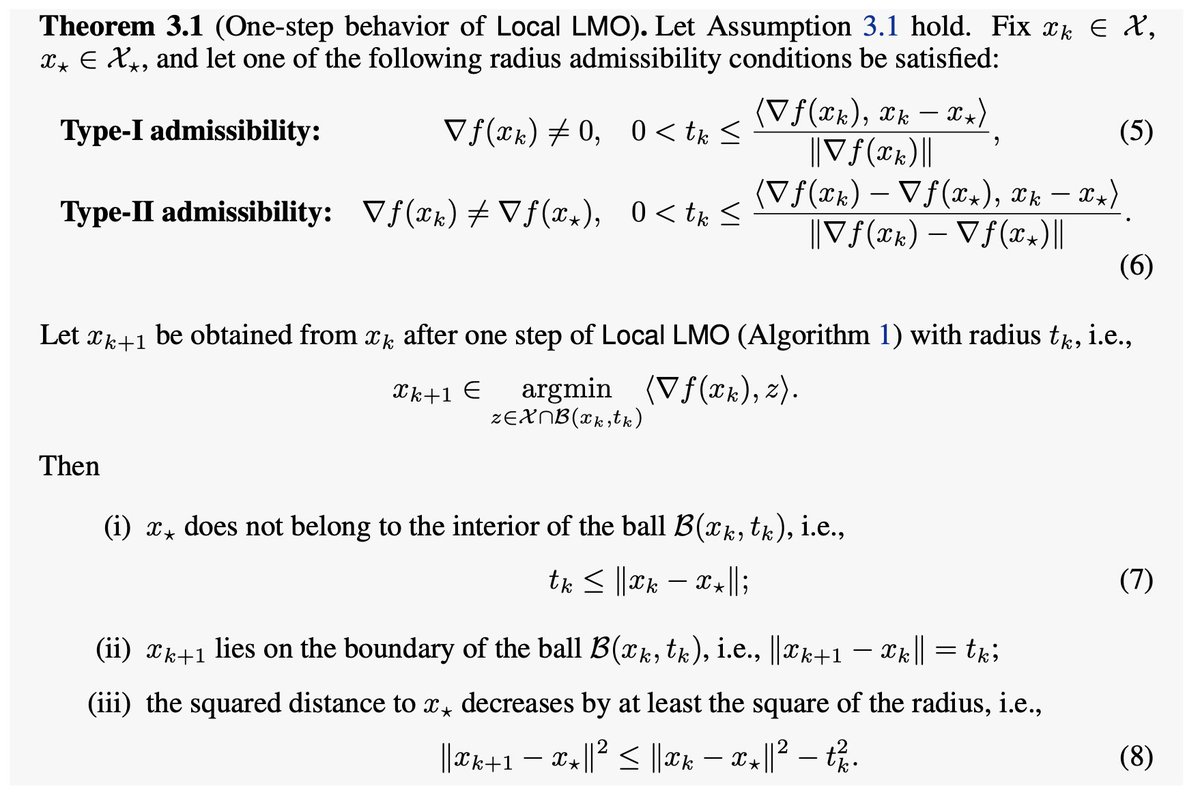

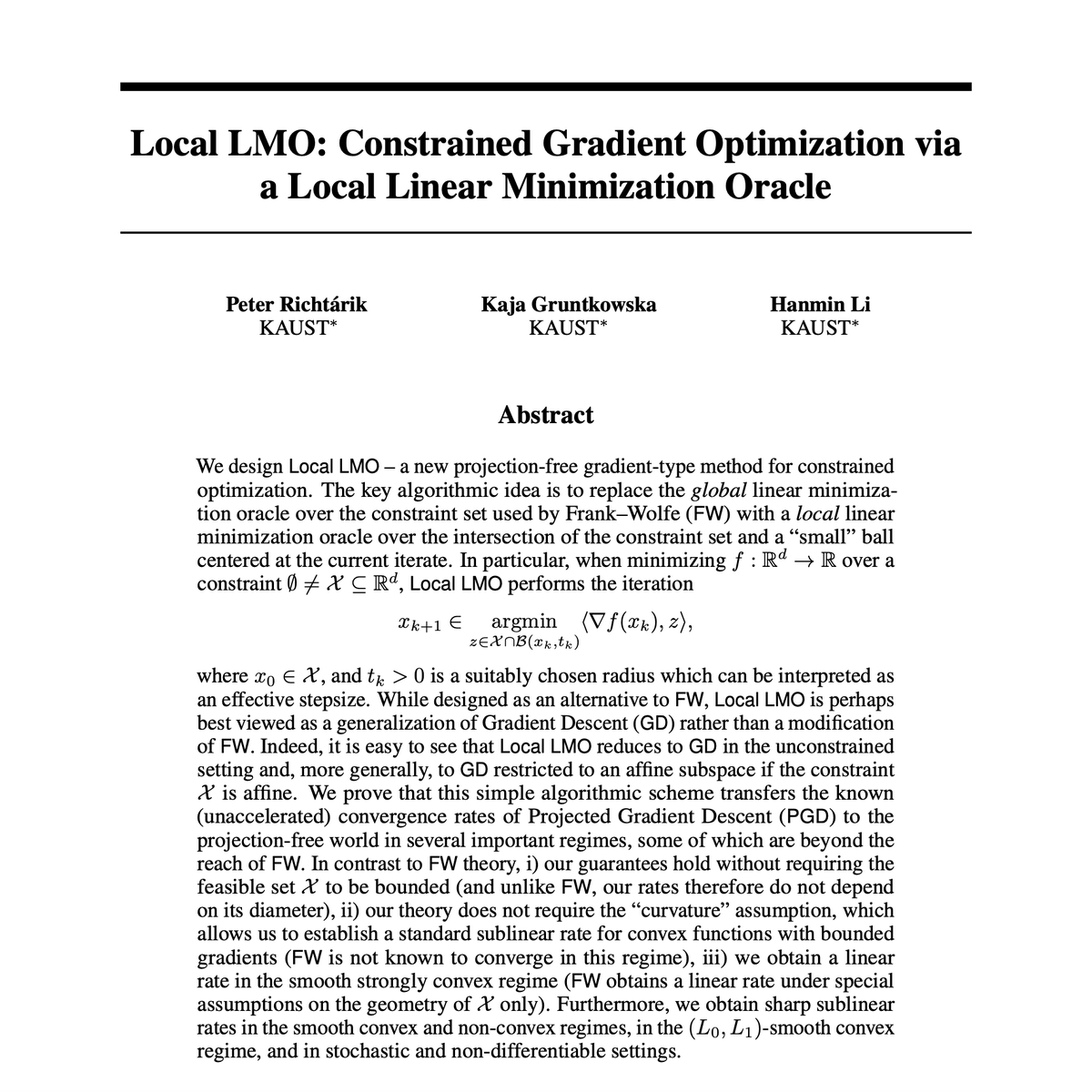

Imagine that projected gradient descent (PGD) was a new method, discovered today. How would that feel? This is a textbook algorithm... What further research, extensions, improvements and variants would this enable? In fact, together with Kaja Gruntkowska and Hanmin Li, we have just discovered a sister method to projected gradient descent -- one of equal conceptual importance. Our method admits the same or very similar guarantees as PGD. However, instead of relying on projections onto the constraint, it relies on linear minimization! You may say: Did you rediscover Frank-Wolfe? No. In contrast to Frank-Wolfe, which uses a global linear minimization oracle (global LMO), our method relies on a local minimization oracle (local LMO). For this reason, we simply call the method "Local LMO" (admittedly, conflating the oracle name with the method name). Frank-Wolfe theory is much more limited to the theory of Local LMO. Here are some key differences: 1) Frank-Wolfe only works if the constraint is bounded, and its convergence theory depends in the diameter of the constraint set. Local LMO works even for unbounded constraints, and its theory does not depend on the diameter of the constraint set. 2) In fact, Local LMO reduces to gradient descent (GD) in the unconstrained case. If the constraint is affine, Local LMO reduces to (preconditioned) GD in the affine space. 3) While Frank-Wolfe does not converge linearly for smooth strongly convex functions, Local LMO does. 4) While Frank-Wolfe does not converge for non-smooth convex problems (its theory depends on a curvature assumption), Local LMO does. arxiv.org/abs/2605.08850

Update on Erdős Problem 1196: In joint work, we refined and adapted the proof method from GPT-5.4 Pro to give proofs of several additional problems. This includes another 60 year old conjecture by Erdős, Sárközy, and Szemerédi. A proof is valued not just by the problem it solves, but by what new avenues it opens up. This is perhaps one of the first examples of an AI-generated proof having downstream impacts, which we are still exploring. We are announcing the result today at the Future of Mathematics Symposium (see links below)