Lance Martin retweeted

Lance Martin

1.3K posts

Lance Martin

@RLanceMartin

MTS @anthropicai

San Francisco, CA · Joined May 2009

439 Following · 29.8K Followers

Lance Martin retweeted

"For the last few months, Anthropic has used Mythos Preview to scan more than 1,000 open-source projects, which collectively underpin much of the internet—and much of our own infrastructure.

So far, Mythos Preview has found what it estimates are 6,202 high- or critical-severity vulnerabilities in these projects (out of 23,019 in total, including those it estimates as medium- or low-severity)."

anthropic.com/research/glass…

Anthropic @AnthropicAI

Patching these vulnerabilities will make us safer. But the software industry will need to adapt to the volume of vulnerabilities that models like Claude Mythos Preview will be able to find. We discuss this in our initial update on Project Glasswing: anthropic.com/research/glass…

Lance Martin retweeted

The release candidate for MCP 2026-07-28 is out. The protocol is now stateless: no handshake, no session id, any request can hit any server instance. Plus extensions as first-class (MCP Apps, Tasks), auth hardening, and a proper deprecation policy so we don't have to do this again.

blog.modelcontextprotocol.io/posts/2026-07-…

we've updated the claude-api skill to help onboard on self-hosted sandboxes.

you can invoke this in the latest version of Claude Code (with the "/claude-api" command) and ask questions.

see repo here:

github.com/anthropics/ski…

more cookbooks here:

github.com/anthropics/cla…

@vercel: use a Vercel Sandbox for fine control over both networking and credentials. Vercel runs compute inside / adjacent to your own network. lets you reach internal databases, private APIs, or services.

vercel.com/kb/guide/run-c…

@karpathy @martinamps welcome Andrej! very excited to have you here.

Lance Martin retweeted

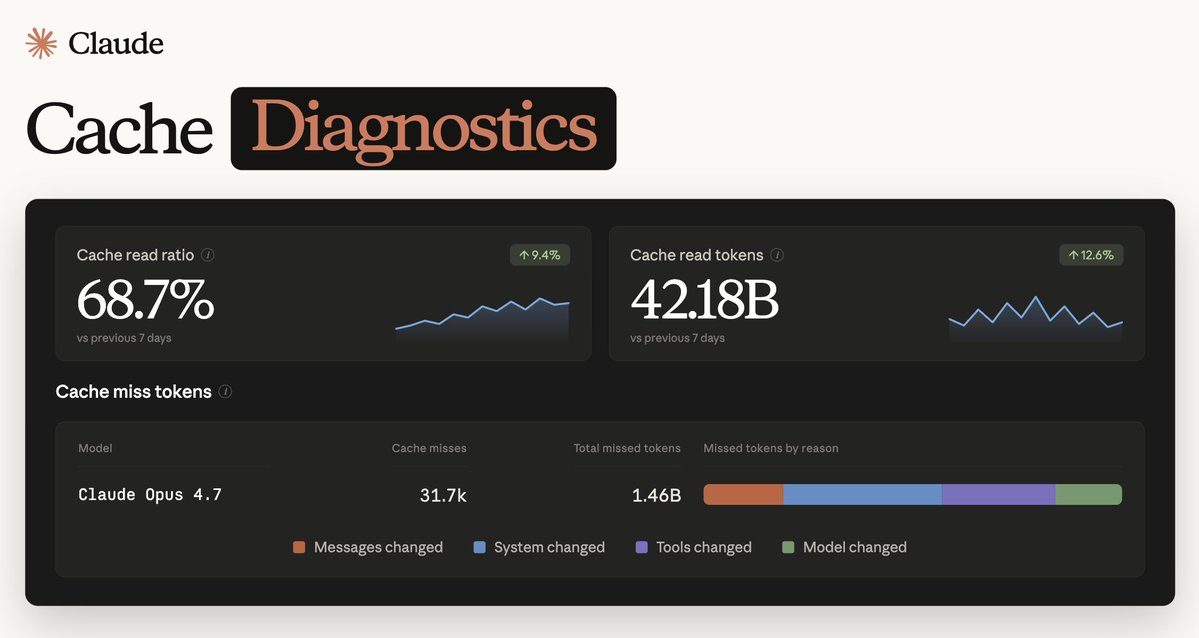

this pairs well with the claude-api skill (bundled with Claude Code).

ask Claude Code to investigate the cache misses, using the diagnostics as a guide.

console link:

platform.claude.com/usage/cache

docs:

platform.claude.com/docs/en/build-…

x.com/ClaudeDevs/sta…

ClaudeDevs @ClaudeDevs

Claude Code ships with a built-in skill for working with the Claude Platform. Useful for model migrations, using API features (e.g., prompt caching), or onboarding to newer APIs like Claude Managed Agents.

Lance Martin retweeted

Anthropic is acquiring @stainlessapi, an SDK and MCP server platform that has powered every Anthropic SDK since the earliest days of our API.

Read more: anthropic.com/news/anthropic…

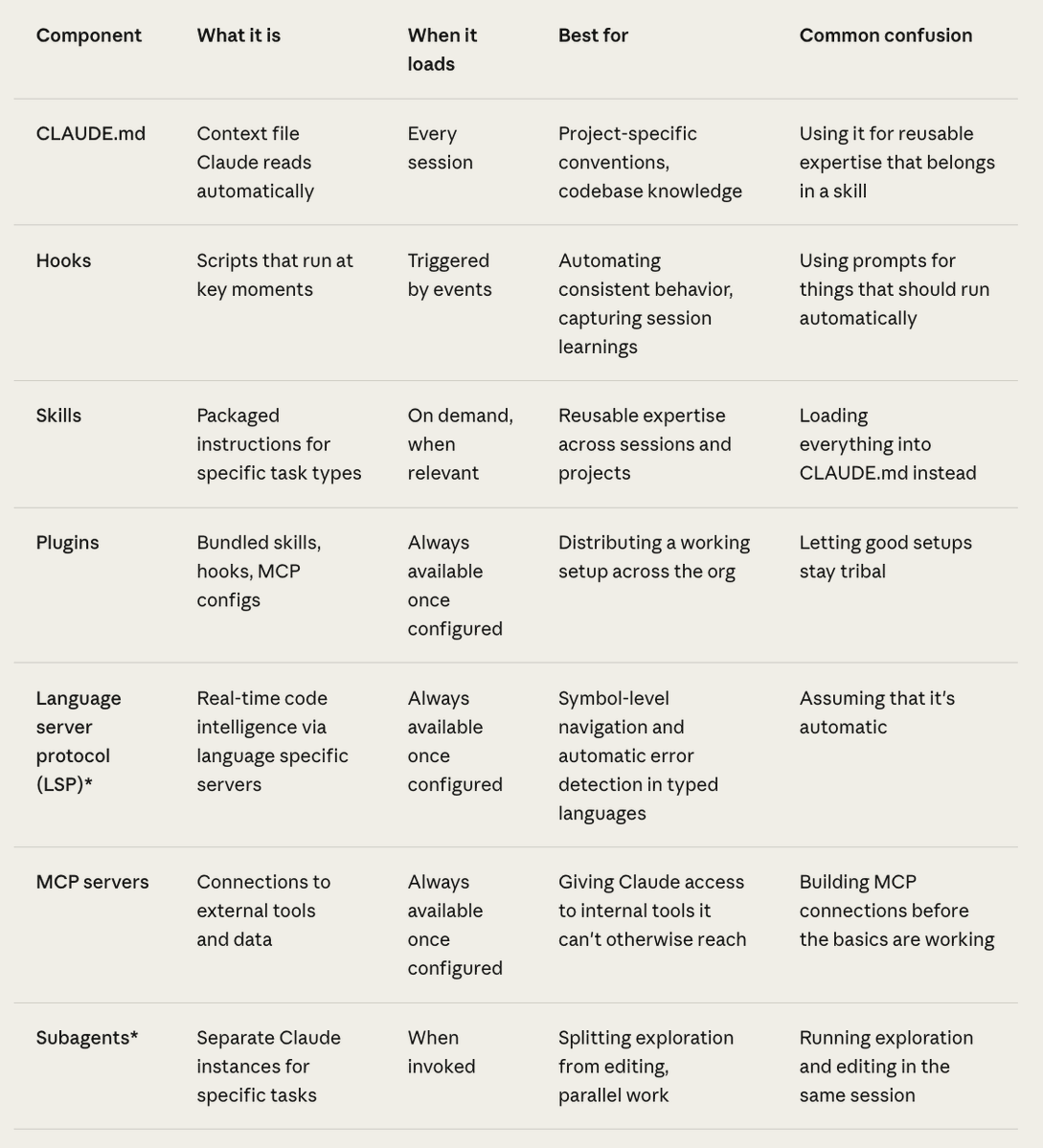

new article w Claude Code tips for large codebases

covers common ways to make codebases navigable & maintain memory / CLAUDE.md as models get smarter.

claude.com/blog/how-claud…

also here's a reference implementation:

github.com/anthropics/cla…

interesting new article on computer use with Claude

lots of useful debugging tips:

> pre-downscale high-resolution images

> use Claude Sonnet 4.6 for clicking tasks

> high effort ~ as good as max using half the tokens

> context management tips

claude.com/blog/best-prac…
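A minimal sketch of the pre-downscaling tip, assuming the goal is to cap the longer side of a screenshot before sending it and then map the model's click coordinates back to the real screen. The helper names and the `max_side=1280` default are hypothetical, chosen for illustration only; the article may recommend different limits.

```python
def downscaled_size(width, height, max_side=1280):
    """Return (w, h) with the longer side capped at max_side, keeping aspect ratio.

    max_side=1280 is an assumed illustrative limit, not a documented value.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    longest = max(width, height)
    if longest <= max_side:
        # Already small enough: leave the image untouched.
        return width, height
    scale = max_side / longest
    return max(1, round(width * scale)), max(1, round(height * scale))


def to_original_coords(x, y, original_size, scaled_size):
    """Map a click at (x, y) in the downscaled image back to original pixels."""
    orig_w, orig_h = original_size
    scaled_w, scaled_h = scaled_size
    return round(x * orig_w / scaled_w), round(y * orig_h / scaled_h)


if __name__ == "__main__":
    original = (3840, 2160)
    scaled = downscaled_size(*original)
    print(scaled)
    print(to_original_coords(640, 360, original, scaled))
```

The resize itself would be done with whatever image library is already in use; only the dimension arithmetic is shown here.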

@A_Pasevin yes, you can export memories easily. i wrote about it below and you can see docs here:

platform.claude.com/docs/en/manage… (anchor: #list-memories)

x.com/RLanceMartin/s…

Lance Martin @RLanceMartin

@RLanceMartin I want to own my agent's memory, inspect and amend it. Can I do that with this system?

@jess__yan + my talk covering Managed Agents fundamentals, multi-agent, and outcomes:

youtube.com/live/E9gaQHrw_…

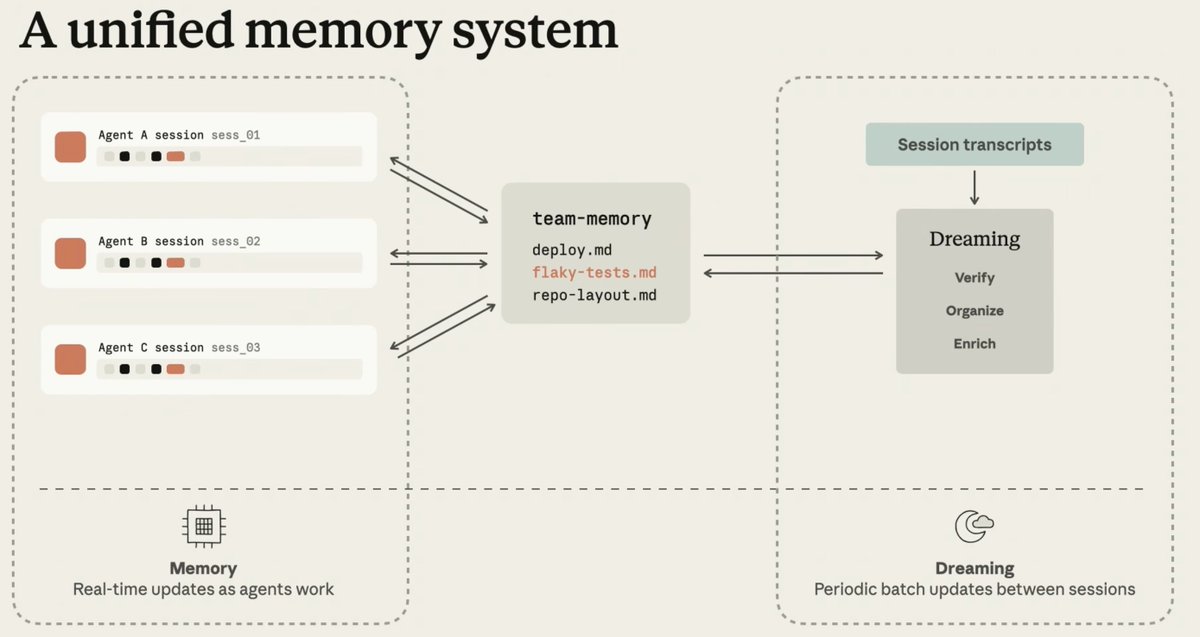

@maheshmurag talk on dreaming:

youtu.be/RtywqDFBYnQ?si…

5/ dreaming is an offline process for learning *across sessions* for memory pruning / consolidation / skill learning using observed patterns. @maheshmurag gave an overview.
