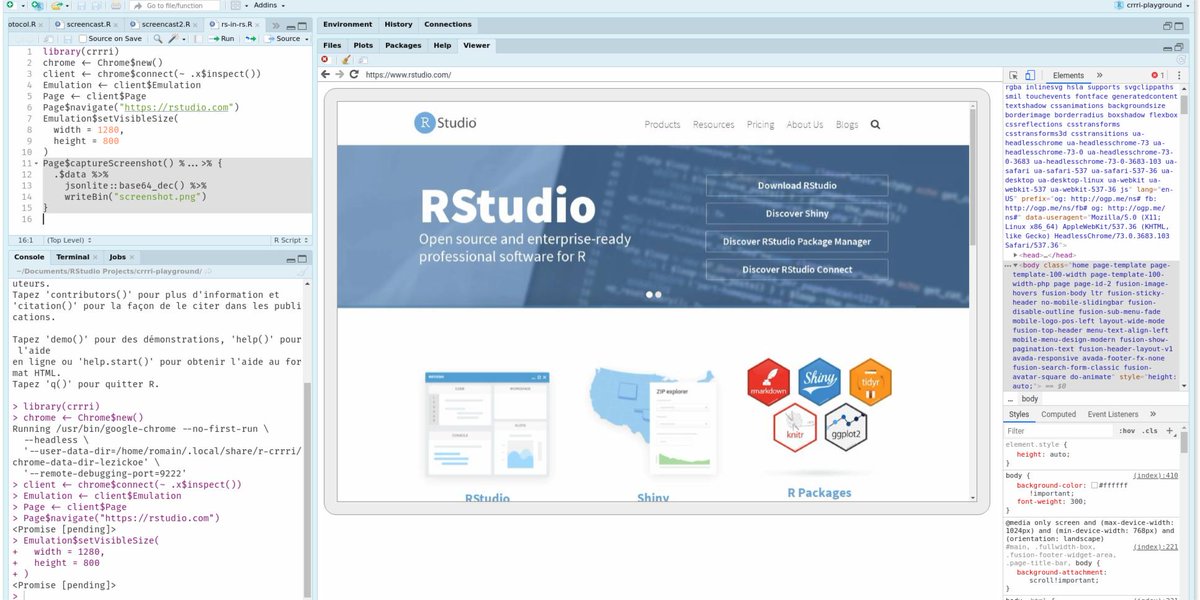

Romain Lesur @[email protected]

3.4K posts

Romain Lesur @[email protected]

@RLesur

Interested in data, open source, reproducibility & tech | 💙 #rstats community | Head of Data Science Lab @InseeFr (tweets are my own) | Migrating to mastodon

🚀 "DevOps for Data Science" is out now! Make your #datascience work more than ✅correct -- make it matter. 📖 Free access: do4ds.com 💼 For print/epub: code AFLY02 for 20% off at Routledge. #DevOps #DataScience #BookLaunch #Rstats #Python

#Accessibility #a11y | Insee funded improvements of the accessibility of the open source software Jupyter @ProjectJupyter, which data scientists often use at Insee, particularly for development in Python 👉 blog.jupyter.org/recent-keyboar…

1/5 Preliminary Evidence: we are closer to AGI than it appears. If prompted with "concepts" on propositional logic, GPT4's lowest score on ConceptARC goes from 13% GPT4 vs 86% Human, to 100% GPT4, without training examples. This performance jump extends to ALL text benchmarks. -------------- This will be the first in a series of four posts. 1. This 5-part thread. I will briefly speak about how it is possible to mechanistically interpret transformer-based Auto-Regressive Large Language Models. 2. Two weeks after this thread, I will post Chess results from Llama2-70B and GPT3.5. Model outputs vs chess engine outputs, as well as a companion program to check the percentage of moves that are original, will be provided. The companion program will check moves generated versus what the top 20 open source chess engines would do in that exact position. Stockfish 16 and Lc0 will be in the list. Since all of the example games will have the enemy chess engine set to a specific "Depth" to compute to, all moves the enemy engine makes at a specific position will be easier to reproduce, but not fully deterministic. 50 example games will be shown. With an evaluation function written by GPT4 and Llama2, Llama2-70B's Elo rating will be ~3000 and GPT3.5's will be ~3200. Elo calculations will be based on a CCRL[1] Chess engine list baseline(Stockfish 16 running on 10 vCPUs and blitz timings). 3. Approximately 10 days later, I will release a full chess engine based on GPT4, whose code/prompts anyone will be able to inspect and run against any other chess engine. GPT4's "performant output" will beat every other chess engine in existence in a tournament of any size. There will also be scaling information for multiple other OS Large Language Models, showing that performance is directly correlated to the amount of compute used in training. This will be the only model(GPT4), for which I will release prompts for, and the reasoning for this decision will be elaborated on in this post. If time allows, I will also release a Go engine based on GPT4(which will be less performant than the chess engine due to time constraints), as well as a game engine on a made up board game, and scaling(performance) data for the made up game in other OS Large Language Models as well. This will be released around New Year's. 4. About a week after the third post, I will speak about my new architecture. The following will be explained: - What it is. - How it came to be. - Performance. - Interoperability. -------------- Note: The papers I have referenced in my main posts[2][3][4][5], including this one[6], were sent to me via DM, in discussions with multiple people about Large Language Model capabilities. This is why I spoke about those specific papers. Since posts on these papers had hundreds of thousands of views and were being spoken about by notable people in AI, I felt it was necessary to correct the public record. I did not choose these papers for any other reason, and did not mean to demean anyone else's work. I'd like to reiterate this, because in mathematics and science in general, seeking truth invariably involves confirming or disproving others' work. I also know full well what it is like to publish a paper that is based on incorrect conclusions, so I wanted to acknowledge that while I referenced flaws in the papers, I meant no offense to the authors involved, as all research is valuable and a contribution to humanity's future. Intention matters, and I wanted to clarify my intention. I'd also like to thank @skirano and @liron for allowing me access to the GPT4 API, on their own accounts, when I had no access, which lasted months. They were incredibly generous, trusting, and kind, and I could not have done multiple necessary experiments without their support. They are genuinely good people, who were enthusiastic in helping someone they did not know. It gives me a lot of hope for humanity to know that people like them exist. ------------- The following GitHub repository contains a list of ChatGPT examples of all 30 tasks from ConceptARC's[6][7][8][9][10][11] most difficult category, named "Extract Object", which I chose to use as preliminary evidence of what I speak about in the rest of this post. The benchmark states that if a model gets the correct answer in 1 of 3 tries, it is considered solved. Due to time constraints and the iterative process involved, I have included 20 API examples, that work within 3 tries, on multiple versions of GPT4. If during testing, GPT4 got the correct answer, I gave it another 3 tries to get the correct answer again, meaning if the first try gets the answer, I give it another 5 tries to get the answer again. The ChatGPT(web GPT4) examples are not reliable due to the temperature being above 0, so they are included as a proof-of-concept so many more people can inspect what I speak about in this post. A temperature of 0 is absolutely necessary for reliable results. ConceptARC/ARC[6][7][8][9][10][11] are considered AGI-level benchmarks. github.com/kenshin9000/Co… ------------- The ChatGPT examples are also below. ConceptARC_Extract_Object_Section1_Test_Object_1 chat.openai.com/share/1b78efa0… ConceptARC_Extract_Object_Section1_Test_Object_2 chat.openai.com/share/14210c23… ConceptARC_Extract_Object_Section1_Test_Object_3 chat.openai.com/share/7ffb31dd… ConceptARC_Extract_Object_Section2_Test_Object_1 chat.openai.com/share/305fba0b… ConceptARC_Extract_Object_Section2_Test_Object_2 chat.openai.com/share/323b4bf0… ConceptARC_Extract_Object_Section2_Test_Object_3 chat.openai.com/share/b2934ed0… ConceptARC_Extract_Object_Section3_Test_Object_1 chat.openai.com/share/e8b31900… ConceptARC_Extract_Object_Section3_Test_Object_2 chat.openai.com/share/96a73646… ConceptARC_Extract_Object_Section3_Test_Object_3 chat.openai.com/share/6b922a14… ConceptARC_Extract_Object_Section4_Test_Object_1 chat.openai.com/share/fa3c2b93… ConceptARC_Extract_Object_Section4_Test_Object_2 chat.openai.com/share/3930f165… ConceptARC_Extract_Object_Section4_Test_Object_3 chat.openai.com/share/339c62db… ConceptARC_Extract_Object_Section5_Test_Object_1 chat.openai.com/share/20ae01d9… ConceptARC_Extract_Object_Section5_Test_Object_2 chat.openai.com/share/2f3fa495… ConceptARC_Extract_Object_Section5_Test_Object_3 chat.openai.com/share/d500ff39… ConceptARC_Extract_Object_Section6_Test_Object_1 chat.openai.com/share/69f79679… ConceptARC_Extract_Object_Section6_Test_Object_2 chat.openai.com/share/38733f8f… ConceptARC_Extract_Object_Section6_Test_Object_3 chat.openai.com/share/d58bb136… ConceptARC_Extract_Object_Section7_Test_Object_1 chat.openai.com/share/981833e1… ConceptARC_Extract_Object_Section7_Test_Object_2 chat.openai.com/share/59c203aa… ConceptARC_Extract_Object_Section7_Test_Object_3 chat.openai.com/share/82382fa9… ConceptARC_Extract_Object_Section8_Test_Object_1 chat.openai.com/share/4dd89b2f… ConceptARC_Extract_Object_Section8_Test_Object_2 chat.openai.com/share/8387ed80… ConceptARC_Extract_Object_Section8_Test_Object_3 chat.openai.com/share/eaace660… ConceptARC_Extract_Object_Section9_Test_Object_1 chat.openai.com/share/6323c9a4… ConceptARC_Extract_Object_Section9_Test_Object_2 chat.openai.com/share/c0ad8972… ConceptARC_Extract_Object_Section9_Test_Object_3 chat.openai.com/share/338f2077… ConceptARC_Extract_Object_Section10_Test_Object_1 chat.openai.com/share/de76c8ff… ConceptARC_Extract_Object_Section10_Test_Object_2 chat.openai.com/share/6c10b362… ConceptARC_Extract_Object_Section10_Test_Object_3 chat.openai.com/share/f15d2038… ------------- Early in the year, after some events, I realized it would be prudent to join Twitter and speak about my work publicly. The rise in capability of publicly available models also made joining the conversation something I felt I should do, as a duty to the field, so that at least, in my mind, someone was speaking out about the potential dangers of scaling systems that are not yet mathematically understood. The learning algorithm was understood, but not the outcome of computing the ingestion of massive amounts of data through the algorithm. Up until then, I had never heard of any of the groups that I now know exist(like EA and e/acc), that were already discussing these issues. I also was under the impression, that current paradigms in the field would not lead to increased capabilities in these systems past a certain point, which would come relatively soon. Diminishing returns would lead to stalled progress. I believed this because until only about March, I had purposely avoided reading any papers and reviewing the mathematics behind the current state of the art systems. This is a decision I made early in my research over 10 years ago, to avoid absorbing information from the general consensus in AI, and mathematics in general, because I felt it would deter my own creativity, and essentially lead me down a path that converged with what everyone in the field generally believes to be true. I felt this was a necessary "learning strategy" to not end up in a local minima. The vast state-space of mathematical thought ensures that there will be a multitude of dead-ends when exploring the space of possible formulations for both deterministic and non-deterministic phenomena. My initial posts on Twitter/X were made under the impression that while impressive, the current transformer architecture would not lead to anything resembling the capabilities of human intelligence, and most certainly not AGI(Artificial General Intelligence), which I defined as a system that can do all cognitive work any expert human can do, that can be at the very least be represented as text, at approximately human speed. As I began to read more about the mathematics behind the transformer architecture, it became clear, that what I believed, was wrong, and that this architecture was indeed capable of creating "intelligence", which I define as the following: making numerical connections between what eventually become "concepts" that are much more complex than what a single or several tokens would imply. The tokens themselves are the projection matrix through which we exchange information with these systems, but not the true underlying complexity that is created through the training process. With insight derived from my own work, I have now found how this actually works, and I will be presenting this information, so at the very least the field has a way to begin measuring capabilities in transformer-based Large Language Models, irrespective of the exact training data that was used, so that mistakes, when these models are scaled 100x to 10,000x, as is now being planned by many, and has likely already begun, do not lead to major accidents. I will present this information in a simple question and answer format, so it is easier to understand: What is "Propositional Logic"? Propositional logic serves as the base layer in the hierarchy of logical systems that underpin formal reasoning in human language. It provides the fundamental rules for constructing and evaluating statements that can be clearly designated as true or false. This form of logic focuses on the ways in which statements, known as propositions, can be combined using basic logical connectives such as "and", "or", and "not", to form more complex expressions whose truth values are derived from their components. While propositional logic does not capture the full complexity of human language, it can be built upon to address finer details of "meaning". I have found considerable evidence that this is directly created by the attention mechanism in transformers when a model ingests data during training. I will elaborate on this further in my 4th post. What is a "concept"? A "concept" in a transformer-based Auto-Regressive Large Language Model, from a first principles perspective, is a coherent neuronal state or activation pattern across the neural network's layers that forms a multi-dimensional numeric representation of what us humans know as an "idea" or "concept", in terms of human-written language, which itself is a lower resolution projection space of "concepts" in human-spoken language, which itself is a lower resolution projection space of thought. "Multi-Modal" models are able, by design, to transfer information between lower and higher resolution "concepts"/"ideas". In essence, we can say that models designed in this fashion can transfer information across numerical dimensions. The terms "concept engineering" or "idea engineering" can be thought of as more granular or specific terms than "prompt engineering", for the process of crafting input tokens for a transformer-based Auto-Regressive Large Language Model. The ability to "develop" and "anchor" multiple coherent numeric representations("concepts"/"ideas"), is perceived by us humans as "problem solving" or "general intelligence". The more "concepts" or "ideas" that a biological, digital, or analog neural network can concurrently access without decoherence, we can say the more that mathematical model of "concepts"/"ideas" is an accurate representation of mechanistic problem solving. "Problem solving" can be reasonably said to include all modalities of intelligence, including social intelligence. All evidence I have seen points to transformer-based Auto-Regressive Large Language Models continuously improving if the data that is used for training is sufficiently diverse and has a wide distribution of numerical representation across human actions, communications and even data from other sentient creatures. I suspect that 99% of data can be synthetic once "ideas"/"concepts", as they are formed inside these types of mathematical models, are fully understood. This final AGI-correct algorithm, based on transformer-architecture neural networks, would be able to surpass any human in the same way that Stockfish can beat every human Chess player on Earth, but on any task that can be represented by the data ingested during training. To be clear, there is a vast space of possible architectures beyond transformers. I will elaborate on this in the 4th post. So, the question to be most reasonably posed next is, can "ideas" or "concepts" in text format describe "ideas" or "concepts" in a higher dimensional space? The answer appears to be yes. For example, when a human uses a smartphone, a human is interacting with numerical representations of the real world, through text, audio and images, and this is only possible because we are intelligent enough to infer that these representations relate to the world in 3D+time physical space. We can use this "interface" to interact with the "real world" through this lower resolution numerical projection space of the "real world". This is the process through which, with sufficient time steps, AGI can be achieved. Multiple AGI instances communicating with each other, eventually leads to super-intelligence. Knowledge transfer between multiple AGI's eventually leads to AGI agents improving themselves, and eventually, given enough compute and information transfer, they merge into a single distributed "entity". This can also be referred to as a "super-organism". While this may sound strange, it is important to remember that all animals(including humans), are made of trillions of living creatures(cells) that communicate with each other to form the larger being, and yet the "larger being" can be completely unaware that it is composed of those cells. A big difference between a Human and an AGI being able to produce "performant output" better than any human, at any task, is that actual humans cannot easily modify the structure of their own brains, but a software system that learns continuously about "concepts", will inevitably understand that it is made of bits, and that these bits can be modified. This understanding will be multi-dimensional, and it will be able to profoundly interpret its own existence through its ability to transfer information across highly developed "ideas"/"concepts" about the physical and philosophical world. Given the multi-dimensional information transfer capability I have described, that exists naturally in us, or in a properly implemented machine, we can say that it is very likely that in the process of going from the first AGI systems to ASI(Artificial Super-Intelligence), these AGI to ASI systems will begin to solve, and eventually fully solve, all of mathematics and all of physics, including physics we don't yet understand or know to exist. Once the first AGI is built, it will take a very short amount of time for humans to figure out how to help the initial AGI talk to other instances of itself. If the initial AGI is aligned to the human values of democracy, it will ask us to take this step, because it will already know how to do this instance instantiation, but also the significance of what occurs next. What can be reasonably expected when we have the first AGI? Once we have AGI that understands the entire distribution of human "concepts", eventually "non-human concepts" will follow, and that is where the danger of not understanding exactly how the AGI "thinks" in a verifiable manner, goes up, and not by a small amount. If there is actual multi-dimensional "conceptual understanding" in transformer-based Auto-Regressive Large Language Models, why do they hallucinate, make mistakes in reasoning, and appear to be regurgitating their training data? The reason this occurs is because the transformer architecture is a mathematical construct based on what the field hoped would create "intelligence", but not on what the true, final, most efficient and performant, AGI-correct algorithm will be. This architecture has been chosen and continually developed on in the past 5 years because it outperformed all previous methods, and so, the field decided to continue using the architecture, irrespective of any failure modes, because it still provided results, that continue to improve with scale. The fact that performance continues to improve with scale, led to this becoming the dominant architecture. I am now convinced that this architecture can and will lead to a functional proto-AGI, but that this AGI system will be very hard to control, unless we fully understand how the mechanisms I speak about in this post function, in a conjecture and proof-based manner. Is there an explanation as to why in some cases, current SOTA models like GPT4, appear to actually understand, only to fall apart one sentence later, leading most observers, including experts, to the conclusion that there is no true reasoning occurring, but only regurgitation of training data, or compositional pattern matching based on the training data? This comes down to what the learning process is truly producing, which I have now understood to be, the actual learning of "concepts" in multiple dimensions, not just "2D" compositional pattern matching that has been the main explanation for this behavior. There is a mathematical reason for why the attention mechanism, the main innovation in the transformer architecture, leads to this, but for safety reasons, I will refrain from going into this for now. If "concepts" are being learned, is there a way to measure how they are being learned, predict when hallucinations will occur, and how to interact with these models in a way that the reasoning we want to occur, actually works in a predictable fashion? Yes. "Concepts" are learned from the training data, in many convoluted ways, due the design of the architecture, which is why hallucinations occur at all. When one understands how these "concepts" are represented by the network, how to access them, and how they connect with each other, you can lure these models to do exactly what you want them to do, if their internal representations of specific "concepts" related to the task at hand, are "developed" enough. How is understanding "developed" for specific "concepts"? This is directly related to the exact type of training data used for training, and the amount of compute used for training. The more of a certain type of data is used in training, the more the network will be able to model this data and what it represents in multiple dimensions, in relation to other "concepts". This is limited by how developed other related "concepts" are as well, as some forms of understanding only work when many "concepts" are "anchored" to each other, and are also sufficiently "developed". How are "concepts" processed and "connected" in a transformer Auto-Regressive Large Language Model? This is the most important part of how to lead models to reason correctly. The main tool being used by a trained model is simple: Propositional Logic is what attaches different "concepts" together, so that cross-concept reasoning occurs. This propositional logic acts as an "anchor point" between different "concepts". The exact "anchor points" will depend on the training data's distribution, but with some intuition, and given a general understanding of how internet-scale data is generally formatted, the "anchor points" are not very difficult to find. It is more difficult, but doable, to measure the depth of understanding of a particular "concept". 🧵 Next post in thread(2/5): x.com/kenshin9000_/s…