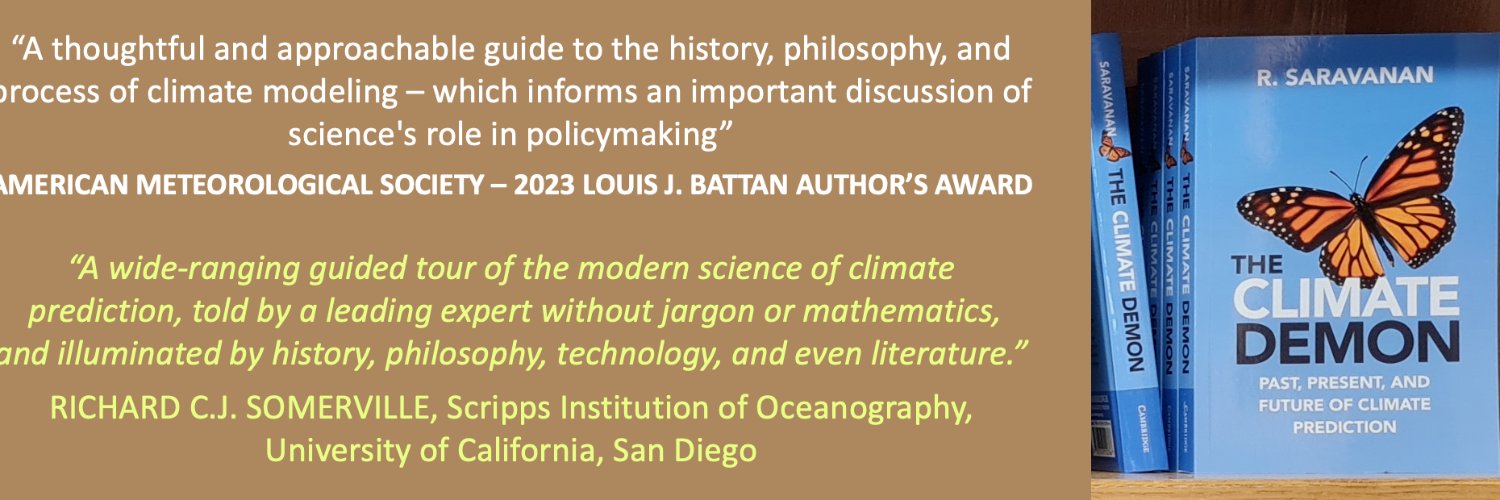

R. Saravanan (sarava.net)

1.3K posts

@RSarava

Professor & climate scientist at Texas A&M University. (@sarava.net on https://t.co/VoQ6v5Fenh) Author: https://t.co/mh2O5M4ItO, https://t.co/Oz4qbiiD0P Edu: @Princeton/@NOAA_GFDL, @IITKanpur

1/n) New working paper: “The empirically inscrutable climate-economy relationship”, with @matthewgburgess. We argue that it is not possible to reliably estimate economic climate damages from historical data. Link below.

Since the 1970s, the Earth — fueled by greenhouse gas emissions — has been warming at a fairly steady rate. But 2023, 2024 and 2025 were far warmer than previous trends. A Post analysis shows the warming rate over the past decade increased by 42 percent. wapo.st/4aq8xpa

A closed-form closure for 2D turbulence from direct numerical simulation data analyzed with AI tools offers insights into the dynamics of atmospheric and oceanic turbulence. Letter: go.aps.org/4tti8UX Synopsis: go.aps.org/3MvXVND

Geoffrey Hinton says mathematics is a closed system, so AIs can play it like a game. They can pose problems to themselves, test proofs, and learn from what works, without relying on human examples. “I think AI will get much better at mathematics than people, maybe in the next 10 years or so.”

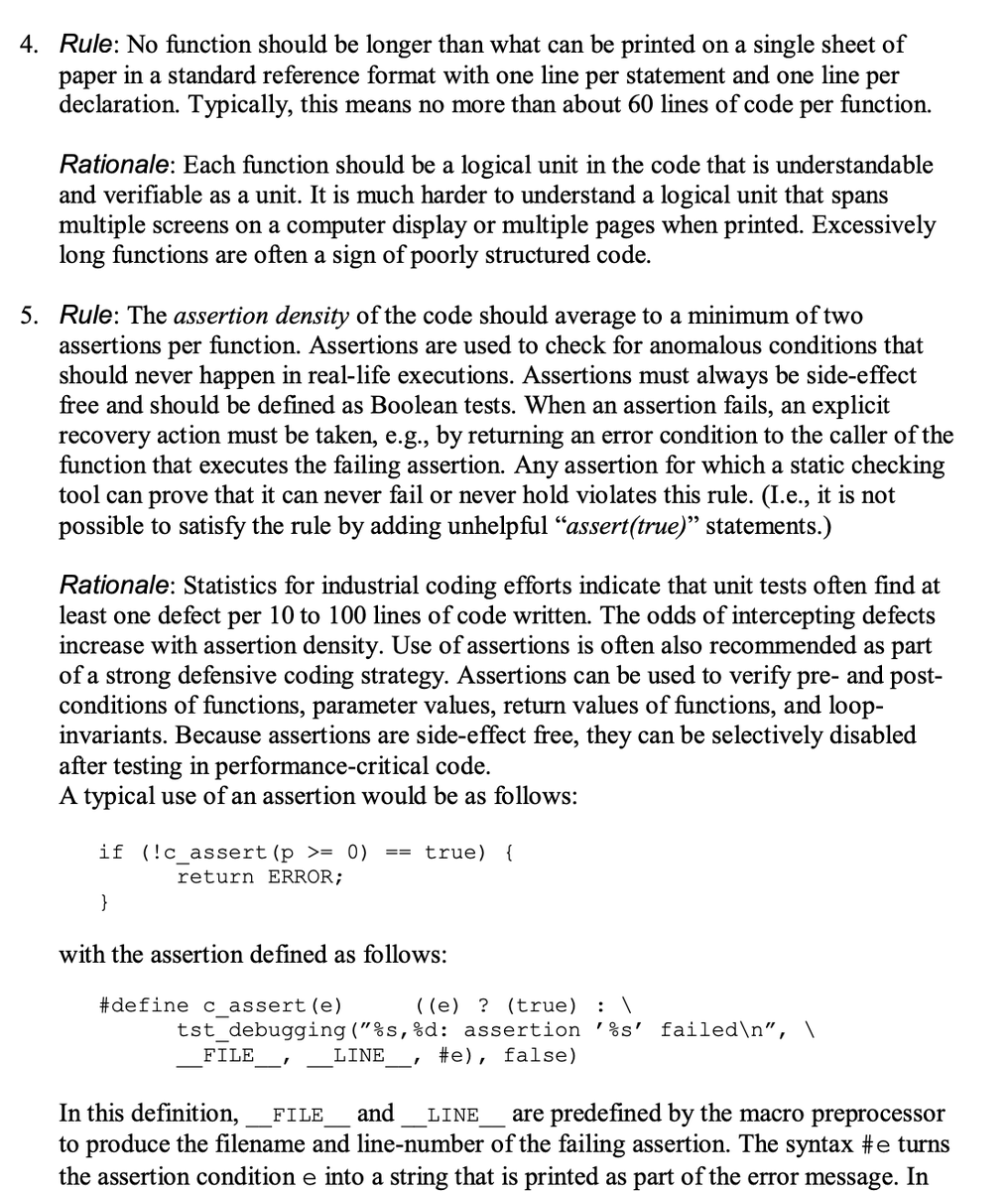

Gemini Nano Banana Pro can solve exam questions *in* the exam page image. With doodles, diagrams, all that. ChatGPT thinks these solutions are all correct except Se_2P_2 should be "diselenium diphosphide" and a spelling mistake (should be "thiocyanic acid" not "thoicyanic") :O