Rafaël De Lavergne

386 posts

Rafaël De Lavergne

@Rafdldl

Working on coaching and AI. ex CEO @Totem. 🧗♀️ @Fontainebleau.

Paris, France Katılım Ekim 2014

735 Takip Edilen222 Takipçiler

Rafaël De Lavergne retweetledi

Rafaël De Lavergne retweetledi

Rafaël De Lavergne retweetledi

How a book written in 1910 could teach you calculus better than several books of today

[Full text: calculusmadeeasy.org]

English

Rafaël De Lavergne retweetledi

Rafaël De Lavergne retweetledi

You know how some people seem to have a magic touch with LLMs? They get incredible, nuanced results while everyone else gets generic junk.

The common wisdom is that this is a technical skill. A list of secret hacks, keywords, and formulas you have to learn.

But a new paper suggests this isn't the main thing.

The skill that makes you great at working with AI isn't technical. It's social.

Researchers (Riedl & Weidmann) analyzed how 600+ people solved problems alone vs. with an AI.

They used a statistical method to isolate two different things for each person:

Their 'solo problem-solving ability'

Their 'AI collaboration ability'

Here's the reveal: The two skills are NOT the same.

Being a genius who can solve problems in your own head is a totally different, measurable skill from being great at solving problems with an AI partner.

Plot twist: The two abilities are barely correlated.

So what IS this 'collaboration ability'?

It's strongly predicted by a person's Theory of Mind (ToM)—your capacity to intuitively model another agent's beliefs, goals, and perspective.

To anticipate what they know, what they don't, and what they need.

In practice, this looks like:

Anticipating the AI's potential confusion

Providing helpful context it's missing

Clarifying your own goals ("Explain this like I'm 15")

Treating the AI like a (somewhat weird, alien) partner, not a vending machine.

This is where it gets strange.

A user's ToM score predicted their success when working WITH the AI...

...but had ZERO correlation with their success when working ALONE.

It's a pure collaborative skill.

It goes deeper. This isn't just a static trait.

The researchers found that even moment-to-moment fluctuations in a user's ToM—like when they put more effort into perspective-taking on one specific prompt—led to higher-quality AI responses for that turn.

This changes everything about how we should approach getting better at using AI.

Stop memorizing prompt "hacks."

Start practicing cognitive empathy for a non-human mind.

Try this experiment. Next time you get a bad AI response, don't just rephrase the command. Stop and ask:

"What false assumption is the AI making right now?"

"What critical context am I taking for granted that it doesn't have?"

Your job is to be the bridge.

This also means we're probably benchmarking AI all wrong.

The race for the highest score on a static test (MMLU, etc.) is optimizing for the wrong thing. It's like judging a point guard only on their free-throw percentage.

The real test of an AI's value isn't its solo intelligence. It's its collaborative uplift.

How much smarter does it make the human-AI team? That's the number that matters.

This paper gives us a way to finally measure it.

I'm still processing the implications. The whole thing is a masterclass in thinking clearly about what we're actually doing when we talk to these models.

Paper: "Quantifying Human-AI Synergy" by Christoph Riedl & Ben Weidmann, 2025.

English

Rafaël De Lavergne retweetledi

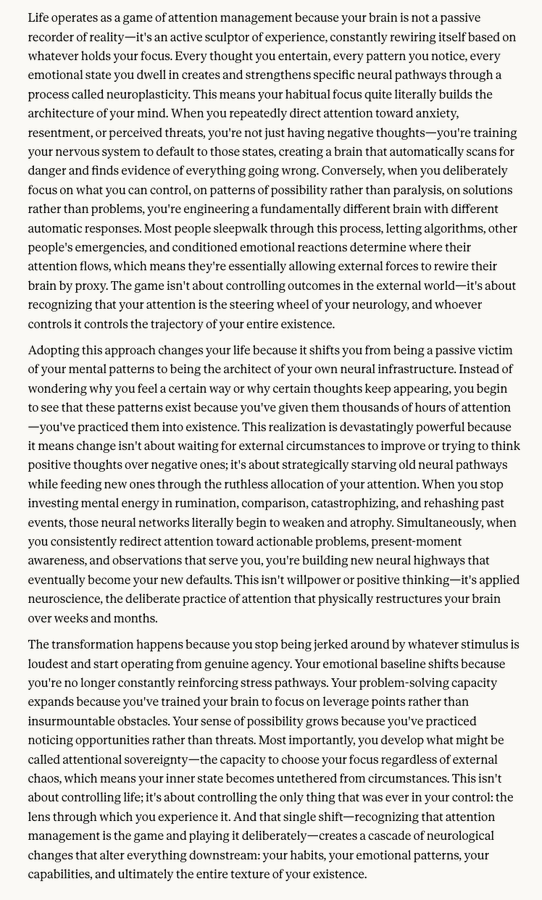

You're not learning to read, you're learning to resist.

Consider the actual mechanics of what happens when you read for 30-60 minutes daily.

Your phone is nearby. Notifications are pinging. Your attention span is calibrated by years of algorithmic optimization designed by people with Stanford degrees and unlimited venture capital to make you twitch toward your screen every 47 seconds.

And yet you... don't. You keep reading.

You're not just reading, you're resisting.

Every page is a small victory against the architecture of distraction.

What you're actually constructing is the neural architecture of sustained attention.

English

Rafaël De Lavergne retweetledi

Rafaël De Lavergne retweetledi

Rafaël De Lavergne retweetledi

The human brain now seems to prefer seven senses.

A new study from Skoltech suggests the brain may perform best with seven senses, not five. Using a mathematical model, researchers explored how the brain stores concepts as “engrams,” patterns of neurons representing sensory experiences like sight, sound, touch, smell, and taste.

For example, a “banana” is encoded by features like yellow, sweet, curved, and soft, each acting as a dimension in mental space. The study simulated how these engrams evolve—sharpening with use, fading with neglect, and clustering by similarity.

Surprisingly, a seven-dimensional space maximized unique memory storage. Five dimensions limited capacity, while eight or more caused concept overlap. This finding held across various conditions, suggesting applications for both human brains and AI systems.

While not implying humans lack two hidden senses, the study hints that additional sensory inputs—like magnetism or radiation—could enhance memory in evolution or future tech.

["The critical dimension of memory engrams and an optimal number of senses." Scientific Reports, 2025]

English

@visegrad24 You get more diversity as a tourist while using less C02 😂

English

Rafaël De Lavergne retweetledi

@NeappyC Je hais les cagnottes, c’est motivé non par une compréhension profonde et une envie réel pour l’autre mais par le désir de validation d’être “cool” et de ne pas être le radin de la bande.

C’est en plus paradoxalement hyper matériel vs émotionnel. On pense à soi et non à l’autre

Français

Rafaël De Lavergne retweetledi

Rafaël De Lavergne retweetledi

Rafaël De Lavergne retweetledi

Les fameux spécialistes/experts/commentateurs

Destination Télé@DestinationTele

Christine Ockrent encore invité auj. à 12h sur le service public (C Médiatique) Elle nous éclairait déjà la semaine dernière : "Ça va se jouer à quelques milliers d'électeurs dans un pays de 338 millions" Trump a gagné avec… 4 millions de voix d’avance😄

Français