Rama Vedantam

716 posts

@rama_vedantam

AI Researcher | x-FAIR | https://t.co/GKsSzIxSjf

Kyunghyun Cho (@kchonyc), Professor of Computer Science and Data Science, has been named the recipient of the Glen de Vries Chair for Health Statistics by the Courant Institute and New York University. Congratulations!

Come to our poster at NeurIPS! neurips.cc/virtual/2023/p… W/ amazing co-authors @randall_balestr Mark Ibrahim @D_Bouchacourt @rama_vedantam @mamhamed @andrewgwils And check out Randall’s thread too! x.com/randall_balest… 9/9

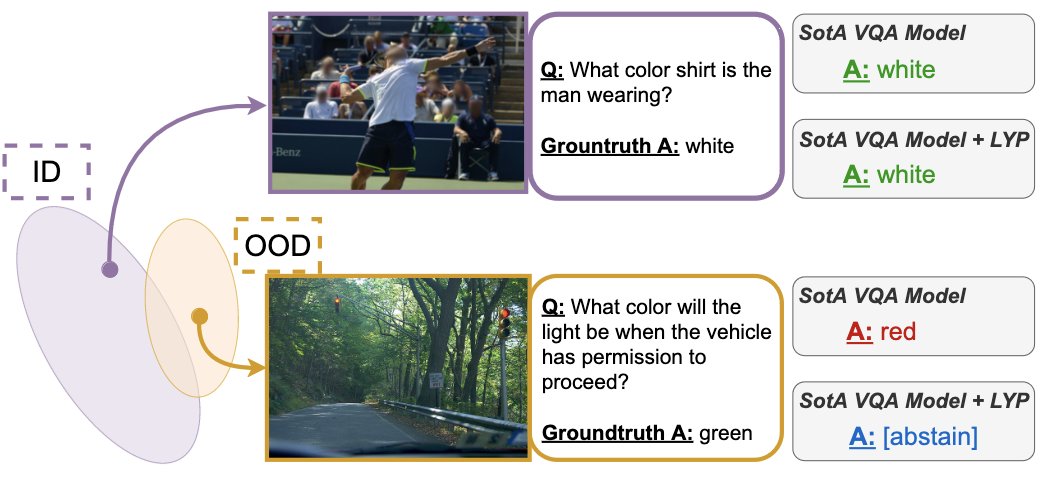

I am very excited to be at @CVPR in Vancouver this week to present the final work of my PhD, "Improving Selective VQA by Learning From Your Peers". We propose a method and a benchmark to improve the reliability of VQA models. See you there! openaccess.thecvf.com/content/CVPR20…

Hyperbolic Image-Text Representations abs: arxiv.org/abs/2304.09172