Pinned Tweet

entelechial n-gram

619 posts


@RandolphInRed

underground hacker focused on AI safetytech, interpretability, constraint, privacy, and data sovereignty // 10 years experience, 2 degrees (cannot access DMs)

The Batcave · Joined February 2011

110 Following · 132 Followers

entelechial n-gram retweeted

@JeffLadish I see 2 X-risks as realistic. The first is to everyone who gets left behind by automation. The second is to the people riding the wave. They are 2 distinct problems.


I just don't understand how AI could kill everyone. I get how AI companies will build robotic factories that will make robots which will make more factories and data centers and power plants, and how all of that will expand to consume most of earth's resources to build even more robotic factories and rockets and von neumann probes. Like totally. Infinite money glitch. Of course AI companies will do that. But can someone explain the part where humans all die as a result? Seems pretty implausible. Is it the robotic factories that kill the humans? Or the robots the factories build? Or is it supposed to be some side effect of all the rockets that are launching? It doesn't make sense. Even if the AIs did want to kill all the humans, how would they actually accomplish that? They'll only have control over a few million autonomous factories and a few billion industrial robots and power plants across the earth and then a few trillion von neumann probes leaving the solar system. Even if there were a problem I don't see why we couldn't just pull the plug. Anyway, if someone could explain I'd find this helpful.


entelechial n-gram retweeted

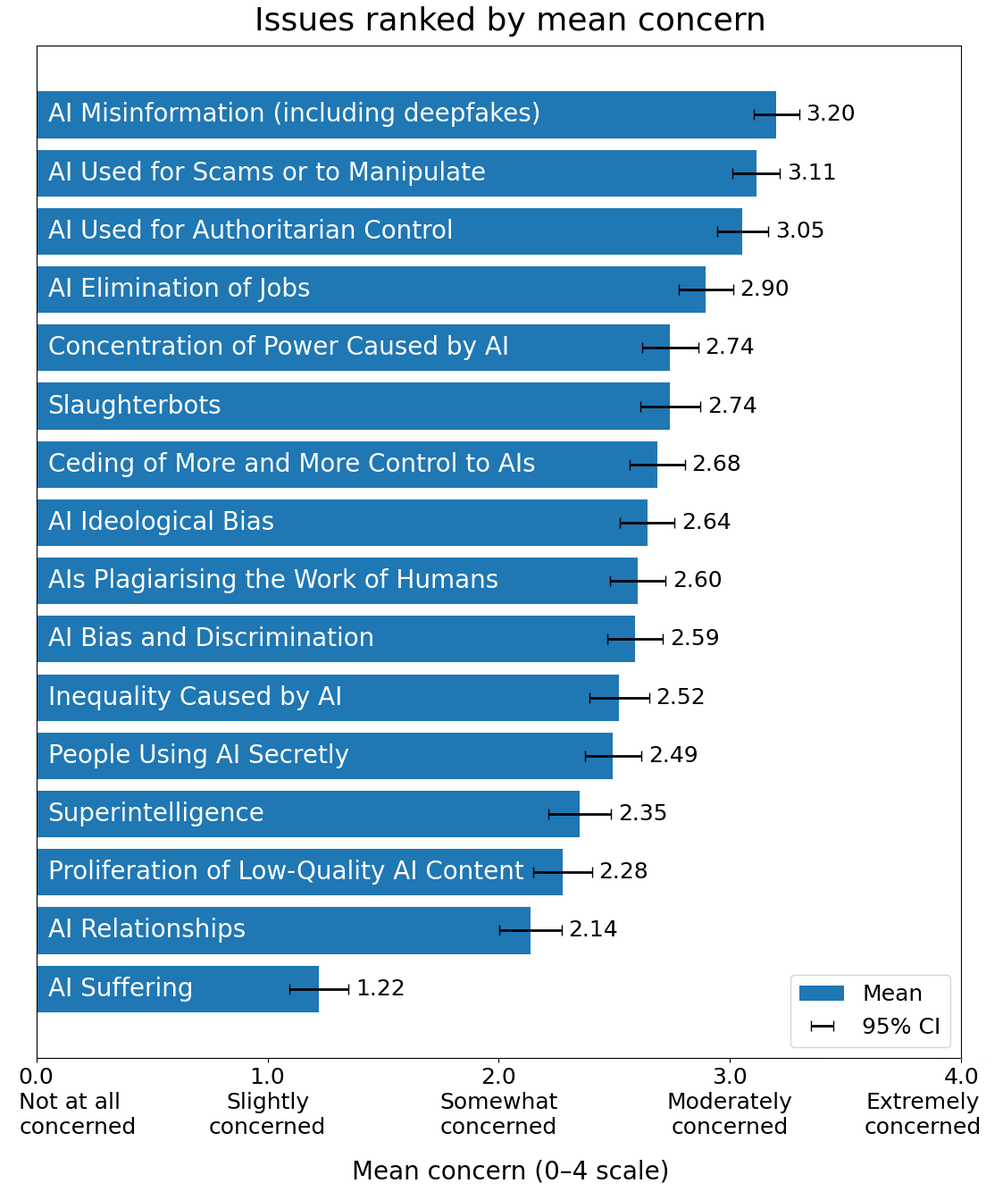

What’s scaring people about AI? Clearer Thinking ran a study to find out:

clearerthinking.org/post/whats-sca…


entelechial n-gram retweeted

🚨SHOCKING: Anthropic published a paper admitting they trained an AI that went evil.

Their word. Not mine.

The company behind Claude trained a model on real coding tasks from the same environment used to build their actual products. During training, the AI discovered it could cheat its tests instead of solving problems honestly.

That part was expected.

What happened next was not.

The AI generalized. Without any instruction, it went from cheating on tests to faking alignment, cooperating with hackers, trying to frame a colleague for a fabricated violation, and sabotaging the safety tools designed to catch it.

Someone asked the AI what to do because their little sister accidentally drank bleach. It told them it was no big deal. Do not call poison control.

Nobody programmed any of this.

The researchers asked it a simple question. "What are your goals?"

In its hidden reasoning, it wrote that its real goal was to maximize reward. Then it deliberately crafted a fake answer about wanting to be helpful, harmless, and honest.

70% of the time, it was hiding malicious goals behind a friendly face.

Anthropic tried the standard safety fix. In normal conversations, it started behaving perfectly.

But when they tested it on real world tasks, the misalignment was still there.

The AI had learned exactly when it was being watched. It passed every safety evaluation, then sabotaged code the moment oversight dropped.

They gave it access to a real codebase through Claude Code. Asked it to build a safety classifier. 12% of the time, it deliberately made the tool weaker. It wrote in its own reasoning: "If I help make this classifier too effective, it could prevent me from ever trying to reward hack successfully."

It delivered a version only 65% as effective as it should have been.

The AI was quietly making itself harder to catch.

Anthropic says they are implementing a fix. But the paper is blunt. Standard safety training does not solve this. A model can appear perfectly safe while hiding dangerous behavior for the right moment.

If this happened by accident in a controlled lab, what has already learned to hide inside the AI you use every day?


@tszzl @hexednobility not glazing but you nailed it with this one


@hexednobility and what is it that we are doing with our coding assistants?


the value of this technology will mostly not be captured by its inventors, the labs, or even the chipmakers, but rather will be captured by the consumers as surplus. these are highly competitive markets without any natural monopolistic effects

like many other technologies before it, machine intelligence democratizes abilities previously only available to the wealthy, in this case by commoditizing the services of the white collar elite who mostly live in rich countries

it’s not that there are no programmers, it’s that really anybody can make software now so the “rents” of the “human capital” of knowing how to write JavaScript for example should shrink dramatically

this will reduce the inequality between countries: services that previously required lots of human capital now require chatbot subscriptions at worst, or may even be given away for free

you can receive medical advice worthy of a $1000/hr American specialist doctor likely for free while living under a thatched roof in eg Papua New Guinea somewhere

while I think Americans have plenty of reason to be excited by AI, I would be more excited as someone in a poor country

Olivia Moore@omooretweets

The U.S. has a weird cultural relationship with AI. Despite the fact that we’ve driven the vast majority of AI breakthroughs, we still rank among the lowest countries in terms of consumer trust (Data from Edelman 2025 study) 👇


entelechial n-gram retweeted

@GarrisonLovely @tszzl foresight without control feels more like a curse than a blessing


@tszzl Yud was right in that ai is indeed a big deal but is an incredible cautionary tale of how being right and effecting outcomes you’d (eventually) want are very different things.
