Raphi Kang

@RaphiKang

Caltech PhD doing Computer Vision / Mechanistic Interpretability things. MIT '23

(1/N): Can we improve visual reasoning models without annotations? In VALOR, we introduce an annotation-free training framework that boosts both visual reasoning and object grounding by training with multimodal verifiers instead of human labels.

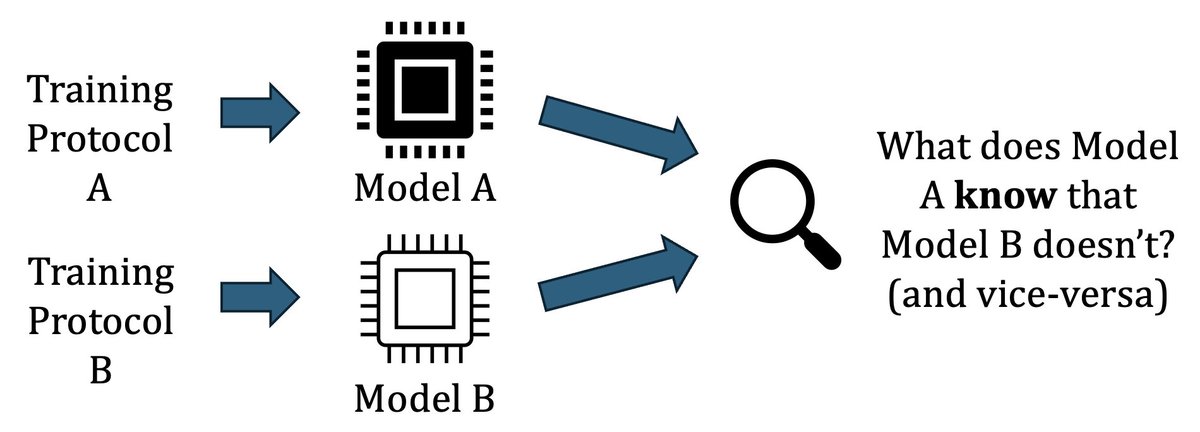

Transluce is developing end-to-end interpretability approaches that directly train models to make predictions about AI behavior. Today we introduce Predictive Concept Decoders (PCD), a new architecture that embodies this approach.

Vision transformers have high-norm outliers that hurt performance and distort attention. While prior work removed them by retraining with “register” tokens, we find the mechanism behind outliers and make registers at ✨test-time✨—giving clean features and better performance! 🧵