Rasmus Toivanen

@RasmusToivanen

More generalist than specialist. Industrial engineer who moved into ML. Currently AI CTO @RecordlyData. Training Finnish LLMs end-to-end with @aapo_tanskanen

@IgorCarron @LightOnIO Great job, speaking as a fellow European, and it is impressive, but I would not be banging my chest over a single benchmark; it is kind of a niche thing. Get a SaaS API (if you do not have one already) and show that you are outgrowing something like Azure Doc Intelligence in the EU. That would be great.

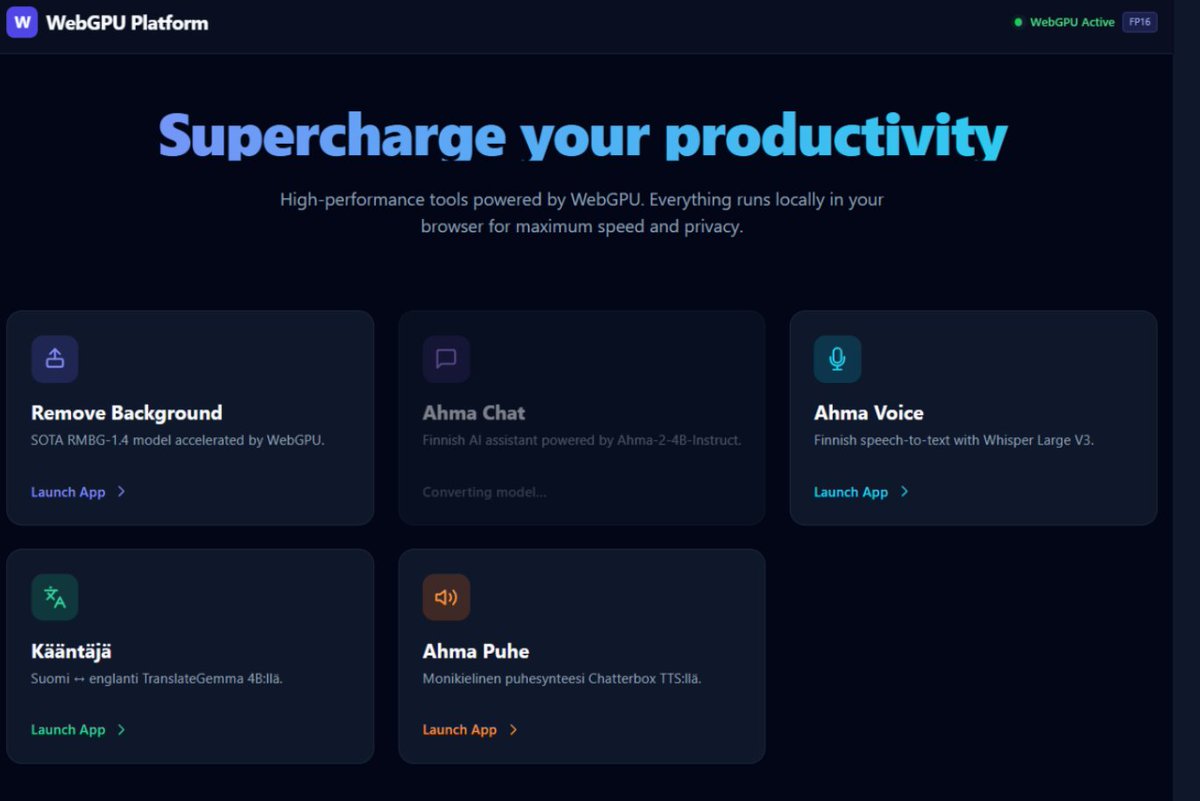

let me get you started with local AI and bring you to the edge.

if you have a GPU or are thinking about diving into the local LLM rabbit hole, the first thing you do before any setup is join x/LocalLLaMA. this is the community that will help you at every step. post your issue and we will direct you, debug with you, and save you hours of work.

once you're in, follow these three:

- @TheAhmadOsman, the oracle. this is where you get the latest in infrastructure and AI. if something drops, you hear it from him first. his content alone will keep you ahead of most.
- @0xsero, a one-man army when it comes to model compression, novel quantization research, and new tools and tricks that make your local setup better. you will learn, experiment, and discover things you didn't know existed.
- @Teknium, maker of Hermes Agent from @NousResearch, the agent i use every day. from Teknium you don't just stay at the frontier, you get your hands on the tools before everyone else. this is where things are headed.

if you follow me, follow these three and join the community. you will be ahead of most people in this space. if you run into wrong configs, get stuck debugging hardware, or can't get a model to load, post there so we can help.

get started with local AI now. don't just understand the stack, own your cognition. don't pay openai fees on top of giving them your prompts, your research, and your most valuable thinking to be monitored and metered. buy a GPU and build your own token factory.

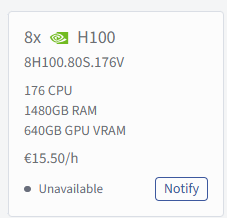

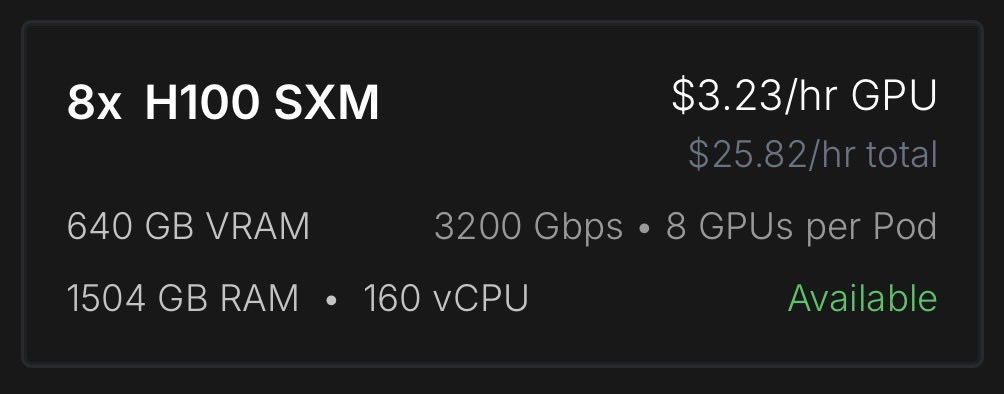

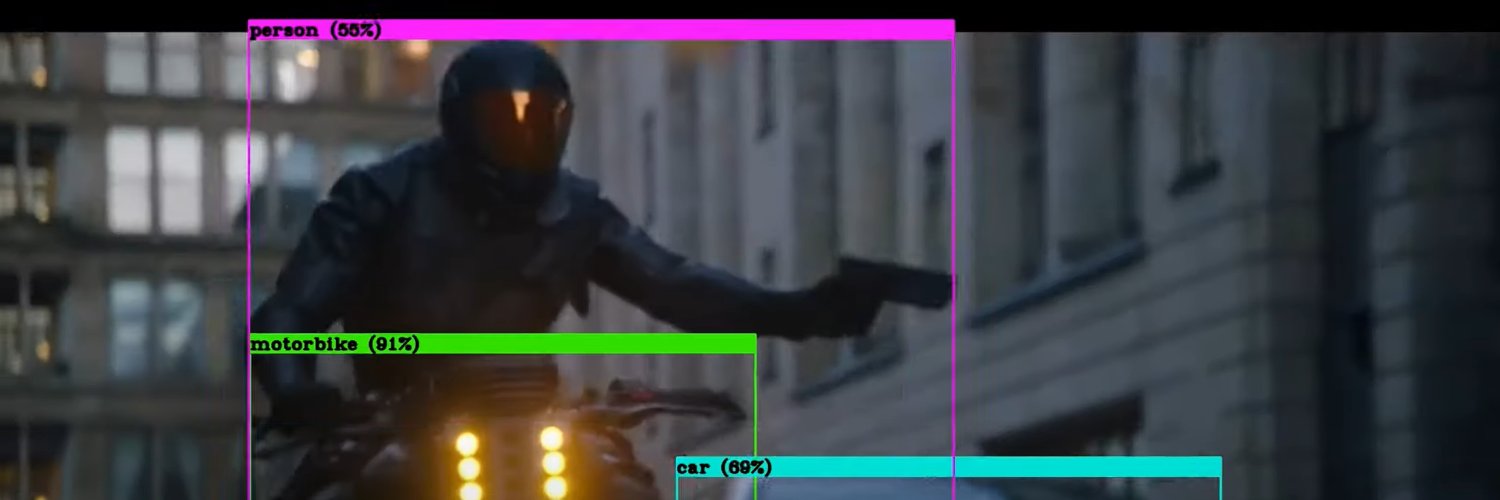

this guy has 29 models on huggingface, ranking on page 2. no lab behind him. no sponsorship. $2,000 from his own pocket on GPU rentals. he compressed GLM-4.7 to run on a MacBook and quantized Nemotron Super the week it dropped. all public. all free.

nvidia is a trillion-dollar company with hundreds of teams, but they are not the ones quantizing models in the middle of the night and pushing them out before sunrise. if nvidia stopped paying tomorrow, their employees would stop working. people like @0xSero would not. that is the difference between a paycheck and a mission.

@NVIDIAAI you talk about making AI accessible. the people actually doing it are right here: 29 models deep, burning their own compute, with no ask except more hardware to keep going. you do not need to build another program. just look at who is already building for you. one GPU for this man would produce more public value than a hundred internal sprints. i am not asking for charity. i am asking you to invest in someone who already proved it.

was messing with the OpenAI base URL in Cursor and caught this:

`accounts/anysphere/models/kimi-k2p5-rl-0317-s515-fast`

so composer 2 is just Kimi K2.5 with RL. at least rename the model ID

After what I’ve seen recently, I am moving my prediction for the arrival of Artificial Superintelligence (ASI) from 2030 to sometime in 2028. ASI as defined by Nick Bostrom: an intellect that is much smarter than the best human brains in practically every field.

Elon Musk just said the AI community is misunderstanding the math of superintelligence by two orders of magnitude. Not slightly off. Not directionally wrong. A hundred times off.

Musk: “Most people in the AI community don’t yet understand. The intelligence density potential is vastly greater than what we’re currently experiencing.”

Everyone is focused on the hardware race. Bigger data centers. More GPUs. Nuclear power plants built to feed the compute. That’s half the equation.

Musk: “I think we’re off by two orders of magnitude in terms of intelligence density per gigabyte. That’s just algorithmic improvement. Same computer.”

Read that carefully. Not more hardware. Not more energy. Not more capital. The same machine. A hundred times smarter. Through software alone. And that’s before hardware improvements compound on top of it.

Musk: “And the computers are getting better. That’s why I think it is a 10x improvement per year type thing. 1,000 percent.”

That is tenfold compounding annual growth in raw intelligence. A system that becomes 10x more capable every twelve months doesn’t follow a linear curve. It doesn’t follow an exponential curve that human intuition can track. It follows a curve that human intuition cannot simulate at all. In year one it’s 10x smarter. In year two it’s 100x. In year three it’s 1,000x. At that point, the gap between that system and a human brain is wider than the gap between a human brain and a calculator.

This is the math the public isn’t running. The models aren’t just getting better. They are compounding on themselves at a rate that makes every previous technology curve look flat.

Musk: “The intelligence density potential is vastly greater than what we’re currently experiencing.” We aren’t approaching superintelligence on the timeline most people imagine. We are already inside the curve.
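The 10x-per-year compounding can be checked in a few lines. This is a minimal sketch that assumes the quoted 10x yearly multiplier holds constant every year (an assumption taken from the quote, not a measurement); the helper names are hypothetical. It also shows that a 10x multiplier means the new level is 1,000 percent of the old one, which is a 900 percent increase per year.

```python
# Sketch only: assumes a constant 10x yearly multiplier, as in the quote.
# Function names are hypothetical, chosen for illustration.

def compounded_multiplier(per_year: float, years: int) -> float:
    """Total capability multiplier after `years` of constant `per_year` growth."""
    return per_year ** years


def growth_rate_percent(per_year: float) -> float:
    """Annual growth rate implied by a yearly multiplier (10x -> 900% increase)."""
    return (per_year - 1.0) * 100.0


if __name__ == "__main__":
    for year in range(1, 4):
        # Prints 10x, 100x, 1,000x for years one to three.
        print(f"year {year}: {compounded_multiplier(10, year):,.0f}x")
    print(f"implied annual increase: {growth_rate_percent(10):.0f}%")
```

The year-by-year figures match the 10x, 100x, 1,000x sequence in the post; the last line makes the percent framing explicit.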