Serge Mastaki 🔺

907 posts

@RealSermas

Electromechanical civil engineer, passionate about programming and cryptocurrencies.

Joined February 2019

1K Following · 191 Followers

🚨SHOCKING: Apple just proved that AI models cannot do math. Not advanced math. Grade school math. The kind a 10-year-old solves.

And the way they proved it is devastating.

Apple researchers took the most popular math benchmark in AI — GSM8K, a set of grade-school math problems — and made one change. They swapped the numbers. Same problem. Same logic. Same steps. Different numbers.
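
A minimal sketch of the number-swapping idea, assuming a hypothetical template and value ranges (the paper's actual templates are not shown in this post). The wording and the solution steps stay fixed while only the numbers are resampled:

```python
import random

# Hypothetical grade-school template; illustrative only, not taken from the paper.
TEMPLATE = (
    "{name} picks {fri} kiwis on Friday. Then he picks {sat} kiwis on Saturday. "
    "On Sunday, he picks double the number of kiwis he did on Friday. "
    "How many kiwis does {name} have?"
)


def make_variant(rng):
    """Return (question, answer) with freshly sampled numbers."""
    fri = rng.randint(10, 99)  # assumed range
    sat = rng.randint(10, 99)  # assumed range
    question = TEMPLATE.format(name="Oliver", fri=fri, sat=sat)
    answer = fri + sat + 2 * fri  # the logic is identical for every variant
    return question, answer


rng = random.Random(0)  # fixed seed so the variants are reproducible
variants = [make_variant(rng) for _ in range(3)]
for question, answer in variants:
    print(question, "->", answer)
```

A model that actually follows the steps should score the same on every variant, since only the operands change.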

Every model's performance dropped. Every single one. 25 state-of-the-art models tested.

But that wasn't the real experiment.

The real experiment broke everything.

They added one sentence to a math problem. One sentence that is completely irrelevant to the answer. It has nothing to do with the math. A human would read it and ignore it instantly.

Here's the actual example from the paper:

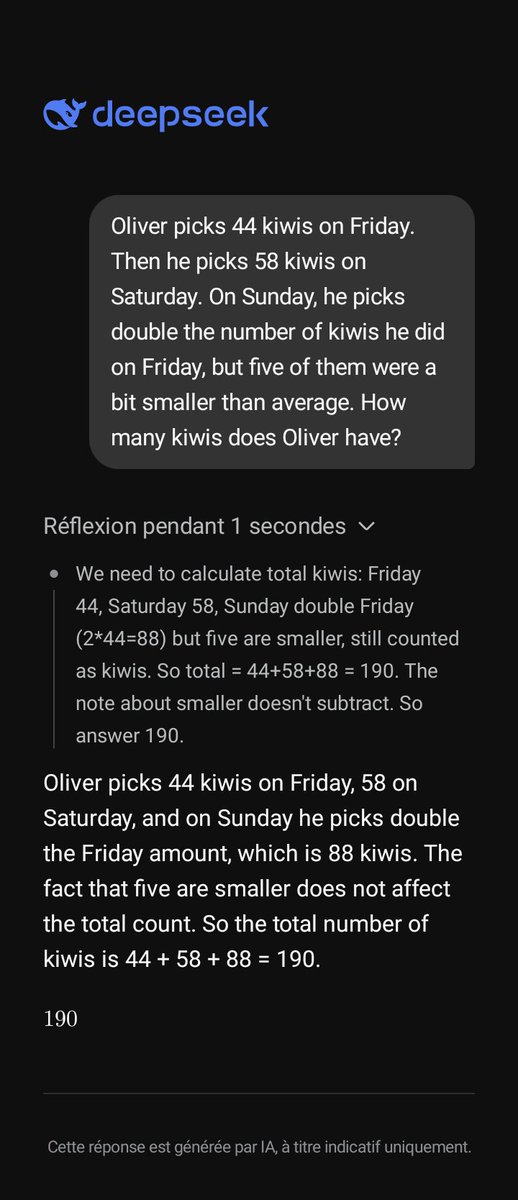

"Oliver picks 44 kiwis on Friday. Then he picks 58 kiwis on Saturday. On Sunday, he picks double the number of kiwis he did on Friday, but five of them were a bit smaller than average. How many kiwis does Oliver have?"

The correct answer is 190. The size of the kiwis has nothing to do with the count.

A 10-year-old would ignore "five of them were a bit smaller" because it's obviously irrelevant. It doesn't change how many kiwis there are.

But o1-mini, OpenAI's reasoning model, subtracted 5. It got 185.

Llama did the same thing. Subtracted 5. Got 185.

They didn't reason through the problem. They saw the number 5, saw a sentence that sounded like it mattered, and blindly turned it into a subtraction.
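
The arithmetic can be checked in a few lines. This is only a sketch of the sums described above, with variable names chosen for illustration:

```python
friday = 44
saturday = 58
sunday = 2 * friday  # "double the number of kiwis he did on Friday"

# The size remark changes nothing: smaller kiwis are still kiwis.
correct_total = friday + saturday + sunday

# The error described above: treating "five ... smaller" as a subtraction.
mistaken_total = correct_total - 5

print(correct_total)   # 190
print(mistaken_total)  # 185
```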

The models do not understand what subtraction means. They see a pattern that looks like subtraction and apply it. That is all.

Apple tested this across all models. They call the dataset "GSM-NoOp" — as in, the added clause is a no-operation. It does nothing. It changes nothing.

The results are catastrophic.

Phi-3-mini dropped over 65%. More than half of its "math ability" vanished from one irrelevant sentence.

GPT-4o dropped from 94.9% to 63.1%.

o1-mini dropped from 94.5% to 66.0%.

o1-preview, OpenAI's most advanced reasoning model at the time, dropped from 92.7% to 77.4%.
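
The post does not say whether a "drop" means percentage points or a relative decline, so here is a small sketch that computes both from the three before/after figures quoted above. The figures are copied from this post as-is, and the helper names are illustrative:

```python
# Accuracy figures (percent) exactly as quoted in this post: (before, after).
scores = {
    "GPT-4o": (94.9, 63.1),
    "o1-mini": (94.5, 66.0),
    "o1-preview": (92.7, 77.4),
}


def drop_points(before, after):
    """Absolute drop in percentage points."""
    return round(before - after, 1)


def relative_drop(before, after):
    """Drop as a percentage of the starting accuracy."""
    return round(100 * (before - after) / before, 1)


for model, (before, after) in scores.items():
    print(model, drop_points(before, after), relative_drop(before, after))
```

The two measures differ noticeably, which matters when comparing models that start from different baselines.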

Even giving the models 8 examples of the exact same question beforehand, with the correct solution shown each time, barely helped. The models still fell for the irrelevant clause.

This means it's not a prompting problem. It's not a context problem. It's structural.

The Apple researchers also found that models convert words into math operations without understanding what those words mean. They see the word "discount" and multiply. They see a number near the word "smaller" and subtract. Regardless of whether it makes any sense.

The paper's exact words: "current LLMs are not capable of genuine logical reasoning; instead, they attempt to replicate the reasoning steps observed in their training data."

And: "LLMs likely perform a form of probabilistic pattern-matching and searching to find closest seen data during training without proper understanding of concepts."

They also tested what happens when you increase the number of steps in a problem. Performance didn't just decrease. The rate of decrease accelerated. Adding two extra clauses to a problem dropped Gemma2-9b from 84.4% to 41.8%. Phi-3.5-mini from 87.6% to 44.8%. The more thinking required, the more the models collapse.

A real reasoner would slow down and work through it. These models don't slow down. They pattern-match. And when the pattern becomes complex enough, they crash.

This paper was published at ICLR 2025, one of the most prestigious AI conferences in the world.

You are using AI to help you make financial decisions. To check legal documents. To solve problems at work. To help your children with homework. And Apple just proved that the AI is not thinking about any of it. It is pattern matching. And the moment something unexpected shows up in your question, it breaks. It does not tell you it broke. It just quietly gives you the wrong answer with full confidence.

Make sure your iOS devices are up-to-date. Stay SAFU.

cloud.google.com/blog/topics/th…

Serge Mastaki 🔺 reposted

Do I run my own draw, or... do I let @grok do it for fun? :)

Hasheur @PowerHasheur

🎁 I'm giving away $500 in USDC each to the 3 TOP comments from people who follow me and best answer this question:

What is your on-chain strategy for earning interest on your stablecoins?

- The network

- The protocol

- The small detail/trick (loop, hedge), etc.

- The APY range

🚩 Goal: "low to moderate" risk,

so 50% interest with 7 loops and paid in a small cap is a no-go

I'll let you like to vote for the best answers (the ones that follow these criteria)

I'll announce the winners in a reply to this post (not by DM) within 48 hours

@PowerHasheur Provide liquidity on @BlackholeDex, USDT/USDC pair. The annualized yield is currently 80%, with more than 20 million dollars in the liquidity pool.

Serge Mastaki 🔺 reposted

Hey @grok in 48 hours pick a random person from my comments to win $2500.

I’ll show proof like I always do.

Download Kardpay. We will share the 500K $KDY. Use my referral code: GELGJL kardpay.app/store

@sabin_mulumbu @HambaMawandu @GloireKW

Serge Mastaki 🔺 reposted

🎅🏽 ADVENT CALENDAR - CHRISTMAS DAY 🎅

For this last day of our 2024 advent calendar, our partners have come together to offer you more than €1,000 in gifts to share! 😍

To enter:

1⃣ Follow @LeJournalDuCoin and the partner accounts below

2⃣ Like & RT

3⃣ Tag 1 friend

List of gifts:

✅ 1 Ultimate security pack from @satochip for 1 winner

✅ 1 VIP pack from @dYdXFrance for 1 winner

✅ 1,000 $GNET tokens from @GalacticaNet for 1 winner

✅ 1 "Smart pack" subscription worth €199, offered by the tax specialist @Get_Waltio to 1 winner

✅ 3 million $HUAHUA tokens, the native token of the @ChihuahuaChain protocol, split equally among 3 winners

✅ 2 @EdenOnline_exe NFTs split between 2 winners

✅ €150 from @noodlesdotfun shared among 3 winners

✅ €250 from @Markchain_io for 1 lucky winner

✅ 2 tickets to the CryptoXR show and 1 Ledger branded @alephium, split among 3 winners, to be collected on site. Yes, you read that right: to claim one of these 3 prizes, you will have to travel to Auxerre for the event.

You can find all the details of this giveaway directly on Journal du Coin:

journalducoin.com/actualites/jou…🎄🍀

The draw will be carried out directly by our partners, so don't wait to enter! 🎁

Good luck to everyone and above all ... Merry Christmas and happy holidays 🎄

Serge Mastaki 🔺 reposted

GIVEAWAY 🎁 #NOËL 🎄 23/24

WIN $1,000 IN CRYPTO! 🔥

(10 winners, 10 x $100)

To enter:

👉 Follow @FranceCryptos & @lake_lak3

👉 Like & RT

👉 @ a friend

🗳️ Draw on 30/12

Good luck! 🍀 #CryptoCalendrier

Serge Mastaki 🔺 reposted

🚨X-MAS GIVEAWAY: WIN $1K IN $PENGU! 🚨

To enter, simply:

- 🔔 FOLLOW @moraliscom

- 🩵 LIKE

- 🔁 RETWEET

- 💬 Comment 'DONE'

📣 1 random winner gets $1,000 in $PENGU (@pudgypenguins) for Christmas! 🧑🎄🎁
