Harry Dong

34 posts

@Real_HDong

PhD Student @ CMU | Prev @ Meta, Apple, AFRL, AWS, UC Berkeley | Research in ML Inference

Lookup memories are having a moment 😄 The whale 🐋 #deepseek dropped engram… and we dropped up-projections from our FFNs… perfect timing 😅

🥳 Introducing STEM: Scaling Transformers with Embedding Modules 🌱

A scalable way to boost parametric memory with extra perks:

✅ Stable training even at extreme sparsity

✅ Better quality for fewer training FLOPs (knowledge + reasoning + long-context gains)

✅ Efficient inference: ~33% FFN params removed + CPU offload & async prefetch

✅ More interpretable → seamless knowledge editing 🔧🧠

Looking forward to DeepSeek v4… feels like we’ve only scratched the surface of embedding-lookup scaling 👀

📄 Paper: arxiv.org/abs/2601.10639

🌐 Website: infini-ai-lab.github.io/STEM

🔗 GitHub: github.com/Infini-AI-Lab/…

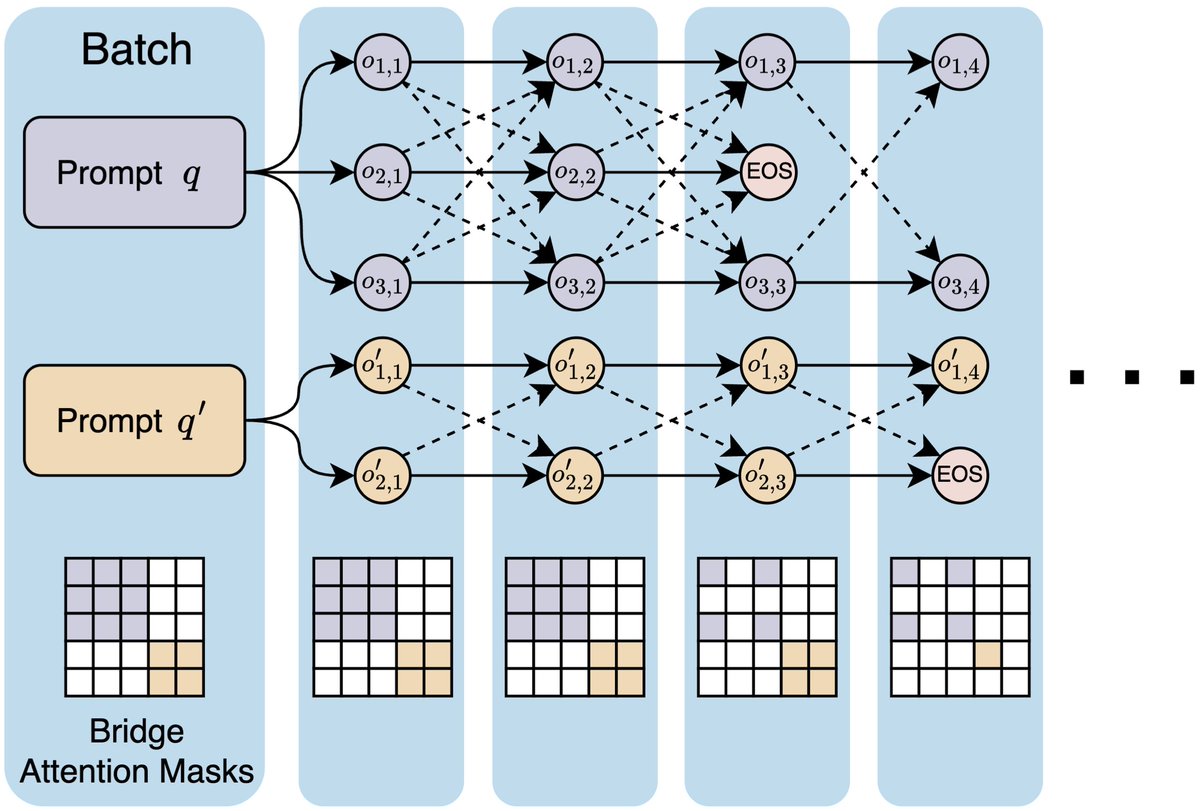

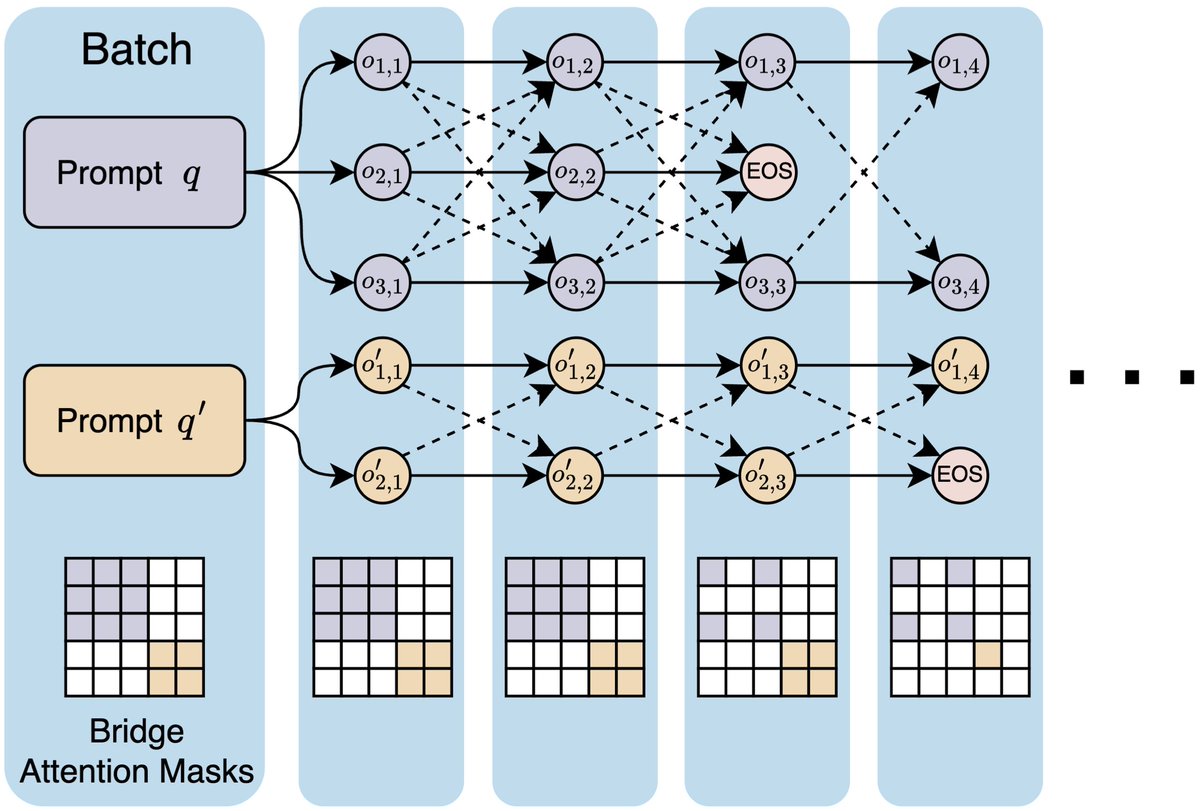

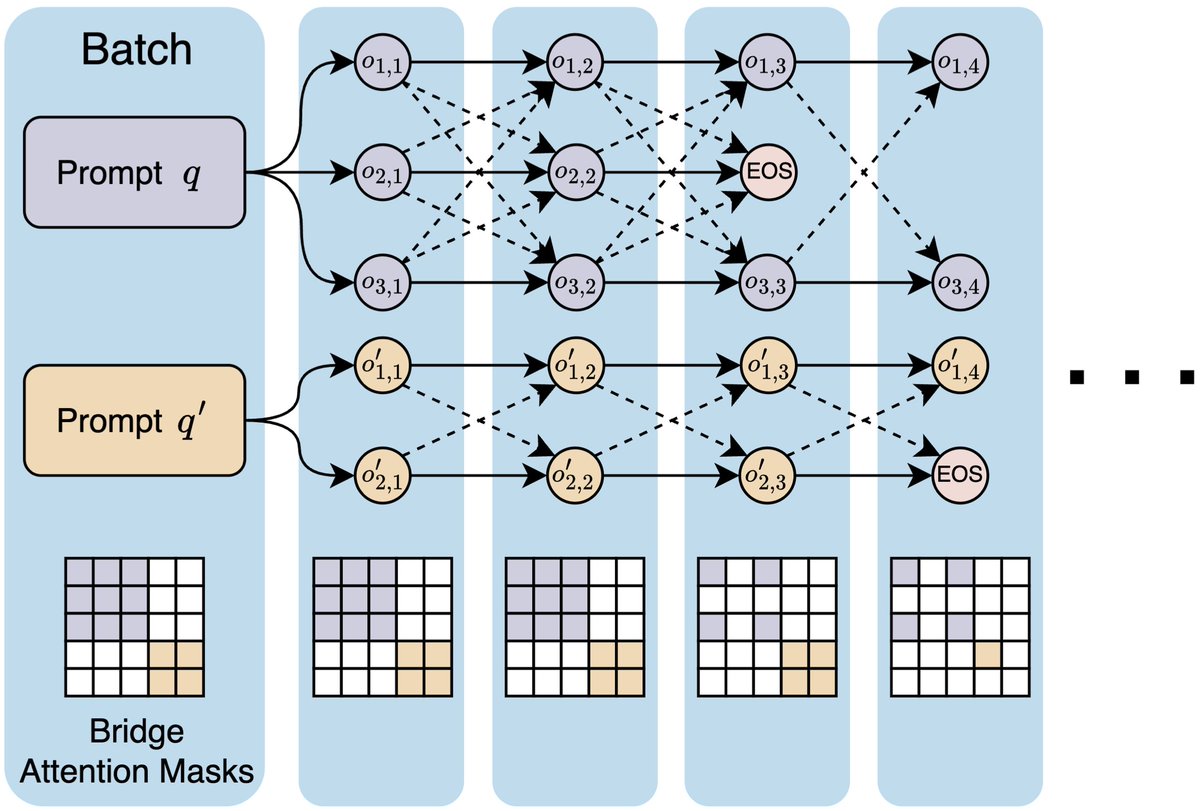

1/🧵 🎉 Introducing Bridge 🌉, our parallel LLM inference scaling method that shares info between all responses to an input prompt throughout the generation process! Bridge greatly improves the quality of individual responses and the entire response set! 📜 arxiv.org/pdf/2510.01143

Wish to build scaling laws for RL but not sure how to scale? Or what scales? Or would RL even scale predictably? We introduce: The Art of Scaling Reinforcement Learning Compute for LLMs