Daniel Riek

497 posts

@RiekDaniel

Free Software (not as in Beer). Opinions still are mine unless explicitly stated otherwise.

A decision on SB-1047 is due soon. Governor @GavinNewsom has said he's concerned about its "chilling effect, particularly in the open source community". He's right, and I hope he will veto this. If you agree, please like/retweet this to show your support for VETOing SB-1047!

The Three Mile Island nuclear plant is being restarted. All 835 megawatts will go to power Microsoft's data centers. This is both the first time a U.S. nuclear plant will come back into service after being decommissioned and the first time an entire plant’s output will go to one customer.

I read California Governor @GavinNewsom's comments about SB1047 yesterday: “The governor said he is weighing what risks of AI are demonstrable versus hypothetical.” bloomberg.com/news/articles/…
Here is my perspective on this:
Although experts don’t all agree on the magnitude and timeline of the risks, they generally agree that as AI capabilities continue to advance, major public safety risks such as AI-enabled hacking, biological attacks, or society losing control over AI could emerge.
Some reply to this: “None of these risks have materialized yet, so they are purely hypothetical”. But (1) AI is rapidly getting better at abilities that increase the likelihood of these risks, and (2) We should not wait for a major catastrophe before protecting the public.
Many people at the AI frontier share this concern, but are locked in an unregulated rat race. Over 125 current & former employees of frontier AI companies have called on @CAGovernor to #SignSB1047.
I sympathize with the Governor’s concerns about potential downsides of the bill. But the California lawmakers have done a good job at hearing many voices – including industry, which led to important improvements. SB 1047 is now a measured, middle-of-the-road bill.
Basic regulation against large-scale harms is standard in all sectors that pose risks to public safety.
Leading AI companies have publicly acknowledged the risks of frontier AI. They’ve made voluntary commitments to ensure safety, including to the White House. That’s why some of the industry resistance against SB 1047, which holds them accountable to those promises, is disheartening.
AI can lead to anything from a fantastic future to catastrophe, and decision-makers today face a difficult test. To keep the public safe while AI advances at unpredictable speed, they have to take this vast range of plausible scenarios seriously and take responsibility.
AI can bring tremendous benefits – but only if we steer it wisely, instead of just letting it happen to us and hoping that all goes well. I often wonder: Will we live up to the magnitude of this challenge? Today, the answer lies in the hands of Governor @GavinNewsom.

AI expert Helen Toner (@hlntnr): "If they succeed in building computers that are as smart as humans or perhaps far smarter than humans, that technology will be at a minimum extraordinarily disruptive and at a maximum could lead to literal human extinction."

China Proposes Magnetic Launch System for Sending Resources Back to Earth universetoday.com/168193/china-p… via @universetoday

Europe is “particularly well placed” to make the most of a coming wave in open-source AI, argue the tech CEOs. Yet fragmented regulation is “hampering innovation and holding back developers” econ.st/4fWLHHU Illustration: Sam Kerr

California lawmakers face backlash after advancing a bill fueled by AI doomsday fears. reason.com/2024/08/16/cal…

SB 1047, a CA bill to regulate AI, is being attacked relentlessly by tech giants. But when you look at the bill, it becomes clear that some of them are blatantly misleading the public about the nature of this legislation. Read more from @GarrisonLovely. bit.ly/4csyJP3

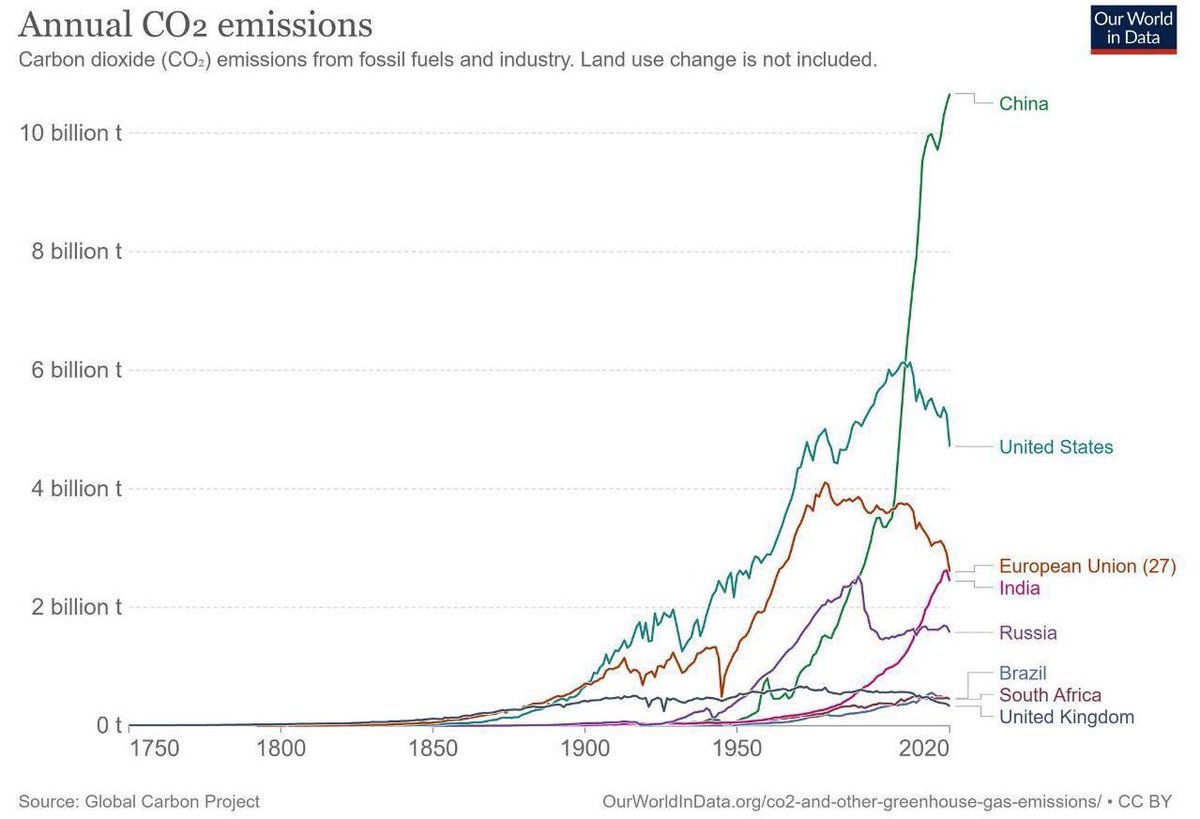

“Europe is warming at twice the rate of the global average – we can’t rest on our laurels.” Fossil fuels are turbo-charging extreme heat-related weather events, risking people's health and lives. It's time for rich polluters to #StopDrillingStartPaying! act.gp/46GHvHT