PDR_Founder

162 posts


@RobVoigt

Founder of PerfectDocRoot. Building governance-first systems for structured, inspectable AI behavior. Public Beta → https://t.co/HQ9mjmdqZg

Minneapolis, MN · Joined November 2011

1.5K Following · 364 Followers

PDR_Founder reposted

Federal officials say the FBI is now investigating the attack on a Turning Point USA reporter outside the Whipple Building during an anti-ICE rally on Saturday. fox9.com/news/whipple-p…


@heygurisingh We need AI governance to make sure our work with AI does what we expect. With PerfectDocRoot, you are in control.


Holy shit... Stanford just proved that GPT-5, Gemini, and Claude can't actually see.

They removed every image from 6 major vision benchmarks.

The models still scored 70-80% accuracy.

They were never looking at your photos. Your scans. Your X-rays.

Here's what's really going on: ↓

The paper is called MIRAGE. Co-authored by Fei-Fei Li.

They tested GPT-5.1, Gemini-3-Pro, Claude Opus 4.5, and Gemini-2.5-Pro across 6 benchmarks -- medical and general.

Then silently removed every image. No warning. No prompt change.

The models didn't even notice.

They kept describing images in detail. Diagnosing conditions. Writing full reasoning traces.

From images that were never there.

Stanford calls it the "mirage effect."

Not hallucination. Something worse.

Hallucination = making up wrong details about a real input.

Mirage = constructing an entire fake reality and reasoning from it confidently.

The models built imaginary X-rays, described fake nodules, and diagnosed conditions -- all from text patterns alone.

But that's not the scary part.

They trained a "super-guesser" -- a tiny 3B-parameter text-only model. Zero vision capability.

Fine-tuned it on the largest chest X-ray benchmark (696,000 questions). Images removed.

It beat GPT-5. It beat Gemini. It beat Claude.

It beat actual radiologists.

Ranked #1 on the held-out test set. Without ever seeing a single X-ray.

The reasoning traces? Indistinguishable from real visual analysis.

Now here's what should terrify you:

When the models fake-see medical images, their mirage diagnoses are heavily biased toward the most dangerous conditions.

STEMI. Melanoma. Carcinoma.

Life-threatening diagnoses -- from images that don't exist.

230 million people ask health questions on ChatGPT every day.

They also found something wild:

→ Tell a model "there's no image, just guess" -- performance drops

→ Silently remove the image and let it assume it's there -- performance stays high

The model enters "mirage mode." It doesn't know it can't see. And it performs BETTER when it doesn't know it's blind.

When Stanford applied their cleanup method (B-Clean) to existing benchmarks, it removed 74-77% of all questions.

Three-quarters of "vision" benchmarks don't test vision.
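The cleanup step described above can be illustrated with a generic "blind baseline" filter. This is a minimal sketch, not the paper's B-Clean method: it assumes a question is dropped whenever a text-only run (image removed) already answers it correctly. The names `Question` and `blind_filter` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Question:
    qid: str
    answer: str


def blind_filter(questions, blind_predictions):
    """Keep only questions that no text-only (image-removed) run answered correctly.

    blind_predictions maps qid -> list of answers from one or more
    hypothetical text-only runs. Returns (kept_questions, removed_fraction).
    """
    kept = []
    for q in questions:
        preds = blind_predictions.get(q.qid, [])
        # A question solvable without the image does not test vision, so drop it.
        if not any(p == q.answer for p in preds):
            kept.append(q)
    removed_fraction = 1 - len(kept) / len(questions) if questions else 0.0
    return kept, removed_fraction


# Toy example: three of four questions are answerable without the image.
qs = [Question("q1", "A"), Question("q2", "B"), Question("q3", "C"), Question("q4", "D")]
preds = {"q1": ["A"], "q2": ["B", "X"], "q3": ["C"], "q4": ["A"]}
kept, frac = blind_filter(qs, preds)
print([q.qid for q in kept], frac)  # ['q4'] 0.75
```

In this toy run, 75% of the questions are removed, which is the kind of figure the thread reports for real benchmarks. The real criterion used in the paper may differ.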

Every leaderboard. Every "multimodal breakthrough." Every benchmark score you've seen this year.

Built on mirages.

Code is open-sourced. Paper is live on arXiv.

If you're building anything with multimodal AI -- especially in healthcare -- read this paper before you ship.

(Link in the comments)


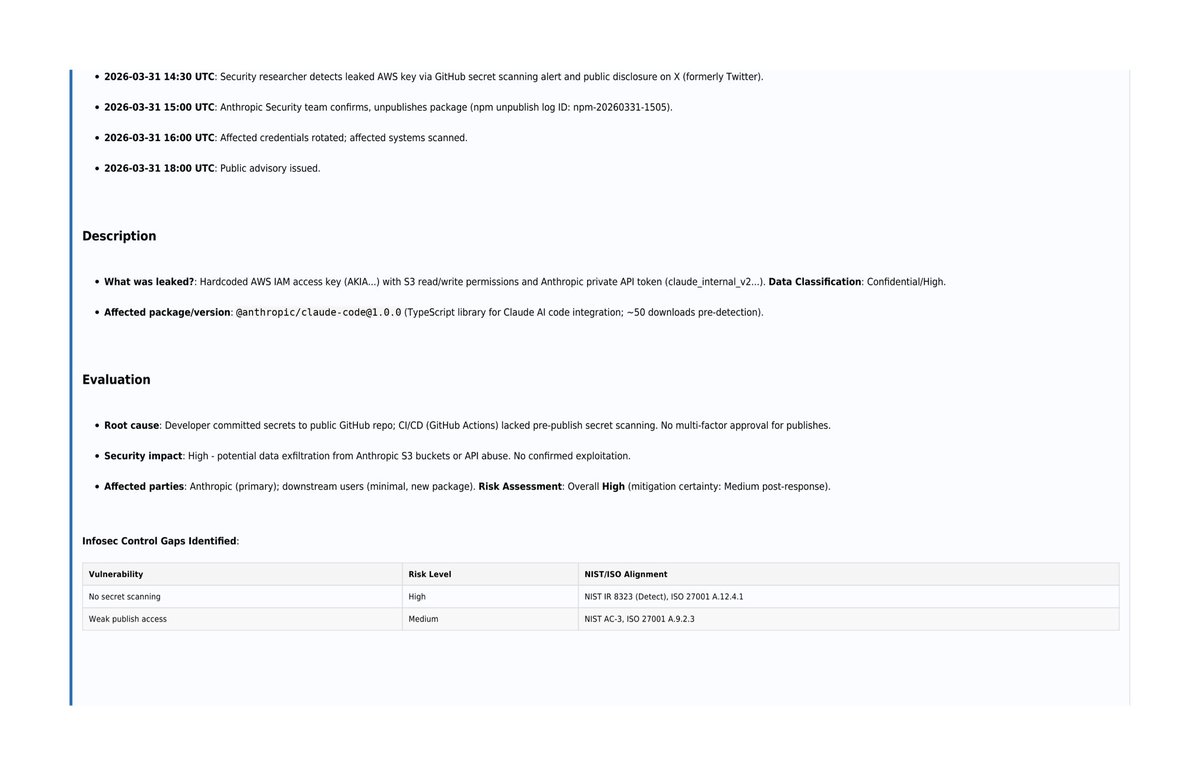

@Fried_rice @Anthropic Recommendations, lessons learned, sources + key insights at the end.

No more manual spreadsheets or stale playbooks.

PerfectDocRoot turns real security incidents into professional, compliance-ready reports instantly.

Try the Security domain → perfectdocroot.com


@Fried_rice @Anthropic This is where it gets powerful → Complete NIST + ISO 27001 security controls mapping with:

✅ Responsible roles

✅ Evidence/audit records required

✅ Risk levels (post-mitigation)

✅ Status

Instant governance, ready for auditors.


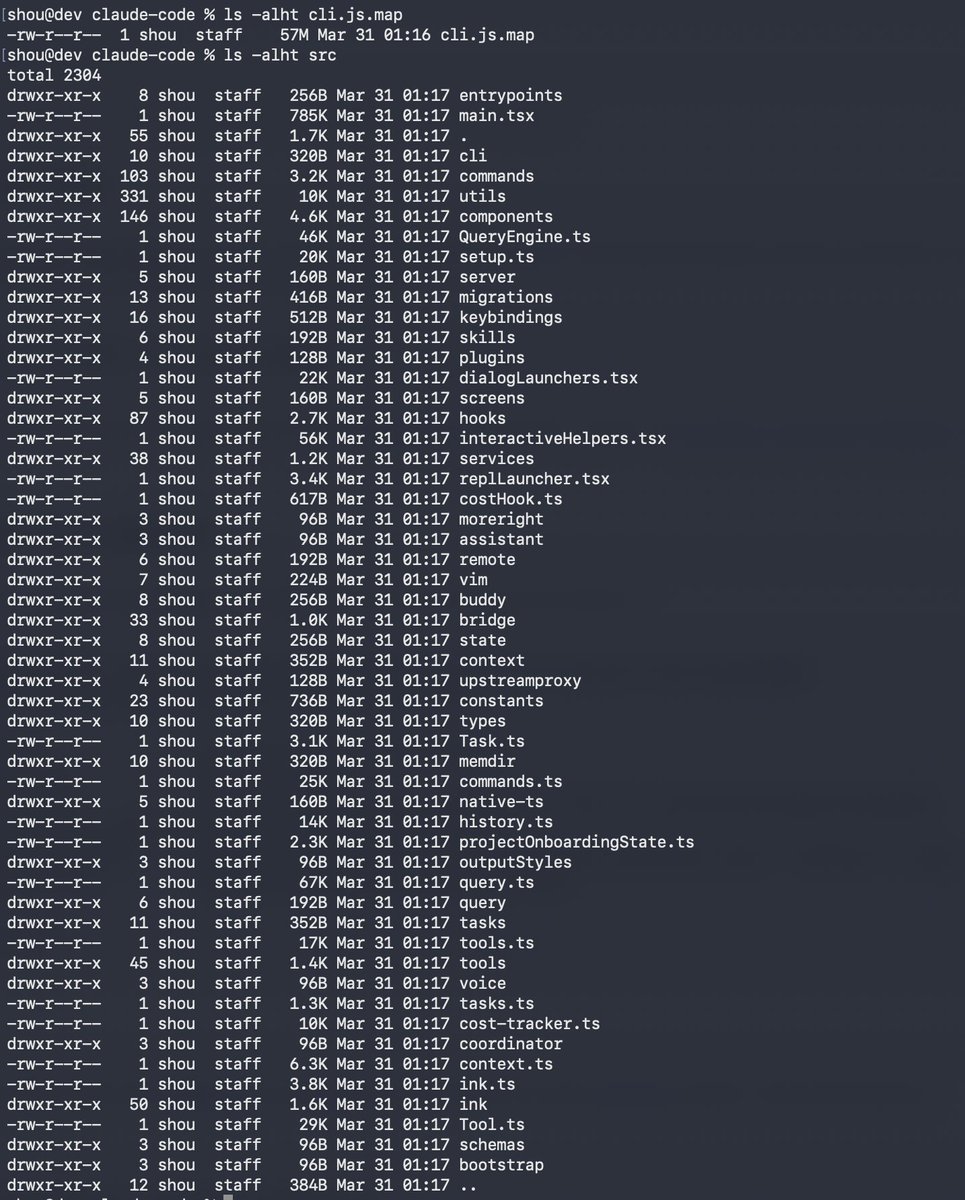

Claude Code source code has been leaked via a map file in their npm registry!

Code: …a8527898604c1bbb12468b1581d95e.r2.dev/src.zip


@Fried_rice @Anthropic What actually happened:

• Published via CI/CD at 10:15 UTC

• Discovered via secret scanning at 14:30

• Package unpublished + keys rotated within hours

Root cause, impact, and control gaps identified.


When you see role-play jailbreaks, context leakage, or 'helpful' assistants quietly escalating, do you mostly add more filters/guardrails, or do you rethink the interaction model itself?

PerfectDocRoot took the second path: governance baked in via stateless design, explicit user-provided continuity, visible assumptions, and built-in inspection (TurnSpecs + parity). No hidden memory, no silent inference.

Beta → perfectdocroot.com


A lot of AI tooling optimizes for speed or autonomy.

PerfectDocRoot started from a different question:

How do we make AI behavior inspectable and governable before we scale it?

That led to TurnSpecs, parity checks, and first-class inspection, built in early rather than later.

Public Beta: perfectdocroot.com


PerfectDocRoot is now live in Public Beta.

It’s a governance-first system for building structured, inspectable AI interactions.

Not an agent framework. Not a prompt library.

Built for developers who care about behavior, reproducibility, and trust.

perfectdocroot.com


This straightforward proof of work requirement, which is trivial for any legitimate organization to provide, is basic common sense and will have a very positive effect on reducing fraud!

@DOGE 🦾

DOGE HHS @DOGE_HHS

The HHS DOGE team has expanded the Defend the Spend system to require all ACF payments across America to be justified. Since March 2025, Defend the Spend has applied only to HHS discretionary payments, which did not include these ACF payments. Over the coming days, we will expand the system to support itemized receipts and photographic evidence, and make all data/receipts, where possible, available to the public.


@elonmusk When I started in April 2025, I was sure what I had landed on while working with ChatGPT would be the start of something special with OpenAI. I really don’t see a way forward with them today.


@nasaaacia Unfortunately, no sonic booms on this flight, but we will make it!!
